By Robin F. Goldsmith

Published: 12 Oct 2010

Serious misunderstandings and miscommunications can arise when people unknowingly use different meanings for the same seemingly simple term, such as the fundamental element of testing, the "test case." This article provides a tool to reveal the extent of such discrepancies and to reach consensus in your own organization.

What is a test case?

Students in my testing seminars frequently express frustration that different instructors use the same terms to mean different things, and different terms to mean the same thing. The problem is actually more complicated, as I discovered while reading a book by a colleague who is a prominent testing authority.

The author described a one-page form to fill out for each test case. The form included fields for the test case's ID, objectives, description, owner, various categorizations, and cross-references to relevant requirements and other test cases.

These are all valuable pieces of information, but a one-page form per test case seemed to me like a lot of writing. Certainly agile and exploratory folks wouldn't even consider such a document. I suspected neither would many other, less ideological, testers. It got me wondering, though, whether the author and I had different ideas of what a test case is.

Initial survey

I created a survey as a less confrontational way to address the test case definition question. Without explaining the reason for my questions, I sent the survey to about a dozen fellow testing authors and instructors, including the author who had prompted my question. The survey asked for each recipient's definition of "test case" and several other test terms.

Respondents answered similarly, basically that a test case consists of input(s) and/or conditions and expected results. Several quoted IEEE Standard 610: "A test case is a set of inputs, execution conditions and expected results for a particular test objective. A test case is the smallest entity that is always executed as a unit, from beginning to end."
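The shared definition can be written down as a small record type. The Python sketch below is only an illustration of the elements named in the quoted wording (inputs, execution conditions, expected results, and an objective). The class name `TestCase`, its field names, the completeness rule, and the sample values are assumptions made for this example, not part of the standard or the survey.

```python
from dataclasses import dataclass, field


@dataclass
class TestCase:
    """One test case in the sense of the quoted definition (names are hypothetical)."""
    objective: str
    inputs: dict = field(default_factory=dict)
    execution_conditions: list = field(default_factory=list)
    expected_results: list = field(default_factory=list)

    def is_complete(self) -> bool:
        # Assumed rule, following the respondents' wording: an objective,
        # inputs and/or conditions, and expected results must all be present.
        has_stimulus = bool(self.inputs or self.execution_conditions)
        return bool(self.objective and has_stimulus and self.expected_results)


# Hypothetical sample values, invented for illustration.
example = TestCase(
    objective="Enter an order for a customer",
    inputs={"state": "MA"},
    execution_conditions=["customer already exists"],
    expected_results=["order is accepted"],
)
```

Nothing in such a record says how large or small the objective should be, so two people can fill in the same fields while picturing very different amounts of testing.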

The author in question and I seemed to use essentially identical definitions but with apparently different meanings. Contrary to common presumption, agreeing on the words used to define something may not capture critical distinctions. Mistaking another's meaning can unwittingly lead to inappropriate decisions and actions, and the risk grows when people are unaware that they interpret seemingly clear statements differently.

Subsequent survey

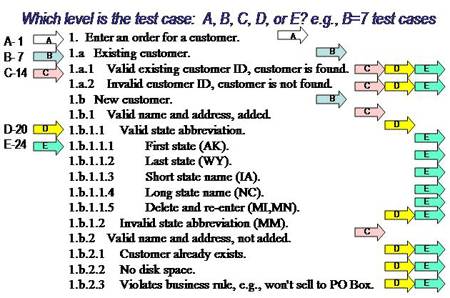

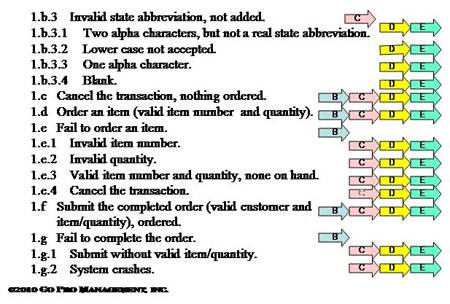

I changed the survey as shown below to include a concrete example.

The survey example above shows five different ways to interpret how many test cases there are in testing Enter an Order for a Customer. The survey asked recipients to examine the example and determine which one, and only one, of the five indicated possible interpretations of a test case fits what they think of as a test case.

That is, they could pick Level A, marked by a white arrow with an "A" in it, which means they consider the entire example to be one big test case for Enter an Order for a Customer.

At Level B, each blue arrow with a "B" in it represents a separate test case, so the example consists of seven test cases.

At Level C, each pink arrow with a "C" in it represents a separate test case, so the example consists of 14 test cases.

At Level D, each yellow arrow with a "D" in it represents a separate test case, so the example consists of 20 test cases.

At Level E, each teal arrow with an "E" in it represents a separate test case, so the example consists of 24 test cases.

I asked the survey participants to pick one level: A=1, B=7, C=14, D=20, or E=24 test cases in the example. I use the same exercise in several of my testing seminars, and people can also do the exercise on my website.
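The way one outline yields different test case counts can be sketched in a few lines of Python. The outline below is a deliberately tiny, hypothetical one invented for illustration. It is not the survey example, so its counts do not match the 1, 7, 14, 20, and 24 above. The names `Node` and `count_at_depth` are likewise assumptions.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Node:
    """One entry in a test outline; children are the next, finer level of detail."""
    name: str
    children: List["Node"] = field(default_factory=list)


def count_at_depth(node: Node, depth: int) -> int:
    """Count entries exactly `depth` levels below `node` (depth 0 is the node itself)."""
    if depth == 0:
        return 1
    return sum(count_at_depth(child, depth - 1) for child in node.children)


# Hypothetical outline, NOT the survey's actual example.
outline = Node("Enter an order for a customer", [
    Node("Identify the customer", [
        Node("Existing customer"),
        Node("New customer"),
    ]),
    Node("Add order items", [
        Node("Valid item"),
        Node("Unknown item"),
        Node("Quantity of zero"),
    ]),
])

# Read the same outline at three levels of granularity.
counts = {level: count_at_depth(outline, depth) for depth, level in enumerate("ABC")}
print(counts)  # {'A': 1, 'B': 2, 'C': 5}
```

The outline never changes. Only the depth at which someone decides "this is a test case" changes, and the count changes with it.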

I encourage you to pause at this point in the article, before reading how others have answered, figure out your single (A, B, C, D, or E) answer, and send it to me on my website.

Survey findings

Only a few of the authors/instructors provided the requested one-letter answers. Most of those who had previously given lengthy verbal definitions declined to give a one-letter answer to this concrete example. Several, including the book author, nonetheless waxed on about various philosophical testing topics unrelated to the example, such as how long it should take to run a test case.

One instructor declared that none of the entries was a test case because none had test input and expected result data values or test execution procedural instructions. (In deference to, but not agreement with, his point, I subsequently added the above data values for the state abbreviations. A test case specification describes inputs and/or conditions and expected results in words.)

The inability of so many supposed testing experts to give even a straight, simple, concrete answer deepened my appreciation of why students found testing training frustrating.

Broader survey results

I acknowledge I haven't been able to total actual A-B-C-D-E counts from the hundreds of testers who have taken the survey example. Most response counts get buried in the bustle of classes. I can say with certainty that each choice has received many votes and none reliably dominates. Each class exhibits variability among the choices, and patterns of responses differ from class to class.

Is the survey worthless? Just the opposite! Time and again, the survey shows convincingly that common definitions alone don't assure common interpretations. People use the term "test case" with unrecognized, dramatically different meanings, differing by a factor of at least 24 to 1.

Meaningful communication and estimation are impeded when one person thinks a test case will take an hour while another says it will take only five minutes. Variability occurs as much within an organization as between organizations. The example has no "right answer."
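A little arithmetic shows how quickly such estimates diverge. The sketch below uses the test case counts from the survey example and the hour and five-minute figures from the paragraph above. Which level each estimator has in mind is an assumption made purely for illustration, as are the names `LEVEL_COUNTS` and `total_minutes`.

```python
# Test case counts per interpretation level, as given in the survey example.
LEVEL_COUNTS = {"A": 1, "B": 7, "C": 14, "D": 20, "E": 24}


def total_minutes(level: str, minutes_per_case: float) -> float:
    """Total effort implied by one person's level and per-test-case estimate."""
    return LEVEL_COUNTS[level] * minutes_per_case


# Hypothetical pairings of level and per-case estimate.
coarse = total_minutes("A", 60)    # one big test case at an hour
fine = total_minutes("E", 5)       # 24 small test cases at five minutes each
mismatch = total_minutes("E", 60)  # fine-grained count, coarse per-case estimate

print(coarse, fine, mismatch)  # 60 120 1440
```

The last figure is the troublesome one: a plan that lists 24 fine-grained test cases, read by someone who pictures an hour per test case, implies twelve times the effort its author intended.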

Any of the choices will do, so long as everyone who works together uses the same interpretation. Use the survey within the testing group, first to measure current interpretations and then as a guide for establishing a single agreed-upon interpretation.
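Measuring a group's current interpretations takes only a simple tally. The following Python sketch assumes each person's answer has been collected as a single letter. The function names `tally` and `has_consensus` and the six sample answers are hypothetical, not data from the survey.

```python
from collections import Counter

LEVELS = ("A", "B", "C", "D", "E")


def tally(responses):
    """Count answers per level, keeping levels nobody chose at zero."""
    counts = Counter(r.strip().upper() for r in responses)
    unknown = set(counts) - set(LEVELS)
    if unknown:
        raise ValueError(f"unexpected answers: {sorted(unknown)}")
    return {level: counts.get(level, 0) for level in LEVELS}


def has_consensus(counts):
    """True only when every respondent picked the same level."""
    return sum(1 for n in counts.values() if n > 0) == 1


# Hypothetical answers from a six-person team, invented for illustration.
team = ["B", "c", "E", "B", "D", "A"]
result = tally(team)
print(result)                 # {'A': 1, 'B': 2, 'C': 1, 'D': 1, 'E': 1}
print(has_consensus(result))  # False
```

Running the tally before and after the group discusses the example gives a rough check on whether an agreed interpretation has actually taken hold.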

What is a requirement?

The need to choose among such test case interpretations often arises from the level of detail at which requirements are defined. Testers generally base test cases on the requirements as they are written. The problem is that requirements are very often defined only at the grossest level, Level A.

Without relevant subsidiary requirements detail, testers don't know how to test adequately that requirements have been met. All the tests below the Level A "Enter an Order for a Customer" actually represent a presumed elaboration of the requirement. However, testers often don't know what an unelaborated high-level requirement really means.

Furthermore, developers don't know enough to develop the right product/system from high-level requirements, and their tests are inadequate to catch the resulting defects.

Requirements need to be driven down to detail, first so developers can develop the proper products and second so testers can confirm they've done so. The survey example exercise can be used just as effectively to guide meaningful communication and practices regarding how many requirements the example represents.

Robin F. Goldsmith, JD is President of Needham, Mass., consultancy Go Pro Management, Inc. He works directly with and trains business and systems professionals in requirements, quality and testing, metrics, ROI and project and process management. A subject expert and reviewer for IIBA's BABOK, he is author of the Proactive Testing and REAL ROI methodologies and Discovering REAL Business Requirements for Software Project Success.
