Why hybrid cloud is becoming the default for AI

AI workloads are reshaping cloud strategies. Hybrid cloud is no longer transitional -- it's a structural necessity for AI, driven by latency, governance and cost constraints.

For more than a decade, enterprise cloud strategy has followed a clear direction: consolidate.

Applications moved from fragmented on-premises environments into centralized public cloud platforms. The goal was operational simplicity, scalability and cost efficiency. Hybrid architectures were tolerated but rarely embraced. They were seen as transitional states, necessary during migration, but ultimately something to move beyond. That assumption is beginning to break down.

AI systems are introducing constraints that do not align with centralized infrastructure models. In many cases, the question is no longer whether workloads can be moved to a single environment. It is whether they should be.

This article explores how hybrid cloud has emerged not as a compromise but as a structural requirement.

AI workloads don't behave like traditional applications

Traditional enterprise applications are largely deterministic and request-driven. A user initiates a request, the system processes it and a response is returned. Latency matters, but within defined tolerances. Data access patterns are predictable. Governance is enforced at clear boundaries.

AI systems behave differently. Modern AI workloads, especially those involving retrieval-augmented generation, agentic workflows and continuous inference, operate across multiple dimensions simultaneously:

- They depend on contextual data distributed across environments.

- They execute multi-step reasoning pipelines rather than single transactions.

- They operate continuously, not just per request.

- They introduce feedback loops where outputs influence future behavior.

These characteristics fundamentally change where and how execution needs to occur.

Latency becomes a correctness constraint

In traditional systems, latency is primarily a performance concern. In AI systems, latency increasingly becomes a correctness constraint, one that directly influences the validity of system outputs rather than just their timeliness.

Consider a system that retrieves contextual data before generating a response. If the retrieval step introduces delay, the system may operate on stale or incomplete context. In real-time environments (financial systems, operational decision platforms or customer interaction layers), this delay can change the outcome, not just the experience. Latency is no longer just about speed; it becomes part of the decision boundary.

This represents a shift in how latency must be understood in system design: not as an optimization variable, but as a factor that shapes the semantic accuracy of decisions themselves.

This creates a new architectural requirement. Execution must occur where latency constraints preserve semantic correctness, not just user experience. In practice, this often means distributing components across environments:

- Inference near users or edge locations.

- Data retrieval close to governed data sources.

- Orchestration across centralized control layers.

No single environment satisfies all latency constraints simultaneously. The structural implication is that execution placement must be determined by where correctness can be preserved under latency constraints, rather than by where compute is most readily available. In this sense, distribution is not an optimization -- it is a requirement.

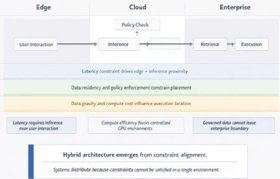

The diagram shows how latency affects not just system performance but the correctness of AI-driven decisions. In centralized architectures, retrieval and inference delays introduce stale or incomplete context, leading to degraded outcomes. In distributed architectures, placing retrieval, inference and interaction layers across edge, cloud and enterprise environments reduces latency and preserves semantic correctness.
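To make the idea of latency as a correctness constraint concrete, here is a minimal sketch. It assumes that correctness can be approximated by a freshness budget: context counts as valid only if its age at decision time stays within that budget. The class name, the function name and every number are hypothetical illustrations, not measurements from a real system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RetrievedContext:
    payload: str
    source_age_ms: float         # age of the data at the source when it was read
    retrieval_latency_ms: float  # time spent fetching it to the inference step


def is_context_valid(ctx: RetrievedContext, freshness_budget_ms: float) -> bool:
    """Return True only if the context is still fresh enough at decision time."""
    age_at_decision_ms = ctx.source_age_ms + ctx.retrieval_latency_ms
    return age_at_decision_ms <= freshness_budget_ms


# Hypothetical figures: the same data, fetched from a nearby store vs. a distant one.
FRESHNESS_BUDGET_MS = 100.0
nearby = RetrievedContext("quote=101.2", source_age_ms=20.0, retrieval_latency_ms=15.0)
distant = RetrievedContext("quote=101.2", source_age_ms=20.0, retrieval_latency_ms=180.0)

print(is_context_valid(nearby, FRESHNESS_BUDGET_MS))   # True
print(is_context_valid(distant, FRESHNESS_BUDGET_MS))  # False
```

The payload is identical in both cases. Only the retrieval path differs, and that alone decides whether the system is allowed to act on the data.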

Governance can no longer be centralized

Enterprise governance models have historically assumed that control improves with centralization. Bring data into one environment. Apply uniform policies. Enforce access controls consistently. AI disrupts this model.

AI systems frequently interact with:

- Regulated data.

- Proprietary enterprise knowledge.

- External data sources and APIs.

These interactions occur dynamically and often span multiple jurisdictions and compliance domains. Moving all data into a single environment is not always feasible or permissible. Instead, governance becomes distributed by necessity. Policies must be enforced where data resides, where decisions are made and where actions are executed.

This creates a different architectural model:

- Governance is no longer tied to location.

- Governance must be enforced across locations.

Hybrid architecture emerges not from fragmentation, but from policy constraints that cannot be centralized without violating regulatory or operational boundaries.
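One way to picture governance enforced across locations is a policy check that runs wherever data is about to be used, rather than once at a central boundary. The sketch below assumes a deliberately simple policy model in which each dataset lists the jurisdictions where it may be processed. The dataset names, jurisdiction labels and function name are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DataPolicy:
    dataset: str
    allowed_jurisdictions: frozenset  # where this data may be processed


# Hypothetical policies, evaluated wherever the data is about to be used.
POLICIES = {
    "customer_records": DataPolicy("customer_records", frozenset({"EU"})),
    "product_docs": DataPolicy("product_docs", frozenset({"EU", "US"})),
}


def permitted_locations(dataset: str, candidates: list) -> list:
    """Filter candidate execution locations down to those the policy allows."""
    policy = POLICIES[dataset]
    return [c for c in candidates if c in policy.allowed_jurisdictions]


print(permitted_locations("customer_records", ["US", "EU"]))  # ['EU']
print(permitted_locations("product_docs", ["US", "EU"]))      # ['US', 'EU']
```

Real policy engines are far richer than a set-membership test, but the shape is the same: the policy travels with the data, and the set of valid execution locations falls out of it.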

Cost gravity changes placement decisions

Cloud economics for traditional workloads are relatively predictable. Costs scale with usage (compute, storage and network traffic) and can be optimized through consolidation.

AI workloads introduce different cost dynamics:

- Inference costs scale with context length and model complexity.

- Data movement becomes a dominant cost driver.

- Continuous evaluation introduces persistent overhead.

- Training and fine-tuning create bursty, high-intensity compute demand.

These factors create what can be described as cost gravity: the tendency for workloads to remain close to where data and compute are most economically viable. For example:

- Moving large datasets across environments for inference can exceed compute savings.

- Centralized inference may increase latency and egress costs simultaneously.

- Continuous evaluation pipelines become cost-prohibitive in high-cost regions.

The result is not a simple optimization problem. It is a set of competing forces that pull different parts of the system into different environments.
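The first of the examples above, data movement exceeding compute savings, comes down to simple arithmetic. The sketch below assumes a flat per-gigabyte egress charge and a fixed compute price in each location. All figures and names are hypothetical and chosen only to show the comparison, not to reflect any provider's pricing.

```python
def net_cost_of_relocating(
    dataset_gb: float,
    egress_usd_per_gb: float,
    compute_usd_at_data: float,
    compute_usd_elsewhere: float,
) -> float:
    """Net cost of moving data to cheaper compute; positive means relocation loses money."""
    transfer_cost = dataset_gb * egress_usd_per_gb
    compute_savings = compute_usd_at_data - compute_usd_elsewhere
    return transfer_cost - compute_savings


# Hypothetical figures for one inference batch.
net = net_cost_of_relocating(
    dataset_gb=5000,
    egress_usd_per_gb=0.09,
    compute_usd_at_data=1200.0,
    compute_usd_elsewhere=900.0,
)
print(f"net cost of relocating: ${net:.2f}")  # positive here: transfer outweighs savings
```

With a much smaller dataset the sign flips and relocation pays off, which is why placement has to be evaluated per workload rather than settled once for the whole system.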

Hybrid is not a strategy, it is an outcome

Enterprise discussions often frame hybrid cloud as a strategic choice: Should we adopt hybrid? How much should remain on-premises versus public cloud?

AI changes the nature of that question. Hybrid is not something organizations choose in the abstract. It is the natural outcome of competing constraints (latency requirements, governance boundaries and cost dynamics) that cannot be resolved within a single environment. These forces do not align neatly. They pull different parts of the system in different directions, forcing distribution. What appears as architectural complexity is, in many cases, the system resolving those constraints.

This reframes hybrid cloud from an implementation choice into a constraint-driven outcome, emerging from the interaction of latency, governance, and cost forces that act simultaneously on different parts of the system.

Modern AI workflows span edge, cloud and enterprise environments, with latency, governance and cost constraints driving placement decisions. Rather than forming a linear pipeline, AI systems distribute execution across environments to satisfy competing constraints. Hybrid architecture emerges as a structural requirement rather than a design preference.

From location-centric thinking to constraint-centric architecture

Historically, infrastructure decisions were framed around location: where should an application run, and which cloud provider should be used?

AI systems require a different framing. The more relevant questions become: what constraints must this system satisfy, and where can those constraints be met?

This leads to a constraint-centric approach to architecture. Execution is placed where latency preserves correctness, not just performance. Data remains where governance requires it, rather than being centralized for convenience. Compute is deployed where cost efficiency is optimal, rather than where capacity is easiest to provision.

This shift, from location-centric to constraint-centric design, changes the unit of architectural decision-making. Systems are no longer placed based on infrastructure preference but decomposed and distributed based on where individual constraints can be satisfied.
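A constraint-centric placement decision can be sketched as a filter followed by a choice: discard the environments that violate a component's latency or governance constraints, then pick the cheapest of what remains. The environment names, jurisdictions, latencies and costs below are hypothetical, and the model assumes each component has a measured critical-path latency for every candidate environment.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Environment:
    name: str
    jurisdiction: str
    cost_per_hour: float


@dataclass
class Component:
    name: str
    max_latency_ms: float
    allowed_jurisdictions: frozenset
    latency_ms: dict  # hypothetical measured latency per environment name


def place(component: Component, environments: list) -> Optional[Environment]:
    """Cheapest environment that meets the latency and governance constraints."""
    feasible = [
        env for env in environments
        if component.latency_ms[env.name] <= component.max_latency_ms
        and env.jurisdiction in component.allowed_jurisdictions
    ]
    return min(feasible, key=lambda env: env.cost_per_hour, default=None)


ENVS = [
    Environment("edge", "EU", 4.0),
    Environment("public_cloud", "US", 1.0),
    Environment("on_prem", "EU", 2.5),
]
COMPONENTS = [
    Component("inference", 50.0, frozenset({"EU", "US"}),
              {"edge": 10.0, "public_cloud": 120.0, "on_prem": 80.0}),
    Component("retrieval", 30.0, frozenset({"EU"}),
              {"edge": 60.0, "public_cloud": 90.0, "on_prem": 5.0}),
    Component("orchestration", 500.0, frozenset({"EU", "US"}),
              {"edge": 10.0, "public_cloud": 120.0, "on_prem": 80.0}),
]

for comp in COMPONENTS:
    chosen = place(comp, ENVS)
    print(comp.name, "->", chosen.name if chosen else "no feasible environment")
```

Under these assumed numbers, the three components land in three different environments. No one chose hybrid; the constraints did.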

The result is not fragmentation, but alignment. Systems distribute not because they are poorly designed, but because they are correctly designed for the constraints they operate under.

As systems distribute, coordination becomes the central challenge. This is where control planes become essential, not as optional governance layers, but as the unifying mechanism that allows distributed systems to operate coherently. Control planes provide a way to enforce policy across environments, monitor behavior consistently, manage lifecycle transitions, and maintain visibility across distributed execution. They do not eliminate hybrid complexity. They make it operable.

Why this changes enterprise architecture decisions

Many CIOs still approach hybrid cloud as a temporary condition, something to simplify or eliminate over time. AI challenges that assumption. Hybrid is not a failure to consolidate. It is the result of systems operating under constraints that cannot be centralized without introducing tradeoffs.

Organizations that continue to treat hybrid as transitional often overcentralize workloads, introduce latency bottlenecks, increase data movement costs and weaken governance enforcement. The issue is not execution. It is the assumption that consolidation is always the goal.

Recognizing hybrid as a structural outcome changes how systems must be designed. Architectures must assume distribution as a baseline condition, treat data locality as a constraint rather than a preference, incorporate latency as part of correctness and enforce governance as a distributed capability. This requires designing systems that operate across environments by default -- not as exceptions.

Hybrid cloud, in this context, is not an artifact of incomplete transformation. It is the natural state of AI systems operating under real-world constraints. This shift, from hybrid as a transitional state to hybrid as a structural outcome, has implications not just for where systems run, but how they are designed. The organizations that succeed will not be the ones that eliminate hybrid complexity. They will be the ones that design for it.

Varun Raj is a cloud and AI engineering executive with nearly two decades of experience designing large-scale cloud computing and artificial intelligence platforms. His work focuses on governance architectures that enable generative AI systems to operate safely and reliably in production environments. Raj contributes expert perspectives on cloud platform architecture, AI governance and operational trust through industry publications, technical forums, and invited discussions.
