denisismagilov - Fotolia

What to include in a disaster recovery testing plan

Prepare software systems for disasters by putting together and testing a disaster recovery plan. Be ready for ransomware, fires, sabotage and a spilled cup of coffee on a laptop.

Software testers commonly assume that the application's infrastructure and hosting facility are normal, and assess the application only. That overconfidence can magnify the effects of a disaster.

Application performance and availability are subject to natural and man-made disasters. Disaster recovery is the practice of restoring software and hardware systems after ransomware attacks, a lost data center or other issues. Poor disaster recovery costs organizations money in lost revenue and potentially penalties. Even when the organization can recover a copy of its data, systems and applications might be offline for extended periods as team members rebuild and synchronize the environment.

A disaster recovery testing plan helps mitigate the risk. While there are various ways to test disaster recovery preparedness, few organizations even take that step. Google's "2019 Accelerate State of DevOps Report" found that only 40% of survey respondents practice disaster recovery testing on an annual basis or better. Only 12% use simulations that disrupt production systems -- and even fewer automate rolling production disruptions.

The report cites clear benefits to disaster recovery testing when implemented at the team and organization levels, most notably better service availability. But the cost, and risk of performance issues during tests, causes many organizations to balk at the idea.

"Companies may opt out of running disaster recovery testing in production because comprehensive disaster recovery testing requires interrupting data processing, communications, network, data center and other critical operations, which certainly affects system users," said Andrei Mikhailau, software testing director at ScienceSoft, a software and IT consultancy. Additionally, it's expensive to set up and take care of a close copy of the production environment for testing.

Why disaster recovery testing is important

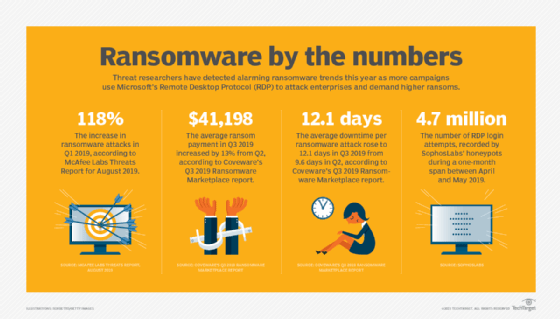

Enterprises must plan for how disruption and disaster will affect internal IT systems and their partner ecosystems. There's no shortage of ways a person, phenomenon or happenstance can take a system offline. Fire and earthquakes can close data centers. A major cloud provider can knock out services with a bad configuration change or failure to renew key credential certificates. Sabotage is another example, as attacks are on the rise.

"Ransomware is now the leading cause of disaster recovery events, said Sazzala Reddy, co-founder and CTO of Datrium, a disaster recovery tools provider. Ransomware criminals bank on companies' inability to recover rapidly, but a disaster recovery testing plan helps thwart them.

Disaster recovery demands testing. "Even if you have a perfect disaster recovery plan in place, you cannot assume that everything will work until you put it into practice," said Joseph George, vice president of global recovery services at Sungard AS, a disaster recovery services and hosting provider. Regular tests of disaster recovery preparedness help organizations identify and address the gaps in a plan and develop routines to call on when a real incident occurs.

How to test a disaster recovery plan

The State of DevOps Report assessed six types of disaster recovery testing, which fell into these groups:

- tabletop exercises;

- infrastructure failover;

- application failover;

- simulations that disrupt test systems;

- simulations that disrupt production systems; and

- automated and ongoing disruptions to production systems.

Application failover was the most common type of disaster recovery testing that surveyed organizations implemented (40%). Infrastructure failover (38%), tabletop exercises (28%) and simulations on test systems (23%) were other common measures. Even respondents classified as "elite performers" failed to reach 50% on any of these types of disaster recovery testing.

Few organizations do these more sophisticated kinds of disaster recovery testing because IT teams face many urgent priorities and tasks. "Dealing with a fire drill or a high-priority project of the day can often push important tasks such as disaster recovery planning and testing to a low-priority category, which results in those tasks being executed less frequently than desired," said Jamie Zajac, senior director of product management at Carbonite, a cloud backup service provider.

Many IT teams also place undue confidence in their cloud applications. Office 365, for example, can protect against data loss from a broken subscriber PC, but the service offers limited safeguards against malicious insiders, malware and accidental deletion.

Disaster recovery metrics

An organization can measure the effectiveness of its disaster recovery testing plan with key metrics. There are two particularly useful metrics to watch; measure them against thresholds in a business continuity plan.

Recovery time objective (RTO). RTO defines how long an organization should need to recover after a disaster. The shorter the RTO, the quicker the system is expected to recover. In some cases, exceeding the RTO is tied to negative consequences for the business.

Recovery point objective (RPO). This metric encompasses how much data loss an organization can incur over time. Measure RPO from the time of the first lost transaction. Data loss is never ideal, but organizations should define what is acceptable, then use it to inform policies for backups and data replication.

"It is important to test multiple scenarios, including partial disasters, to make sure you're able to recover properly," Sungard AS' George said.

Plot trends over time in RTO and RPO to provide an accurate measure of recovery readiness. George also recommends organizations find a way to keep disaster recovery plans updated and synchronized with production changes as part of the software development lifecycle.

Three more disaster recovery criteria to consider

To make it both effective and realistic, adapt the disaster recovery plan to account for these three areas.

Tier applications by value. Develop and test different levels of disaster recovery plans for various applications by priority. You can often split systems into multiple tiers and match disaster recovery requirements to those systems, Zajac said. For example, some critical systems might need high availability and failover with only minutes of downtime, whereas others can withstand hours of downtime with minimal business impact.

Minimize operator error. Make disaster recovery testing plans, procedures and tools -- if you use them -- easy to understand. The goal is to reduce the chance that a failover system fails. Script as many items as possible, as manual processes are inherently more error prone, particularly during the stress of a disaster. Keep the steps that do require a person short and easy to understand. "The more steps in a recovery runbook, the more chance for error," Zajac said.

Prepare the recovery team. Don't forget about the people. The organizational response itself gets tested in simulations, said Vaibhav Kamra, vice president of engineering at Kasten, a data management platform for Kubernetes. Kamra often hears about teams that stop at the review level and assume the plan will work -- without actually observing it in action. Regular tests mean that new team members get familiar with plans before an actual event occurs.