The need for application-aware storage

Server virtualization leads to unpredictable workloads. Application-aware storage could be the answer.

The storage industry is well known for hype and marketecture. We have software-defined storage; public, private and hybrid cloud storage; flash and hybrid flash; solid-state drives; tiering within the array; cloud tiering; and 3D scale, scale up and scale out to name just a few. Some are legitimate descriptions of a specific architecture, but in many cases, the words become meaningless because every vendor has different definitions for the same terms. All of these terms carry some sort of promise regarding performance, economics or agility. Those are good things to focus on, but it's time to address the core storage requirement in virtualized environments and deliver application-aware storage.

The driving factor is pretty clear. From a storage standpoint, virtualization has changed the environment from one that supports fixed and predictable workloads to one that supports unpredictable and ever-changing workloads.

In the old days -- say, five years ago -- we had predictable performance. And that's what the vast majority of storage systems in use today were invented to handle. These systems weren't built to be "adaptive" to the changing requirements that server virtualization introduces; they didn't have to be. We had an application (or a series of applications) that ran on a physical server that didn't move and was dedicated to that app. We tested the workload and knew the performance requirements -- the I/O load and response times. So storage vendors supplied an array (or carved out a portion of an array) with a set number of spinning disk drives, controllers and data paths and everything ran like clockwork. That's not to say there were never performance issues, but because all the connections were physical and the workloads were known, troubleshooting was fairly straightforward.

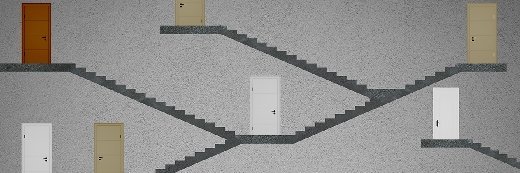

In a virtual server environment, multiple applications run on a single physical server and can be spun up quickly. The I/Os from different applications get mixed together, and the storage can't tell which application generated a given I/O. This is known as the I/O blender effect, and it negates much of the tuning storage vendors have done to optimize application performance. It creates storage performance problems such as elongated response times and application timeouts. It also results in a troubleshooting and performance tuning nightmare as administrators navigate the maze of which virtual machines (VMs) live on which LUNs (and which ports, Fibre Channel switch zones and so on).
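To make the I/O blender effect concrete, here is a minimal Python sketch. It assumes three hypothetical VMs that each issue purely sequential reads, and it models the hypervisor as a simple round-robin interleave. Real hypervisors schedule I/O in more complicated ways, and all the names here (`vm_stream`, `blend`, `sequential_fraction`) are invented for illustration.

```python
from itertools import zip_longest


def vm_stream(vm, start_block, count):
    """Sequential reads from one VM: consecutive block addresses."""
    return [(vm, start_block + i) for i in range(count)]


def blend(streams):
    """Round-robin interleave, a stand-in for a hypervisor multiplexing VM I/O."""
    mixed = []
    for group in zip_longest(*streams):
        mixed.extend(req for req in group if req is not None)
    return mixed


def sequential_fraction(requests):
    """Share of requests whose block directly follows the previous request's block."""
    if len(requests) < 2:
        return 1.0
    pairs = zip(requests, requests[1:])
    hits = sum(1 for (_, prev), (_, cur) in pairs if cur == prev + 1)
    return hits / (len(requests) - 1)


# Three hypothetical VMs, each reading its own region of the array in order.
streams = [
    vm_stream("vm-a", 0, 4),
    vm_stream("vm-b", 1000, 4),
    vm_stream("vm-c", 5000, 4),
]
blended = blend(streams)

print("one VM alone:", sequential_fraction(streams[0]))
print("as seen by the array:", sequential_fraction(blended))
```

Each stream is perfectly sequential on its own, but the interleaved stream the array receives contains no sequential runs at all, which is why tuning built around predictable access patterns stops helping.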

Unfortunately, IT departments often respond by allocating more storage and bandwidth to the virtual server environment. This can't be the answer. After all, server virtualization revolutionized server-side economics. It gives us better utilization, faster application provisioning, instant test and dev environments, and vastly lower Capex and Opex. Stories abound about companies undergoing server consolidation exercises and eliminating hundreds of physical machines. But what's the point if all those savings need to be invested in the storage side of the equation to level the playing field?

In ESG's last round of storage research, we found that more than one-third of IT managers responsible for the storage environment expect server virtualization to impact data storage over the next 12 to 18 months. Also, 43% of organizations cited the capital cost of new storage -- whether incremental capacity or net-new systems -- as a significant challenge related to server virtualization support. These certainly aren't the only storage challenges; other top challenges include virtual server storage capacity planning (36%) and limited I/O bandwidth (29%). It's also worth noting that only 5% of respondents reported not having encountered any storage-related challenges stemming from the support of server virtualization implementations.

Storage vendors aren't standing still. We're starting to see some quality of service (QoS) features emerge that allow storage administrators to tune performance by changing multiple parameters on the array in the hope of finding a combination of settings that will solve a performance issue. Application-specific agents or VM settings might be another option to enable better performance.

But that still doesn't get to the core of the issue: the storage has no direct awareness of the VMs causing the performance issues. Traditional storage takes a bottom-up approach, starting with disks, which are wrapped into LUNs, which are wrapped into volumes and then assigned to ports and data paths. And that's how it's managed: starting at the disk and mapping up to the server before getting assigned to the application. Performance tuning on a traditional storage array in this world often means creating LUNs with different performance characteristics and assigning those to ports, paths, servers and ultimately application workloads. Once that tuning is done, it's usually a fixed solution -- if a new workload is added, you need to start from scratch. That approach doesn't suit real-world IT environments, especially at scale.

Users need application-aware storage solutions that can adapt to changing workloads, guarantee performance to specific workloads even when the surrounding environment changes, and are aware of the applications and the VMs running them. They also need storage that can handle bursty, unpredictable I/O loads, understand the importance of each workload and ensure important workloads get sufficient resources. Finally, users need a storage environment that mirrors the virtual server environment, closing the technology gap. To do this, storage needs a level of workload awareness to provide QoS at the VM/application level. That's how you take the extra hardware out of the process, and stop the cycle of spending server virtualization savings on storage.
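As a rough illustration of what QoS at the VM level could look like, the Python sketch below divides a fixed pool of IOPS among workloads. It is not any vendor's implementation. The `Workload` fields, the `allocate` function and all the numbers are hypothetical, and the policy (honor each workload's floor first, then share spare capacity by weight) is just one simple way to express "important workloads get sufficient resources."

```python
from dataclasses import dataclass


@dataclass
class Workload:
    name: str
    demand: int      # IOPS the VM currently wants
    guaranteed: int  # IOPS floor promised to this VM
    weight: int      # relative importance when sharing spare capacity


def allocate(workloads, capacity):
    """Grant each workload its floor, then split spare IOPS by weight."""
    # Step 1: every workload gets its guaranteed floor (or less, if it wants less).
    grant = {w.name: min(w.demand, w.guaranteed) for w in workloads}
    spare = capacity - sum(grant.values())
    if spare < 0:
        raise ValueError("guarantees exceed array capacity")

    # Step 2: hand out spare capacity in weighted rounds until it is used up
    # or every workload's demand is met. Integer division can leave a few
    # IOPS unassigned, which is acceptable for a sketch.
    hungry = [w for w in workloads if grant[w.name] < w.demand]
    while spare > 0 and hungry:
        total_weight = sum(w.weight for w in hungry)
        given = 0
        for w in hungry:
            share = spare * w.weight // total_weight
            extra = min(share, w.demand - grant[w.name])
            grant[w.name] += extra
            given += extra
        if given == 0:
            break
        spare -= given
        hungry = [w for w in hungry if grant[w.name] < w.demand]
    return grant


# Hypothetical workloads competing for a 10,000 IOPS array.
workloads = [
    Workload("db", demand=6000, guaranteed=4000, weight=3),
    Workload("web", demand=3000, guaranteed=1000, weight=2),
    Workload("backup", demand=8000, guaranteed=500, weight=1),
]
grants = allocate(workloads, capacity=10000)
for name, iops in grants.items():
    print(name, iops)
```

In this example the high-priority database gets everything it asks for, while the bursty backup job is held to its floor plus a small share of what is left. The decision is made per workload, with no need to re-carve LUNs when demand shifts.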

About the author:

Terri McClure is a senior storage analyst at Enterprise Strategy Group, Milford, Mass.