What is a test case?

A test case is a set of actions performed on a system to determine if it satisfies software requirements and functions correctly. The purpose of a test case is to determine if different features within a system are performing as expected and to confirm that the system satisfies all related standards, guidelines and customer requirements. The process of writing a test case can also help reveal errors or defects within the system.

Test cases are typically written by members of the quality assurance (QA) team or the testing team and used as step-by-step instructions for each system test. The testing process begins once the development team has finished a system feature or set of features. A sequence or collection of test cases is called a test suite.
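
As a rough illustration of how individual test cases group into a test suite, the sketch below uses Python's standard `unittest` module. The `add` function and both test classes are hypothetical stand-ins for a finished feature and its tests.

```python
import unittest


def add(a, b):
    """Hypothetical finished feature handed over for testing."""
    return a + b


class AdditionTests(unittest.TestCase):
    def test_positive_numbers(self):
        self.assertEqual(add(2, 3), 5)


class AdditionEdgeTests(unittest.TestCase):
    def test_zero(self):
        self.assertEqual(add(0, 0), 0)


# A test suite is a collection of test cases that run together.
loader = unittest.defaultTestLoader
suite = unittest.TestSuite([
    loader.loadTestsFromTestCase(AdditionTests),
    loader.loadTestsFromTestCase(AdditionEdgeTests),
])

result = unittest.TestResult()
suite.run(result)
print(result.testsRun, result.wasSuccessful())
```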

A test case document includes test steps, test data, preconditions and the postconditions that verify requirements.

Why test cases are important

Test cases define what must be done to test a system, including the steps executed in the system, the input data values entered into the system and the test results expected throughout test case execution. Using test cases allows developers and testers to discover errors that occurred during development or defects missed during ad hoc tests.

The benefits of an effective test case include the following:

- Good test coverage.

- Reduced maintenance and software support costs.

- Reusable test cases.

- Confirmation that the software satisfies end-user requirements.

- Improved quality of software and user experience.

- High-quality products and more satisfied customers.

- Increased company profits as a result of more satisfied customers.

Overall, writing and using test cases can contribute to business optimization. Clients are more satisfied, customer retention increases, the costs of customer service and fixing products decrease, and more reliable products are produced, which improves the company's reputation and brand image.

Example of test case format

Test cases must be designed to fully reflect the software application features and functionality under evaluation. QA engineers should write test cases so only one thing is tested at a time. The language used to write a test case should be simple and easy to understand, active instead of passive, and exact and consistent when naming elements.

The components of a test case include:

- Test name. A title that describes the functionality or feature that the test is verifying.

- Test case ID. Typically a numeric or alphanumeric identifier that QA engineers and testers use to group test cases into test suites.

- Objective. Also called the test case description, this important component describes what the test intends to verify in one to two sentences.

- References. Links to user stories, design specifications or requirements that the test is expected to verify.

- Prerequisites. Any conditions that are necessary for the tester or QA engineer to perform the test.

- Test setup. This component identifies what the test case needs to run correctly, such as app version, operating system, date and time requirements and security specifications.

- Test steps. Detailed descriptions of the sequential actions that must be taken to complete the test.

- Expected results. An outline of how the system should respond to each test step.
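
The components above could be captured in a simple data structure. The following is a minimal sketch, not a standard format: the class names, field names and the sample login test case are all assumptions made for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Step:
    action: str           # what the tester does
    expected_result: str  # how the system should respond


@dataclass
class ManualTestCase:
    test_id: str
    name: str
    objective: str
    references: list[str] = field(default_factory=list)
    prerequisites: list[str] = field(default_factory=list)
    setup: dict[str, str] = field(default_factory=dict)
    steps: list[Step] = field(default_factory=list)


# Hypothetical example values; none of these come from a real project.
login_case = ManualTestCase(
    test_id="TC-001",
    name="Login with valid credentials",
    objective="Verify that a registered user can log in with a valid username and password.",
    references=["REQ-12"],
    prerequisites=["A registered user account exists"],
    setup={"app_version": "1.0", "operating_system": "any"},
    steps=[
        Step("Open the login page", "The login form is displayed"),
        Step("Enter a valid username and password", "Both fields accept the input"),
        Step("Click the login button", "The user's dashboard is displayed"),
    ],
)

for number, step in enumerate(login_case.steps, start=1):
    print(f"{number}. {step.action} -> {step.expected_result}")
```

Pairing each step with its expected result keeps the two in sync when the test case is edited later.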

Before writing a test case, QA engineers and testing team members should first determine the scope and purpose of the test. This includes understanding the system features and user requirements as well as identifying the testable requirements.

Next, testers should define how the software testing activities are performed. This process starts by identifying effective test case scenarios -- or functionality requiring testing. To identify test case scenarios, testers must understand the functional requirements of the system.

Once the test case scenarios are identified, the nonfunctional requirements must be defined. Nonfunctional requirements include operating systems, security features and hardware requirements. Prerequisites for the test should also be pointed out.

The next step is to define the test case framework. Test cases typically analyze compatibility, functionality, fault tolerance, user interfaces (UIs) and the performance of different elements.

Once these steps are completed, the tester begins writing the test case.

Test case writing best practices

An effective test case design has the following qualities:

- Accurate, or specific about the purpose.

- Economical, meaning no unnecessary steps or words are used.

- Traceable.

- Repeatable, meaning the document can be used to perform the test numerous times.

- Reusable, meaning the document can be reused to successfully perform the test again in the future.

To achieve these goals, QA and testing engineers use the following best practices:

- Prioritize which test cases to write based on project timelines and the risk factors of the system or application.

- Create unique test cases and avoid irrelevant or duplicate test cases.

- Confirm that the test suite checks all specified requirements mentioned in the specification document.

- Write test cases that are transparent and straightforward. The title of each test case should be short.

- Break test case steps into the smallest possible segments to avoid confusion when executing.

- Keep written test cases easy to understand and to modify when necessary.

- Keep the end user in mind whenever a test case is created.

- Do not assume the features and functionality of the system.

- Each test case should be easily identifiable.

- Descriptions should be clear and concise.
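
One of the practices above, confirming that the test suite checks every specified requirement, lends itself to a small automated check. The sketch below assumes each test case records the requirement IDs it verifies; the IDs and the `uncovered_requirements` helper are hypothetical.

```python
# Hypothetical requirement IDs taken from a specification document.
requirements = {"REQ-1", "REQ-2", "REQ-3"}

# Hypothetical mapping of test case IDs to the requirements they verify.
test_case_references = {
    "TC-001": {"REQ-1"},
    "TC-002": {"REQ-1", "REQ-2"},
}


def uncovered_requirements(requirements, test_case_references):
    """Return the requirements that no test case references."""
    covered = set().union(*test_case_references.values())
    return requirements - covered


print(sorted(uncovered_requirements(requirements, test_case_references)))
```

Here `REQ-3` is reported because no test case lists it, which signals a gap in the suite.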

Types of test cases

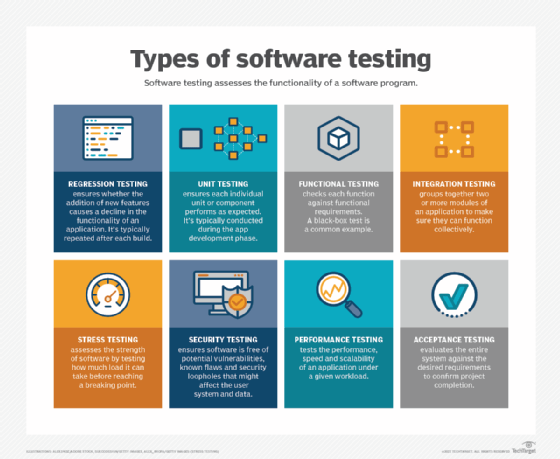

The various test case types include the following:

Functionality test cases. This is a type of black box testing that determines whether the functions the software is expected to perform succeed or fail, including whether an app's interface works with the rest of the system and its users. Functionality test cases are based on system specifications or user stories, allowing tests to be performed without accessing the internal structures of the software. These test cases are usually written by the QA team.
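
A minimal sketch of a black box functional check follows. The `login` function and its credentials are hypothetical; the point is that each case lists only inputs and the expected outcome from an assumed specification, without looking inside the implementation.

```python
def login(username, password):
    """Hypothetical function under test; a real system would check a user store."""
    registered = {"alice": "correct-horse"}
    return username in registered and registered[username] == password


# Each row is one functional check: inputs plus the expected outcome.
functional_cases = [
    ("alice", "correct-horse", True),    # valid credentials
    ("alice", "wrong-password", False),  # invalid password
    ("bob", "correct-horse", False),     # unknown user
]

results = [login(user, pw) == expected for user, pw, expected in functional_cases]
print("pass" if all(results) else "fail")
```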

Performance test cases. These test cases can help validate response times and confirm the overall effectiveness of the system. Performance test cases include a very strict set of success criteria and are used to understand how the system will operate in the real world. Performance test cases are typically written by the testing team, but automated testing is often used because one system can demand hundreds of thousands of performance tests.
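
As an illustrative sketch only, a performance test case can time an operation and compare it against a success criterion. The `build_report` function and the one-second limit are assumptions; real criteria come from the system's requirements, and dedicated load-testing tools are normally used at scale.

```python
import time


def build_report(n):
    """Hypothetical operation whose response time matters."""
    return sorted(range(n, 0, -1))


MAX_SECONDS = 1.0  # assumed success criterion, chosen only for this example

start = time.perf_counter()
build_report(100_000)
elapsed = time.perf_counter() - start

passed = elapsed <= MAX_SECONDS
print(f"elapsed={elapsed:.4f}s passed={passed}")
```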

Unit test cases. Unit testing involves analyzing individual units or components of the software to confirm each unit performs as expected. A unit is the smallest testable element of software. It often takes a few inputs to produce a single output.
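
The sketch below shows what a unit test case might look like with Python's standard `unittest` module. The `apply_discount` function is a hypothetical unit that takes a few inputs and produces a single output.

```python
import unittest


def apply_discount(price, percent):
    """Hypothetical unit: a few inputs in, a single output out."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return round(price * (1 - percent / 100), 2)


class ApplyDiscountTests(unittest.TestCase):
    def test_applies_percentage(self):
        self.assertEqual(apply_discount(200.0, 25), 150.0)

    def test_rejects_invalid_percentage(self):
        with self.assertRaises(ValueError):
            apply_discount(200.0, 150)


suite = unittest.defaultTestLoader.loadTestsFromTestCase(ApplyDiscountTests)
result = unittest.TestResult()
suite.run(result)
print(result.testsRun, result.wasSuccessful())
```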

User interface test cases. This type of test case verifies that specific elements of the graphical user interface (GUI) look and perform as expected. UI test cases can reveal errors in elements that the user interacts with, such as grammar and spelling errors, broken links and cosmetic inconsistencies. UI tests often require cross-browser functionality to ensure an app performs consistently across different browsers. These test cases are usually written by the testing team with some help from the design team.

Security test cases. These test cases are used to confirm that the system restricts actions and permissions when necessary to protect data. Security test cases often focus on authentication and encryption and frequently use security-based tests, such as penetration testing. If the organization has a security team, that team is responsible for writing these test cases.
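
A simplified sketch of a permission check is shown below. The roles, actions and the `is_allowed` helper are hypothetical, and this covers only the idea of restricting actions; it is not a substitute for penetration testing.

```python
# Hypothetical role-to-permission table.
PERMISSIONS = {
    "admin": {"read", "write", "delete"},
    "viewer": {"read"},
}


def is_allowed(role, action):
    """Hypothetical access check: unknown roles get no permissions."""
    return action in PERMISSIONS.get(role, set())


security_cases = [
    ("admin", "delete", True),
    ("viewer", "delete", False),  # restricted action must be denied
    ("guest", "read", False),     # unknown role must be denied
]

failures = [
    (role, action)
    for role, action, expected in security_cases
    if is_allowed(role, action) != expected
]
print(failures)
```

An empty `failures` list means every restricted action was denied as expected.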

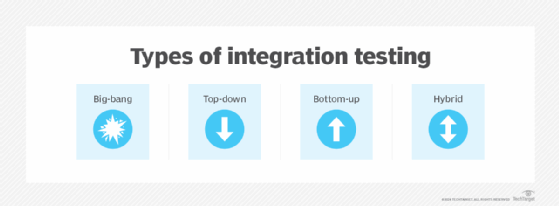

Integration test cases. This test case is written to determine how the different software modules interact with each other. The main purpose of this test case is to confirm that the interfaces between different modules work correctly. Integration test cases are typically written by the testing team, with input provided by the software development team.
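
To make the idea concrete, the sketch below exercises the interface between two hypothetical modules, `Inventory` and `OrderService`, instead of testing either one in isolation.

```python
class Inventory:
    """Hypothetical module that tracks stock levels."""

    def __init__(self, stock):
        self.stock = dict(stock)

    def reserve(self, item, quantity):
        if self.stock.get(item, 0) < quantity:
            return False
        self.stock[item] -= quantity
        return True


class OrderService:
    """Hypothetical module that depends on Inventory through reserve()."""

    def __init__(self, inventory):
        self.inventory = inventory

    def place_order(self, item, quantity):
        return "confirmed" if self.inventory.reserve(item, quantity) else "rejected"


inventory = Inventory({"widget": 5})
orders = OrderService(inventory)

first = orders.place_order("widget", 3)   # enough stock is available
second = orders.place_order("widget", 3)  # only 2 remain, so this should fail
print(first, second, inventory.stock["widget"])
```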

Database test cases. This type of test case aims to examine what is happening internally, helping testers understand where the data is going in the system. Testing teams frequently use Structured Query Language queries to write database test cases.
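
The following sketch uses Python's built-in `sqlite3` module with an in-memory database to show how SQL queries can verify where data ends up. The `users` table and the `register_user` function are hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, email TEXT NOT NULL UNIQUE)")


def register_user(conn, email):
    """Hypothetical application code that writes to the database."""
    conn.execute("INSERT INTO users (email) VALUES (?)", (email,))
    conn.commit()


register_user(conn, "alice@example.com")

# Check 1: the row landed in the expected table.
row_count = conn.execute(
    "SELECT COUNT(*) FROM users WHERE email = ?", ("alice@example.com",)
).fetchone()[0]

# Check 2: the UNIQUE constraint rejects a duplicate email.
try:
    register_user(conn, "alice@example.com")
    duplicate_rejected = False
except sqlite3.IntegrityError:
    duplicate_rejected = True

print(row_count, duplicate_rejected)
```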

Usability test cases. A usability test case is used to reveal how users naturally approach and use an application. Instead of providing step-by-step details, a usability test case will provide the tester with a high-level scenario or task to complete. These test cases are typically written by the design and testing teams and should be performed before user acceptance testing (UAT).

User acceptance test cases. These test cases focus on analyzing the user acceptance testing environment. They are broad enough to cover the entire system and their purpose is to verify if the application is acceptable to the user. User acceptance test cases are prepared by the testing team or product manager and then used by the end user or client. These tests are often the last step before the system goes to production.

Regression testing. This type of testing confirms that recent code or program changes have not adversely affected existing system features. Regression testing involves selecting all or some of the executed test cases and running them again to confirm the software's existing functionalities still perform appropriately.
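
A minimal sketch of this rerun step follows. It assumes previously executed test cases are stored as input and expected-output pairs; the `slugify` function, the test case IDs and the `run_regression` helper are hypothetical.

```python
def slugify(title):
    """Hypothetical function that was recently changed to strip whitespace."""
    return "-".join(title.strip().lower().split())


# Previously executed test cases, kept as (input, expected output) pairs.
regression_cases = {
    "TC-010": ("Hello World", "hello-world"),
    "TC-011": ("Test Case", "test-case"),
    "TC-012": ("single", "single"),
}


def run_regression(selected_ids):
    """Rerun the selected test cases and return the IDs that now fail."""
    return [
        case_id
        for case_id in selected_ids
        if slugify(regression_cases[case_id][0]) != regression_cases[case_id][1]
    ]


all_failures = run_regression(regression_cases)             # rerun everything
subset_failures = run_regression(["TC-010", "TC-012"])      # rerun a subset
print(all_failures, subset_failures)
```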

Test script vs. test case vs. test scenario

While they are all related to software testing, test scripts and test scenarios possess several important differences when compared to test cases.

First, a test scenario refers to any testable functionality of the software. Test scenarios are important for several reasons: they verify the complete functionality of the application; they ensure the business processes and flows are aligned with the functional requirements; they determine the most critical end-to-end transactions, as well as the real use of the app; and they make test cases easy to create. Furthermore, test scenarios can be approved by stakeholders -- such as developers, customers and business analysts -- to help ensure the application in question is tested fully.

The key differences between a test case and a test scenario include the following:

- A test case provides a set of actions performed to verify that specific features are performing correctly. A test scenario is any testable feature.

- A test case is beneficial in exhaustive testing -- a software testing approach that involves testing every possible data combination. A test scenario is more agile and focuses on end-to-end testing of the software.

- A test case looks at what to test and how to test it while a test scenario only identifies what to test.

- A test case requires more resources and time for test execution than a test scenario.

- A test case includes information such as test steps, expected results and data while a test scenario only includes the functionality to be tested.
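
The exhaustive testing mentioned in the list above can be sketched with `itertools.product`, which enumerates every combination of inputs. The `shipping_fee` rule and the property being checked are assumptions made for this example.

```python
from itertools import product


def shipping_fee(is_member, is_express, is_international):
    """Hypothetical pricing rule with three boolean inputs."""
    fee = 0 if is_member else 5
    if is_express:
        fee += 10
    if is_international:
        fee += 20
    return fee


# Exhaustive testing: every combination of the three inputs (2**3 = 8 cases).
combinations = list(product([False, True], repeat=3))
fees = {combo: shipping_fee(*combo) for combo in combinations}

# Assumed property: a member never pays more than a non-member
# for the same shipping options.
violations = [
    combo
    for combo in combinations
    if shipping_fee(True, combo[1], combo[2]) > shipping_fee(False, combo[1], combo[2])
]
print(len(combinations), violations)
```

With only three boolean inputs, eight cases cover everything. The number of combinations grows quickly as inputs are added, which is one reason exhaustive testing demands more time and resources.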

A test script is a line-by-line description of all the actions and data needed to properly perform a test. The script includes detailed explanations of the steps needed to achieve a specific goal within the program, as well as descriptions of the results that are expected for each step.

A test case, on the other hand, describes what is to be tested along with its steps and expected results, but it does not spell out every action and data value line by line. Therefore, test scripts are more detailed testing documents than test cases, but test cases are more detailed than test scenarios.

Challenges of test cases

While test cases offer benefits in terms of functional software, user acceptance and ultimately a company's bottom line, testing teams often experience challenges. Common challenges of test cases are as follows:

- Lack of clear requirements. Prior to testing, requirements must be well documented; otherwise, a team might execute tests that are incomplete and unable to detect bugs or defects.

- Lack of communication. When there is insufficient communication between stakeholders and testing teams, there is a risk that test results will not adhere to stakeholders' expectations.

- Time constraints. Testing teams must operate within given time periods, as software projects often have set release schedules.

- Test environment difficulties. Maintaining the hardware and software resources that a testing environment needs costs an organization money and effort. Testing teams must perform predictive maintenance to prevent issues before they arise.

- Insufficient post-testing documentation. Test results must be sufficiently documented and reviewed by multiple team members. Documentation will serve as tangible proof that test cases meet expectations. Regularly reviewing and updating documentation is yet another time-consuming task for test teams.
