Evaluate proprietary vs. open source testing tools

Cost, complexity and support levels are key items when teams consider proprietary or open source functional testing tools for their development environments.

QA professionals have a lot of options when choosing an automated testing tool. To start, testers can consider two broad categories of tools: proprietary and open source.

The best category of tool for a given IT organization depends on several factors. For example, while proprietary tools are more expensive than open source tools, they often don't require as much heavy lifting to manage and maintain. What's more, proprietary tools come with vendor support.

In addition to weighing the benefits and drawbacks of proprietary and open source tools, testers should map out the exact features and capabilities they need, based on application requirements.

Tradeoffs between proprietary and open source

Cost is a major consideration when deciding between a proprietary vs. open source testing tool. While the latter is typically free, there are tradeoffs to consider.

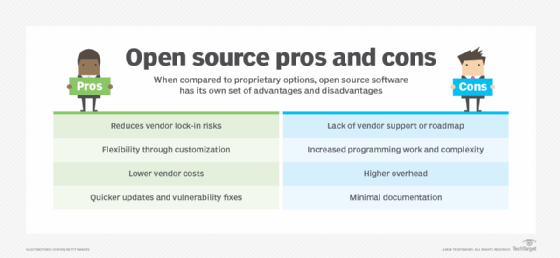

Whereas proprietary tools come with extensive vendor support, open source tooling offers more flexibility and customization to optimize the tool for a specific environment. For example, a team may choose to fork an open source tool's public repository and adapt it to its particular needs. Open source tooling can also help IT organizations avoid the vendor lock-in of proprietary tools.

Open source communities can provide varying levels of support and troubleshooting. However, a community might not be as accessible or thorough as support from a vendor.

For example, LoadView, a proprietary testing tool, can be costly. The starter package costs $159 per month with an annual contract, or $199 per month on a month-to-month plan, and it covers only a few tests. If testers need to run five or 10 tests, the team is charged an extra $199 per test once it exceeds the plan's 1,800 load injector minutes. These costs can add up, but the level of support and broad feature set might justify them.
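To put those figures in perspective, the Python sketch below estimates a year of LoadView's starter package on each billing plan. It uses only the prices quoted above. The `extra_tests` parameter is a hypothetical simplification of the overage charge (a flat $199 for each test beyond the plan's allowance), so check current vendor pricing before relying on the numbers.

```python
# Prices quoted in this article (USD); vendor pricing may have changed since.
ANNUAL_CONTRACT_MONTHLY = 159
MONTH_TO_MONTH_MONTHLY = 199
EXTRA_TEST_FEE = 199  # charged per test once the plan's allowance is used up


def yearly_cost(annual_contract: bool, extra_tests: int = 0) -> int:
    """Estimate 12 months of the starter package plus overage charges.

    Simplifying assumption: every test beyond the plan's allowance is
    billed at a flat EXTRA_TEST_FEE, regardless of how long it runs.
    """
    if extra_tests < 0:
        raise ValueError("extra_tests must be non-negative")
    monthly = ANNUAL_CONTRACT_MONTHLY if annual_contract else MONTH_TO_MONTH_MONTHLY
    return 12 * monthly + extra_tests * EXTRA_TEST_FEE


if __name__ == "__main__":
    print(yearly_cost(annual_contract=True))                 # 1908
    print(yearly_cost(annual_contract=False))                # 2388
    print(yearly_cost(annual_contract=True, extra_tests=5))  # 2903
```

Under these assumptions, five extra tests add $995 to the $1,908 annual-contract base, more than half again the subscription cost.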

With open source options, financial cost is less of a concern. That said, QA professionals need certain skill sets -- and ample time -- to manage the tool themselves. For example, Vegeta is an open source load testing tool available on GitHub. Even though the tool is free, users have to install it, decide which server to run it on and maintain it. If they need help deploying or using the tool, they must rely on whatever documentation and community help they can find online.

Other considerations for choosing a tool

In general, test automation tools, whether open source or proprietary, focus primarily on application performance testing or automated code checking. However, they can also offer features related to software functionality, reliability, efficiency, maintainability and portability. Some also support development planning by feeding those plans into Jira or another Kanban-style board.

A few open source tools to consider are the following:

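- Selenium. A browser automation framework for testing web applications, with client libraries for Python, Java, C#, Ruby and JavaScript, among other languages.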

- JMeter. A load and performance testing tool that can simulate a specified number of users accessing a web application.

- Vegeta. Similar to JMeter, Vegeta is an HTTP load testing tool built to drill HTTP services with a constant request rate.
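Vegeta's constant-rate approach differs from simulating a fixed number of users: requests go out on a fixed schedule no matter how quickly the server answers. The stdlib-only Python sketch below illustrates that idea and summarizes status codes and latency. It is not Vegeta's code or API; `attack`, `summarize` and the target URL in the usage note are hypothetical, and real testing should use a dedicated tool.

```python
import concurrent.futures
import http.client
import statistics
import time
import urllib.error
import urllib.request
from dataclasses import dataclass


@dataclass
class Result:
    status: int     # HTTP status code, or 0 if the request failed outright
    latency: float  # seconds from send to end of response


def _hit(url: str, timeout: float) -> Result:
    start = time.perf_counter()
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            resp.read()
            status = resp.status
    except urllib.error.HTTPError as err:
        status = err.code  # server answered with a 4xx/5xx status
    except (OSError, http.client.HTTPException):
        status = 0  # connection refused, timeout, truncated response, etc.
    return Result(status, time.perf_counter() - start)


def attack(url: str, rate: int, duration: float,
           timeout: float = 5.0, max_workers: int = 64) -> list[Result]:
    """Send `rate` GET requests per second for `duration` seconds.

    Requests are dispatched on a fixed schedule, so a slow server does not
    slow the sender down (up to max_workers requests in flight).
    """
    if rate <= 0 or duration <= 0:
        raise ValueError("rate and duration must be positive")
    total = int(rate * duration)
    interval = 1.0 / rate
    start = time.perf_counter()
    with concurrent.futures.ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = []
        for i in range(total):
            # Wait until this request's scheduled send time.
            delay = start + i * interval - time.perf_counter()
            if delay > 0:
                time.sleep(delay)
            futures.append(pool.submit(_hit, url, timeout))
        return [f.result() for f in futures]


def summarize(results: list[Result]) -> dict:
    """Request count, share of 2xx responses, mean and approximate p95 latency."""
    latencies = sorted(r.latency for r in results)
    ok = sum(1 for r in results if 200 <= r.status < 300)
    return {
        "requests": len(results),
        "success_ratio": ok / len(results) if results else 0.0,
        "mean_s": statistics.fmean(latencies) if latencies else 0.0,
        # Lower nearest-rank approximation of the 95th percentile.
        "p95_s": latencies[int(0.95 * (len(latencies) - 1))] if latencies else 0.0,
    }
```

A run against a hypothetical local service would look like `summarize(attack("http://localhost:8080/", rate=50, duration=5))`.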

A few proprietary options to consider are the following:

- Firebase. A cloud-based Google platform whose Test Lab service enables QA professionals to test an application on a range of real and virtual devices.

- BlazeMeter. A cloud-based performance testing tool that integrates with JMeter.

- LoadView. A cloud-based performance testing tool that emulates users accessing a website and front-end web APIs.

- LoadNinja. A cloud-based load testing tool that lets teams record test scripts and rerun them continuously without having to rewrite them.

Before choosing a QA tool, testers must understand an application's requirements. For instance, if QA professionals need to test a back-end application or ensure code quality, a performance testing tool that focuses on HTTP probably doesn't make sense. Conversely, a performance testing tool that focuses on HTTP traffic is a good fit for QA professionals who test front-end applications and need to assess how many users a website can handle before its performance degrades.
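For the front-end case, the question of how many users a site can handle is often answered by stepping up the load and checking each step against a latency target. The Python sketch below shows that loop under stated assumptions: `measure` is a hypothetical callable that runs one load step with the given number of users and returns observed response times in seconds (in practice, output from a tool such as JMeter or Vegeta), and the p95 threshold is an example target, not a recommendation.

```python
from typing import Callable, Optional, Sequence


def p95(samples: Sequence[float]) -> float:
    """95th percentile using the nearest-rank method."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = -(-95 * len(ordered) // 100)  # ceil(0.95 * n) with integer math
    return ordered[rank - 1]


def find_capacity(
    measure: Callable[[int], Sequence[float]],
    user_levels: Sequence[int],
    p95_limit: float,
) -> Optional[int]:
    """Return the highest user level whose p95 latency stays within p95_limit.

    Levels are tried in ascending order, and the search stops at the first
    level that breaches the limit. Returns None if the lowest level fails.
    """
    capacity = None
    for users in sorted(user_levels):
        if p95(measure(users)) > p95_limit:
            break
        capacity = users
    return capacity


if __name__ == "__main__":
    # Hypothetical stand-in for a real load step: latency grows with users.
    def fake_measure(users: int) -> list[float]:
        return [0.1 + 0.002 * users] * 20

    print(find_capacity(fake_measure, [50, 100, 200, 400], p95_limit=0.6))  # 200
```

Stopping at the first breach assumes latency rises with load; a noisy environment may call for repeating each step before trusting the result.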