How to build a test automation framework

To create an automated testing framework, establish plans for test creation, tools, reporting, logs and maintenance.

Automated testing projects frequently fall apart. That's because teams inevitably run into various obstacles that involve resources, maintenance and code policy. If a team can define how its resources and tests should work for given software code and projects, it can build a test automation framework that lasts -- one with valid, useful test scripts.

The point of an automated testing framework is to define and optimize tests to run quickly and autonomously.

Step 1. Lay out the plan and rules

As with any successful project, the first step to create a test automation framework involves detailing a plan.

Start with a general overview for the automated testing framework. Organize and define resources, tools and coding languages as well as reporting, error logging, maintenance and test script security. Essentially, decide on and document how the team will develop, store and execute automated tests.

Define how testers, or developers, should write automated tests as well as what coding languages they will use. Alternatively, use a tool that helps create both the test automation framework and the scripts themselves.

Finally, decide how the team will handle version control. Many test automation tools are prescriptive about source control and coding. These tools eliminate a lot of guesswork, leaving you with only a few decisions to make about assigning resources to tasks.

If you build a custom test automation framework, you must define more of these items yourself, including source control and coding conventions. Both paths work, but plan accordingly. For example, if you choose a test automation tool, ensure team members receive specialized training on how to use it.

Don't forget script security, in addition to version control. Automated tests are valuable scripts. Protect them from overwrites, and spare your testers the time wasted fixing errors and restoring lost code when safeguards are insufficient.

When you build a test automation framework, determine the following:

- Who will code the test scripts, and who will execute them?

- Who is responsible for maintenance?

- At what stage of the development cycle will you execute tests, and how often?

- Where will you store the test scripts and test framework documentation?

- What test management or automation tools do you need?

- What version control system will you use to safeguard test scripts?

Step 2. Organize resources and tools

Identify who will manage and develop code, particularly test scripts. These team members should have strong organizational or project management skills. Coding skills may enhance test script creation, but a general understanding is all that's needed. Confirm the team has the resources, knowledge and dedication for the task. Otherwise, if you add the work to team members who already carry other critical work, the creation of automated tests is likely to be deprioritized.

Execute tests frequently. When you determine the structure of test cases in a framework, account for the duration of the test execution as well as how frequently the tests run. Also, account for time spent analyzing failed tests for defects.

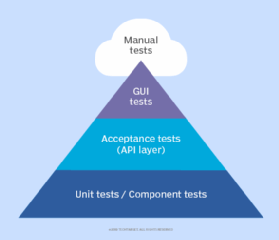

Software teams need different types of automated test suites. For example, smoke tests can cover critical functionality, and a regression suite can include integrated tests, if needed. Each type of test needs an execution plan.
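As a rough illustration of how one set of tests can feed several suites, the sketch below tags test functions with suite names and runs a suite by name. The `suite` decorator, `SUITES` registry and the two placeholder tests are hypothetical names invented for this example; most test runners offer their own tagging or grouping mechanism that would serve the same purpose.

```python
from collections import defaultdict
from typing import Callable, Dict, List

# Maps a suite name (e.g. "smoke") to the test functions tagged with it.
SUITES: Dict[str, List[Callable[[], None]]] = defaultdict(list)


def suite(*names: str):
    """Decorator that registers a test function under one or more suites."""
    def register(test: Callable[[], None]) -> Callable[[], None]:
        for name in names:
            SUITES[name].append(test)
        return test
    return register


@suite("smoke", "regression")
def test_login():
    # Placeholder check standing in for a critical-path test.
    assert "user".upper() == "USER"


@suite("regression")
def test_report_export():
    # Placeholder check standing in for a broader regression test.
    assert sum([1, 2, 3]) == 6


def run_suite(name: str) -> Dict[str, str]:
    """Run every test in the named suite and return pass/fail by test name."""
    results: Dict[str, str] = {}
    for test in SUITES[name]:
        try:
            test()
            results[test.__name__] = "pass"
        except AssertionError:
            results[test.__name__] = "fail"
    return results
```

With this layout, a short smoke suite could run on every build while the larger regression suite runs on a slower schedule, which is one way to give each type of test its own execution plan.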

Store the documentation and test scripts in a convenient location accessible to all team members to keep work on track and the team productive. If necessary, provide training on the version control system.

As the final planning step, state any tools the team will use to build a test automation framework or scripts. Even if you want to build from scratch, detail what the team should use for test script development, so it can be as productive as possible.

Step 3. Set policies for reporting, logs and maintenance

Include reporting and error logging in your automated testing framework. If you don't, the team will spend considerable time analyzing automated test script failures one by one.

Most developers can create a useful error log accessible to anyone, either within the application or in a shared folder. Logs and reports help testers fix script errors and identify application errors for repair. The more thorough the information collected on the error, the more easily and quickly the team can remedy it.
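A minimal sketch of such logging, using Python's standard `logging` module, might look like the following. The `run_with_logging` wrapper and the two sample checks are hypothetical. The split between a failed check (possibly an application error) and any other exception (possibly a script error) is a simplifying assumption for illustration, not a reliable rule.

```python
import logging
from typing import Callable, Dict

logger = logging.getLogger("test_framework")


def run_with_logging(test: Callable[[], None]) -> Dict[str, str]:
    """Run one test, log any failure with its traceback, and return a record."""
    name = test.__name__
    try:
        test()
    except AssertionError as exc:
        # A failed check: the application may not have behaved as expected.
        logger.error("%s failed a check: %s", name, exc, exc_info=True)
        return {"test": name, "status": "fail", "detail": str(exc)}
    except Exception as exc:
        # Any other exception: the test script itself may be broken.
        logger.error("%s raised %s: %s", name, type(exc).__name__, exc, exc_info=True)
        return {"test": name, "status": "error", "detail": f"{type(exc).__name__}: {exc}"}
    return {"test": name, "status": "pass", "detail": ""}


def check_total():
    # Simulates a check that the application fails.
    raise AssertionError("total mismatch")


def check_lookup():
    # Simulates a bug in the script: the key does not exist.
    {}["missing"]
```

Returning a record for every test, in addition to writing the log, gives the team something to aggregate into a report so that failures don't have to be reviewed one script at a time.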

Error logging and reporting are key parts of a defined maintenance plan. Many test automation efforts fail when maintenance falls behind. If you don't determine a structure and a point person for maintenance work, the team can easily get bogged down with tests that need updates. Make maintenance part of the planned test cycle to avoid team surprises and obstacles.

Test case maintenance isn't a new concept. Teams have always needed to update and synchronize manual test cases with application changes. Every time a code change happens, testers should update affected automated test scripts to avoid false failures.

One idea is to cycle the tests. After you establish a reliable suite of automated smoke and regression test cases, bundle them in test suites that fully cover the application functionality even if you need to pull several tests out of execution for maintenance. Alternatively, pull out automated test scripts that require maintenance and replace them with manual tests in the interim.
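One simple way to track this is a maintenance list that the framework consults before each run. The sketch below is an assumption about how that could look. `plan_run` and the test names in the usage note are hypothetical.

```python
from typing import List, Set, Tuple


def plan_run(suite: List[str], in_maintenance: Set[str]) -> Tuple[List[str], List[str]]:
    """Split a suite into tests to run automatically and tests to cover manually.

    Tests listed in `in_maintenance` are pulled from automated execution and
    returned separately so that someone can run them by hand in the interim.
    """
    automated = [name for name in suite if name not in in_maintenance]
    manual = [name for name in suite if name in in_maintenance]
    return automated, manual
```

For example, `plan_run(["login", "order_entry", "discharge"], {"order_entry"})` keeps `login` and `discharge` in the automated run and flags `order_entry` for manual coverage until its script is repaired.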

Step 4. Take the framework for a test drive

After you build a test automation framework, give it a trial run. Test the test framework itself. Develop a small suite of automated smoke tests detailed enough to verify an application's critical functionality in a defined area.

For example, if you automate a healthcare system where physicians enter medication orders, create an automated test suite that covers the physician's full workflow when they enter a medication order. Include any necessary alert functionality or alternate workflows, such as different steps for specific medication types, like narcotics.
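To make the shape of such a suite concrete, here is a toy sketch. `OrderSystem` is a hypothetical in-memory stand-in for the application under test. Its allergy alert and its second sign-off rule for narcotics are invented purely for illustration and do not describe any real clinical system. A real suite would drive the actual application through its UI or API.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class OrderSystem:
    """Hypothetical stand-in for the application under test."""
    allergies: List[str] = field(default_factory=list)
    orders: List[str] = field(default_factory=list)
    alerts: List[str] = field(default_factory=list)

    def enter_order(self, drug: str, narcotic: bool = False,
                    second_signoff: bool = False) -> bool:
        """Accept the order, or raise an alert and reject it."""
        if drug in self.allergies:
            self.alerts.append(f"allergy: {drug}")
            return False
        if narcotic and not second_signoff:
            # Invented rule: narcotics need an extra step before acceptance.
            self.alerts.append(f"sign-off required: {drug}")
            return False
        self.orders.append(drug)
        return True


def smoke_standard_order() -> bool:
    """Main workflow: a routine order is accepted and recorded."""
    system = OrderSystem()
    return system.enter_order("amoxicillin") and system.orders == ["amoxicillin"]


def smoke_allergy_alert() -> bool:
    """Alert functionality: an order matching an allergy is blocked."""
    system = OrderSystem(allergies=["penicillin"])
    accepted = system.enter_order("penicillin")
    return not accepted and system.alerts == ["allergy: penicillin"]


def smoke_narcotic_workflow() -> bool:
    """Alternate workflow: a narcotic is blocked until the extra step is done."""
    system = OrderSystem()
    blocked = not system.enter_order("morphine", narcotic=True)
    accepted = system.enter_order("morphine", narcotic=True, second_signoff=True)
    return blocked and accepted and system.orders == ["morphine"]
```

The three checks map to the main workflow, the alert functionality and one alternate workflow, which is roughly the coverage a first smoke suite for this area would aim for.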

You'll know if the test framework needs adjustment based on the smoke test suite. Execute the automated tests first, check the results and then assess the tests by executing them manually. Did the automated tests miss any defects that manual tests found? Were failures easy or difficult to analyze? Did failures occur in the test scripts or in the application code? Adjust the framework as needed. Then, automate another smoke test suite that tests a different workflow in the application.

This way, you build a valid series of automated test suites while you prove out the framework. Make changes to the process early on to eliminate obstacles down the road. In the end, you'll get valid automated test suites and a successful test automation implementation.