
How autonomous AI workloads reshape cloud cost management

AI workloads break traditional cloud cost models. As agents and inference loops drive spend, organizations must embed behavior-aware cost governance into system architecture.

For more than a decade, cloud cost management has rested on a stable assumption: Workloads are predictable, human-initiated and bounded by provisioning decisions. That assumption is eroding.

AI workloads -- particularly inference-heavy, agent-driven systems -- consume resources continuously, adapt execution paths dynamically and operate with limited human intervention.

As a result, cloud costs are no longer driven primarily by instance sizing, utilization curves or reserved capacity decisions. They are increasingly shaped by system behavior, including the following:

- How agents reason.

- How context is retrieved.

- How often models are invoked.

- How feedback loops amplify execution over time.

AI is not simply increasing cloud spend; it is invalidating the economic model most FinOps practices rely on. Post-hoc optimization, static budgets and capacity-based controls struggle to keep pace with autonomous systems that decide when to run, what to access and how long to operate.

Regaining control requires a shift from capacity-centric cost management to behavior-aware governance, treating cost as a runtime property shaped by architectural decisions rather than a financial afterthought addressed after deployment.

Why traditional cloud cost models are breaking

Classic cloud cost models were designed for workloads with three defining characteristics:

- Requests were human-initiated or application-triggered.

- Execution paths were largely deterministic.

- Resource consumption scaled predictably with load.

Under these assumptions, cost governance focused on capacity decisions such as instance types, autoscaling thresholds, reserved instances and budget alerts. Optimization happened after the fact, informed by billing reports and historical usage patterns. AI workloads violate all three assumptions.

Inference-centric systems rise

Inference-centric systems continuously generate activity, often without direct user interaction. Agent-based architectures introduce non-deterministic execution paths, where one inference call can trigger many others. Context retrieval, tool invocation and iterative reasoning loops compound resource consumption in ways that are difficult to predict upfront.

Industry observations already reflect this shift. The FinOps Foundation's State of FinOps 2026 Report notes that AI spend governance is becoming a mainstream need rather than a niche or experimental effort. Spend accumulates through repeated inference and coordination rather than through a single dominant resource. The issue is not that AI workloads are expensive in isolation -- it is that their cost emerges from behavior, not provisioning.

From capacity consumption to behavioral spend

In traditional cloud environments, cost scaled roughly with utilization. More traffic meant more instances, which meant higher bills. In AI-driven systems, cost often scales with decision complexity rather than traffic volume.

A single request could trigger the following:

- Multiple model invocations.

- Retrieval across several vector indexes.

- Tool calls to external services.

- Iterative reasoning cycles across agents.

Each step could be inexpensive on its own. Together, they create compounding cost patterns that are difficult to observe using conventional infrastructure metrics. Token usage, context length and model routing decisions become as important as CPU or memory utilization -- yet many cost tools remain blind to these dimensions.
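The compounding effect can be made concrete with a small worked example. The sketch below is illustrative only: the `Step` type, the `request_cost` function and every price and call count are hypothetical figures chosen for the arithmetic, not measurements from any provider.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Step:
    kind: str             # e.g. "model", "retrieval", "tool"
    unit_cost_usd: float  # hypothetical cost of a single call
    calls: int            # calls made per reasoning cycle


def request_cost(steps: list[Step], reasoning_cycles: int) -> float:
    """Cost of one request when every step repeats on each reasoning cycle."""
    per_cycle = sum(s.unit_cost_usd * s.calls for s in steps)
    return per_cycle * reasoning_cycles


# Hypothetical request: three model calls, four index lookups, two tool calls.
steps = [
    Step("model", 0.004, 3),
    Step("retrieval", 0.0005, 4),
    Step("tool", 0.001, 2),
]

single_pass = request_cost(steps, reasoning_cycles=1)
five_cycles = request_cost(steps, reasoning_cycles=5)
print(f"one pass: ${single_pass:.3f}, five cycles: ${five_cycles:.3f}")
```

With these made-up figures, no individual call costs more than half a cent, yet one pass comes to about $0.016 and five reasoning cycles to about $0.08 for the same user request. Traffic volume did not change; only the system's behavior did.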

This explains why traditional FinOps controls struggle. Reserved capacity discounts and static budgets do little to constrain systems that dynamically decide how much work to perform. Post-billing analysis identifies overspend only after it has occurred, offering limited leverage in environments where execution paths change continuously.

Why post-hoc optimization fails for AI workloads

Most FinOps practices operate downstream of execution. Teams review monthly bills, identify anomalies and adjust configurations or budgets accordingly. This approach assumes workloads are stable enough for retrospective analysis to be effective. AI systems undermine that assumption.

Autonomous agents can change behavior between billing cycles, reacting to new data, updated prompts or revised policies. Optimization recommendations based on last month's usage could become obsolete by the time they are applied.

More importantly, many AI cost drivers are invisible in standard billing data. Cloud invoices rarely explain why a model was invoked, which agent triggered it, or whether the invocation added value. Without this context, cost optimization becomes guesswork.

In enterprise environments, this manifests as a loss of explainability. Cloud teams can report spend but struggle to explain why it occurred or which behaviors drove it. The problem is not merely higher bills -- it is reduced predictability and diminished control.

A new model: Behavior-aware cost governance

To address these challenges, organizations must treat cost as a runtime control problem, not a reporting exercise. Behavior-aware cost governance embeds economic constraints directly into system design and execution, enabling teams to shape cost outcomes while AI systems operate.

This approach introduces three key shifts:

- From static budgets to dynamic policy enforcement.

- From infrastructure metrics to inference-level observability.

- From after-the-fact optimization to real-time control.

Instead of asking whether workloads stayed within budget, teams ask whether systems behaved within acceptable economic boundaries.

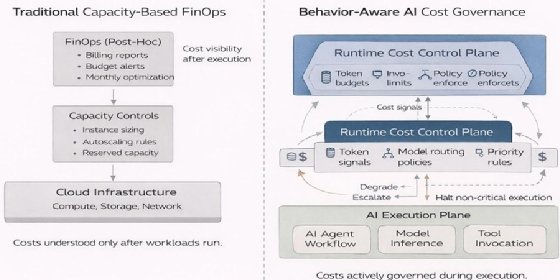

Traditional FinOps governs cost after execution using infrastructure-level metrics. AI-native cost governance enforces economic constraints at runtime based on inference behavior and agent execution. Figure 1 compares traditional cloud cost management, which focuses on provisioning and utilization, with AI-native governance, which enforces policies at the inference, agent and workflow levels during runtime.

Architectural controls that enable cost as a runtime property

Behavior-aware cost governance is not a tooling feature; it is an architectural pattern. Several controls are emerging as foundational in AI-native cloud environments.

1. Inference-level cost visibility

Teams must observe cost at the point of incurrence -- during inference. This includes tracking token usage, model selection, context size and invocation frequency per agent or workflow.

Without this granularity, it is impossible to distinguish productive AI activity from runaway execution loops. Organizations that introduce inference-level observability consistently report improved ability to correlate spend with business outcomes rather than treating AI costs as opaque overhead.
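As a minimal sketch of what inference-level visibility might look like, the example below records one event per model call and rolls the events up by agent. The `InferenceEvent` fields, the model names and the per-1,000-token prices are assumptions made for illustration; a real system would take them from its own provider and telemetry pipeline, and would likely price prompt and completion tokens separately.

```python
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical flat prices per 1,000 tokens; not real provider pricing.
PRICE_PER_1K_TOKENS = {"small-model": 0.0005, "large-model": 0.01}


@dataclass(frozen=True)
class InferenceEvent:
    agent: str
    workflow: str
    model: str
    prompt_tokens: int      # includes any retrieved context
    completion_tokens: int

    @property
    def total_tokens(self) -> int:
        return self.prompt_tokens + self.completion_tokens

    @property
    def cost_usd(self) -> float:
        return self.total_tokens / 1000 * PRICE_PER_1K_TOKENS[self.model]


def summarize_by_agent(events: list[InferenceEvent]) -> dict[str, dict]:
    """Aggregate call count, token usage and estimated cost per agent."""
    summary = defaultdict(lambda: {"calls": 0, "tokens": 0, "cost_usd": 0.0})
    for event in events:
        row = summary[event.agent]
        row["calls"] += 1
        row["tokens"] += event.total_tokens
        row["cost_usd"] += event.cost_usd
    return dict(summary)


events = [
    InferenceEvent("planner", "claims-triage", "large-model", 1500, 500),
    InferenceEvent("planner", "claims-triage", "small-model", 800, 200),
    InferenceEvent("researcher", "claims-triage", "small-model", 3000, 1000),
]
summary = summarize_by_agent(events)
for agent, row in summary.items():
    print(agent, row["calls"], row["tokens"], f"${row['cost_usd']:.4f}")
```

Grouping by `workflow` instead of `agent` follows the same pattern. The point of the design is that each record carries the "who" and "why" that a cloud invoice omits.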

2. Policy-driven model routing

Not all tasks require the most expensive model. Cost-aware architectures dynamically route requests based on precision, latency and budget constraints. Lower-cost models handle routine tasks, reserving premium models for high-value decisions.

Embedding these policies at runtime enables systems to adapt economically without manual intervention. Crucially, routing decisions must remain auditable to support governance and accountability.
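One way such a routing policy could be expressed is sketched below. It is an assumption-laden illustration rather than a reference design: the `ModelOption` and `Router` types, the numeric quality score standing in for precision, and all costs and latencies are hypothetical. The router picks the cheapest model that satisfies the task's constraints and appends every decision to an audit log.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass(frozen=True)
class ModelOption:
    name: str
    cost_per_call_usd: float  # hypothetical
    quality: int              # hypothetical 1-10 score standing in for precision
    latency_ms: int           # hypothetical typical latency


@dataclass
class Router:
    options: list[ModelOption]
    audit_log: list[dict] = field(default_factory=list)

    def route(self, task_id: str, min_quality: int,
              max_latency_ms: int, budget_usd: float) -> Optional[ModelOption]:
        """Return the cheapest eligible model, or None if nothing qualifies."""
        eligible = [
            m for m in self.options
            if m.quality >= min_quality
            and m.latency_ms <= max_latency_ms
            and m.cost_per_call_usd <= budget_usd
        ]
        chosen = min(eligible, key=lambda m: m.cost_per_call_usd, default=None)
        # Record the inputs and the outcome so the decision can be reviewed later.
        self.audit_log.append({
            "task_id": task_id,
            "min_quality": min_quality,
            "max_latency_ms": max_latency_ms,
            "budget_usd": budget_usd,
            "chosen": chosen.name if chosen else None,
        })
        return chosen


router = Router([
    ModelOption("small-model", 0.001, quality=5, latency_ms=200),
    ModelOption("large-model", 0.03, quality=9, latency_ms=1200),
])
routine = router.route("summarize-ticket", min_quality=4,
                       max_latency_ms=500, budget_usd=0.01)
high_value = router.route("approve-claim", min_quality=8,
                          max_latency_ms=2000, budget_usd=0.05)
unroutable = router.route("realtime-premium", min_quality=8,
                          max_latency_ms=300, budget_usd=0.05)
```

Here the routine task lands on the cheaper model, the high-value task on the premium one, and the third request returns `None` because no model meets both its quality and latency constraints. How to handle that case (relax a constraint, queue the task or escalate) is itself a policy decision.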

3. Token and execution budgets

Static project budgets provide limited control over autonomous systems. Instead, teams define execution budgets that constrain the amount of work an agent or workflow can perform. These budgets operate at token, step, or time boundaries, enforcing limits during execution rather than after billing.

When limits are reached, systems can degrade gracefully, escalate to humans, or halt non-critical tasks -- preserving predictability without disabling functionality.
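The sketch below shows one possible shape for an execution budget that is checked before each step rather than after billing. The `ExecutionBudget` class, the `BudgetExceeded` exception and the specific limits are hypothetical names and values chosen for illustration; the list of per-step token counts stands in for real agent steps.

```python
import time
from dataclasses import dataclass, field


class BudgetExceeded(Exception):
    """Raised when the next step would cross a configured limit."""


@dataclass
class ExecutionBudget:
    max_tokens: int
    max_steps: int
    max_seconds: float
    tokens_used: int = 0
    steps_used: int = 0
    started_at: float = field(default_factory=time.monotonic)

    def charge(self, tokens: int) -> None:
        """Admit one step of `tokens` size, or raise before it runs."""
        if self.steps_used + 1 > self.max_steps:
            raise BudgetExceeded("step limit")
        if self.tokens_used + tokens > self.max_tokens:
            raise BudgetExceeded("token limit")
        if time.monotonic() - self.started_at > self.max_seconds:
            raise BudgetExceeded("time limit")
        self.tokens_used += tokens
        self.steps_used += 1


def run_with_budget(step_token_costs: list[int], budget: ExecutionBudget) -> dict:
    """Run simulated steps; stop and escalate instead of overspending."""
    completed = 0
    for tokens in step_token_costs:
        try:
            budget.charge(tokens)
        except BudgetExceeded as exc:
            return {"status": "escalated", "reason": str(exc),
                    "completed_steps": completed}
        completed += 1  # a real agent would perform the step here
    return {"status": "done", "reason": None, "completed_steps": completed}


over = run_with_budget(
    [400, 400, 400],
    ExecutionBudget(max_tokens=1000, max_steps=10, max_seconds=60),
)
within = run_with_budget(
    [100, 100],
    ExecutionBudget(max_tokens=1000, max_steps=10, max_seconds=60),
)
```

In the first run, the third step would push usage to 1,200 tokens, so the workflow stops after two completed steps and reports an escalation instead of silently continuing. The "escalated" status is a placeholder for whichever graceful response fits the workload.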

4. Feedback loop control

Agentic systems often contain feedback loops where outputs influence subsequent actions. Left unchecked, these loops can amplify costs rapidly. Architectural controls that limit recursion depth, invocation frequency or context expansion help prevent exponential cost growth.
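To illustrate how such limits contain growth, the sketch below simulates an agent whose every output spawns follow-up tasks. The `LoopGuard` class, the `run_agent` function and the fan-out of two are hypothetical; no model is called, and the function only counts how many invocations the guard allows.

```python
from dataclasses import dataclass


@dataclass
class LoopGuard:
    max_depth: int        # deepest recursion level allowed (root is depth 0)
    max_invocations: int  # total invocations allowed across the whole run
    invocations: int = 0

    def allow(self, depth: int) -> bool:
        """Admit an invocation only if both limits still hold."""
        if depth > self.max_depth or self.invocations >= self.max_invocations:
            return False
        self.invocations += 1
        return True


def run_agent(task: str, guard: LoopGuard, fan_out: int = 2, depth: int = 0) -> int:
    """Simulated agent: each executed task spawns `fan_out` follow-up tasks.

    Returns the number of invocations executed in this subtree.
    """
    if not guard.allow(depth):
        return 0
    executed = 1  # a real agent would call a model or tool here
    for i in range(fan_out):
        executed += run_agent(f"{task}.{i}", guard, fan_out, depth + 1)
    return executed


depth_limited = run_agent("root", LoopGuard(max_depth=3, max_invocations=1000))
count_limited = run_agent("root", LoopGuard(max_depth=3, max_invocations=10))
print(depth_limited, count_limited)
```

With a fan-out of two, each extra level of depth doubles the number of calls, so the depth limit of three caps the run at 1 + 2 + 4 + 8 = 15 invocations, and the invocation cap cuts the second run off at 10. Without either limit, this simulated loop would never terminate.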

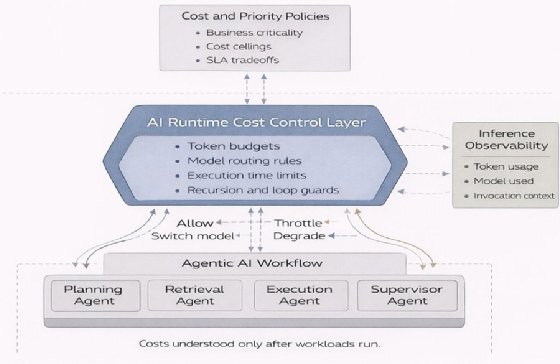

Figure 2 illustrates runtime governance enforcing token budgets, model routing policies and execution limits across agent-based AI workflows, providing real-time economic control during inference.

Implications for cloud and FinOps teams

The shift to behavior-aware cost governance has organizational implications. Cloud teams, FinOps practitioners and AI engineers must collaborate more closely to align architectural decisions with economic outcomes.

FinOps teams expand from cost reporting into policy design, helping define acceptable cost behaviors and tradeoffs. Cloud architects play a central role in embedding these controls into platforms, ensuring economic constraints are enforced consistently across workloads.

As AI systems gain autonomy, cost governance -- like security and reliability -- moves closer to runtime execution.

Action items for cloud teams

Organizations experimenting with AI can take several practical steps:

- Instrument inference early. Capture token usage, model selection and invocation context before scaling deployments.

- Define economic policies explicitly. Translate budget constraints into enforceable runtime rules.

- Evaluate orchestration and routing capabilities. Favor platforms that support dynamic model selection and policy enforcement.

- Integrate cost signals into observability. Treat economic metrics as first-class signals alongside performance and reliability.

- Align stakeholders around behavior, not spend alone. Shift conversations from monthly bills to system behavior and value creation.

Conclusion

AI workloads are forcing a fundamental rethink of cloud economics. As systems become autonomous and adaptive, costs emerge from behavior rather than provisioning. Traditional capacity-based models -- optimized after the fact -- struggle to provide control, predictability or accountability.

Organizations that succeed in scaling AI will be those that embed cost governance directly into system architecture, treating economics as a runtime property shaped by design decisions. In doing so, they move from reacting to AI spend to actively governing how AI systems consume resources -- a shift that is becoming essential as AI moves from experimentation to production infrastructure.

Varun Raj is a cloud and AI engineering executive specializing in enterprise-scale cloud computing, cloud modernization and AI-native architectures. His work focuses on designing and operationalizing large-scale cloud, data and distributed systems, including generative AI and multi-agent platforms, as durable, production-grade capabilities rather than isolated implementations.

He concentrates on platform-level design across hybrid and multi-cloud environments, combining Kubernetes-based infrastructure, AI orchestration and governance controls to enable reliable, cost-efficient and responsible AI adoption. His experience spans highly regulated industries such as healthcare and financial services, where cloud and AI systems must meet strict requirements for reliability, security and compliance.