WavebreakmediaMicro - Fotolia

IT wrangles with test automation benefits and challenges

Test automation is a valuable practice, but it presents challenges for teams and individuals alike. A gradual approach to automation helps boost the odds of successful adoption.

ORLANDO, Fla. -- Organizations adopt test automation to expedite software delivery through the removal of manual tests, but many continue to weigh test automation's numerous technical and cultural challenges.

Test automation and continuous testing were frequent topics of conversation here at the STAREAST conference, though adoption and maturity varied widely among attendees. While most lauded the expertise of QA professionals in manual test execution, the consensus was that test automation is here to stay. As organizations weigh its benefits and challenges, the practice will continue to shape dev and test responsibilities.

"When you're able to implement [test] automation the right way in the pipeline, as close to when the developer checks in their code as possible, you get real tangible results," said Adam Auerbach, co-head of the DevTestSecOps practice at EPAM Systems, a software development consultancy based in Newtown, Pa.

Test automation enables organizations to improve productivity and time to market, theoretically with no impact on software quality, he said. "Being able to deploy early and often has less risk, but also has bigger benefits from a customer perspective, because now you can iterate faster on their feedback," he said.

Adam Auerbach


Many STAREAST attendees arrived eager to implement or augment their test automation suites.

One testing center of excellence (TCOE) manager at a Fortune 500 food distribution company, who asked to remain anonymous, described his company's test automation efforts over an 18-month span. The company previously executed manual tests, but then used Selenium on two of its customer-facing apps, and slimmed execution time of its regression test automation suite from four weeks to just one day.

The company plans more test automation as it moves from an iterative Waterfall method to Agile. For automated tests on some of its heavier back-end systems, which rely on SAP, the company switched to Micro Focus' Unified Functional Testing. The tool change, along with a transition from Waterfall to Agile that de-siloes QA, will help the company automate even more of its test suite in the future.

"Getting new enhancements out to our customer base quicker, and to be able to be more consistent with our testing, getting a more consistent response back from them as well -- that's success," the TCOE manager said. "It's good to get those early successes, and then be able to sell the whole benefit of automation to IT management."

Another attendee, a senior QA analyst for a public sector agency in Washington, D.C., said his team will take another crack at test automation after problems in its previous attempts to use Katalon Studio for a five-year-old app. As the team concentrates on product quality in advance of a move to the cloud, he hopes to get developers on board with more testing and to gradually enforce test automation through small wins.

Jeffery Payne


"We always push that you need to do this incrementally and move people slowly," said Jeffery Payne, CEO at Coveros, a software consultancy based in Fairfax, Va., that last year acquired TechWell, which hosts STAREAST. "[Testers] are just not going to show up day two and start writing code."

Payne recommends automating everything under the UI, where human eyes don't offer much benefit over well-scripted automated tests. "Unit tests, component tests, API testing, you ought to automate the heck out of all that," he said. "That should be your automation focus."
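As a rough illustration of the kind of below-the-UI test Payne describes, here is a minimal sketch that uses Python's standard `unittest` module. The `order_total` function and its discount rule are hypothetical and invented for this example; they do not come from any company or speaker quoted in this article.

```python
import unittest


def order_total(unit_price_cents, quantity, discount_percent=0):
    """Hypothetical business rule: order total in cents after a percentage discount."""
    if quantity < 0:
        raise ValueError("quantity must be non-negative")
    if not 0 <= discount_percent <= 100:
        raise ValueError("discount_percent must be between 0 and 100")
    subtotal = unit_price_cents * quantity
    # Integer math keeps the result in whole cents.
    return subtotal * (100 - discount_percent) // 100


class OrderTotalTest(unittest.TestCase):
    """Unit tests that exercise the rule directly, with no UI involved."""

    def test_no_discount(self):
        self.assertEqual(order_total(250, 4), 1000)

    def test_percentage_discount(self):
        self.assertEqual(order_total(250, 4, discount_percent=10), 900)

    def test_negative_quantity_is_rejected(self):
        with self.assertRaises(ValueError):
            order_total(250, -1)


def run_suite():
    """Load and run the tests above, returning the unittest result object."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(OrderTotalTest)
    return unittest.TextTestRunner(verbosity=0).run(suite)


if __name__ == "__main__":
    run_suite()
```

Checks like these run in milliseconds and need no browser, which is one reason they tend to be cheaper to automate and maintain than tests driven through the UI.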

To see test automation benefits, overcome these challenges

Part of teams' struggle to adopt test automation derives from an inconsistent approach to software delivery. Waterfall teams -- whether they call themselves Agile or not -- will see inherent delay in test execution, Auerbach said.

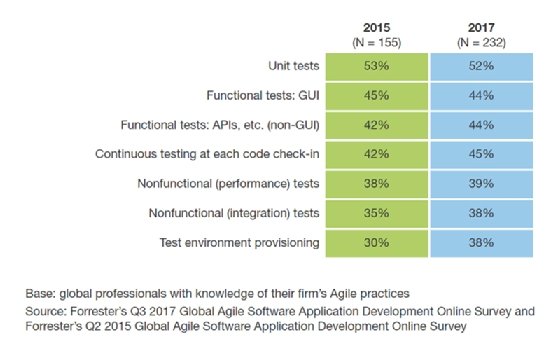

Among test automation adopters, barriers limit the maturity of the practice. According to the Forrester Research report "The Path To Autonomous Testing: Augment Human Testers First," test automation adoption remained largely flat from 2015 to 2017.

The lack of test scripting skills inhibits a team's success with automated tests. "It's a people, process and technology issue," said Diego Lo Giudice, Forrester Research analyst and author of the report. "It's [about] understanding all the types of automation that you might need to do in your application landscape in your environment. And it's [also] understanding if you've got the skills, because if you have to go beyond the user interface [for tests], it becomes more developer-led."

Diego Lo Giudice


As developers take on more testing responsibilities, testers must pick up the skills necessary to script these tests, lest management replace them with engineers. Testers themselves will be expected to perform a variety of tasks that help stand up automated tests, such as service virtualization, integrating tools for test data management or using containers for the test environment, Auerbach said.

"Your testers have to be technical," he said. "In order to mature your automation process, you need to solve some of those other issues as well. Really, this is all engineering. ... It's probably just as complex as writing an application."
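To give a sense of what that engineering can look like, the sketch below shows a much-simplified, in-process stand-in for a dependency, built with Python's standard `unittest.mock`. It is not a full service virtualization tool, which would typically simulate a service over the network. The `shipping_quote` function, the `get_stock` method and the SKU values are all hypothetical names chosen for illustration.

```python
from unittest.mock import Mock


def shipping_quote(inventory_client, sku, quantity):
    """Hypothetical code under test: checks stock via an inventory service client."""
    stock = inventory_client.get_stock(sku)
    if stock < quantity:
        return {"status": "backordered", "short_by": quantity - stock}
    return {"status": "in_stock", "short_by": 0}


def make_virtual_inventory(stock_by_sku):
    """Build a stand-in client that answers from canned data instead of a real service."""
    client = Mock()
    # Unknown SKUs report zero stock, an assumption made for this sketch.
    client.get_stock.side_effect = lambda sku: stock_by_sku.get(sku, 0)
    return client


if __name__ == "__main__":
    inventory = make_virtual_inventory({"SKU-1": 5})
    print(shipping_quote(inventory, "SKU-1", 3))
    print(shipping_quote(inventory, "SKU-1", 8))
```

Because the stand-in returns the same canned answers on every run, the test does not depend on the availability or current data of a real back-end system.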

Auerbach performs a sonar analysis on new clients' test code to evaluate their frameworks, coding techniques and application architectures. More often than not, self-taught programmers will run into instability issues down the road, he said. Tools such as Cucumber or more visual-based tools can lower the learning barrier, but require a deep understanding of how to maintain these tests to perform reliably over time.

There's still a need for testers

As priorities shift to a more fluid, de-siloed and code-intensive QA process, developers can't take on all of the test automation responsibilities themselves. Payne said he knows of an organization that laid off its QA staff and turned over all testing responsibilities to developers, only to reverse course and try to hire them back a year later when quality took a nosedive.

"We've got to get management to understand technology better, and how you adopt technology," he said. "They're just clueless; they just don't understand how you go about making these transformations successfully."

Payne also cautioned against hard deadlines, which can cause teams to take shortcuts and build poorly constructed automated tests -- a recipe for failure.

While developers can aid QA through scripts and versioning of test cases, they can only spare so much time. Forrester's Global Business Technographics Developer Survey indicated 52% of international developers spend less than an hour per day on nonfunctional testing, or no time at all. Quality and speed don't necessarily have to compete, but developers can only handle so much.

AI could enable more efficient automation of UI and exploratory tests over time, but those capabilities are presently limited. This is why tester expertise is vital, not only to guarantee quality in an increasingly mobile-focused landscape on the front end -- one that values user feedback more than ever -- but also to serve as a necessary sanity check on automated tests.

"Don't try to automate the UI and process flows through the UI. Do that using exploratory testing, your skill sets of your testers," Payne said. Use testers' expertise in test design, and think through the kinds of tests that are useful to help drive that automation. "If we don't have good tests, it doesn't matter if we automate them," he said.