RDMA over Fabrics: a big step for SSD access

Shared storage access for servers has been the most basic requirement for storage networks. Performance demands for multiple systems accessing data continually increase due to improvements in compute and a desire to get more work done from infrastructure investments.

These demands have been met with technology developments that deliver storage networking and faster access to data. The next big step is RDMA over Fabrics. RDMA is Remote Direct Memory Access, and the fabric is the storage network.

RDMA over Fabrics is about increasing performance for access to shared data and taking advantage of solid-state memory technology. RDMA over Fabrics can be a logical evolution of the current shared storage architectures and continue on the path to accelerate operations to increase value from the investments in applications, servers, and storage.

RDMA over Fabrics sends data from one memory address space to another over an interface using a protocol. RDMA is a zero-copy transfer: data moves directly between application memory and a storage system, without the overhead of staging it in intermediate locations as some protocol stacks require.

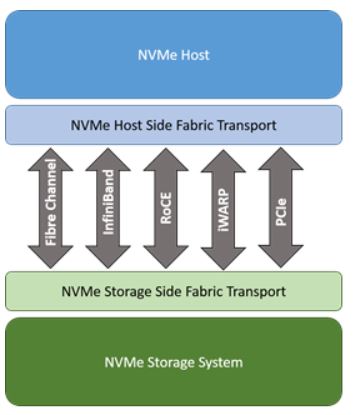
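The difference between a staged transfer and a zero-copy transfer can be sketched in a few lines of Python. This is a toy model only: it runs inside one process and uses `memoryview` to stand in for direct access to application memory. The function names `staged_transfer` and `zero_copy_transfer` and the `staging_hops` parameter are hypothetical and not part of any RDMA API.

```python
def staged_transfer(app_buffer, staging_hops=2):
    """Model a protocol stack that copies the payload into a new
    intermediate buffer at every hop (hypothetical hop count)."""
    copies = 0
    current = app_buffer
    for _ in range(staging_hops):
        current = bytearray(current)  # new allocation plus a full copy
        copies += 1
    return current, copies


def zero_copy_transfer(app_buffer):
    """Model a zero-copy path: hand out a view of the application
    buffer itself, so no payload bytes are duplicated."""
    return memoryview(app_buffer), 0


if __name__ == "__main__":
    buf = bytearray(b"block-0000")
    staged, staged_copies = staged_transfer(buf)
    view, view_copies = zero_copy_transfer(buf)
    print("copies on staged path:", staged_copies)
    print("copies on zero-copy path:", view_copies)
    print("view shares application memory:", view.obj is buf)
```

The staged path pays for one allocation and one copy per hop, while the view refers to the same bytes the application owns. That shared ownership is the property the zero-copy description above relies on.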

RDMA allows data transfers with much less overhead and lower latency, which means faster response times. NVMe (Non-Volatile Memory Express) is the protocol used for RDMA over Fabrics. Think of the protocol as the language for communication, independent of the physical interface. Both ends of the communication, server and storage, must speak the same language for the transfer.

Solid-state technology, including flash storage, is memory, accessed as memory segments. NVMe provides that access. When SCSI is used, a translation must occur to access the memory-based storage, which adds latency. NVMe also allows parallel conversations to occur, which uses the physical interface more effectively.
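To see why parallel conversations matter, consider a simplified model in which commands are spread across several independent queues and each queue completes one command per round. This is an illustrative sketch, not a model of real NVMe hardware: the round-based timing, the round-robin placement, and the names `dispatch` and `drain_rounds` are all assumptions made for the example.

```python
from collections import deque


def dispatch(commands, num_queues):
    """Spread commands round-robin over num_queues independent queues."""
    queues = [deque() for _ in range(num_queues)]
    for i, cmd in enumerate(commands):
        queues[i % num_queues].append(cmd)
    return queues


def drain_rounds(queues):
    """Count the rounds needed to empty all queues when every
    non-empty queue completes exactly one command per round."""
    rounds = 0
    while any(queues):  # an empty deque is falsy
        for q in queues:
            if q:
                q.popleft()
        rounds += 1
    return rounds


if __name__ == "__main__":
    commands = list(range(8))
    print("single queue rounds:", drain_rounds(dispatch(commands, 1)))
    print("four queue rounds:", drain_rounds(dispatch(commands, 4)))
```

In this toy model, eight commands on one queue take eight rounds, while the same eight commands on four queues take two. Real devices are bounded by other factors as well, so the ratio should be read as a direction, not a prediction.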

There are competing options for the fabric interface. High-performance Fibre Channel storage networks at Gen 6 (32 Gigabits per second) can support RDMA with HBAs. These Gen 6 switches and adapters are backward compatible with current transfer environments.

Other options for RDMA over Fabrics include RoCE (RDMA over Converged Ethernet), iWARP (Internet Wide Area RDMA Protocol), InfiniBand, and PCIe. RoCE is a similar concept to FCoE. iWARP uses Transmission Control Protocol (TCP) or Stream Control Transmission Protocol (SCTP) for transmission. InfiniBand is an RDMA-based protocol used in high-performance computing and inter-system communication. PCIe is a limited-distance interface.

Each method has its own options, and a set of vendors promoting them.

New technology that promises to deliver improvements always attracts great interest and becomes the subject of discussion and investigation. However, the final judgement of the value of the technology doesn’t occur until it is effectively deployed. Disruptive changes tend to cause delays and may prevent deployment despite the potential value. Technology that can be introduced seamlessly and is compatible with current operations will be put to use more quickly. To understand the value of RDMA over Fabrics and how to take advantage of this new technology, it is important to recognize how it can be introduced into operational environments.

A useful characteristic for RDMA is the ability to use memory access for shared storage over a storage network as an internal memory extension. This would be especially useful for databases that could not fit within internal processor memory. It would provide much higher performance than traversing a protocol stack to deliver I/O to a storage device.
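The contrast between delivering I/O through a stack and addressing storage as memory can be illustrated with a local analogy: Python's standard `mmap` module, which lets a program update a file by assigning to a byte range instead of issuing seek and write calls. This is only an analogy for the programming model. It runs on one machine, involves no RDMA or fabric, and says nothing about performance. The function names `write_via_io` and `write_via_memory` are hypothetical.

```python
import mmap
import os
import tempfile


def write_via_io(path, offset, payload):
    """Update the file with explicit I/O calls (seek, then write)."""
    with open(path, "r+b") as f:
        f.seek(offset)
        f.write(payload)


def write_via_memory(path, offset, payload):
    """Update the file by assigning to a mapped memory region."""
    with open(path, "r+b") as f:
        with mmap.mmap(f.fileno(), 0) as region:  # 0 maps the whole file
            region[offset:offset + len(payload)] = payload
            region.flush()


if __name__ == "__main__":
    fd, path = tempfile.mkstemp()
    try:
        os.write(fd, bytes(4096))  # pre-size the file; empty files cannot be mapped
        os.close(fd)
        write_via_io(path, 0, b"io-path")
        write_via_memory(path, 100, b"memory-path")
        with open(path, "rb") as f:
            data = f.read()
        print(data[0:7], data[100:111])
    finally:
        os.remove(path)
```

Both functions leave the same bytes in the file. The difference is that the second treats the data as an addressable region, which is the access style the memory-extension use case depends on.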

The adoption rate will be determined by the immediacy of the need, the ability to deploy with the least risk or disruption, and the economic justification for making the transition. IT architects and directors should investigate RDMA over Fabrics with solid-state storage as part of their storage strategy.

(Randy Kerns is Senior Strategist at Evaluator Group, an IT analyst firm.)