
Solving quantum computing's longstanding no-cloning problem

University of Waterloo proposes encrypted qubit encodings that enable one-time reconstruction, reframing quantum data resilience, security and storage.

Researchers at the University of Waterloo in Canada recently made significant progress toward solving a longstanding challenge in quantum computing: protecting quantum information without relying on classical, copy-based redundancy techniques. Their research paper, entitled "Encrypted Qubits Can Be Cloned," was published in Physical Review Letters in January 2026.

In their paper, Dr. Achim Kempf and Dr. Koji Yamaguchi introduced a protocol that suggests a new way to protect quantum information without producing multiple usable copies. Instead of using classical data replication strategies to ensure data resilience and availability, the protocol creates multiple encrypted encodings of the state. This enables the original state to be reconstructed once from the encodings under controlled conditions.

The result has implications for both quantum data storage and security. To understand what it means for enterprise-scale quantum computing, however, it helps first to review some fundamental differences between classical and quantum computing.

Classical vs. quantum

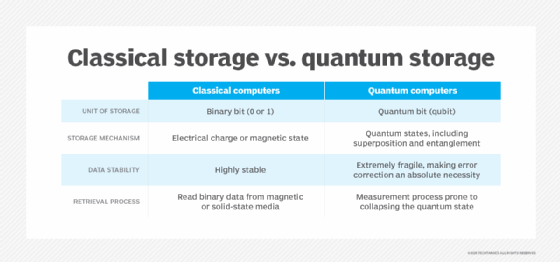

In classical computing systems, data is stored as bits that have a definite, fixed binary value of either 0 or 1. This characteristic enables perfect data copying and supports the backup, replication and failover strategies that are used to protect data today.

In quantum computing, however, data is stored in qubits. A qubit can exist in a superposition of the 0 and 1 states, and its quantum state is described mathematically by two complex probability amplitudes whose squared magnitudes give the probabilities of measuring 0 or 1.
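As a minimal sketch of what a description by probability amplitudes looks like, the Python below represents a single qubit as a pair of complex numbers and derives the measurement probabilities from them. The function names `normalize` and `probabilities` are illustrative and do not come from any quantum library.

```python
import math

def normalize(a: complex, b: complex) -> tuple[complex, complex]:
    """Scale the two amplitudes so that |a|^2 + |b|^2 = 1."""
    norm = math.sqrt(abs(a) ** 2 + abs(b) ** 2)
    return a / norm, b / norm

def probabilities(state: tuple[complex, complex]) -> tuple[float, float]:
    """Born rule: probability of reading 0 or 1 when the qubit is measured."""
    a, b = state
    return abs(a) ** 2, abs(b) ** 2

# Equal superposition of 0 and 1.
plus = normalize(1, 1)
# Same measurement odds, but a different relative phase between the amplitudes.
plus_i = normalize(1, 1j)

p_plus = probabilities(plus)
p_plus_i = probabilities(plus_i)
```

Both states give even odds of reading 0 or 1, yet they are different states. That extra information in the amplitudes is what a classical bit, or a single probability, cannot capture.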

The problem is that no physical operation can perfectly copy an arbitrary, unknown quantum state. Such an operation would contradict the linear structure of quantum mechanics and lead to mathematical inconsistencies. This principle is known as the no-cloning theorem.
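The standard linearity argument behind the no-cloning theorem fits in a few lines. The symbols are assumptions introduced here for illustration: $U$ is a hypothetical copying operation, $|\psi\rangle = \alpha|0\rangle + \beta|1\rangle$ is the unknown state, and the second qubit starts out blank in $|0\rangle$.

$$
\begin{aligned}
\text{Copying requirement:}\quad & U\big(|\psi\rangle \otimes |0\rangle\big) = |\psi\rangle \otimes |\psi\rangle \\
\text{On basis states:}\quad & U|00\rangle = |00\rangle, \qquad U|10\rangle = |11\rangle \\
\text{By linearity:}\quad & U\big((\alpha|0\rangle + \beta|1\rangle) \otimes |0\rangle\big) = \alpha|00\rangle + \beta|11\rangle \\
\text{A true copy would be:}\quad & |\psi\rangle \otimes |\psi\rangle = \alpha^2|00\rangle + \alpha\beta|01\rangle + \alpha\beta|10\rangle + \beta^2|11\rangle
\end{aligned}
$$

The last two lines agree, up to an overall phase, only when $\alpha\beta = 0$, that is, when the state is a definite 0 or 1 rather than a superposition. A single linear operation therefore cannot copy every possible state.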

No-cloning theorem

The no-cloning theorem is a fundamental principle of quantum mechanics that was first established in 1982 in two independent proofs, one by William Wootters and Wojciech Zurek and the other by Dennis Dieks. Essentially, the theorem forbids creating an exact copy of an arbitrary unknown quantum state.

In this context, the word "forbid" does not signify a human-imposed ban or a constraint that's created by hardware or software. Instead, it describes a mathematical restriction that prevents the direct application of copy-based data-resilience techniques to quantum information. Any attempt to apply classical copy-based replication to quantum data would either disturb the original state by measuring it or require an operation that quantum mechanics does not permit.
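The measurement problem can be made concrete with a small sketch of a naive "read it, then write copies" strategy applied to a qubit in an equal superposition. The helper names `measure` and `fidelity` are illustrative, and the simulation can track amplitudes only because the program already knows the state, which a real copier would not.

```python
import math
import random

def measure(state, rng):
    """Measure in the 0/1 basis; return the outcome and the collapsed state."""
    a, _ = state
    p0 = abs(a) ** 2
    if rng.random() < p0:
        return 0, (1.0, 0.0)
    return 1, (0.0, 1.0)

def fidelity(u, v):
    """Squared overlap |<u|v>|^2 between two single-qubit states (1.0 = identical)."""
    inner = complex(u[0]).conjugate() * v[0] + complex(u[1]).conjugate() * v[1]
    return abs(inner) ** 2

rng = random.Random(0)
original = (1 / math.sqrt(2), 1 / math.sqrt(2))  # equal superposition

# "Copying" by reading: the readout is a plain bit, and the qubit is left
# in the matching basis state, not in the superposition it started in.
outcome, collapsed = measure(original, rng)
overlap_after = fidelity(original, collapsed)
```

Whichever outcome occurs, the collapsed state overlaps the original with fidelity 0.5. Any copies prepared from the readout inherit that loss, and the original superposition is gone.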

No-cloning workaround

To address this challenge, Kempf and Yamaguchi proposed a protocol that shows, in theory, how an arbitrary, unknown quantum state can be reconstructed once from multiple encrypted encodings derived from the same original state. This approach shifts attention from copy-based resilience to reconstruction-based recovery, without violating the no-cloning theorem.

Here's how it might work:

- The protocol transforms an unknown quantum state into multiple encrypted encodings that are mathematically related.

- When the original state is needed, the protocol invokes a controlled, single-use reconstruction procedure.

- The reconstruction operation uses correlations embedded in the encodings to recover one valid instance of the state.

In principle, the proposed protocol enables encrypted encodings to be placed on different devices or nodes, creating redundancy at the storage layer. If one encoding is lost or corrupted before recovery is attempted, it may still be possible to use a correlated encoding to recover the unknown state.

Because the protocol relies on a single-use decryption operation, the remaining encodings will still exist after reconstruction, but they cannot be used to produce another valid reconstruction of the same state. This is important because it provides redundant recoverability instead of redundant duplication, while remaining consistent with the no-cloning theorem.
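The lifecycle described above (encode to several nodes, tolerate a loss, reconstruct exactly once) can be sketched as a purely classical bookkeeping model. This is not the researchers' protocol and contains no quantum operations. The class and function names (`EncryptedEncoding`, `EncodedState`, `encode`) and the node labels are hypothetical, and the single-use rule is enforced here by a flag, whereas in the proposal it would follow from physics.

```python
from dataclasses import dataclass

@dataclass
class EncryptedEncoding:
    node: str               # hypothetical storage location
    available: bool = True  # False once the encoding is lost or corrupted

@dataclass
class EncodedState:
    encodings: list[EncryptedEncoding]
    key_consumed: bool = False  # stands in for the single-use decryption step

    def reconstruct(self) -> str:
        """Recover the state once, from any surviving encoding."""
        if self.key_consumed:
            raise RuntimeError("state already reconstructed; key is used up")
        usable = [e for e in self.encodings if e.available]
        if not usable:
            raise RuntimeError("no usable encoding left")
        self.key_consumed = True
        return usable[0].node  # which encoding served the recovery

def encode(nodes: list[str]) -> EncodedState:
    """Place one encrypted encoding on each node."""
    return EncodedState([EncryptedEncoding(n) for n in nodes])

stored = encode(["node-a", "node-b", "node-c"])
stored.encodings[0].available = False   # one encoding is lost before recovery
source_node = stored.reconstruct()      # recovery still succeeds, once
remaining = len(stored.encodings)       # the other encodings still exist
```

A second call to `stored.reconstruct()` raises an error even though encodings remain on the other nodes, which mirrors the distinction between redundant recoverability and redundant duplication.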

Implications for security and storage

Kempf and Yamaguchi's research has the potential to significantly impact cloud security and cloud storage in the quantum era. "This breakthrough will enable quantum cloud storage, like a quantum Dropbox, a quantum Google Drive or a quantum STACKIT, that safely and securely stores the same quantum information on multiple servers," said Dr. Achim Kempf, the Dieter Schwarz Chair in the Physics of Information and AI at Waterloo.

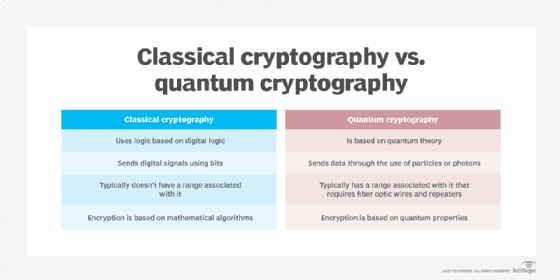

In classical computing, security often relies heavily on assumptions about mathematical hardness. For example, RSA and elliptic-curve cryptography rely on problems that are computationally impractical for classical computers to solve in a reasonable amount of time.
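A toy example shows what resting on a hardness assumption means in practice. The sketch assumes textbook RSA with deliberately tiny primes and no padding, so it is not secure or representative of a real deployment. The helper `factor` is illustrative.

```python
# Key generation with toy primes (real keys use primes of 1,024 bits or more).
p, q = 61, 53
n = p * q                      # public modulus
phi = (p - 1) * (q - 1)
e = 17                         # public exponent
d = pow(e, -1, phi)            # private exponent (modular inverse, Python 3.8+)

message = 65
cipher = pow(message, e, n)    # encrypt with the public key
plain = pow(cipher, d, n)      # decrypt with the private key

def factor(modulus: int) -> tuple[int, int]:
    """Brute-force factoring: instant here, impractical at real key sizes."""
    f = 2
    while modulus % f:
        f += 1
    return f, modulus // f

# An attacker who can factor n can rebuild the private key from public data.
fp, fq = factor(n)
recovered_d = pow(e, -1, (fp - 1) * (fq - 1))
stolen = pow(cipher, recovered_d, n)
```

The scheme's security rests entirely on `factor` being too slow for large moduli. That is an assumption about computational cost, not a physical law.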

In contrast, quantum computing has the potential to provide security guarantees grounded in physical law as well as mathematics. Classical cryptography and security protocols could be used to govern authentication, encryption and key management for data at rest, while the physical laws of quantum mechanics could be used to detect unauthorized interception attempts for data in transit.

Should an intruder attempt to measure quantum states transmitted as part of a quantum key distribution protocol, for example, the resulting disturbance would alter those states, increasing observable error rates.
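The effect can be sketched with a small simulation. The article does not name a specific protocol, so the code assumes BB84 with a simple intercept-and-resend eavesdropper, and it models measurement with one rule: matching bases reproduce the bit, mismatched bases give a coin flip. The function names are illustrative.

```python
import random

def measure(bit, prep_basis, meas_basis, rng):
    """Matching bases return the prepared bit; mismatched bases return a coin flip."""
    return bit if prep_basis == meas_basis else rng.randint(0, 1)

def sifted_error_rate(rounds, eavesdrop, seed=0):
    """Fraction of mismatched bits among rounds where sender and receiver bases agree."""
    rng = random.Random(seed)
    kept = errors = 0
    for _ in range(rounds):
        bit, sender_basis = rng.randint(0, 1), rng.randint(0, 1)
        sent_bit, sent_basis = bit, sender_basis
        if eavesdrop:
            # The intruder measures in a random basis and resends what was seen.
            spy_basis = rng.randint(0, 1)
            sent_bit = measure(bit, sender_basis, spy_basis, rng)
            sent_basis = spy_basis
        receiver_basis = rng.randint(0, 1)
        result = measure(sent_bit, sent_basis, receiver_basis, rng)
        if receiver_basis == sender_basis:  # sifting: keep matching-basis rounds
            kept += 1
            errors += result != bit
    return errors / kept

clean_rate = sifted_error_rate(20_000, eavesdrop=False)
tapped_rate = sifted_error_rate(20_000, eavesdrop=True)
```

Without an intruder the sifted bits agree exactly. With one, roughly a quarter of them disagree, and that gap is the signal the two parties look for when they compare a sample of their key.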

Future outlook

While enterprises are unlikely to use single-use quantum reconstruction in the near future, Kempf and Yamaguchi's research is important because it suggests that security in the quantum era may depend on a combination of mathematical and physics-based protections, rather than mathematics alone.

Ironically, if the foundation of data resilience shifts from maintaining many identical copies to guaranteeing the ability to recreate information when needed, the no-cloning theorem may evolve from a perceived limitation into a guiding design principle for secure quantum architectures.

Enterprises that recognize this shift early on may be more inclined to view post-quantum cryptography (PQC) as one component of quantum-era security rather than the final destination. In turn, this may position them to design secure quantum services earlier and gain a competitive advantage as quantum computing evolves.

Margaret Rouse is an award-winning writer and technologist known for her ability to explain the value of emerging technology to business users.