
How to integrate DevOps QA testing on AWS

QA testing is an important but often overlooked part of DevOps. For workloads on AWS, use CodeBuild, CodeCommit and other tools to build testing into your workflow.

Businesses that combine DevOps practices with AWS and infrastructure as code can achieve rapid release cycles and more reliable software. But in the push for speed, one important step is often overlooked: testing.

Modern software delivery pipelines typically include automated tests. So, if you want to build software in the cloud, it's important to know the common patterns and practices for quality assurance (QA) testing on AWS.

Here are some common automated testing strategies to know when you set out to build and deploy applications on AWS.

Unit testing

Software developers use language-specific unit testing frameworks to exercise individual pieces of their code, such as functions or classes, in isolation. The tests are usually run by a test runner, which varies from one language to the next.
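As a minimal sketch, here is what unit tests look like for a hypothetical `applyDiscount` function in a Node.js project written in TypeScript. The function and its rules are invented for illustration. The article's own example uses Mocha; this sketch swaps in Node's built-in `node:test` module, which offers a similar `describe`/`it` style, so it runs without extra dependencies.

```typescript
import { describe, it } from "node:test";
import { strict as assert } from "node:assert";

// Hypothetical unit under test: applies a percentage discount to a price in cents.
function applyDiscount(priceCents: number, percent: number): number {
  if (!Number.isInteger(priceCents) || priceCents < 0) {
    throw new RangeError("priceCents must be a non-negative integer");
  }
  if (percent < 0 || percent > 100) {
    throw new RangeError("percent must be between 0 and 100");
  }
  // Round to the nearest cent so the result stays an integer.
  return Math.round(priceCents * (1 - percent / 100));
}

// Each `it` targets one behavior of the function in isolation.
describe("applyDiscount", () => {
  it("reduces the price by the given percentage", () => {
    assert.equal(applyDiscount(1000, 10), 900);
  });

  it("leaves the price unchanged at 0%", () => {
    assert.equal(applyDiscount(1999, 0), 1999);
  });

  it("rejects out-of-range percentages", () => {
    assert.throws(() => applyDiscount(1000, 150), RangeError);
  });
});
```

Running the compiled file with Node reports each `it` block as a separate pass or failure, and the process exits with a non-zero code if any test fails, which is the signal a CI build step relies on.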

In a release pipeline workflow, you typically run unit tests during the build stage. When a code change is detected in source control, the build stage compiles the code and runs the unit tests. Building and testing every change in this way is the core practice of continuous integration (CI).
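The build stage's contract is simple: run each step in order and treat any non-zero exit code as a failed build. The sketch below models that contract in TypeScript. `runBuild` and the `BuildStep` shape are hypothetical and do not describe how CodeBuild is implemented; the example steps call the current Node.js binary only so the sketch runs anywhere.

```typescript
import { spawnSync } from "node:child_process";

// Hypothetical shape of one build step: an executable plus its arguments.
interface BuildStep {
  name: string;
  command: string;
  args: string[];
}

interface BuildResult {
  succeeded: boolean;
  failedStep?: string;
  exitCode?: number;
}

// Runs the steps in order and stops at the first one that exits non-zero,
// so a failing unit test fails the whole build and nothing after it runs.
function runBuild(steps: BuildStep[]): BuildResult {
  for (const step of steps) {
    const result = spawnSync(step.command, step.args, { stdio: "inherit" });
    // status is null if the process could not start or was killed by a signal;
    // treat both cases as a failure.
    const exitCode = result.status ?? 1;
    if (exitCode !== 0) {
      return { succeeded: false, failedStep: step.name, exitCode };
    }
  }
  return { succeeded: true };
}

// Example: two stand-in steps; the second simulates a failing test run.
const result = runBuild([
  { name: "compile", command: process.execPath, args: ["-e", "console.log('compiled')"] },
  { name: "test", command: process.execPath, args: ["-e", "process.exit(1)"] },
]);
console.log(result); // { succeeded: false, failedStep: 'test', exitCode: 1 }
```

In a real project the steps might be `npm ci` followed by `npm test`; a failing test makes `npm test` exit non-zero, so the build is reported as failed at the test step.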

Organizations can use CodeBuild, a managed CI service, to compile source code, run tests and produce software deployment packages.

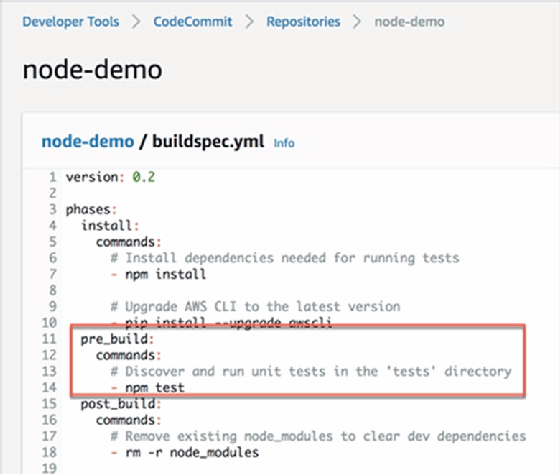

CodeBuild relies on a buildspec.yml file to understand how to compile application code and run any required unit tests. The example in Figure 1 is a build specification that sits alongside the application source code for a Node.js application in an AWS CodeCommit repository. As you can see, the build is configured to execute a unit test included within the application source repository.
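The build specification in Figure 1 is not reproduced in the text, so what follows is an assumed minimal equivalent rather than a copy of it. The hypothetical `renderBuildspec` helper emits a buildspec.yml (format version 0.2) that installs dependencies in the `install` phase and runs the unit tests in the `build` phase. The Node.js version and commands are placeholders, and the runtime version must be one that your chosen CodeBuild image supports.

```typescript
// Hypothetical inputs for a minimal Node.js buildspec.
interface BuildspecOptions {
  nodeVersion: number; // must be a version the chosen CodeBuild image supports
  installCommand: string; // for example "npm ci"
  testCommand: string; // for example "npm test", which might run Mocha
}

// Renders a minimal buildspec.yml (format version 0.2): dependencies are
// installed in the install phase and unit tests run in the build phase.
// If the test command exits non-zero, the build phase and the build fail.
// Commands are inserted verbatim, so they must be plain YAML scalars.
function renderBuildspec(opts: BuildspecOptions): string {
  return [
    "version: 0.2",
    "phases:",
    "  install:",
    "    runtime-versions:",
    `      nodejs: ${opts.nodeVersion}`,
    "    commands:",
    `      - ${opts.installCommand}`,
    "  build:",
    "    commands:",
    `      - ${opts.testCommand}`,
    "",
  ].join("\n");
}

console.log(renderBuildspec({ nodeVersion: 18, installCommand: "npm ci", testCommand: "npm test" }));
```

By default, CodeBuild looks for a file named buildspec.yml at the root of the source, so the output would sit next to package.json in the repository.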

DevOps teams get the test results as soon as the build runs. If a test fails, the build fails, and the team can address the problem right away.

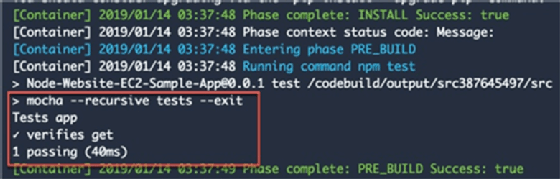

You can see in Figure 2 that CodeBuild invoked the Mocha test framework to execute the tests for this Node.js application. In addition to Node.js, CodeBuild supports a number of runtimes, including Java, Python, Ruby, Go, Android, .NET Core for Linux and Docker.

Teams can integrate CodeBuild and CodeCommit with AWS CodePipeline, a continuous delivery service that automates the stages of a software release. Pipelines often include further stages after the build that run additional tests against the application.
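To make the stage-by-stage flow concrete, here is a simplified in-memory model of a pipeline: stages run in sequence, the actions inside a stage run concurrently, and a failed action stops the pipeline before later stages such as deployment. This is a hypothetical sketch of the idea, not the CodePipeline API, and the stage and action names are placeholders.

```typescript
// Hypothetical, simplified model of a release pipeline (not the CodePipeline API).
interface Action {
  name: string;
  run: () => Promise<boolean>; // resolves true on success
}

interface Stage {
  name: string;
  actions: Action[];
}

interface PipelineResult {
  completedStages: string[];
  failedStage?: string;
  failedActions?: string[];
}

// Runs stages in order. All actions in a stage run concurrently, and the
// pipeline stops at the first stage with a failed action, so later stages
// (for example, a production deploy) never run on a bad build.
async function runPipeline(stages: Stage[]): Promise<PipelineResult> {
  const completedStages: string[] = [];
  for (const stage of stages) {
    const outcomes = await Promise.all(
      stage.actions.map(async (action) => {
        try {
          return { name: action.name, ok: await action.run() };
        } catch {
          return { name: action.name, ok: false }; // an error counts as a failure
        }
      }),
    );
    const failed = outcomes.filter((o) => !o.ok).map((o) => o.name);
    if (failed.length > 0) {
      return { completedStages, failedStage: stage.name, failedActions: failed };
    }
    completedStages.push(stage.name);
  }
  return { completedStages };
}

// Placeholder actions standing in for real source, build, test and deploy steps.
const pass = async (): Promise<boolean> => true;
const fail = async (): Promise<boolean> => false;

runPipeline([
  { name: "Source", actions: [{ name: "checkout", run: pass }] },
  { name: "Build", actions: [{ name: "unit-tests", run: pass }] },
  { name: "Test", actions: [{ name: "functional-tests", run: pass }, { name: "ui-tests", run: fail }] },
  { name: "Deploy", actions: [{ name: "production", run: pass }] },
]).then((result) => console.log(result));
// Resolves with completedStages ["Source", "Build"], failedStage "Test" and
// failedActions ["ui-tests"]; the Deploy stage never runs.
```

Running test actions side by side within one stage keeps the feedback loop short, while the gate between stages ensures that nothing reaches deployment unless every test in the previous stage passed.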

Functional and UI testing

After you run a build, execute tests and generate a software deployment package, it's a common practice to deploy the application to a staging environment for further validation.
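An automated functional check against a staging environment can be as small as a few HTTP requests with assertions on the responses. In this sketch, the `/health` and `/api/products` endpoints and the expected JSON array are assumptions. A local Node.js server stands in for the staging deployment so the example runs anywhere; in a pipeline, the base URL would point at the staging endpoint instead.

```typescript
import { createServer, get } from "node:http";
import type { AddressInfo } from "node:net";

// Sends a GET request and resolves with the status code and body text.
function httpGet(url: string): Promise<{ status: number; body: string }> {
  return new Promise((resolve, reject) => {
    get(url, (res) => {
      let body = "";
      res.setEncoding("utf8");
      res.on("data", (chunk: string) => {
        body += chunk;
      });
      res.on("end", () => resolve({ status: res.statusCode ?? 0, body }));
    }).on("error", reject);
  });
}

function isJsonArray(text: string): boolean {
  try {
    return Array.isArray(JSON.parse(text));
  } catch {
    return false; // not valid JSON at all
  }
}

// Hypothetical functional checks; returns a list of failure messages
// (an empty list means every check passed).
async function runFunctionalChecks(baseUrl: string): Promise<string[]> {
  const failures: string[] = [];
  const health = await httpGet(`${baseUrl}/health`);
  if (health.status !== 200) failures.push(`/health returned ${health.status}`);
  const products = await httpGet(`${baseUrl}/api/products`);
  if (products.status !== 200) failures.push(`/api/products returned ${products.status}`);
  else if (!isJsonArray(products.body)) failures.push("/api/products did not return a JSON array");
  return failures;
}

// Local stand-in for the staging deployment so the sketch runs anywhere.
const staging = createServer((req, res) => {
  if (req.url === "/health") {
    res.writeHead(200).end("ok");
  } else if (req.url === "/api/products") {
    res.writeHead(200, { "Content-Type": "application/json" }).end(JSON.stringify([{ id: 1 }]));
  } else {
    res.writeHead(404).end();
  }
});

staging.listen(0, "127.0.0.1", async () => {
  const { port } = staging.address() as AddressInfo;
  const failures = await runFunctionalChecks(`http://127.0.0.1:${port}`);
  staging.close();
  console.log(failures.length === 0 ? "functional checks passed" : failures);
  process.exitCode = failures.length === 0 ? 0 : 1;
});
```

Collecting every failure instead of stopping at the first one gives the team a complete picture of what broke in staging from a single run.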

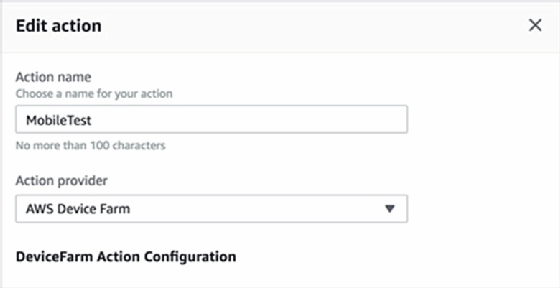

You can perform functional tests automatically or manually during this stage. DevOps teams can use AWS Device Farm to test and interact with Android apps, iOS apps and web applications.

You can add individual actions to the various stages within a release pipeline. You can see an example of this in Figure 3, where an action has been created to test the application with AWS Device Farm.

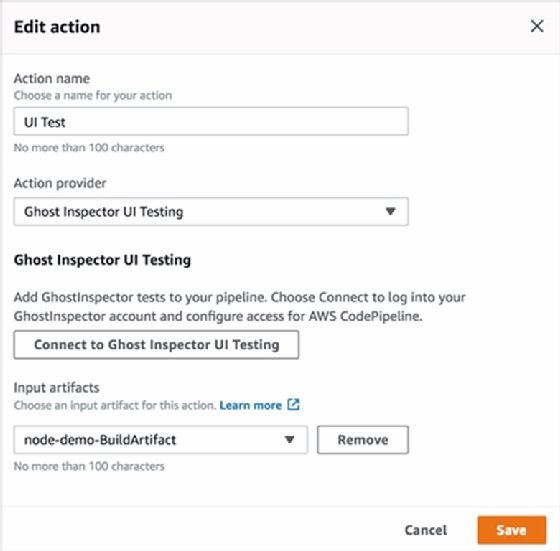

CodePipeline test actions also support several third-party integrations. In Figure 4, Ghost Inspector is configured to run UI tests automatically against the application after the build completes successfully.

Teams can also use external tools to perform UI testing on AWS. Selenium and ChromeDriver are popular options for automating user activity in a web browser. You can run these tools on Amazon EC2 instances, in containers on Amazon Elastic Container Service or in Lambda functions.

Performance testing on AWS

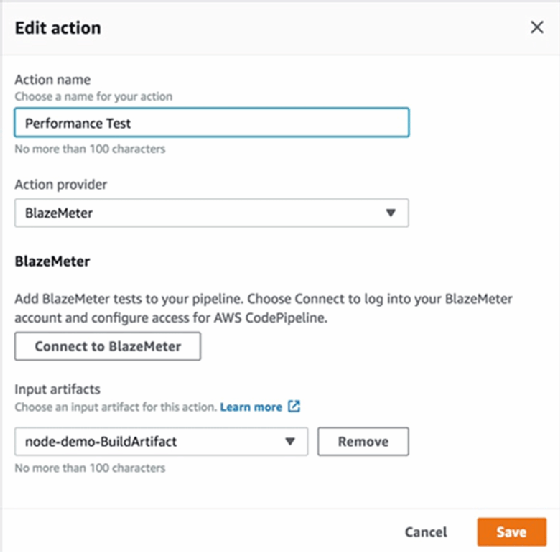

Load simulation is another common test you can automate as part of a software release pipeline. Many tools and services execute these performance tests, and AWS CodePipeline integrates with several existing providers, including BlazeMeter (shown above) and StormRunner Load.
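To show what these services measure, here is a deliberately minimal load generator. It is a hypothetical sketch, not how BlazeMeter or StormRunner Load work: a fixed number of concurrent workers send GET requests until a request budget is spent, and the run is summarized as an error count plus 50th and 95th percentile latencies (nearest-rank method). The request counts and the thresholds passed to `passesThresholds` are placeholders a team would choose for its own service, and a local server stands in for the application under test.

```typescript
import { createServer, get } from "node:http";
import type { AddressInfo } from "node:net";
import { performance } from "node:perf_hooks";

interface LoadResult {
  requests: number;
  errors: number;
  p50Ms: number;
  p95Ms: number;
}

// Sends one GET request and resolves with its latency in milliseconds.
// Rejects on a network error or a non-2xx status code.
function timedGet(url: string): Promise<number> {
  const start = performance.now();
  return new Promise((resolve, reject) => {
    get(url, (res) => {
      res.resume(); // discard the body; only status and timing matter here
      res.on("end", () => {
        const status = res.statusCode ?? 0;
        if (status >= 200 && status < 300) resolve(performance.now() - start);
        else reject(new Error(`HTTP ${status}`));
      });
    }).on("error", reject);
  });
}

// Nearest-rank percentile of an ascending-sorted array, with p in (0, 100].
function percentile(sorted: number[], p: number): number {
  if (sorted.length === 0) return NaN;
  const rank = Math.ceil((p * sorted.length) / 100);
  return sorted[Math.max(0, rank - 1)];
}

// `concurrency` workers send requests back-to-back until `totalRequests`
// have been issued in total.
async function runLoad(url: string, totalRequests: number, concurrency: number): Promise<LoadResult> {
  const latencies: number[] = [];
  let errors = 0;
  let issued = 0;
  const worker = async (): Promise<void> => {
    while (issued < totalRequests) {
      issued++; // safe without locks: the check and increment run on one thread
      try {
        latencies.push(await timedGet(url));
      } catch {
        errors++;
      }
    }
  };
  await Promise.all(Array.from({ length: concurrency }, worker));
  latencies.sort((a, b) => a - b);
  return { requests: issued, errors, p50Ms: percentile(latencies, 50), p95Ms: percentile(latencies, 95) };
}

// Pass/fail gate a pipeline test action could apply to the results.
function passesThresholds(r: LoadResult, maxP95Ms: number, maxErrorRate: number): boolean {
  return r.requests > 0 && r.p95Ms <= maxP95Ms && r.errors / r.requests <= maxErrorRate;
}

// Local stand-in for the application under test.
const server = createServer((_req, res) => {
  res.writeHead(200).end("ok");
});
server.listen(0, "127.0.0.1", async () => {
  const { port } = server.address() as AddressInfo;
  const result = await runLoad(`http://127.0.0.1:${port}/`, 200, 10);
  server.close();
  console.log(result, passesThresholds(result, 500, 0.01) ? "PASS" : "FAIL");
});
```

A pipeline test action built on this idea would fail the release when `passesThresholds` returns false. Dedicated load testing services typically add what this sketch lacks, such as load from multiple locations, ramp-up profiles and reporting.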

You can still use your existing on-premises tools and services for testing on AWS. However, pairing AWS' managed developer services with integrated third-party testing platforms has significant benefits, especially for teams that want to focus more on building applications and less on managing infrastructure.