AWS sees role for quantum in data protection, but not for years

AWS execs believe advances in quantum computing will have a trickle-down effect on data protection, but enterprise-based quantum services and use cases are still a ways off.

BOSTON -- The capabilities of quantum computing are years -- and potentially decades -- away from enterprise adoption.

Still, AWS sees potential in laying the infrastructure groundwork for quantum services and applications now to establish a baseline ahead of rival vendors like IBM or Microsoft Azure.

At its Center for Quantum Networking, AWS is researching and developing how to expand its footprint in quantum computing, which uses unique behaviors of quantum physics to solve intricate problems such as biological or financial simulations faster than existing computing methods. While faster, quantum computing currently relies on complex and fragile quantum networks to move data around, a problem the AWS Center is working to solve.

Advances in quantum networks could lead to spillover benefits for data protection, such as quantum encryption. But at this point, it remains to be seen how that technology will affect infrastructure IT teams managing storage or data, according to Paul Nashawaty, an analyst at TechTarget's Enterprise Strategy Group.

"I don't see a lot of infrastructure plays around quantum," Nashawaty said. "It's too expensive and way too early. The big push right now is having data scientists understand scope."

Data security entanglements

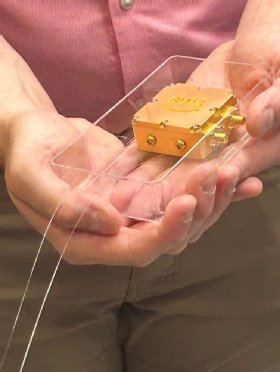

Researchers at the AWS Center for Quantum Networking said developing standardized and easily manufactured quantum repeaters, or communication links between quantum systems, will enable better transmission of quantum data across wide networks -- a critical business need for a cloud hyperscaler.

Traditional repeaters work well for bits of data but corrupt quantum data, which is encoded in qubits, during transmission. Moving quantum data cheaply and efficiently will require new approaches.
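To illustrate why that is, the following is a minimal sketch, not AWS's method, of a simplified "measure and resend" repeater written in plain Python. It assumes the repeater reads each incoming qubit as a 0 or a 1 and retransmits what it read; the function name `measure_and_resend_fidelity` and that repeater model are assumptions made for illustration. The function returns the average fidelity, or how closely the resent state matches the original, where 1.0 means a perfect copy.

```python
import math


def measure_and_resend_fidelity(alpha: complex, beta: complex) -> float:
    """Average fidelity of the qubit alpha|0> + beta|1> after a hypothetical
    repeater measures it in the 0/1 basis and retransmits the result."""
    norm = math.sqrt(abs(alpha) ** 2 + abs(beta) ** 2)
    if norm == 0:
        raise ValueError("amplitudes must not both be zero")

    # Probabilities of the repeater reading a 0 or a 1.
    p0 = (abs(alpha) / norm) ** 2
    p1 = (abs(beta) / norm) ** 2

    # If it reads 0 (probability p0), it resends |0>, which overlaps the
    # original state with probability p0. The same holds for reading 1.
    return p0 * p0 + p1 * p1


# A qubit that is definitely 0 behaves like a classical bit.
classical_bit = measure_and_resend_fidelity(1, 0)

# An equal superposition of 0 and 1 holds information a bit cannot.
superposition = measure_and_resend_fidelity(1 / math.sqrt(2), 1 / math.sqrt(2))

print(f"classical bit fidelity: {classical_bit:.2f}")
print(f"superposition fidelity: {superposition:.2f}")
```

Under these assumptions, a state that behaves like an ordinary bit passes through intact, while an equal superposition comes out matching the original only about half the time on average. Real quantum repeater designs avoid reading the qubit directly, which is the engineering problem the researchers describe.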

AWS is exploring the capabilities of quantum with Amazon Braket, its fully managed quantum computing service. But it is far from the only technology vendor building out a quantum portfolio. Both Microsoft Azure and Google Cloud Platform are researching quantum technologies alongside data center hardware suppliers such as IBM and Dell Technologies.

Enterprises looking to use quantum computing in the future will likely experiment with applications in the cloud first before opening their wallets for quantum, Nashawaty said.

"Hyperscalers probably provide more availability and 'instant-on' for more common use cases," he said. "Hyperscalers are a path to maturity, dipping your toe in and trying [quantum]."

The knock-on effect of developing quantum technology would spill over into other parts of the AWS business, primarily in AI. But executives for storage and data protection expect some benefits to trickle into their parts of the stack.

Nancy Wang, general manager of AWS data protection services, said during a press tour Tuesday of the AWS Center for Quantum Networking that she expects quantum cryptography to advance data security by further improving zero-trust networks and anonymizing customer data moving across the network.

For data protection, quantum computing could help prevent data exfiltration by obfuscating a clear path out of a customer's data center, according to Wang.

Future perfect

Until then, AWS will continue to focus on more traditional advances for its data protection services. Specifics include automatic protection of data by policy at creation and ways to recover immutable data from compromised accounts.

"Customers tell us that as they create applications using infrastructure as code, they also want to enable protection of that data by default," Wang said.

AWS will also continue to explore new partnerships with other data protection and backup vendors, with four joining AWS backup services in the coming months.

AWS Backup, the hyperscaler's native backup service, launched in 2019 and serves more than 100,000 customers whose data, combined, totals more than an exabyte, according to Wang.

Wayne Duso, vice president of storage, edge and data governance at AWS, expects the value of data storage to increase in the years to come as more enterprises look to build AI applications from data lakes. Customers should stop viewing storage as a commodity to house data and start seeing it as a means to quickly access valuable data for the enterprise, he said.

"Storage is not there for storage sake; it's there for data's sake," he said. "Anyone who tells you it's a commodity, I'd ask where [that view] is coming from."

Tim McCarthy is a journalist from the Merrimack Valley of Massachusetts. He covers cloud and data storage news.