Getty Images

MemVerge to help balance HPC resources more efficiently

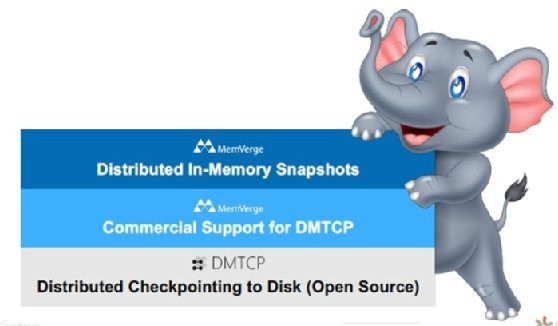

Partnering with the DMTCP Project, MemVerge supports the open source distributed multithreaded checkpointing technology, which could change the high-performance computing game.

MemVerge is partnering with the Distributed MultiThreaded Checkpointing Project to help accelerate the development of checkpointing technology, which is used by high-performance computing systems to prioritize jobs and share resources.

At Supercomputing 21, an international conference for high-performance computing (HPC), MemVerge executives said its aim is to bolster the open source DMTCP technology, hoping it will become more production-ready. Plus, AI is blurring the line between enterprise and HPC use cases, and the development of DMTCP could help make a stronger HPC enterprise case.

Checkpointing, said Mark Nossokoff, a senior research analyst at Hyperion Research, is a snapshot of the job or application running as well as all the resources needed to run it. In other words, it gives users everything necessary to restart the job where it left off.

"[Checkpoint is the] technology to graciously capture and subsequently restore the state of a simulation or modeling activity in the event of a failure," Nossokoff said.

What MemVerge brings to the table

MemVerge uses storage class memory, like Intel Optane, for in-memory computing that is lower in cost and persistent in its product Big Memory Machine. Critical to this product is its ZeroIO snapshots, storage snapshots written to the persistent memory as opposed to sending I/O to storage, thus saving time. ZeroIO snapshots are a combination of checkpointing technology and storage snapshots, according to Charles Fan, CEO and co-founder of MemVerge, making checkpointing an enabler technology for the vendor.

MemVerge believes checkpointing technology being more widely adopted will be good for the broader market of big memory computing. MemVerge is also supplying commercial support for the technology, which industry experts said is important for the growing adoption of the technology. Government entities can't fully go into production with a strictly open source technology without some form of commercial support.

Broader adoption, including government use, can expand the current use cases, mainly in HPC, and move checkpointing to new areas.

MPI and current use cases

Gene Cooperman, a professor at Northeastern University and lead for the DMTCP technology team, said the latest iteration of the technology now supports Message Passing Interface (MPI), which allows computing clusters used in HPC environments to pass messages between each other, he said. For example, if a computer within a cluster fails, the MPI will tell the others to connect to a standby unit.

Through a project called MPI-Agnostic Network-Agnostic (MANA) for MPI, users can intercept MPI transmissions to learn the state of the jobs being run, preparing for the checkpoint, and do so transparently. MANA aims to support all MPI implementation and interconnect combinations.

Cooperman said transparent checkpointing and MANA for MPI could capture the status of massive jobs with no specialized checkpoint code. If a job needs to stop at an allocated time, users can then resume when computational resources become available again.

The technology can also help HPC environments prioritize jobs. MANA for MPI can pause jobs currently running on the system by using the checkpointing technology, making the HPC technology available for more urgent jobs.

Overcoming shortfalls

While checkpointing technology helps to ease the stop and start process, it doesn't eliminate all of the issues.

Checkpointing is like saving a video game, and DMTCP is like saving a distributed game with a lot of players, MemVerge's Fan said. A shortfall to checkpointing is that you must pause to save, and in the distributed game analogy, that would mean coordinating a pause with multiple players.

That's where MemVerge's big memory technology comes into play. Fan believes using storage class memory to save jobs to persistent memory as opposed to disk will reduce the pause while using more cost-effective media versus HPC resources.

Reducing downtime could result in savings, as pausing a supercomputer is a huge expense, according to Cooperman. But Cooperman believes advancements in checkpointing can do much more that.

"System-level checkpointing is the holy grail of checkpointing," he said. "This will change the way people do HPC."

In supercomputing, leftover resources are applied to a backfill queue or used for smaller jobs. When complete system-level checkpointing is possible, different queues won't be needed as system administrators can ensure all resources are being used by checkpointing and moving jobs into available spaces.

Still, while industry experts see potential in the technology, from saving time in HPC to various use cases in the enterprise, its potential is largely hypothetical at this point.