
Zero-trust security model means more than freedom from doubt

A zero-trust security model has a catchy name, but the methodology means more than not trusting any person or device on the network. Here's what you need to know.

Unless you've been living under a rock, chances are you've heard about the zero-trust security model. The name is enticing. It implies all devices, resources, systems, data, users and applications are to be treated as untrusted. Cybersecurity professionals don't want to think of themselves as overly trusting, so zero trust seems like the right way to approach enterprise cybersecurity. But the moniker, while catchy, is somewhat misleading.

The zero-trust security model actually refers to an architecture that features a highly distributed, granular and dynamic trust network. Each one of those terms is important. Let's see why.

Highly distributed means the model extends trust (or the lack of trust) to every possible user and resource, regardless of where it resides. That includes cloud-based applications and data, as well as remote users and devices. In other words, zero trust fundamentally breaks the perimeter-based security model where devices, applications and users within a firewall are trusted, while those outside the border aren't.

Highly granular means trust is defined at an exceedingly fine level of detail. For example, a device itself may not be labeled trusted or untrusted, but individual applications on the device may be. Or an application may not be trusted, but the individual microservices or containers that comprise it may be.

Finally, highly dynamic means trust levels may be extended or withdrawn as needed, possibly very rapidly. There's no fixed state. A microservice or container may be trusted one instant, and untrusted the next.
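To make granular and dynamic trust concrete, here is a minimal Python sketch. The `TrustRegistry` class, its resource paths and the time-to-live expiry are hypothetical illustrations, not any vendor's design. Trust is recorded per exact resource path, so trusting a device says nothing about the apps or microservices on it, and every grant lapses on its own or can be revoked at any moment.

```python
from dataclasses import dataclass, field


@dataclass
class TrustRegistry:
    """Hypothetical registry that tracks trust per fine-grained resource.

    A resource is a path such as ("laptop-42", "crm-app", "billing-svc"), so a
    single microservice can be trusted without trusting its app or device.
    """

    ttl_seconds: float = 300.0
    grants: dict = field(default_factory=dict)

    def grant(self, resource: tuple, now: float) -> None:
        # Record when trust was extended; it lapses after ttl_seconds.
        self.grants[resource] = now

    def revoke(self, resource: tuple) -> None:
        # Withdraw trust immediately, e.g. after an alert fires.
        self.grants.pop(resource, None)

    def is_trusted(self, resource: tuple, now: float) -> bool:
        # Only an exact, unexpired grant counts: trusting a device or app
        # does not make its children trusted, and vice versa.
        granted_at = self.grants.get(resource)
        return granted_at is not None and now - granted_at < self.ttl_seconds


reg = TrustRegistry(ttl_seconds=60)
svc = ("laptop-42", "crm-app", "billing-svc")
reg.grant(svc, now=0)
print(reg.is_trusted(svc, now=10))                       # True
print(reg.is_trusted(("laptop-42", "crm-app"), now=10))  # False: parent app never granted
print(reg.is_trusted(svc, now=61))                       # False: grant expired
```

Callers pass `now` explicitly to keep the example deterministic; a real system would read a clock and would usually re-evaluate trust from fresh signals rather than rely on a fixed timeout.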

Making the shift to zero trust heralds big changes

Moving from the conventional static, perimeter-based model to a zero-trust version has profound implications for security architectures and operations. With a zero-trust security model, every user, system, application, code component, byte of data and network or infrastructure device is considered untrusted until authentication and validation occur. That forces the enterprise cybersecurity team to map the environment at an unprecedented level of detail and to impose a very precise set of policies. Moreover, it requires a data-centric approach to security, as well as a detailed asset inventory. It also means providing authentication, authorization and access control at every level, and encryption wherever viable.
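The "untrusted until authenticated and validated" rule amounts to a default-deny decision made on every request. The sketch below assumes hypothetical inputs (an authentication result, a device-posture check and a small explicit policy table); any failed check, or any request the policy doesn't mention, is refused.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Request:
    user: str
    authenticated: bool     # assumed input, e.g. MFA succeeded for this session
    device_compliant: bool  # assumed input, e.g. endpoint posture check passed
    resource: str
    action: str


# Hypothetical explicit policy: (user, resource) -> allowed actions.
POLICY = {
    ("alice", "payroll-db"): {"read"},
    ("bob", "payroll-db"): {"read", "write"},
}


def authorize(req: Request) -> bool:
    """Default deny: every check must pass or the request is refused."""
    if not req.authenticated:
        return False
    if not req.device_compliant:
        return False
    # Anything not explicitly granted falls through to an empty set.
    allowed = POLICY.get((req.user, req.resource), set())
    return req.action in allowed
```

Note that Bob, who holds write access on paper, is still refused whenever his device fails the posture check.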

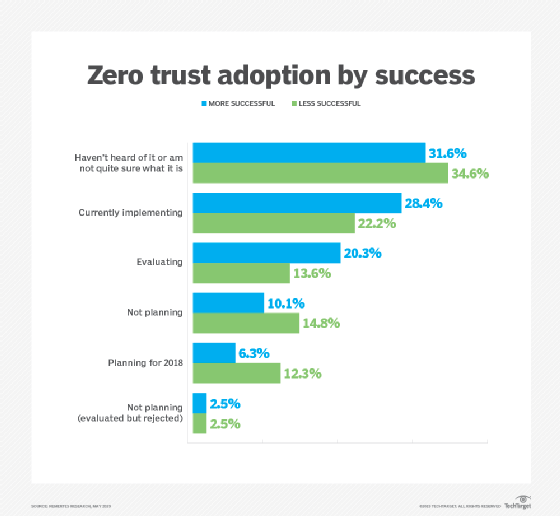

For these reasons -- among others -- only 20% of enterprises have adopted zero-trust security (see chart above). It's worth noting, however, that Nemertes has found that cybersecurity organizations with a proven track record of successfully detecting and remediating threats are more likely than their less-effective counterparts to have rolled out -- or to be considering -- zero trust.

Changing role of the firewall

As the distinction between "trusted inside" and "untrustworthy outside" blurs, the role of the firewall changes significantly. It no longer serves to keep the bad guys out and the good guys in. Instead, the role of the firewall -- and the network overall -- shifts to green-lighting only those communications transmitted and received in a specific way (as determined by port, protocol and other factors).

We call this deep segmentation, and it is not wedded to firewalls or perimeters. Enterprises deploying a zero-trust security model can avoid the chokepoint posed by the traditional firewall-centric security model. Vendors embracing deep segmentation include startups such as 128 Technology; traditional network and security providers such as Palo Alto Networks, Cisco and Juniper are also moving in this direction.
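A deep-segmentation policy can be thought of as an allowlist of flows described by source, destination, protocol and port. The sketch below uses Python's standard `ipaddress` module; the three-tier addressing and the rules themselves are hypothetical. Two hosts on the same internal network get no access to each other unless a rule says so.

```python
from dataclasses import dataclass
from ipaddress import ip_address, ip_network


@dataclass(frozen=True)
class FlowRule:
    src: str       # source segment in CIDR notation
    dst: str       # destination segment in CIDR notation
    protocol: str  # "tcp" or "udp"
    port: int


# Hypothetical allowlist: the web tier may reach the app tier on TCP 8443 only,
# and the app tier may reach the database tier on TCP 5432 only.
RULES = [
    FlowRule("10.0.1.0/24", "10.0.2.0/24", "tcp", 8443),
    FlowRule("10.0.2.0/24", "10.0.3.0/24", "tcp", 5432),
]


def permitted(src: str, dst: str, protocol: str, port: int) -> bool:
    """Allow a flow only if some rule matches it exactly; deny everything else,
    regardless of whether both endpoints sit 'inside' the network."""
    s, d = ip_address(src), ip_address(dst)
    return any(
        s in ip_network(r.src)
        and d in ip_network(r.dst)
        and protocol == r.protocol
        and port == r.port
        for r in RULES
    )
```

For example, the web tier cannot reach the database directly, even on the database's own port, because no rule covers that path.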

A data-centric, automated approach to security

Zero trust also means taking an enterprise-wide, security-centric approach to data classification, data loss prevention (DLP) and protection, and security automation. In practice, cybersecurity specialists need to know which data must be protected, to what degree and where it resides. They also need to automate security-related processes as much as possible.

Not surprisingly, this is exactly what's happening. Enterprises committed to implementing zero trust are three times more likely than those that aren't to deploy data classification and twice as likely to deploy DLP and security automation, according to Nemertes research.
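Data classification and DLP often start with pattern matching on content. As one illustrative technique (not a description of any particular DLP product), the sketch below labels text "restricted" if it contains a 13- to 19-digit string that passes the Luhn checksum used by payment card numbers. The label names and the regular expression are assumptions chosen for the example.

```python
import re

# Candidate card numbers: 13-19 digits, optionally separated by spaces or dashes.
CANDIDATE = re.compile(r"\b(?:\d[ -]?){12,18}\d\b")


def luhn_valid(digits: str) -> bool:
    """Luhn checksum: double every second digit from the right, sum, test mod 10."""
    total = 0
    for i, ch in enumerate(reversed(digits)):
        n = int(ch)
        if i % 2 == 1:
            n *= 2
            if n > 9:
                n -= 9
        total += n
    return total % 10 == 0


def classify(text: str) -> str:
    """Label text 'restricted' if it appears to hold a card number, else 'internal'."""
    for match in CANDIDATE.finditer(text):
        digits = re.sub(r"[ -]", "", match.group())
        if luhn_valid(digits):
            return "restricted"
    return "internal"


print(classify("card: 4111 1111 1111 1111 exp 12/27"))  # restricted
print(classify("call 555-123-4567"))                    # internal
```

The checksum step matters: without it, every long order or account number would be flagged, and the false-positive rate would make automated enforcement impractical.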

Technologies that assist in zero trust

A number of technologies mesh well with a zero-trust security architecture, among them advanced endpoint security (AES), behavioral threat analytics (BTA), cloud access security brokers (CASB) and network access control (NAC).

AES software protects endpoints from malware through a variety of mechanisms, such as micro-virtualization, that aren't based on recognizing malware signatures. The approach goes far beyond traditional anti-malware in that it actually contains and manages applications running on endpoints, and it often applies AI and machine learning to detect misbehavior and attempted incursions.

AES suppliers include emerging vendors such as Bromium, Carbon Black, Comodo, CrowdStrike, Cylance and Invincea, as well as newer versions of endpoint security products from traditional security players such as McAfee, Sophos, Symantec and Trend Micro. Operating system vendors -- particularly Microsoft -- are also jumping in.

BTA software integrates feeds from multiple sources (syslogs, analytics platforms such as Splunk, and security information and event management systems) to identify and display anomalous behavior by users, devices and systems on the network. Most products use machine learning to establish a baseline of ordinary behavior and then detect anomalies relative to that baseline. Vendors include Bay Dynamics, Gurucul, Exabeam and Splunk/Caspida.
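The baseline-and-anomaly idea behind BTA can be shown with plain statistics. This sketch swaps in a simple per-entity mean and standard deviation (a z-score test) in place of the machine learning that commercial products use; the metric, the entities and the threshold of 3 are hypothetical.

```python
from statistics import mean, stdev


def build_baseline(history: dict[str, list[float]]) -> dict[str, tuple[float, float]]:
    """Per-entity mean and sample standard deviation of a daily metric
    (e.g. logins or megabytes uploaded). Needs at least two data points."""
    return {
        entity: (mean(values), stdev(values))
        for entity, values in history.items()
        if len(values) >= 2
    }


def anomalies(baseline: dict[str, tuple[float, float]],
              today: dict[str, float],
              threshold: float = 3.0) -> list[str]:
    """Flag entities whose value today is more than `threshold` standard
    deviations from their own baseline, plus any entity with no baseline."""
    flagged = []
    for entity, value in today.items():
        if entity not in baseline:
            flagged.append(entity)
            continue
        mu, sigma = baseline[entity]
        deviation = abs(value - mu)
        # With zero spread, any change at all counts as anomalous.
        if (sigma == 0 and deviation > 0) or (sigma > 0 and deviation / sigma > threshold):
            flagged.append(entity)
    return flagged


history = {"alice": [10, 12, 11, 9, 13], "bob": [200, 210, 190, 205, 195]}
base = build_baseline(history)
print(anomalies(base, {"alice": 60, "bob": 202, "mallory": 5}))  # ['alice', 'mallory']
```

Baselines are per entity: Bob's 202 is normal for Bob, while Alice's 60 is far outside her usual range. Entities with no history are flagged rather than ignored, in keeping with treating the unknown as untrusted.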

CASB comprises on-premises or cloud-based software that automatically detects employees' cloud usage, assesses the associated business and technical risk and enforces policies. In some cases, CASB combines with BTA to deliver anomaly-driven policy implementation. Because cloud migration is a key driver for zero-trust security, CASB must be part of any zero-trust implementation. Zero-trust adopters are more than twice as likely as non-adopters to have deployed CASB. CASB vendors include Bitglass, Microsoft, Netskope, Skyhigh and Symantec.
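The detect, assess and enforce loop a CASB runs can be sketched over web-proxy logs. In this minimal example, the `CATALOG` of services, the example domains and the allow/alert/block mapping are all hypothetical stand-ins for the service catalog and policies a real CASB would maintain.

```python
from collections import Counter

# Hypothetical service catalog with a sanction flag and a risk rating.
CATALOG = {
    "drive.sanctioned.example": {"sanctioned": True, "risk": "low"},
    "notes.unvetted.example": {"sanctioned": False, "risk": "medium"},
    "files.unvetted.example": {"sanctioned": False, "risk": "high"},
}
# Services missing from the catalog get no benefit of the doubt.
UNKNOWN = {"sanctioned": False, "risk": "high"}


def review(proxy_log: list[tuple[str, str]]) -> tuple[Counter, list[tuple[str, str, str]]]:
    """Detect cloud usage from (user, domain) log entries, then choose an action
    per entry: allow sanctioned services, alert on unsanctioned lower-risk ones,
    and block unsanctioned high-risk or unknown ones."""
    usage = Counter(domain for _, domain in proxy_log)
    decisions = []
    for user, domain in proxy_log:
        info = CATALOG.get(domain, UNKNOWN)
        if info["sanctioned"]:
            action = "allow"
        elif info["risk"] == "high":
            action = "block"
        else:
            action = "alert"
        decisions.append((user, domain, action))
    return usage, decisions
```

The `usage` counter is the discovery half (which cloud services are actually in use, and how much); the per-entry decisions are the enforcement half.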

NAC devices, which authorize other devices to access the network based on security policies, complement BTA. NAC watches what gets on the network, while BTA watches what those things do once they're there. The benefit of NAC is that it keeps anything that shouldn't be there off the network.

To deploy NAC effectively, network and security engineers need a fine-grained security policy and an accurate, up-to-date inventory of assets -- each prioritized by security classification. These two components -- policy and asset classification -- are also critical for zero trust. Vendors that provide NAC include Cisco, Forescout, HPE/Aruba and Trustwave.
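NAC's dependence on an accurate, classified asset inventory shows up clearly in a sketch. Everything here is hypothetical: the inventory, the classification-to-segment policy and the quarantine rule. Identifying devices by MAC address keeps the example short, but MAC addresses are easy to spoof, so production NAC typically relies on stronger identity such as 802.1X authentication.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Asset:
    mac: str
    owner: str
    classification: str  # e.g. "restricted", "internal", "guest"
    patched: bool


# Hypothetical asset inventory keyed by lowercase MAC address.
INVENTORY = {
    "00:1a:2b:3c:4d:5e": Asset("00:1a:2b:3c:4d:5e", "finance", "restricted", True),
    "00:1a:2b:3c:4d:5f": Asset("00:1a:2b:3c:4d:5f", "eng", "internal", False),
}

# Hypothetical policy: which network segment each classification is admitted to.
SEGMENT_FOR = {"restricted": "finance-vlan", "internal": "corp-vlan", "guest": "guest-vlan"}


def admit(mac: str) -> str:
    """Return the segment a connecting device is placed in, 'quarantine' for
    known but unpatched devices, or 'deny' for anything not in the inventory."""
    asset = INVENTORY.get(mac.lower())
    if asset is None:
        return "deny"
    if not asset.patched:
        return "quarantine"
    return SEGMENT_FOR.get(asset.classification, "deny")
```

A stale inventory breaks this logic in both directions: retired devices keep their access, and legitimate new ones are denied, which is why the policy and the inventory have to be maintained together.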

In tandem with the move to a zero-trust security model is the trend toward treating everything -- including security functions -- as code. This means, for example, that firewalling becomes a function rather than a device. Overseeing that function is more like managing an application than managing a physical component. That, in turn, drives the need for development best practices, especially automation.
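Treating firewalling as code means the rules can live in version control and go through the same automated checks as application code. The sketch below is a hypothetical lint step a CI pipeline could run; the rule format and the two checks are assumptions for illustration.

```python
# Hypothetical rule set kept in version control alongside application code.
RULES = [
    {"name": "web-to-app", "src": "10.0.1.0/24", "dst": "10.0.2.0/24",
     "proto": "tcp", "port": 8443, "action": "allow"},
    {"name": "temp-debug", "src": "0.0.0.0/0", "dst": "10.0.3.0/24",
     "proto": "any", "port": None, "action": "allow"},
]


def lint(rules: list[dict]) -> list[str]:
    """Return policy violations so an automated pipeline can reject the change."""
    problems = []
    for r in rules:
        if r["action"] != "allow":
            continue  # only permissive rules are checked here
        if r["src"] == "0.0.0.0/0":
            problems.append(f"{r['name']}: allows traffic from any source")
        if r["proto"] == "any" or r["port"] is None:
            problems.append(f"{r['name']}: does not pin protocol and port")
    return problems


for problem in lint(RULES):
    print(problem)
```

A pipeline could call `lint(RULES)` and fail the build whenever the returned list is non-empty, so an overly permissive rule such as `temp-debug` never reaches production.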