The ins and outs of low-code application testing

When teams use low-code for application development, it can save time and money. But don't neglect testing for potential issues just to deploy the app sooner.

While many low-code development tools provide value through highly responsive design, traditional testing approaches -- manual or automated -- can take longer than the actual development process in low-code scenarios. For example, it might take an hour for a developer to add a new screen in low-code, but a classic regression test process might require a full system shakedown.

Another element to consider in this scenario: When screens are built by dragging and clicking components into place, testing the screen using traditional GUI or unit tests can take longer than creating the screen itself. As such, teams may consider skipping these expensive, time-consuming steps in the interest of faster delivery.

However, low-code still contains code, and humans still make programming mistakes. So, each IT organization should still develop a cost-effective test strategy that addresses these risks and spells out their potential consequences.

What should low-code tests focus on?

Thanks to the use of abstracted proxy components -- often an API -- many proprietary low-code development platforms are equipped to handle common application defects, such as browser compatibility, screen size and race conditions. However, complex back-end issues, like field validation, corrupted application data, third-party API integrations and feature regressions, still present a formidable challenge.
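Field validation is one of the easier items on that list to pin down with a small, table-driven check. The following is a minimal Python sketch, not tied to any low-code platform: the `validate_quantity` rule, its 1-to-999 range and the case table are all hypothetical stand-ins for whatever custom validation logic an application actually carries.

```python
def validate_quantity(raw: str) -> int:
    """Hypothetical rule: a whole number from 1 to 999, surrounding spaces allowed."""
    text = raw.strip()
    if not (text.isascii() and text.isdigit()):
        raise ValueError(f"not a whole number: {raw!r}")
    value = int(text)
    if not 1 <= value <= 999:
        raise ValueError(f"out of range: {value}")
    return value


# (input, expected result); None means the input must be rejected.
CASES = [
    ("5", 5),
    (" 12 ", 12),
    ("999", 999),
    ("0", None),
    ("1000", None),
    ("-3", None),
    ("abc", None),
    ("", None),
]


def failing_cases() -> list[str]:
    """Return the inputs whose behavior does not match the table."""
    failed = []
    for raw, expected in CASES:
        try:
            actual = validate_quantity(raw)
        except ValueError:
            actual = None  # a rejection is recorded as None
        if actual != expected:
            failed.append(raw)
    return failed
```

Keeping the cases in a table means a tester can add a boundary value in one line, which keeps the cost of the check close to the cost of the change it protects.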

Regression errors, in particular, pose a major risk because the custom code often found in low-code applications can change in ways the original creator didn't anticipate. Just as testing procedures for web-based applications need to adapt for things like mobile optimization, these new low-code problems call for a revamped approach to test design. Unfortunately, this can be difficult given the priority developers tend to place on rapid deployment in low-code scenarios. As such, it's important that testers and developers collaborate in order to mitigate the risk of both costly delays and halting defects.

The scope of low-code testing

Early low-code adopters typically used the platforms to create tiny snippets of on-demand functionality, such as a new organizational calendar integration. Ideally, these snippets would resemble something like an Excel-based macro, but their scope can easily grow to encompass full business application functions.

Low-code unit testing tends to be an afterthought, if it happens at all, given the amount of new code these platforms can end up producing. Unit tests can be particularly painful if they require any amount of manual testing. A low-code test approach should reduce these risks efficiently, without adding time or cost.

Making low-code testing cost-effective

Let's create an example economic model of a software product's release values. First, start with two software teams, each capable of doing one "point" of software work per day. Once a point gets to production, that point delivers one business point of value per day. The first team releases every day, so on day two it realizes one point of value. The second team wants to perform a full, standardized round of testing, so its release schedule is once every three months using a Waterfall model.

At the end of the second day, the Waterfall team has delivered no value -- and it will continue to deliver no value for three months. At 22 business days per month, three months is 66 business days. Because every point already in production keeps earning a point of value each day, the first team's running total grows as 1 + 2 + ... + 65, which comes to 2,145 points -- more than 2,000 -- by the end of day 66. If we think of points as business value delivered per day, then the Waterfall team only starts showing value to the customer on day 67.
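The model above is simple enough to simulate. Here is a rough Python sketch under stated assumptions: work finished on a given day ships at the end of that day if a release is scheduled, and every shipped point earns one point of value on each later day. The function name and the day-by-day accounting are illustrative choices, not part of the original model.

```python
def cumulative_value(days: int, release_every: int) -> int:
    """Total business value earned by the end of `days`.

    Assumes one point of work is finished per day and that a release
    happens at the end of every `release_every`-th day.
    """
    total = 0
    in_production = 0  # points already live
    waiting = 0        # points finished but not yet released
    for day in range(1, days + 1):
        total += in_production  # each live point earns one point of value today
        waiting += 1            # the team finishes one point of work today
        if day % release_every == 0:
            in_production += waiting
            waiting = 0
    return total


daily_team = cumulative_value(days=66, release_every=1)       # 2145
waterfall_team = cumulative_value(days=66, release_every=66)  # 0
```

Changing `release_every` to a weekly or monthly interval shows how quickly the gap narrows as releases become more frequent, which is the point of the exercise.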

This example isn't perfect because teams and points aren't created equal, but the core lesson to take away is that a frequent release schedule reduces the time it takes to add business value. If that example became an actual project, the Waterfall team would also introduce uncertainty and defects during those three months, and the final regression test would likely take multiple rounds of testing, fixing and retesting.

When teams slow down their development cycle to run more tests, delivery slows with it. This approach also means more time elapses between test cycles, and that enables more untested changes to build on top of each other. The number of testing -- and fixing -- cycles increases, which negates any potential economic benefit. The biggest challenge teams face is how to release more often while keeping test coverage good enough.

Low-code testing for mobile apps

Mobile app stores pose a particular testing wrinkle. It can take hours, if not days, for an app marketplace to approve a new version of a mobile application.

Since it takes so long to deploy fixed code within a live server or app store, development teams might want to tackle fewer quality problems in each iteration.

This timing concern isn't typically as much of an issue for web apps, where patching a configuration file might take as little as a few seconds or minutes.

Teams that optimize for test value will want to consider how they can create an appropriately balanced release cadence. Low-code makes it easy to push to production more frequently; testing should add value and keep pace with that cadence.

Where low-code testing can add value

Don't oversimplify testing to "just finding bugs." A more expansive definition includes how testing provides information to decision-makers. The problems that testing can identify go beyond one-off bugs and errors. QA can also find shortcuts or potential new features that developers can easily create and add to the application.

For example, Google Maps was originally just a navigation service, but it didn't take much to add suggestions for gas stations, restaurants and hotels along the way. Often, insight such as this comes from people outside of the actual development process. To that end, mix-up testing -- sometimes called cross-module testing -- enables these outside developers to test each other's work with limited bias and provide information for the team quickly. Thankfully, this approach doesn't require organizations to create any new roles and can usually be accomplished in a short time frame.
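One way to organize a round of cross-module testing is to rotate through the team and skip anyone who wrote the module in question. The Python sketch below is only an illustration of that idea; the function name, the module names and the people are hypothetical.

```python
from itertools import cycle


def assign_cross_module_testers(authors: dict[str, str]) -> dict[str, str]:
    """Map each module to a tester who is not that module's author.

    `authors` maps module name -> author name (both hypothetical).
    """
    team = sorted(set(authors.values()))
    if len(team) < 2:
        raise ValueError("need at least two people to swap work")
    rotation = cycle(team)
    assignments = {}
    for module, author in authors.items():
        tester = next(rotation)
        if tester == author:
            # Skip the author; with two or more distinct people,
            # the next name in the rotation is someone else.
            tester = next(rotation)
        assignments[module] = tester
    return assignments


example = assign_cross_module_testers(
    {"calendar": "ana", "invoices": "ben", "reports": "ana"}
)
```

A rotation like this spreads the testing load roughly evenly, though a real team would likely also weigh who knows the least about a module, since fresh eyes are the reason for the swap.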

In the end, the actual approach to testing should be driven in part by the technology -- both the infrastructure that exists for it and the bugs that slip through.

Examples of testing in low-code platforms

There are plenty of options for low-code development platforms out there, each with its own set of testing capabilities and tooling. To provide a little insight, however, the following examples demonstrate how this works in two widely used platforms: Mendix and Microsoft Power Apps.

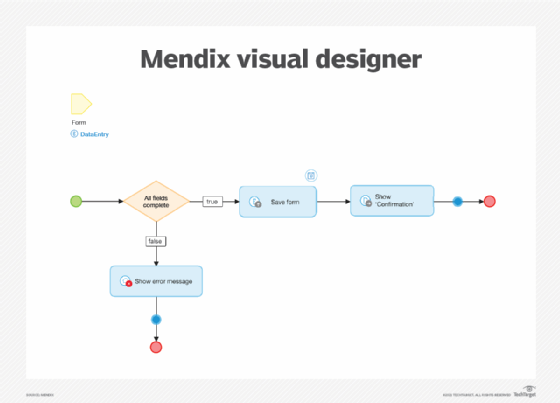

Mendix

The Mendix platform is primarily driven by workflow design. For smaller applications, it may be possible to express that design as a series of connected, testable nodes. More complex scenarios, however, may call for teams to adjust procedures in accordance with the Recent, Core, Risk, Configuration, Repaired and Chronic, or RCRCRC, heuristic. If the team wants to implement automation, it can look to external tools, like SoapUI and Selenium IDE. Mendix also provides its own plugin for unit testing.
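The RCRCRC heuristic can be turned into a rough ordering of what to regression test first. The Python sketch below is one possible reading, not a Mendix feature: it simply counts how many of the six factors apply to each area of the application. The `FeatureArea` class, the equal weighting and the sample areas are all assumptions made for illustration.

```python
from dataclasses import dataclass, field

# The six factors named by the heuristic.
RCRCRC = ("recent", "core", "risk", "configuration", "repaired", "chronic")


@dataclass
class FeatureArea:
    """A hypothetical part of the application, tagged with the factors that apply."""
    name: str
    factors: set[str] = field(default_factory=set)

    def score(self) -> int:
        # Equal weighting is an assumption; a team may weight factors differently.
        return sum(1 for factor in RCRCRC if factor in self.factors)


def prioritize(areas: list[FeatureArea]) -> list[FeatureArea]:
    """Order areas from most to fewest matching factors (ties keep input order)."""
    return sorted(areas, key=lambda area: area.score(), reverse=True)


areas = [
    FeatureArea("login", {"core"}),
    FeatureArea("export", {"recent", "repaired", "risk"}),
    FeatureArea("settings"),
]
ordered_names = [area.name for area in prioritize(areas)]
```

When time is short, the team can test from the top of the list down and stop when the release window closes, which keeps the effort proportional to the risk.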

Microsoft Power Apps

This suite of tools uses a drag-and-drop interface to create a web or mobile application. Users typically get started with a data source, such as a spreadsheet or a cloud-based Microsoft Access database. For large-scale applications, Microsoft provides Test Studio, a higher-level tool for test automation. By creating smoke tests around functional code and important application features, it might be possible to check a release for major flaws with every push -- and even commit changes multiple times per day.
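The idea of a smoke suite that gates every push can be sketched independently of any platform. The Python below is not Power Apps tooling; it is a generic illustration in which each check is a small function that returns `True` when a key feature still works. The check names and the stand-in lambdas are hypothetical.

```python
from typing import Callable


def run_smoke_suite(checks: dict[str, Callable[[], bool]]) -> list[str]:
    """Run every check and return the names of the ones that failed."""
    failures = []
    for name, check in checks.items():
        try:
            passed = check()
        except Exception:
            passed = False  # a crash counts as a failure
        if not passed:
            failures.append(name)
    return failures


# Stand-in checks; real ones would exercise the deployed application.
checks = {
    "home screen loads": lambda: True,
    "order form saves a record": lambda: True,
}

failures = run_smoke_suite(checks)
if failures:
    print("release blocked by:", failures)
else:
    print("smoke suite passed")
```

Because the suite only covers a handful of important paths, it can run on every push without slowing the release cadence that makes low-code attractive.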

The tests shown in the examples above are purely meant to check if an application is in compliance with its software requirements. But teams shouldn't limit their understanding of QA, particularly in low-code testing, to compliance checks. Extensive testing documentation can be a wellspring for feature ideas and simple UI innovations that, ultimately, help organizations build better apps in the future.