
Are containers on Windows the right choice for you?

Containers have grown in popularity, but there are some considerations to weigh before you decide to migrate Server 2008 workloads to this technology.

It's nearly the end of the road for Windows Server 2008/2008 R2. Some of the obvious migration choices are a newer version of Windows Server or moving the workload into Azure. But does a move to containers on Windows make sense?

After Jan. 14, 2020, Microsoft ends extended support for the Windows Server 2008 and 2008 R2 OSes, which also means no more security updates unless one enrolls in the Extended Security Update program. While Microsoft prefers that its customers move to the Azure cloud platform, another choice is to use containers on Windows.

Understand the two different virtualization technologies

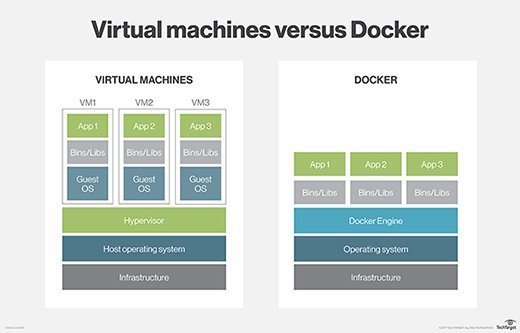

If you are thinking about containerizing Windows Server 2008/2008 R2 workloads, then you need to consider the ways containers differ from a VM. The most basic difference is that a container is much lighter than a VM. Whereas each VM has its own OS, containers share a base OS image. The container generally includes application binaries and anything else necessary to run the containerized application.

Containers share a common kernel, which has advantages and disadvantages. One advantage is containers can be extremely small in size. It is quite common for a container to be less than 100 MB, which enables it to be brought online very quickly. The low overhead of containers makes it possible to run far more containers than VMs on the same host server.

However, the shared kernel is also a liability. If the kernel fails, then all the containers that depend on it will also fail. Similarly, a poorly written application can destabilize the kernel, leading to problems with other containers on the system.

As a Windows administrator considering containerizing legacy Windows Server workloads, you need to consider the fundamental difference between VMs and containers. While containers do have their place, they are a poor choice for applications with high security requirements due to the shared kernel or for applications with a history of sporadic stability issues.

Another major consideration with containers is storage. Early on, containers were used almost exclusively for stateless workloads because containers could not store data persistently. Unlike with a VM, which keeps its virtual disk when it shuts down, data written inside a container is lost when the container is removed.

Container technology has evolved to support persistent storage through the use of data volumes. Even so, it can be difficult to work with data volumes. Applications that have complex storage requirements usually aren't a good fit for containerization. For example, database applications tend to be poor candidates for containerization due to their complex storage configuration.
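As a rough illustration of what a data volume looks like in practice, the sketch below assembles a `docker run` command line that mounts a named volume into a container. It assumes Docker is the container runtime, which the article does not specify. The image name, volume name, mount path and container name are hypothetical placeholders, and the function only builds the argument list rather than running anything.

```python
from typing import List


def build_run_command(image: str, volume: str, mount_path: str,
                      name: str) -> List[str]:
    """Build a `docker run` argument list that mounts a named volume.

    Data written under `mount_path` is stored in the volume rather than
    in the container's own writable layer, so it survives the container
    being removed.
    """
    return [
        "docker", "run",
        "--detach",                            # run in the background
        "--name", name,                        # name of the container
        "--volume", f"{volume}:{mount_path}",  # named volume -> path in container
        image,
    ]


# Hypothetical names, for illustration only.
cmd = build_run_command(
    image="example/legacy-app:latest",
    volume="appdata",
    mount_path=r"C:\data",
    name="legacy-app",
)
print(" ".join(cmd))
```

Even in this simple case, the volume name and mount path become part of what has to be recreated wherever the container runs next, which is where applications with many storage dependencies start to get awkward.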

If you are used to managing physical or virtual Windows Server machines, you might think of setting up persistent storage as a migration-specific task: a one-time job completed as part of the application migration. That view overlooks the fact that containers are designed to be completely portable. A containerized application can move from a development and test environment to a production server or to a cloud host without the need to repackage the application. Complex storage dependencies can undermine that portability, so an organization needs to consider whether a newly containerized application will ever need to move to another location.

What applications are suited for containers?

As part of the decision-making process related to using containers on Windows, it is worth considering what types of applications are best suited for this type of deployment. Almost any application can be containerized, but the ideal candidate is a stateless application with varying scalability requirements. For example, a front-end web application is often an excellent choice for a containerized deployment for a few reasons. First, web applications tend to be stateless. Data is usually saved on a back-end database that is separate from the front-end application. Second, container platforms work well to meet an application's scalability requirements. If a web application sees a usage spike, additional containers can spin up quickly to handle the demand. When the spike ebbs, it's just a matter of deleting the extra containers.
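The scaling behavior described above comes down to simple arithmetic. The sketch below is a minimal illustration, not the logic of any particular container platform: the function name and the per-container capacity figure are assumptions made up for the example.

```python
import math


def replicas_needed(requests_per_second: float,
                    capacity_per_container: float,
                    minimum: int = 1) -> int:
    """Return how many identical containers are needed for the current load."""
    if capacity_per_container <= 0:
        raise ValueError("capacity_per_container must be positive")
    needed = math.ceil(requests_per_second / capacity_per_container)
    # Keep at least `minimum` containers running even when traffic is idle.
    return max(minimum, needed)


# Hypothetical figures: each container handles about 200 requests per second.
normal = replicas_needed(350, 200)   # 2 containers
spike = replicas_needed(1900, 200)   # 10 containers
print(normal, spike)
```

Because the front-end containers hold no state, the extra eight containers started for the spike can simply be deleted afterward without losing any data.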

Before migrating any production workloads to containers, the IT staff needs to develop the necessary expertise to deploy and manage containers. While container management is not usually overly difficult, it is completely different from VM management. Windows Server 2019 supports the use of Hyper-V containers, but you cannot use Hyper-V Manager to create, delete and migrate containers in the same way that you would perform these actions on a VM.

Containers are a product of the open source world and are therefore managed mainly from the command line using Linux-style commands that are likely to be completely foreign to many Windows administrators. There are orchestration platforms, such as Kubernetes, and some GUI-based management tools, but even these require some time and effort to understand. As such, having the proper training is essential to a successful container deployment.

Despite their growing popularity, containers are not an ideal fit for every workload. While some Windows Server workloads are good candidates for containerization, other workloads are better suited as VMs. As a general rule, organizations should avoid containerizing workloads that have complex, persistent storage requirements or require strict kernel isolation.
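For teams that want to screen a list of Server 2008 workloads against these rules of thumb, the criteria can be written down as a short checklist. The sketch below is only one possible encoding of the points made in this article. The `Workload` fields, the function name and the example workloads are all hypothetical.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Workload:
    name: str
    complex_persistent_storage: bool
    needs_strict_kernel_isolation: bool
    history_of_instability: bool


def containerization_concerns(workload: Workload) -> List[str]:
    """List the reasons a workload may be better suited to a VM."""
    concerns = []
    if workload.complex_persistent_storage:
        concerns.append("complex persistent storage")
    if workload.needs_strict_kernel_isolation:
        concerns.append("requires strict kernel isolation")
    if workload.history_of_instability:
        concerns.append("history of stability issues")
    return concerns


# Hypothetical workloads, for illustration only.
web_front_end = Workload("web front end", False, False, False)
database = Workload("line-of-business database", True, False, False)

for w in (web_front_end, database):
    concerns = containerization_concerns(w)
    verdict = "container candidate" if not concerns else "consider a VM"
    print(f"{w.name}: {verdict} {concerns}")
```

An empty list does not guarantee a smooth migration. It only means none of the warning signs discussed here apply.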