
What is the best OS for Docker?

Admins who want to run Docker containers can choose from several Linux distros, including Ubuntu Core, RancherOS and others. Compare options for Docker OSes.

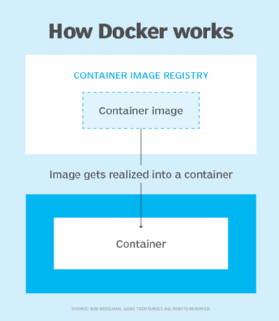

Containers have gained popularity because they make it easy to deploy and update services and applications as portable, self-contained packages that scale. Because there are many ways to deploy containers, you need to choose the one that fits your needs.

One choice is Docker, which users can install on many different operating systems. For example, if you are developing for Docker, you can install it on almost any Linux distribution, macOS or even Windows. But deploying those containers to users is much more complicated. That is why many admins use specialized operating systems to deploy and manage containers.

4 OS options for Docker systems

Those specialized operating systems were designed, from the ground up, to serve as hosts for container deployments. What's different in these operating systems? Here's a short list:

- There's no bloat. Docker-specific OSes are generally minimal, so there aren't extra libraries, runtimes or services running. This means more system resources for containers.

- The kernel is limited to essential features. Even the kernels of container-specific OSes have been stripped down to tighten security, increase simplicity and improve performance.

- Security is key. Operating systems designed specifically for Docker build in security functions suited to containers. For example, many employ Security-Enhanced Linux (SELinux) as well as atomic updates. In most cases, those OSes have also tuned SELinux for containerized deployments.

There are various options for operating systems that work well for Docker.

1. RancherOS

RancherOS is a natural fit for Docker deployments because it is an operating system made from Docker containers. RancherOS does not take up much storage, coming in at around 20 MB. That minimal footprint makes for a smaller attack surface for bad actors. It also contributes to a stable and secure OS.

Admins can patch and update RancherOS in seconds, and rollbacks are just as simple. Because updates are atomic, it's easy to recover from one that goes astray. RancherOS runs on virtualization platforms, cloud providers and even bare-metal servers.

Admins can find instructions for deploying RancherOS on the RancherOS GitHub site, along with ISO files for bare metal and guidance for deploying to VMs.

2. Ubuntu Core

This container-native OS was designed to deliver a fast, reliable and secure platform for container deployments at scale.

One useful trait of Ubuntu Core is that, if you're already accustomed to either Ubuntu or Debian Linux, you shouldn't have much trouble making the leap. One of the best features of Ubuntu Core is that Docker app data can be configured for automatic backup before an update.

Updates are transactional, so if something fails, the system rolls back. Those updates are also fast. Ubuntu Core includes AppArmor kernel security, which is tuned to isolate applications running as Docker containers.
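The transactional update-and-rollback pattern described above can be illustrated with a short Python sketch. This is not how Ubuntu Core or any other OS in this article implements updates; the directory layout, the `current` symlink and the `activate`, `update` and `health_check` names are all hypothetical, and the code assumes a POSIX file system where renaming a symlink is atomic.

```python
import os
import tempfile


def activate(root, version):
    """Point the hypothetical 'current' symlink at a version directory."""
    tmp_link = os.path.join(root, "current.tmp")
    if os.path.lexists(tmp_link):
        os.remove(tmp_link)
    os.symlink(version, tmp_link)
    # The rename happens in one step, so 'current' always points at a
    # complete version, never at a half-applied update.
    os.replace(tmp_link, os.path.join(root, "current"))


def update(root, new_version, health_check):
    """Switch to new_version and roll back if the health check fails."""
    current = os.path.join(root, "current")
    previous = os.readlink(current)
    activate(root, new_version)
    if not health_check(os.path.join(root, new_version)):
        activate(root, previous)  # rollback to the last known-good version
    return os.readlink(current)


if __name__ == "__main__":
    demo_root = tempfile.mkdtemp()
    for name in ("v1", "v2"):
        os.mkdir(os.path.join(demo_root, name))
    activate(demo_root, "v1")
    # Simulate a failed update: the check returns False, so v1 stays active.
    print(update(demo_root, "v2", lambda path: False))
```

The key design choice is that the new version is fully staged before anything switches over, so a failed update leaves the previous version untouched and ready to reactivate.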

3. Alpine Linux

Alpine Linux is not geared specifically toward running containers. Instead, it's a lightweight operating system that is often used as a base image for creating container deployments.

Admins can use Alpine Linux on bare metal or as a container itself. And, yes, even though Alpine Linux wasn't created as a container-specific OS, it can be used as one. The biggest drawback of using Alpine Linux as a container host is that it takes considerable work to set it up as one. Because of that, your best bet with Alpine Linux is to use it as a base image for developing containerized deployments.
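To make the base-image idea concrete, here is a minimal Python sketch that renders the text of a Dockerfile built on Alpine Linux. The `render_dockerfile` helper, the `alpine:3.19` tag and the `python3` package are assumptions chosen for illustration; the sketch only produces text and does not build or run anything.

```python
import json


def render_dockerfile(packages, command, base_image="alpine:3.19"):
    """Return Dockerfile text that starts from an Alpine base image.

    Hypothetical helper for illustration only.
    """
    lines = [f"FROM {base_image}"]
    if packages:
        # apk is Alpine's package manager; --no-cache avoids keeping the
        # package index in the image, which helps keep it small.
        lines.append("RUN apk add --no-cache " + " ".join(packages))
    # json.dumps yields the exec-form array that CMD expects.
    lines.append("CMD " + json.dumps(command))
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    print(render_dockerfile(["python3"], ["python3", "--version"]))
```

Saving the returned text as a Dockerfile gives an image that adds only the listed packages on top of the Alpine base.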

4. Mesosphere DC/OS

DC/OS stands for Distributed Cloud Operating System and is an open source OS based on the Apache Mesos distributed systems kernel. This OS can manage multiple machines, whether in the cloud or in a data center, and admins can deploy containers, services and even legacy applications to those connected machines.

DC/OS also provides the other services required for container deployments, such as networking, service discovery and resource management. DC/OS gives containers a boost through a "fast data" pipeline, which processes data as it is collected to deliver real-time insights and analytics. That can be a huge benefit to anyone using containerized applications for big data purposes.