NVMe speeds vs. SATA and SAS: Which is fastest?

The NVMe protocol is tailor-made to make SSDs fast. Get up to speed on NVMe performance and how it compares to the SATA and SAS interfaces, plus recent specifications.

The NVMe protocol has become the industry standard for enabling communication between a computer's host software and an SSD or other type of non-volatile memory subsystem. NVMe speeds are substantially better than those of traditional storage protocols, such as SAS and SATA.

The non-volatile memory express standard is based on a family of specifications maintained and published by NVM Express Inc., a consortium of more than 100 tech companies and industry leaders. The set of specifications defines how the host software communicates with NVM across supported transports.

The NVMe specifications define both a storage protocol and a logical host controller interface. The specifications were originally designed and optimized for client and enterprise systems that use PCIe-based SSDs, although they have since been expanded to include other types of transports, including Transmission Control Protocol (TCP) and Remote Direct Memory Access (RDMA).

As of NVMe 2.1, the set includes the following 11 specifications:

- The NVMe Base Specification defines a protocol for enabling communication between host software and NVM systems through supported transports.

- The five NVMe Command Set specifications define individual command sets to support independently evolving technologies. The specifications are NVM Command Set, NVMe Zoned Namespaces Command Set, Key Value Command Set, Subsystem Local Memory Command Set and Computational Programs Command Set.

- The three NVMe Transport specifications define individual transports to support independently evolving technologies. The specifications are NVMe over PCIe Transport, NVMe over RDMA Transport and NVMe over TCP Transport.

- The NVM Express Management Interface Specification defines an architecture and command set for managing NVMe storage. The specification makes it possible to carry out tasks such as discovering, monitoring and configuring NVMe devices in different operating environments.

- The NVMe Boot Specification defines a standard process for booting over NVMe interfaces. The specification makes it possible for OSes to boot from different NVM interfaces.

Despite the additions, PCIe continues to be the primary transport used for NVMe devices. PCIe is a serial expansion bus standard that enables computers to attach to peripheral devices. A PCIe bus can deliver lower latencies and higher transfer speeds than older bus technologies, such as the PCI or PCI Extended standards. With PCIe, each device has its own dedicated connection, so devices don't have to compete for bandwidth.

Expansion slots that adhere to the PCIe standard can scale from one to 32 data transmission lanes. The standard defines seven physical lane configurations: x1, x2, x4, x8, x12, x16 and x32. The configurations are based on the number of lanes; for example, an x8 configuration uses eight lanes. The more lanes there are, the better the performance -- and the higher the costs.

The PCIe version is another factor that affects performance. In general, each version doubles the bandwidth and transfer rate of the previous version, so the more recent the version, the better the performance. For instance, PCIe 3.0 delivers a transfer rate of 8 gigatransfers per second (GTps); PCIe 4.0 doubles the transfer rate to 16 GTps; PCIe 5.0 doubles the rate again to 32 GTps; and PCIe 6.0 delivers a transfer rate of 64 GTps.

NVMe speeds and performance

NVMe was developed from the ground up specifically for SSDs to improve throughput and IOPS, reduce latency and increase speeds. NVMe-based drives can theoretically deliver bandwidths up to 256 GBps across both directions combined (128 GBps each way), assuming the drives are based on PCIe 6.0 and use 16 PCIe lanes.
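The relationship between PCIe version, lane count and theoretical bandwidth can be sketched in a few lines of Python. This is a rough illustration, not a definitive model: the function name `raw_gbytes_per_sec` is hypothetical, and the calculation assumes one bit per lane per transfer and ignores encoding and protocol overhead, so real-world figures come in lower.

```python
# Per-lane transfer rates in GT/s, as listed in the article.
PCIE_GTPS = {"3.0": 8, "4.0": 16, "5.0": 32, "6.0": 64}

# Physical lane configurations defined by the PCIe standard.
VALID_LANES = {1, 2, 4, 8, 12, 16, 32}


def raw_gbytes_per_sec(version: str, lanes: int) -> float:
    """Raw one-direction bandwidth in GB/s (assumption: no encoding overhead)."""
    if lanes not in VALID_LANES:
        raise ValueError(f"unsupported lane count: x{lanes}")
    # Treat each transfer as one bit per lane; divide by 8 to get bytes.
    return PCIE_GTPS[version] * lanes / 8


if __name__ == "__main__":
    for version in PCIE_GTPS:
        one_way = raw_gbytes_per_sec(version, 4)
        print(f"PCIe {version} x4: {one_way:.0f} GB/s per direction")
    x16 = raw_gbytes_per_sec("6.0", 16)
    print(f"PCIe 6.0 x16: {x16:.0f} GB/s per direction, {2 * x16:.0f} GB/s combined")
```

Under these simplifying assumptions, a PCIe 6.0 x16 link works out to 128 GBps in each direction, which is where the 256 GBps combined figure comes from.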

Most of today's NVMe drives are based on PCIe 4.0 or 5.0 and are typically four-lane devices. However, these drives can vary significantly in terms of throughput and IOPS. Some of the top SSDs offer throughputs as high as 14 GBps, although most deliver much lower rates. IOPS vary just as widely: Some SSDs barely reach 50,000 IOPS, while others exceed 1 million.

Two of today's top SSDs are the Kioxia CM7 and Micron 9550. Both are based on PCIe 5.0 and offer throughputs up to 14 GBps. The Kioxia drive can deliver up to 2.7 million IOPS, while the Micron drive promises 3.3 million. Compare these to the Samsung 990 Pro, a PCIe 4.0 drive with throughputs closer to 7 GBps and IOPS topping out at 1.4 million.

NVMe drives can also vary significantly in terms of latency. Although many of the top drives can achieve latencies below 20 microseconds (µs), others come closer to 100 µs or substantially exceed that figure.
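Latency and IOPS are linked through the number of commands a drive has in flight. One way to reason about this is Little's Law from queueing theory, which is not part of the NVMe specifications and is used here only as an approximation. The sketch below assumes steady-state operation and a constant per-command latency; both function names are hypothetical.

```python
def iops_ceiling(outstanding_commands: int, latency_us: float) -> float:
    """Little's Law: throughput = commands in flight / latency per command."""
    return outstanding_commands * 1_000_000 / latency_us


def commands_needed(target_iops: float, latency_us: float) -> float:
    """Commands that must be in flight to sustain target_iops."""
    return target_iops * latency_us / 1_000_000


if __name__ == "__main__":
    # A single outstanding command at 20 µs caps out at 50,000 IOPS.
    print(iops_ceiling(1, 20))
    # Sustaining 2.7 million IOPS at 20 µs needs about 54 commands in flight.
    print(commands_needed(2_700_000, 20))
```

Under these assumptions, a drive cannot reach millions of IOPS unless the host keeps dozens of commands outstanding at once, which is why queue depth matters as much as raw latency.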

That said, metrics that measure SSD speeds, such as throughput or transfer rate, can vary widely. These figures should be thought of as trends rather than absolutes. Factors such as workload type -- write vs. read or random vs. sequential -- can make a significant difference in performance. Even so, it's clear that NVMe significantly outperforms protocols such as SAS and SATA on every front, especially when used with PCIe 4.0 or 5.0.

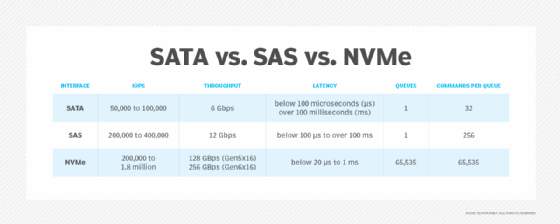

NVMe uses a more streamlined command set to process I/O requests, compared to SATA or SAS. NVMe requires fewer than half the number of CPU instructions and provides a more extensive and efficient system for queuing messages. For example, SATA and SAS each support only one I/O queue at a time. The SATA queue can contain up to 32 outstanding commands, and the SAS queue can contain up to 256. NVMe can support up to 65,535 queues and up to 65,535 commands per queue.
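To put the queuing limits side by side, the following sketch multiplies queue count by commands per queue for each protocol, using only the figures quoted above. The `QueueLimits` class and `LIMITS` table are illustrative names, not part of any specification or library.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class QueueLimits:
    queues: int
    commands_per_queue: int

    @property
    def max_outstanding(self) -> int:
        # Upper bound on commands that can be queued at the same time.
        return self.queues * self.commands_per_queue


# Figures taken from the article text.
LIMITS = {
    "SATA": QueueLimits(queues=1, commands_per_queue=32),
    "SAS": QueueLimits(queues=1, commands_per_queue=256),
    "NVMe": QueueLimits(queues=65_535, commands_per_queue=65_535),
}

if __name__ == "__main__":
    for protocol, limits in LIMITS.items():
        print(f"{protocol:>4}: {limits.max_outstanding:,} outstanding commands")
```

These are protocol ceilings rather than what any particular drive or driver actually configures; real systems typically use far fewer queues than the maximum.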

This queuing mechanism lets NVMe make better use of the parallel processing capabilities of an SSD, something the other protocols cannot do. In addition, NVMe uses direct memory access (DMA) over the PCIe bus to map I/O commands and responses directly to the host's shared memory. This reduces CPU overhead even further and improves NVMe speeds. As a result, each CPU instruction cycle can support higher IOPS, and latencies in the host software stack are reduced.
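The idea of commands living in host memory can be illustrated with a toy model. The sketch below is not a driver and does not reflect any real API; it assumes the general NVMe design of circular submission queues, where the host advances a tail index as it adds commands and the controller advances a head index as it fetches them. Giving each CPU core its own queue is a common arrangement, shown here as an assumption.

```python
from typing import List, Optional


class SubmissionQueue:
    """Toy circular queue standing in for a queue in host memory."""

    def __init__(self, depth: int) -> None:
        self.slots: List[Optional[str]] = [None] * depth
        self.depth = depth
        self.tail = 0  # next slot the host writes (producer index)
        self.head = 0  # next slot the controller reads (consumer index)

    def is_full(self) -> bool:
        # One slot stays empty so that full and empty are distinguishable.
        return (self.tail + 1) % self.depth == self.head

    def submit(self, command: str) -> bool:
        """Host side: place a command and advance the tail."""
        if self.is_full():
            return False
        self.slots[self.tail] = command
        self.tail = (self.tail + 1) % self.depth
        return True

    def fetch(self) -> Optional[str]:
        """Controller side: take the oldest command and advance the head."""
        if self.head == self.tail:
            return None  # queue is empty
        command = self.slots[self.head]
        self.head = (self.head + 1) % self.depth
        return command


# One queue per core, so cores can submit I/O without contending for a lock.
queues = {core: SubmissionQueue(depth=4) for core in range(2)}
queues[0].submit("read lba=0")
queues[0].submit("read lba=8")
queues[1].submit("write lba=64")
fetched = [(core, q.fetch()) for core, q in queues.items()]
```

Because each queue is independent, the controller can pull work from many queues in parallel, which is the property a single-queue protocol cannot offer.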

SAS and SATA speeds and performance

SAS and SATA are common protocols used to facilitate connectivity between host software and peripheral drives. The SATA protocol is based on the Advanced Technology Attachment standard, and the SAS protocol is based on the SCSI standard.

The SATA and SAS protocols were developed specifically for HDD devices. Although SAS is generally considered to be faster and more reliable, both protocols can easily handle HDD workloads. If a system runs into storage-related roadblocks, it is often because of the drive itself or other factors, not because of the protocol.

SSDs have changed this equation. Their higher IOPS can quickly overwhelm the older protocols, causing them to reach their limits before they can take full advantage of the drive's performance capabilities. As a result, users cannot fully realize an SSD's benefits.

Part of the challenge is that SATA and SAS each support only one I/O queue at a time and those queues can contain only a small number of outstanding commands compared to NVMe. SATA-based drives can attain throughputs of only 6 Gbps, with IOPS topping out at about 100,000. Latencies typically exceed 100 µs, although some newer SATA-based SSDs can achieve much lower ones.

SAS drives deliver somewhat better performance; they provide throughputs up to 12 Gbps and IOPS averaging between 200,000 and 400,000. However, lower IOPS are not uncommon. In some cases, SAS latencies have fallen below 100 µs, although this is not enough to offset the other limitations that come with SAS.
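Note that the SATA and SAS figures are in gigabits per second (Gbps), while the NVMe figures earlier are in gigabytes per second (GBps). The sketch below converts the line rates to usable megabytes per second so the numbers can be compared directly. It assumes 8b/10b line encoding for both 6 Gbps SATA and 12 Gbps SAS, which is a detail not stated in this article, and the function name is hypothetical.

```python
def usable_mbytes_per_sec(
    line_rate_gbps: float, payload_bits: int = 8, coded_bits: int = 10
) -> float:
    """Convert a line rate in Gbps to usable MB/s (assumption: 8b/10b encoding)."""
    payload_mbits = line_rate_gbps * 1000 * payload_bits / coded_bits
    return payload_mbits / 8


if __name__ == "__main__":
    sata = usable_mbytes_per_sec(6)    # 600 MB/s
    sas = usable_mbytes_per_sec(12)    # 1200 MB/s
    nvme = 14 * 1000                   # 14 GBps top-end NVMe figure, in MB/s
    print(f"SATA: {sata:.0f} MB/s, SAS: {sas:.0f} MB/s, NVMe: {nvme} MB/s")
    print(f"NVMe vs. SATA: about {nvme / sata:.0f}x")
```

On these assumptions, a 14 GBps NVMe drive moves roughly 23 times as much data per second as the SATA interface allows.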

NVMe 2.1

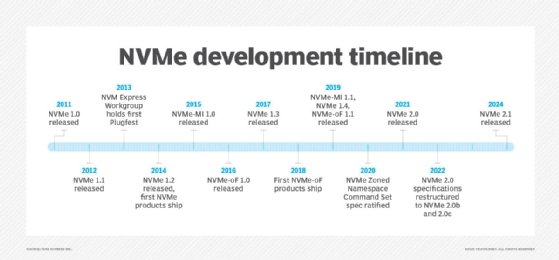

On Aug. 6, 2024, the NVM Express consortium released NVMe 2.1. The release includes a number of new and enhanced features, with the bulk of changes occurring within the NVMe Base Specification.

The Base Specification has been reorganized to better accommodate requirements that are common to PCIe and fabric implementations. The specification also defines more than 20 new features. For example, it enables a host to manage a controller's live migration from one NVM subsystem to another. It also adds support for the Power Loss Signaling function used by Mini Express form factors, and it enables multiple NVM subsystems to present the same namespace.

Other new features in the Base Specification include the following:

- Enabling a host to detect whether a network management interface is available for an NVM subsystem.

- Enabling a host to retrieve version information for I/O command set specifications.

- Adding support for the Subsystem Local Memory Command Set and Computational Programs Command Set.

- Adding per-port endpoint management support for high availability use cases.

- Supporting the consolidation of discovery information from multiple Direct Discovery controllers.

- Providing a method for consistently expressing security protocol configurations of NVMe entities.

The NVMe 2.1 Base Specification also enhances many features. For example, the specification enables a host to notify a controller about its transition to a low power state and to define the maximum data area in the Telemetry Host-Initiated log page. In addition, the Error Information log page now includes an Opcode field for the command associated with an entry.

Like the Base Specification, the NVM Command Set Specification also incorporates new features, such as Flexible Data Placement, Performance Characteristics Reporting and NVMe specification version reporting. The specification also improves Zoned Namespaces management and expands the controller's ability to communicate the preferred deallocation granularity.

NVMe 2.1 adds the Subsystem Local Memory Command Set Specification, which makes it possible to access memory in an NVM subsystem, and the Computational Programs Command Set Specification, which provides a mechanism for executing programs on a device.

The Management Interface Specification incorporated more changes than the transport specifications or the Boot Specification, although these were mostly in the form of feature enhancements, such as clarifying namespace change reporting and the NVM subsystem shutdown definition.

NVMe 2.0

The NVMe 2.0 specifications defined a number of new and enhanced features to support the emerging NVMe device environment, while maintaining compatibility with previous versions. The 2.0 specifications included feature and management updates that made it possible to use NVMe with rotational media, such as HDDs.

Released in 2021, NVMe 2.0 added two command set specifications:

- The Zoned Namespaces Command Set Specification defined a storage device interface that enables a host and SSD to collaborate on data placement. This helped align the data with the SSD's physical media, which improved performance and resource use.

- The Key Value Command Set Specification enabled applications to use key-value pairs to communicate directly with an SSD, while avoiding the overhead of translation tables between keys and logical blocks.

NVMe over Fabrics

In June 2016, the NVM Express consortium published the NVMe over Fabrics (NVMe-oF) specification, which extended NVMe's benefits across network fabrics, such as Ethernet, InfiniBand and Fibre Channel. The consortium estimated that 90% of the NVMe-oF specification was the same as the NVMe specification. The primary difference between the two was the way the protocols handled commands and responses between the host and the NVM subsystem.

In October 2019, NVM Express released the NVMe-oF 1.1 specification, which added support for TCP transport binding. NVMe over TCP made it possible to use NVMe-oF on standard Ethernet networks without making hardware or configuration changes.

The consortium no longer maintains the NVMe-oF standard as an independent project. The content was instead rolled into version 2.0 of the NVMe Base Specification, where it continues to be maintained as part of NVMe 2.1.

Purchasing considerations

One of the biggest SSD purchasing considerations is whether to select drives based on the SATA, SAS or NVMe protocol. Most enterprise data centers favor NVMe over the other two because of its superior performance.

If decision-makers opt for NVMe, they should consider four important factors specific to PCIe:

- PCIe version. Each new generation of the PCIe specification brings with it greater performance, so organizations should try to opt for SSDs that adhere to the most recent version.

- PCIe lane count. Most NVMe SSDs are limited to four PCIe lanes -- but not all. The more lanes that a drive uses, the better the throughput.

- SSD form factor. PCIe SSDs come in multiple form factors, including M.2, U.2, add-in cards and Enterprise and Data Center Standard Form Factor (EDSFF). Decision-makers should consider factors such as budget, host location of the drives and amount of available space. EDSFF is an emerging technology that offers several benefits over other form factors in terms of performance, capacity and scalability.

- Storage environment. The hardware that houses the SSDs should support the same PCIe version as the drives to realize the greatest benefits. For example, if users run a PCIe 5.0 SSD on a PCIe 4.0 server, the drive runs at PCIe 4.0 speeds, not PCIe 5.0.
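The effect of a mismatch between drive and host can be sketched as a simple minimum over what both sides support. The function names below are hypothetical, the bandwidth figure is a raw number that ignores encoding overhead, and applying the same minimum rule to lane count is an assumption that goes beyond the version example given above.

```python
from typing import Tuple

# Per-lane transfer rates in GT/s by PCIe generation.
PCIE_GTPS = {3: 8, 4: 16, 5: 32, 6: 64}


def negotiated_link(
    drive_gen: int, drive_lanes: int, slot_gen: int, slot_lanes: int
) -> Tuple[int, int]:
    """The link runs at the highest generation and width both sides support."""
    return min(drive_gen, slot_gen), min(drive_lanes, slot_lanes)


def raw_gbytes_per_sec(gen: int, lanes: int) -> float:
    """Raw one-direction bandwidth in GB/s (assumption: no encoding overhead)."""
    return PCIE_GTPS[gen] * lanes / 8


if __name__ == "__main__":
    # A PCIe 5.0 x4 SSD installed in a PCIe 4.0 x4 server slot.
    gen, lanes = negotiated_link(drive_gen=5, drive_lanes=4, slot_gen=4, slot_lanes=4)
    print(f"Link: PCIe {gen}.0 x{lanes}, {raw_gbytes_per_sec(gen, lanes):.0f} GB/s raw")
    print(f"Drive's native link: {raw_gbytes_per_sec(5, 4):.0f} GB/s raw")
```

In this example, the drive's raw link bandwidth is halved, from 16 GBps to 8 GBps per direction, simply because of the slot it is plugged into.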

Robert Sheldon is a freelance technology writer. He has written numerous books, articles and training materials on a wide range of topics, including big data, generative AI, 5D memory crystals, the dark web and the 11th dimension.