
Elevate software-defined technology's role in the data center

SDS and SDN aren't a cure-all for infrastructure management ills. Proper implementation requires research and a suitable hardware setup. Here's a look at what this tech can do.

The evolving scope of software-defined technology seeks to abstract data center resources from the underlying hardware components. It's a powerful mix of virtualization, automation and orchestration that identifies and organizes disparate resources into pools or tiers, so IT administrators can manage and provision hardware to meet all workload requirements.

Properly implemented software-defined tools can accelerate vital data center tasks with greater consistency and fewer errors.

But more software isn't the perfect answer to every IT problem. Having the right parts in place, along with well-conceived rules, policies and processes, can make or break a software-defined initiative. Each technology has different needs to make it work in the data center, and organizations must gauge if it's the right time for implementation.

Software-defined storage accelerates performance

Traditionally, admins had to manually provision logical volumes, each identified by a logical unit number (LUN), associate those LUNs with applications, and then actively monitor the performance and capacity of those LUNs over time.

Software-defined storage (SDS) uses software to abstract disk storage resources and storage-related services from the underlying disk hardware, such as hard disk drive and solid-state drive components. The abstraction process offers performance-based pooling or tiering, which enables admins to aggregate storage capacity into common logical pools -- or tiers -- and then provision them to applications.
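As a rough illustration of performance-based pooling, the sketch below groups devices into tiers by media type and totals the capacity of each tier. The `Device` fields, the device names and the tier mapping are assumptions made up for this example; they do not come from any particular SDS product.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Device:
    name: str         # hypothetical label: where the disk physically lives
    media: str        # "ssd" or "hdd"
    capacity_gb: int


def build_pools(devices):
    """Group devices into tiers by media type and sum each tier's capacity."""
    tier_for_media = {"ssd": "performance", "hdd": "capacity"}  # assumed mapping
    pools = defaultdict(int)
    for dev in devices:
        pools[tier_for_media[dev.media]] += dev.capacity_gb
    return dict(pools)


devices = [
    Device("array-a/disk0", "ssd", 960),
    Device("server-7/disk2", "ssd", 480),
    Device("array-b/disk5", "hdd", 8000),
]
pools = build_pools(devices)
```

Once the devices are pooled, the consumer of `pools` sees only tiers and capacities; the array or server that holds each disk no longer matters to the provisioning step.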

Admins no longer need to worry about "disks" or in what "box" those disks are installed. The benefit of SDS is tremendous flexibility to detect and organize available storage across the data center.

SDS tools can bring storage services to the entire storage infrastructure instead of just select servers or storage arrays. This software-defined technology includes storage conservation such as thin provisioning and data deduplication, as well as replication, snapshots and backup.
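To make one of these services concrete, here is a minimal sketch of block-level data deduplication, assuming fixed-size blocks keyed by a SHA-256 digest. The `DedupStore` class and the tiny 4-byte block size are hypothetical and chosen only to keep the example readable; production systems differ in block size, hashing and metadata handling.

```python
import hashlib


class DedupStore:
    """Toy block store that keeps one physical copy of each unique block."""

    def __init__(self):
        self.blocks = {}   # digest -> block bytes, stored once
        self.volumes = {}  # volume name -> ordered list of digests

    def write(self, volume, data, block_size=4):
        digests = []
        for i in range(0, len(data), block_size):
            block = data[i:i + block_size]
            digest = hashlib.sha256(block).hexdigest()
            self.blocks.setdefault(digest, block)  # skip blocks already stored
            digests.append(digest)
        self.volumes[volume] = digests

    def read(self, volume):
        # Reassemble the volume from its block references.
        return b"".join(self.blocks[d] for d in self.volumes[volume])


store = DedupStore()
store.write("vol1", b"AAAABBBBAAAA")
store.write("vol2", b"BBBBCCCC")
logical_blocks = sum(len(d) for d in store.volumes.values())
physical_blocks = len(store.blocks)
```

In this sketch the two volumes reference five logical blocks but only three are physically stored, because the repeated blocks are shared across volumes.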

Properly implemented SDS can use automation and orchestration to accelerate the provisioning of storage capacity and services that are best-suited for the deployed applications. For example, an admin can call for a high-performance LUN for a database, enabling the SDS platform to deliver a large, top-tier LUN with data deduplication and replication services already associated.
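The database example above might look something like the following sketch, in which a policy catalog maps a workload type to a tier and a set of services. The `POLICIES` table, the `provision` function and the capacity figures are all hypothetical; real SDS platforms expose this through their own APIs and policy engines.

```python
from dataclasses import dataclass

# Hypothetical policy catalog: each workload class maps to a tier and services.
POLICIES = {
    "database": {"tier": "performance", "services": ("deduplication", "replication")},
    "archive": {"tier": "capacity", "services": ("deduplication",)},
}


@dataclass
class Lun:
    name: str
    size_gb: int
    tier: str
    services: tuple


def provision(name, size_gb, workload, free_gb):
    """Carve a LUN from the tier the policy names, or fail if the pool is short."""
    policy = POLICIES[workload]
    tier = policy["tier"]
    if free_gb[tier] < size_gb:
        raise ValueError(f"not enough free capacity in tier {tier!r}")
    free_gb[tier] -= size_gb  # account for the capacity just handed out
    return Lun(name, size_gb, tier, policy["services"])


free_gb = {"performance": 1440, "capacity": 8000}
lun = provision("orders-db", 500, "database", free_gb)
```

The admin states only the workload type and size; the tier and the attached services follow from the policy rather than from manual per-LUN configuration.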

SDS is one of the oldest software-defined technologies and is generally the easiest to adopt. SDS is a common element of hyper-converged infrastructure offerings, though admins can implement SDS with many proven proprietary tools. These include Dell EMC's UnityVSA from its Unity storage array and IsilonSD Edge from the Isilon scale-out network-attached storage system.

Organizations can also look at open source software-defined technology such as Ceph, FreeNAS, Gluster and OpenStack Swift. Software tools might impose some limitations on disk and storage subsystem compatibility, so admins should research and test SDS to ensure interoperability with existing storage hardware and services.

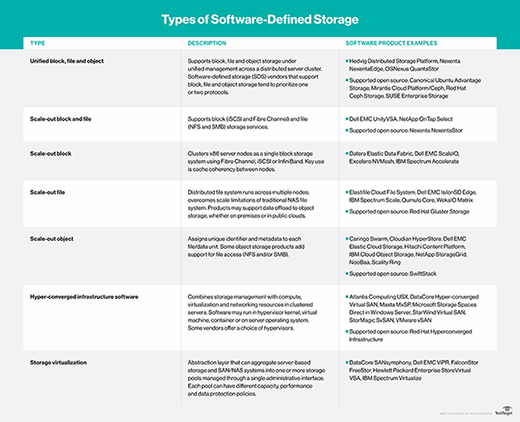

The biggest challenge with SDS is the large number of possible categorizations and available setups, including support for scale-out block storage, scale-out file storage, scale-out object storage, unified storage and simple storage virtualization.

The type of storage needed and the storage services required greatly affect the SDS product choice, and that choice can lead to vendor lock-in. Plus, automation requires some maintenance: the storage policies and workflows that drive automation and orchestration might need more regular oversight. Small organizations, businesses with static storage needs, or businesses with diverse storage needs might not find SDS beneficial.

Software-defined networking technology centralizes data control

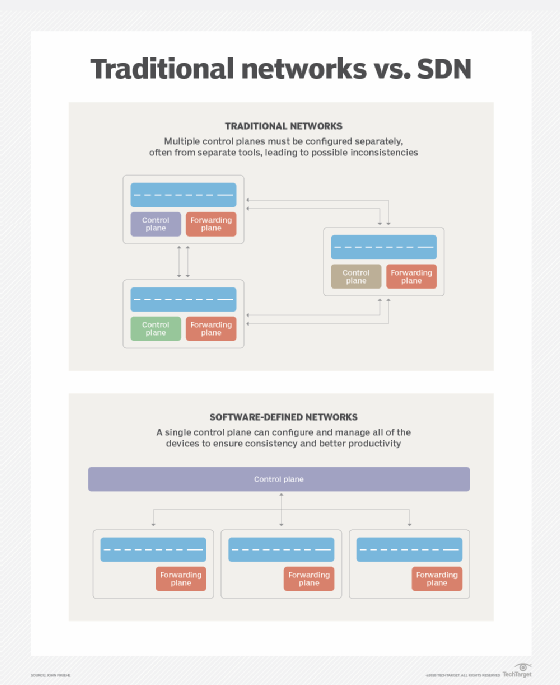

Often an admin must manually configure switches, routers, gateways and firewalls to establish, segment and secure suitable pathways for network traffic. If the network requires any changes, such as creating a new segment or adding more bandwidth to a segment, the admin must manually alter the network configuration, often risking workload disruption and security flaws in the process.

Software-defined networking (SDN) seeks to overcome the decentralized and complex nature of networks by using software tools and intelligent network devices to streamline control over the network and let admins exercise that control programmatically.

This software-defined technology separates the flow of data from the data flow controls -- a feat impossible with traditional switch or router architectures -- enabling an admin to organize, manage and monitor the network without needing to touch actual network devices.

SDN works on three layers: infrastructure, control and application. The infrastructure layer includes all of the network hardware, such as switches, routers and cables.

The control layer is the software that provides the SDN intelligence. The control layer is usually installed on a server, and it manages the flow of traffic across the entire network.

The application layer provides network services, such as load balancers, intrusion detection and prevention, and firewalls. Normally, these capabilities are deployed as discrete devices. With SDN, applications provide functionality by running on servers that the control layer directs. The control layer communicates with the other layers through common APIs and protocols, such as OpenFlow and Open Network Environment.
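The separation between the control and infrastructure layers can be sketched in a few lines. In this simplified model, a central `Controller` programs the flow table of every `Switch`, and each switch forwards traffic using only the rules it has been given. The class names, addresses and port numbers are hypothetical, and the model ignores everything a real protocol such as OpenFlow adds, including match fields, priorities and timeouts.

```python
class Switch:
    """Data plane: forwards using only the rules it has been given."""

    def __init__(self, name):
        self.name = name
        self.flow_table = {}  # destination address -> output port

    def forward(self, dst):
        # A table miss returns None; a real switch would typically
        # hand the decision back to the controller.
        return self.flow_table.get(dst)


class Controller:
    """Control plane: holds the network-wide view and programs each switch."""

    def __init__(self):
        self.switches = {}

    def register(self, switch):
        self.switches[switch.name] = switch

    def install_route(self, dst, ports_by_switch):
        # Push one rule per switch along the chosen path.
        for name, port in ports_by_switch.items():
            self.switches[name].flow_table[dst] = port


controller = Controller()
edge, core = Switch("edge-1"), Switch("core-1")
controller.register(edge)
controller.register(core)
controller.install_route("10.0.2.15", {"edge-1": 3, "core-1": 7})
```

A path change then becomes a single call on the controller instead of a separate manual edit on each device.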

SDN builds on this kind of functional data and control separation to add virtualization and automation. The addition of virtualization enables admins to abstract network traffic from the physical network, and supports advanced capabilities such as microsegmentation, which further controls traffic flow. Automation and orchestration enable network control and management tasks to take place faster with fewer errors and greater autonomy.
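Microsegmentation is often described as a default-deny policy applied between small groups of workloads. The sketch below assumes that model: each workload belongs to a segment, and a flow is permitted only if an explicit rule lists it. The workload names, segments and port numbers are invented for illustration.

```python
# Hypothetical segment assignments for individual workloads.
SEGMENT_OF = {
    "web-1": "web",
    "app-1": "app",
    "db-1": "db",
}

# Allowed (source segment, destination segment, port) flows; all else is denied.
ALLOWED = {
    ("web", "app", 8080),
    ("app", "db", 5432),
}


def is_allowed(src, dst, port):
    """Default-deny check: permit only flows that a rule explicitly lists."""
    return (SEGMENT_OF[src], SEGMENT_OF[dst], port) in ALLOWED


web_to_app = is_allowed("web-1", "app-1", 8080)
web_to_db = is_allowed("web-1", "db-1", 5432)
```

Here the web tier can reach the application tier, but it cannot reach the database directly, even though both sit on the same physical network.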

However, SDN requires substantial changes to how admins architect and manage enterprise networks. SDN provides granular control and security that is best-suited to extremely busy and dynamic networks often found in cloud and multi-tenant environments.

Virtualization and application-based network services have simplified networks and vastly enhanced the use of generic servers and basic switch devices, instead of vendor-specific or specialized hardware.

The work involved in architecting, programming and operating the SDN is justified only when a business needs rapid change and high scalability. Everyday business networks with only occasional changes might choose to forgo software-defined technology and use a more static infrastructure.

Editor's note: This article is the first part in a series on software-based data center technology.