Managing unstructured data to boost performance, lower costs

Is unmanaged, unstructured data clogging up your primary storage? Get control of this costly, performance-sapping situation and start managing unstructured data cost-effectively.

Unstructured data is the fastest-growing data around. While managing unstructured data can be complex, not managing it comes with a heavy price tag.

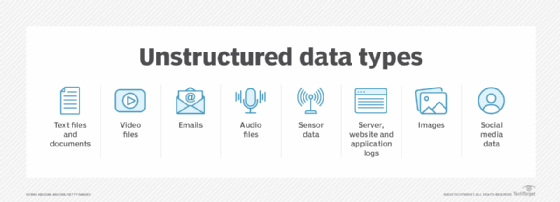

Unstructured data growth is no longer driven mainly by the usual suspects -- documents, spreadsheets, presentations, photos, videos and audio. Today, much of that growth comes from sources such as logs, IoT devices, social media, CCTV, sensors, metadata and even search engine queries, fueled in part by the proliferation of IoT devices and the rise of AI-generated data.

The challenge IT leaders face is managing unstructured data cost-effectively. Unstructured data isn't easily classified or indexed, nor is it easily stored in traditional relational databases. It also typically doesn't originate in databases equipped to analyze it, such as JSON document, key-value and XML databases. That means the data must be extracted, transformed and loaded (ETL) into a database where it can be queried and analyzed. It's a labor-intensive, time-consuming and error-prone process that typically requires custom scripts or an outside service provider.
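To make the ETL steps concrete, here is a minimal Python sketch that extracts raw log lines, transforms them into rows and loads them into an in-memory SQLite table. The log format, the `LOG_PATTERN` regular expression and the `logs` table are hypothetical assumptions chosen for illustration; real pipelines handle many formats and far larger volumes.

```python
import re
import sqlite3

# Hypothetical log format: "<ISO timestamp> <LEVEL> <message>"
LOG_PATTERN = re.compile(r"^(\S+)\s+(DEBUG|INFO|WARN|ERROR)\s+(.*)$")

def extract(lines):
    """Extract: yield raw, non-empty lines from the source."""
    for line in lines:
        line = line.strip()
        if line:
            yield line

def transform(lines):
    """Transform: parse each line into a (timestamp, level, message) row.
    Lines that don't match the expected format are skipped."""
    for line in lines:
        match = LOG_PATTERN.match(line)
        if match:
            yield match.groups()

def load(rows, conn):
    """Load: insert the parsed rows into a queryable table."""
    conn.execute("CREATE TABLE IF NOT EXISTS logs (ts TEXT, level TEXT, message TEXT)")
    conn.executemany("INSERT INTO logs VALUES (?, ?, ?)", rows)
    conn.commit()

if __name__ == "__main__":
    raw = [
        "2024-01-05T10:00:00 INFO backup started",
        "2024-01-05T10:05:00 ERROR volume /data nearly full",
        "corrupted line without a level",
    ]
    conn = sqlite3.connect(":memory:")
    load(transform(extract(raw)), conn)
    # [('ERROR', 1), ('INFO', 1)]
    print(conn.execute(
        "SELECT level, COUNT(*) FROM logs GROUP BY level ORDER BY level"
    ).fetchall())
```

The malformed third line is silently dropped in `transform`, a small illustration of why hand-built ETL scripts are considered error-prone.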

Moving data around can also create multiple copies of it, meaning more storage, rack space, switch ports, software licenses, power, cooling, cables, transceivers, allocated overhead and administrators. That doesn't make financial sense. There are, however, ways to choose the right unstructured data management services and keep costs down.

Managing unstructured data -- or not

The most common approach to unstructured data management is simply to not manage it at all. Many IT shops opt to add capacity to their primary storage systems rather than classify, manage, analyze or even archive unstructured data. They might use deduplication or other data reduction technologies to shrink the data a bit, but it still tends to be large and unwieldy. More problematic, however, is that simply adding capacity as a way of dealing with rampant data growth can be financially unsustainable.
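Deduplication shrinks data by storing identical content only once. The sketch below is an illustration under simplifying assumptions, not how any particular storage system works: it finds whole-file duplicates by hashing contents with SHA-256 and reports how many bytes could be reclaimed. Storage arrays usually deduplicate at the block or chunk level instead, but the principle is the same: only redundant copies go away, and the unique data that remains can still be large.

```python
import hashlib
from collections import defaultdict
from pathlib import Path

def file_digest(path, chunk_size=1 << 20):
    """Hash a file's contents in 1 MiB chunks so large files don't fill memory."""
    h = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            h.update(chunk)
    return h.hexdigest()

def find_duplicates(root):
    """Group files under root by content hash; keep only groups with copies."""
    groups = defaultdict(list)
    for path in Path(root).rglob("*"):
        if path.is_file():
            groups[file_digest(path)].append(path)
    return {digest: paths for digest, paths in groups.items() if len(paths) > 1}

def reclaimable_bytes(duplicates):
    """Bytes freed if each duplicate group were reduced to a single copy."""
    return sum(
        paths[0].stat().st_size * (len(paths) - 1)
        for paths in duplicates.values()
    )
```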

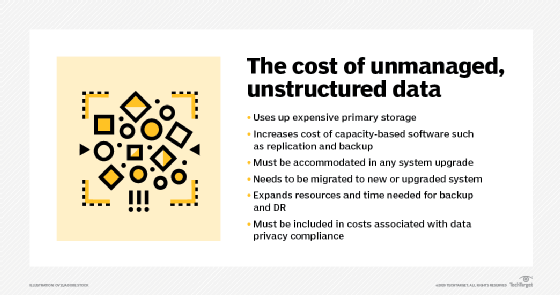

Data consumes capacity -- often, primary storage capacity. And once consumed, that capacity isn't available for other data. Primary storage is the most expensive storage tier, usually built on flash SSDs. Storage system software and many other types of software, such as backup and replication, are often licensed or subscribed to based on capacity. In other words, as a data set grows, the cost of keeping that data increases, even if the business isn't using it.

Storage systems are typically refreshed every three to five years. When a system is replaced, the new system must have capacity for all existing unstructured data, as well as any data that will be stored over the new system's life, adding more infrastructure and costs. In addition, the data must be migrated from the old storage system to the new one. That takes time, effort and either migration software or custom scripts.
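Sizing a replacement system is a compound-growth calculation. The function below is a minimal sketch assuming a constant annual growth rate and a fixed headroom margin; both are hypothetical planning inputs, not recommendations.

```python
def capacity_at_refresh(current_tb, annual_growth, lifetime_years, headroom=0.2):
    """Estimate the usable capacity (TB) a replacement system needs.

    Assumes data grows at a constant compound annual rate over the new
    system's life, then adds headroom so the system isn't full at end of life.
    """
    end_of_life_tb = current_tb * (1 + annual_growth) ** lifetime_years
    return end_of_life_tb * (1 + headroom)
```

For example, `capacity_at_refresh(500, 0.25, 5)` estimates about 1,831 TB: 500 TB growing 25% a year for five years reaches roughly 1,526 TB, plus 20% headroom.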

As an organization accumulates more unstructured data, primary storage costs aren't the only ones that increase. All of that unstructured data must also be backed up, which consumes secondary storage capacity and drives up backup costs.

Recovering unstructured data after a system failure can also be costly. The time it takes to restore cool and cold data can delay getting systems back up and running, adding even more cost to this outdated approach.
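Restore time scales roughly with the amount of data and the sustained throughput of the recovery path. This back-of-the-envelope sketch assumes decimal units and constant throughput, and it ignores tape mount times, cloud retrieval delays and verification, so real restores usually take longer.

```python
def restore_hours(data_tb, throughput_gb_per_s):
    """Estimate wall-clock hours to restore data_tb terabytes at a sustained
    throughput, using decimal units (1 TB = 1,000 GB)."""
    seconds = data_tb * 1000 / throughput_gb_per_s
    return seconds / 3600
```

At a sustained 1 GB/s, restoring 100 TB takes about 27.8 hours, while restoring only a 20 TB active subset takes about 5.6 hours.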

Keeping unstructured data on primary storage also raises potential issues because of global privacy laws and regulations, such as the California Consumer Privacy Act and the EU's GDPR. Compliance isn't optional, and failing to comply carries significant financial consequences. That means IT organizations must know whether the unstructured data they keep contains personally identifiable information (PII) and, if so, what that PII is. The more unstructured data an organization has on hand, the more difficult it is to know with any degree of certainty whether that data contains PII.
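A first-pass PII scan often starts with pattern matching over file contents. The sketch below uses a few illustrative regular expressions for email addresses, U.S. Social Security numbers and U.S. phone numbers; the patterns and the `PII_PATTERNS` name are assumptions, and they will both miss PII and produce false positives. Dedicated classification tools use much broader detection.

```python
import re

# Illustrative patterns only; real PII discovery covers names, addresses,
# national IDs, payment data and more, often with locale-aware rules.
PII_PATTERNS = {
    "email": re.compile(r"\b[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}\b"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "us_phone": re.compile(r"\b\d{3}[-.]\d{3}[-.]\d{4}\b"),
}

def scan_text(text):
    """Return a dict mapping PII type to the number of matches in text."""
    counts = {}
    for name, pattern in PII_PATTERNS.items():
        hits = pattern.findall(text)
        if hits:
            counts[name] = len(hits)
    return counts

def scan_files(paths):
    """Scan text files and report only those with at least one match."""
    findings = {}
    for path in paths:
        with open(path, encoding="utf-8", errors="ignore") as f:
            counts = scan_text(f.read())
        if counts:
            findings[str(path)] = counts
    return findings
```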

When an organization faces litigation, its data is almost always subject to subpoena. Legal counsel will typically use e-discovery software to comb through an organization's data, looking for any evidence of wrongdoing. Organizations often use data lifecycle management policies to automatically purge any data not legally required to be retained and no longer useful to the business. Shrinking the volume of unstructured data can reduce the cost of e-discovery and decrease the chances that the discovery process will reveal something negative about the company.
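A data lifecycle management policy ultimately comes down to a rule that decides which files may be purged. This minimal sketch assumes a hypothetical `FileRecord` with a retention period and a legal-hold flag, and it uses time since last modification as a stand-in for "no longer useful to the business." Measuring both periods from the last modification time is a simplification, and the two-year default is arbitrary.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

@dataclass
class FileRecord:
    """Hypothetical per-file record; field names are illustrative."""
    path: str
    last_modified: datetime
    retention_days: int        # minimum period the data must be kept
    legal_hold: bool = False   # set when data must be preserved for litigation

def purge_candidates(records, now, unused_after_days=730):
    """Return paths eligible for deletion: not on legal hold, past their
    retention period and unmodified for at least unused_after_days."""
    eligible = []
    for r in records:
        if r.legal_hold:
            continue  # data under legal hold is never purged
        keep_days = max(r.retention_days, unused_after_days)
        if now - r.last_modified >= timedelta(days=keep_days):
            eligible.append(r.path)
    return eligible
```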

Unstructured data management tools

The key to managing unstructured data to optimize performance and lower costs is capturing, harvesting, parsing and analyzing metadata. In some cases, such as with data containing PII, that means analyzing the content itself. Several companies have products and services aimed at managing unstructured data and its costs. These products include Aparavi, open source iRODS, Komprise, Spectra Logic StorCycle, Starfish Storage and StrongLink. Other unstructured data management and storage tools include Snowflake and Google Cloud Storage.
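Harvesting file system metadata is usually the first step, because it is cheap: no file contents need to be read. This sketch walks a directory tree with Python's standard library and totals capacity by file extension. The record fields are assumptions for illustration, and access times may be unreliable on file systems mounted with `noatime` or `relatime`.

```python
import os
from datetime import datetime, timezone

def harvest_metadata(root):
    """Walk a directory tree and collect basic file system metadata
    without reading any file contents."""
    records = []
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            full = os.path.join(dirpath, name)
            st = os.stat(full, follow_symlinks=False)
            records.append({
                "path": full,
                "extension": os.path.splitext(name)[1].lower(),
                "size_bytes": st.st_size,
                "modified": datetime.fromtimestamp(st.st_mtime, tz=timezone.utc),
                "accessed": datetime.fromtimestamp(st.st_atime, tz=timezone.utc),
            })
    return records

def summarize_by_extension(records):
    """Total bytes per file extension, largest first."""
    totals = {}
    for r in records:
        totals[r["extension"]] = totals.get(r["extension"], 0) + r["size_bytes"]
    return sorted(totals.items(), key=lambda kv: kv[1], reverse=True)
```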

When organizations manage unstructured data correctly, the way that data is stored changes for the better. Data is moved or archived from costly primary storage to more cost-effective secondary, cloud or tape storage, or it is deleted outright. The data management software determines where to move it based on the characteristics and performance requirements of the unstructured data. Organizations maintain access to moved data through client software, symbolic links, a global namespace or a combination of those.
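The move-and-link pattern can be sketched in a few lines. This example assumes a POSIX file system (creating symlinks on Windows may require extra privileges), hypothetical `primary` and `cold` directories, and a single policy rule based on modification age. Commercial tools evaluate many more attributes and often use their own stubs or a global namespace rather than plain symbolic links.

```python
import shutil
import time
from pathlib import Path

def tier_cold_files(primary, cold, min_idle_days=180, now=None):
    """Move files not modified for min_idle_days from primary to cold storage,
    leaving a symbolic link at the original path so they remain accessible."""
    now = time.time() if now is None else now
    cutoff = now - min_idle_days * 86400
    primary, cold = Path(primary), Path(cold)
    moved = []
    # Materialize the listing first so moving files doesn't disturb the walk.
    for path in list(primary.rglob("*")):
        if path.is_symlink() or not path.is_file():
            continue  # skip directories and files that were already tiered
        if path.stat().st_mtime < cutoff:
            target = cold / path.relative_to(primary)
            target.parent.mkdir(parents=True, exist_ok=True)
            shutil.move(str(path), str(target))
            path.symlink_to(target)  # original path now points at cold copy
            moved.append(target)
    return moved
```

Reads through the link are served from the cold tier, so nothing is copied back to primary storage unless a separate policy decides to do so.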

These intelligent and autonomous data management systems have different ways of accessing and classifying unstructured data. Some mount the file or object storage with administrative privileges (iRODS, Komprise, Spectra Logic StorCycle, Starfish Storage and StrongLink); others run on the compute systems themselves (Aparavi). Either way, they capture metadata, classify content, and copy, move, archive and delete data. This reduces the capacity consumed on primary storage, as well as the capacity consumed by backup and replicated copies on secondary storage.

How to pick an unstructured data management system

Begin by looking for a data management system that will enable access to data that has been moved to cold storage without the need to rehydrate it. While cold data might occasionally need to be moved back to primary storage, rehydration should be policy-driven rather than something that happens automatically any time users access data.
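One way to make rehydration policy-driven is to recall a file only after repeated access within a time window, and otherwise serve reads directly from cold storage. The `RehydrationPolicy` class, its threshold and its window are hypothetical and not taken from any product.

```python
from collections import defaultdict, deque

class RehydrationPolicy:
    """Decide whether a cold file should be recalled to primary storage.

    A file is rehydrated only if it is accessed at least `threshold` times
    within `window_s` seconds; all other reads stay on cold storage.
    """

    def __init__(self, threshold=3, window_s=7 * 86400):
        self.threshold = threshold
        self.window_s = window_s
        self._accesses = defaultdict(deque)  # path -> recent access times

    def record_access(self, path, ts):
        """Log an access at time ts; return True if the file should be rehydrated."""
        hits = self._accesses[path]
        hits.append(ts)
        # Drop accesses that have fallen outside the sliding window.
        while hits and ts - hits[0] > self.window_s:
            hits.popleft()
        return len(hits) >= self.threshold
```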

When picking the best intelligent or autonomous unstructured data management system, answer the following seven questions about your requirements and the products you're considering:

- How much data will be moved or migrated upfront and over time?

- Do you require both metadata and data indexing?

- Are you doing anything to index data now, and if so, what is it about your current process that is not working as well as it should?

- What levels of scalability and performance are required? Will you need a system that scales into exabytes or will one that goes into low petabytes be sufficient?

- Are you only interested in indexing the data, or do you also want to move less frequently accessed data into one or more cold storage tiers?

- How automated, simple and intuitive do you want the management system to be?

- How is each system licensed or subscribed to?

Done right, the total cost of managing unstructured data should be less than the previous approach of not managing it at all.

Brien Posey is a 22-time Microsoft MVP and a commercial astronaut candidate. In his over 30 years in IT, he has served as a lead network engineer for the U.S. Department of Defense and as a network administrator for some of the largest insurance companies in America.

Marc Staimer is the founder, president and CDS of Dragon Slayer Consulting in Beaverton, Ore. The consulting practice focuses on strategic planning, product development and market development.