
Scaling microservices takes conceptual skills and good tooling

Independent scalability is a major benefit of microservices, but it is also complex and challenging to implement. Scale microservices with a focus on users' priorities.

IT teams can face several challenges when scaling microservices-based applications. This article details the obstacles to microservices scaling and maps out the options that an IT organization has to overcome them.

With a monolithic application, IT teams can carry out straightforward, well-established tactics to scale both vertically and horizontally. For example, a load balancer can allocate traffic across various resources as needed. If there is too much of a load on the application, teams can even spin up new instances of the application to create more room for workloads.

A monolithic application is deployed as a single unit behind the load balancer. All you need to do is add more resources as transaction volume increases. However, the components of this monolith often don't scale independently, so you might need to deploy more resources for the entire application even if you only experience demand for one individual component.

On the other hand, a microservices-based application comprises a collection of loosely coupled services built to run on a heterogeneous mix of platforms. Because of the distributed nature of a microservices-based architecture, IT teams must approach scaling differently than they would with a monolithic application. They must devise scalability strategies that protect microservices-based applications from unexpected outages and help maintain fault tolerance.

Scaling concepts

In addition to understanding the architecture of an application, teams must have a grasp of scalability and the reasons for it.

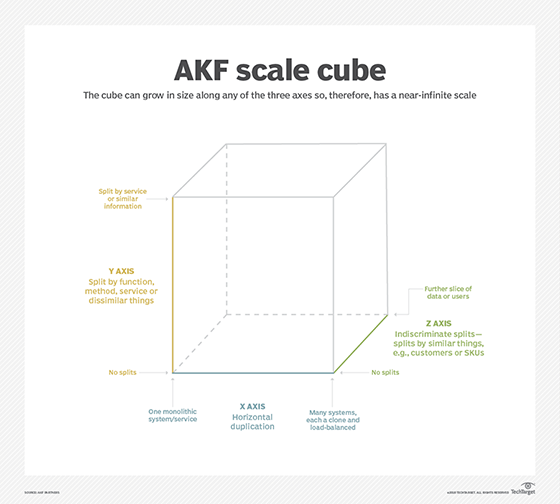

The scale cube, established in The Art of Scalability by Martin L. Abbott and Michael T. Fisher of AKF Partners, is a three-dimensional scaling model that illustrates three approaches to application scaling. The scale cube's X, Y and Z axes represent the three different scaling approaches. The traditional monolithic scaling method that replicates application copies falls along the X axis. Microservices-based application scaling or types of scaling that break monolithic code fall along the Y axis. Lastly, Z-axis scaling involves the strategy of splitting servers based on geography or customer base in order to strengthen fault isolation.
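The three axes can be combined in a single request path. The following is a minimal sketch, not a production router: the route prefixes, service names, shard names and replica names are all hypothetical, and hashing the customer ID is only one possible way to partition by customer base.

```python
import hashlib
from itertools import cycle

# Y axis: the application is split by function into separate services.
# (Hypothetical path prefixes and service names.)
ROUTES = {"/orders": "orders", "/catalog": "catalog"}

# Z axis: each service is partitioned by customer base (hypothetical shards).
SHARDS = ["shard-a", "shard-b"]

# X axis: every shard of every service runs identical replicas,
# and requests are spread across them round-robin.
REPLICAS = {
    (service, shard): cycle([f"{service}-{shard}-{n}" for n in (1, 2)])
    for service in ROUTES.values()
    for shard in SHARDS
}


def pick_shard(customer_id: str) -> str:
    """Map a customer to a shard with a stable hash of the customer ID."""
    digest = hashlib.sha256(customer_id.encode("utf-8")).digest()
    return SHARDS[int.from_bytes(digest[:8], "big") % len(SHARDS)]


def route(path: str, customer_id: str) -> str:
    """Return the replica that should serve this request."""
    service = next(
        (name for prefix, name in ROUTES.items() if path.startswith(prefix)),
        None,
    )
    if service is None:
        raise ValueError(f"no service registered for path {path!r}")
    shard = pick_shard(customer_id)
    return next(REPLICAS[(service, shard)])
```

Two consecutive calls for the same customer and service land on the same shard but on different replicas, which is the X axis at work inside a Z-axis partition.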

How a microservice scales is fundamental to running it successfully. There are two metrics to be aware of: qualitative growth scale and quantitative growth scale. The qualitative growth scale refers to where a service integrates into the microservice ecosystem, and the quantitative growth scale is how you measure the traffic that the microservice can handle.

The qualitative growth scale relates the microservice's scalability to a high-level business metric. As an example, you can have a microservices-based application that scales with the number of users. Meanwhile, the quantitative growth scale relies on key data metrics, like requests per second or transactions per second, to determine when to scale a microservice.
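One way to connect the two scales is to translate the business metric into a traffic figure and then into an instance count. The sketch below assumes each active user generates a roughly constant request rate and that each instance has a known, measured capacity; the function names, the 20% headroom default and the example numbers are illustrative assumptions, not recommendations.

```python
import math


def expected_rps(active_users: int, rps_per_user: float) -> float:
    """Qualitative to quantitative: turn a user count into requests per second."""
    return active_users * rps_per_user


def replicas_needed(
    observed_rps: float,
    rps_per_instance: float,
    headroom: float = 0.2,   # assumed safety margin above observed traffic
    min_replicas: int = 1,
) -> int:
    """Return how many instances are needed to serve the observed traffic."""
    if rps_per_instance <= 0:
        raise ValueError("rps_per_instance must be positive")
    required = observed_rps * (1 + headroom) / rps_per_instance
    return max(min_replicas, math.ceil(required))


# Example: 5,000 active users at an assumed 0.05 requests per second each.
traffic = expected_rps(5_000, 0.05)
instances = replicas_needed(traffic, rps_per_instance=100, headroom=0.0)
```

The per-instance capacity has to come from load testing the specific service; it is the number this calculation is most sensitive to.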

Ways to monitor and optimize performance

End-user performance is the most important aspect of a microservices-based application. Users notice a slow or unintuitive application immediately. Even if a team uses the best technologies and tools to build a microservices-based application, that IT strategy doesn't pay off if there is no improvement in user experience.

Teams should prioritize application performance and the end user's perspective to efficiently address microservices scaling issues. To prevent performance problems in a microservices-based application, take advantage of an application delivery controller (ADC). ADCs provide Layer 7 load balancers that adeptly facilitate scaling automation. Choose ADC systems that are designed to handle microservices applications. Use them to monitor and optimize the performance of services in real time.

To scale a microservices-based application effectively, teams must also track performance and efficiency goals. An effective monitoring system alongside a scaling strategy can help maintain optimal performance for a microservices-based application.
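As an illustration of pairing a performance goal with a scaling decision, the sketch below checks a window of latency samples against a target. It assumes the goal is expressed as a 95th-percentile latency and uses the simple nearest-rank method; the function names and the sample values are hypothetical.

```python
import math


def percentile(samples: list[float], pct: float) -> float:
    """Nearest-rank percentile of a non-empty list of samples."""
    if not samples:
        raise ValueError("samples must not be empty")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def breaches_goal(latencies_ms: list[float], p95_goal_ms: float) -> bool:
    """True when the 95th-percentile latency exceeds the stated goal."""
    return percentile(latencies_ms, 95) > p95_goal_ms


# Hypothetical window of response times in milliseconds.
window = [120, 80, 100, 400, 90, 110, 95, 105, 85, 130]
```

A percentile is used rather than an average because a small share of very slow requests, which users do notice, can hide behind a healthy-looking mean.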

Tracing the problems

All software application teams should take advantage of logging; however, tracing is difficult to carry out in a microservices-based application because the architecture comprises several services and service instances, likely spread across multiple systems.

Every service instance can write log data, such as errors and load balancing issues. The application support staff must aggregate the logs, and search and analyze them when needed. Consider using a correlation ID, a unique identifier attached to the initiating event and passed along to each subsequent one, to trace a flow across services.
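A correlation ID can be attached to every log line with Python's standard `logging` and `contextvars` modules. This is a minimal sketch under stated assumptions: the `X-Correlation-ID` header name is a common convention rather than a standard, and `build_logger` and `handle_request` are hypothetical helpers standing in for a real web framework's request handling.

```python
import contextvars
import logging
import uuid

# Holds the ID of the flow currently being processed; "-" means no flow.
correlation_id = contextvars.ContextVar("correlation_id", default="-")


class CorrelationIdFilter(logging.Filter):
    """Copy the current correlation ID onto every log record."""

    def filter(self, record: logging.LogRecord) -> bool:
        record.correlation_id = correlation_id.get()
        return True


def build_logger(name: str, stream=None) -> logging.Logger:
    """Create a logger whose output includes the correlation ID."""
    handler = logging.StreamHandler(stream)  # defaults to stderr
    handler.setFormatter(
        logging.Formatter("%(name)s [%(correlation_id)s] %(message)s")
    )
    handler.addFilter(CorrelationIdFilter())
    logger = logging.getLogger(name)
    logger.addHandler(handler)
    logger.setLevel(logging.INFO)
    logger.propagate = False
    return logger


def handle_request(headers: dict, logger: logging.Logger) -> dict:
    """Reuse the caller's ID, or start a new flow, and log under that ID."""
    cid = headers.get("X-Correlation-ID") or uuid.uuid4().hex
    token = correlation_id.set(cid)
    try:
        logger.info("request received")
        # Headers to forward so downstream services log the same ID.
        return {"X-Correlation-ID": cid}
    finally:
        correlation_id.reset(token)
```

Once every service forwards the header and logs the ID, support staff can search the aggregated logs for a single value to reconstruct one flow end to end.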

Allocate resources appropriately

IT teams should remember that resource availability and allocation play a vital role in scaling a microservices application. Resource allocation specifically presents several challenges. The first layer, or the hardware layer, must be appropriate for the microservices ecosystem. Prioritize particular microservices for CPU, RAM and disk storage allocation. For example, teams must give mission-critical microservices the highest priority when it comes to resource allocation.
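The prioritization described above can be sketched as a simple greedy allocation. This is an illustrative model only, assuming a single pool of CPU cores and a numeric priority where a lower number means more critical; the `ResourceRequest` type and the service names are hypothetical, and real schedulers weigh many more factors.

```python
from dataclasses import dataclass


@dataclass
class ResourceRequest:
    service: str
    priority: int      # lower number = more critical (assumed convention)
    cpu_cores: float   # cores the service asks for


def allocate_cpu(
    requests: list[ResourceRequest], total_cores: float
) -> dict[str, float]:
    """Grant cores in priority order until the pool is exhausted."""
    remaining = total_cores
    grants: dict[str, float] = {}
    for request in sorted(requests, key=lambda r: r.priority):
        granted = min(request.cpu_cores, remaining)
        grants[request.service] = granted
        remaining -= granted
    return grants


# Hypothetical services competing for an 8-core pool.
demo_requests = [
    ResourceRequest("reports", priority=3, cpu_cores=4.0),
    ResourceRequest("checkout", priority=1, cpu_cores=4.0),
    ResourceRequest("search", priority=2, cpu_cores=2.0),
]
demo_grants = allocate_cpu(demo_requests, total_cores=8.0)
```

With these inputs the mission-critical service receives its full request first, and the lowest-priority service receives only what is left. The same approach extends to RAM and disk storage by repeating the allocation per resource.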

While many microservices-based applications run with a stateless back-end architecture and on containers for deployment simplicity, this doesn't mean that they can scale automatically. Teams must understand scalability problems, set achievable goals with both qualitative and quantitative considerations, and then apply resource-appropriate measures -- with a view toward performance and the end user's perspective.