Composable disaggregated infrastructure right for advanced workloads

Composability provides the agility, speed and efficient resource utilization required to support advanced workloads, which continue to grow ever more sophisticated and complex.

Enterprises are generating more data than ever, but they also need applications that can make sense of all this information. Many are turning to AI, big data analytics and other advanced technologies to derive meaningful insights from that data. Such applications, however, require IT infrastructure that can support them, and in some cases that means a high-performance computing environment.

Simply having infrastructure in place is seldom enough. Requirements can vary significantly from one application to the next and can even fluctuate within the same application. Traditional infrastructure has difficulty adapting to these changing requirements, so it often struggles to meet the demands of advanced workloads. For this reason, many organizations are now looking to composable disaggregated infrastructure.

Big data analytics and other advanced applications

In today's enterprise, data can come from transactional systems, IoT devices, social media sites, web logs, user devices, monitored IT systems and a variety of other sources. By deriving insights from this data, organizations can realize multiple benefits. For example, they might use this information to optimize IT operations or increase employee productivity. Alternatively, they might use it to improve customer service or gain a competitive edge.

Comprehensive data analysis requires applications that incorporate advanced technologies such as machine learning, deep learning, neural networks or predictive analytics. These applications need infrastructure that's flexible and scalable enough to accommodate their differing requirements. For example, a statistical machine learning model might use a smaller data set and require fewer compute resources, whereas a deep neural network might demand higher performance and a much larger data set.

IT must be able to run both types of applications as efficiently and cost-effectively as possible, while still delivering the necessary performance. This, in itself, can be difficult enough, but individual applications can also present challenges. An advanced application might have varying requirements as it passes through multiple phases, needing different compute and storage resources at different times. For example, ingesting data can be an I/O-intensive operation, whereas training and validating a model can require significant CPU and memory resources.

Accommodating the fluctuating needs of multiple applications, all vying for the same resources, can be a daunting task, especially when trying to maximize resource utilization. Even a traditional high-performance computing (HPC) platform can fall short if application demand is unpredictable and continually changing. Advanced applications need the right mix of resources at the right time, or they risk serious delays.

Benefits of composable disaggregated infrastructure

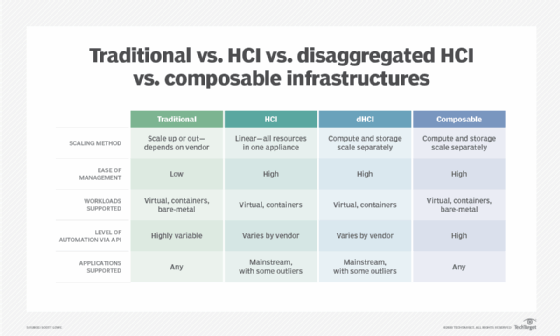

Traditional infrastructure can have a tough time keeping up with the demands of today's advanced workloads. These systems often adhere to a complex and inflexible architecture, making it difficult to accommodate changing workload requirements without overprovisioning or underutilizing resources. That's not to say these systems can't be updated or repurposed, but doing so can be time-consuming and costly, and the result is often infrastructure that's just as rigid as before.

To complicate matters, vendors optimize many systems for specific workloads and tightly bundle the hardware and software, making it even more difficult to respond quickly to changing application requirements. For example, hyper-converged infrastructure (HCI) has proven extremely popular in recent years because it simplifies deployment, but it also suffers from fixed resource ratios and limited agility. Disaggregated HCI (dHCI) has changed the picture somewhat, but dHCI still targets specific workloads, and it can't support applications running on bare metal.

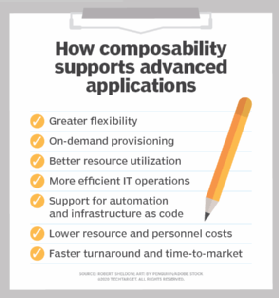

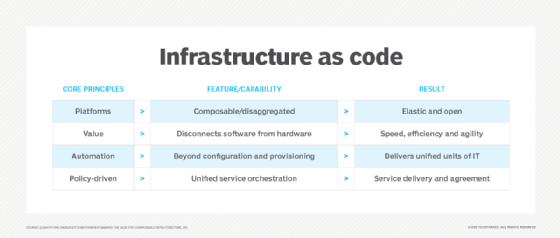

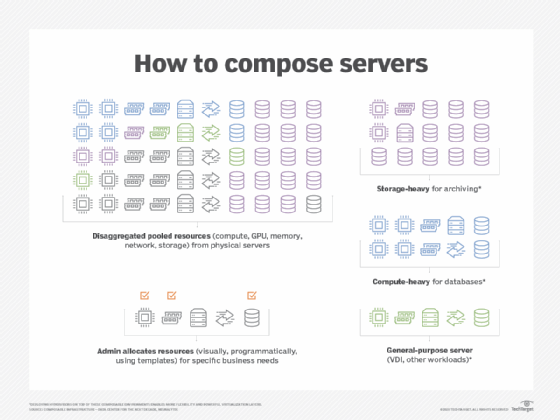

Because of the limitations of other infrastructure, many organizations are turning to composable disaggregated infrastructure, which disaggregates the hardware into logical resource pools. From these pools, users can provision resources on demand to accommodate fluctuating application requirements. Not only can this help meet the infrastructure and provisioning needs of today's advanced applications, but it can also lead to more efficient resource utilization. In addition, composable infrastructure opens the door to automation and infrastructure as code, leading to greater agility and faster time to market.

With composable disaggregated infrastructure, enterprises can right-size configurations as workload requirements change, assembling and reassembling resources in response to evolving application and business requirements. Resources become elastic building blocks for delivering an optimal environment that's provisioned and configured to support a specific workload without having to wait on lengthy IT allocation processes. When the application no longer needs those resources, they're returned to the resource pool and ready for use by other applications.

At the heart of a composable platform is the management software, which abstracts the physical compute, storage and network hardware and makes those resources available as services that can be consumed as needed, much like cloud-based services. When the platform receives a request for application resources, the software dynamically composes the required components and ensures their availability until they're no longer needed.
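The compose-and-release cycle described above can be illustrated with a toy model in Python. This is a minimal sketch under stated assumptions, not how any vendor's management software actually works: the `ResourcePool` class, its `compose` and `release` methods, the resource names and the unit counts are all hypothetical. A real platform also handles fabric attachment, device discovery and failures, none of which is modeled here. The usage portion mirrors the earlier example of an I/O-heavy ingest phase followed by a compute- and memory-heavy training phase.

```python
from dataclasses import dataclass, field


@dataclass
class ResourcePool:
    """Hypothetical pool of disaggregated resources, tracked as simple unit counts."""
    free: dict[str, int]
    allocations: dict[str, dict[str, int]] = field(default_factory=dict)

    def compose(self, name: str, request: dict[str, int]) -> dict[str, int]:
        """Carve a logical system out of the pools, or fail without side effects."""
        if name in self.allocations:
            raise ValueError(f"{name} is already composed")
        # Check every resource type first so a failed request leaves the pools untouched.
        short = {k: v for k, v in request.items() if self.free.get(k, 0) < v}
        if short:
            raise RuntimeError(f"insufficient resources: {short}")
        for kind, amount in request.items():
            self.free[kind] -= amount
        self.allocations[name] = dict(request)
        return self.allocations[name]

    def release(self, name: str) -> None:
        """Return a composed system's resources to the shared pools."""
        for kind, amount in self.allocations.pop(name).items():
            self.free[kind] += amount


# Illustrative capacities only (hypothetical units).
pool = ResourcePool(free={"cpu_cores": 64, "memory_gb": 512, "nvme_tb": 20, "gpus": 4})

# Ingest phase: I/O-heavy, modest compute.
pool.compose("ingest", {"cpu_cores": 8, "memory_gb": 64, "nvme_tb": 16})
pool.release("ingest")  # resources go back to the pool when the phase ends

# Training phase: recompose with more CPU, memory and GPUs, less storage.
pool.compose("training", {"cpu_cores": 48, "memory_gb": 384, "nvme_tb": 4, "gpus": 4})
print(pool.free)  # {'cpu_cores': 16, 'memory_gb': 128, 'nvme_tb': 16, 'gpus': 0}
```

The all-or-nothing check in `compose` reflects the idea that a request is either satisfied as a complete logical system or rejected, so partially composed systems never strand resources that other applications could use.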

In this way, composable disaggregated infrastructure can accommodate a wide range of workloads -- including AI and advanced analytics -- whether running in VMs, in containers or on bare metal.

Composability in the real world

Composable disaggregated infrastructure is emerging as a compelling option for deploying applications that incorporate advanced technologies such as machine learning and deep learning. In fact, several composable offerings are already on the market, such as HPE's Synergy platform, Dell EMC's PowerEdge MX modular infrastructure and Liqid's composable infrastructure software platform.

As composable architecture continues to mature, numerous industries stand to benefit. Potential beneficiaries include healthcare and financial services organizations, managed service providers, and university and government researchers: in short, just about any organization planning to run advanced workloads.

Composability is already making serious headway. For example, researchers at Texas A&M University's College of Engineering recently received a $3.9 million grant from the National Science Foundation to acquire a next-generation composable HPC platform. They will use the platform to explore new ways of applying machine learning to data-driven discovery, which could benefit a wide range of scientific fields that rely on AI technologies and big data practices, such as cybersecurity, genomics, agricultural sciences, climate modeling and biomedical imaging.

Universities aren't the only institutions jumping on the composable disaggregated infrastructure bandwagon. Liqid recently signed a $32 million contract with the U.S. Department of Defense to provide the Army Corps of Engineers with two composable HPC systems that incorporate Liqid and Intel technologies. The Corps of Engineers will use the systems to run AI applications that help deliver essential military and civilian engineering services around the world.

The composable disaggregated infrastructure industry is still relatively young and is as dynamic as the composable platforms themselves. But composable architecture has made great strides in the last few years and is likely to continue doing so. Composability won't necessarily benefit all workloads or environments, but it could go a long way toward accommodating applications, especially those that incorporate AI and other advanced technologies.