Understand the rise in leaf-spine architecture

Leaf-spine networks can help cut costs, reduce carbon footprints and optimize network traffic for data centers. Learn why this network architecture is on the rise.

Traditionally, enterprise data centers have focused on data storage and disaster recovery and haven't always been able to meet the demand for real-time, multiuser data retrieval. They must now adapt to support continuously growing data traffic and evolving technologies like blockchain, while also managing their environmental impact.

The shift to more data-centric business models and the rise of blockchain technology are moving data centers from storage and asynchronous use toward real-time analysis and on-demand data processing. Colocated data centers offer the best combination of scalability, availability and environmental controls for blockchain operations. At the same time, many data centers are starting to adopt architectures that can accommodate the data processing demands of blockchain operations and other data-centric businesses.

To keep up with business requirements, data centers can embrace new architectures like leaf-spine, switch to virtualized servers and replace old, inefficient hardware. Facility owners can then scale their operations more easily, reduce their carbon footprint and maximize efficiency.

Traditional data center infrastructure and design struggle with blockchain

Private networks housed within a data center use infrastructure and architecture optimized for north-south communication between clients and servers across core, distribution, aggregation and access switches. Virtualization and other complex server applications increase server-to-server, or east-west, communication, which can overload links designed for north-south traffic. When a switch is oversubscribed, meaning its attached devices can send more traffic than its uplinks can carry, the result is bandwidth bottlenecks and unexpected latency.
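
To make oversubscription concrete, the short sketch below computes the ratio of downstream to upstream capacity for a single switch. The function name and the port counts and speeds are hypothetical values chosen for illustration; they do not come from any specific product.

```python
def oversubscription_ratio(downlink_ports: int, downlink_gbps: float,
                           uplink_ports: int, uplink_gbps: float) -> float:
    """Return downstream capacity divided by upstream capacity for one switch."""
    if uplink_ports <= 0 or uplink_gbps <= 0:
        raise ValueError("a switch needs at least one uplink with positive speed")
    downstream = downlink_ports * downlink_gbps  # total capacity facing servers
    upstream = uplink_ports * uplink_gbps        # total capacity facing the next layer
    return downstream / upstream


# Hypothetical access switch: 48 x 10 Gbps server ports, 2 x 40 Gbps uplinks.
ratio = oversubscription_ratio(48, 10, 2, 40)
print(f"{ratio:.1f}:1")  # prints "6.0:1"
```

A ratio of 1:1 means the uplinks can carry everything the servers can send at once. The higher the ratio, the more likely east-west bursts are to queue at the uplinks.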

Decentralized networks, such as blockchain networks, rely on uninterrupted network and internet connectivity no matter where their nodes are located, as do the data stores used by big data applications. These resources must always remain highly available, and the infrastructure they sit on must be efficient enough to handle routine traffic spikes.

Data centers handling blockchain and data-heavy processes require infrastructure that can handle concurrent, intermittent bursts of high-bandwidth traffic. The core, aggregation and access layers of the traditional data center cannot handle these types of workloads efficiently. To prevent traffic loops, they use spanning tree protocols, which detect loops and block the connections or links forming them. With the redundant links blocked, traffic has nowhere else to go in the traditional north-south pattern, so it bottlenecks at the switches on the remaining active path, leading to latency or downtime.
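
The effect of loop blocking can be illustrated with a small sketch. It is a deliberate simplification: a real spanning tree protocol elects a root bridge and compares path costs, whereas this example uses a plain breadth-first search from an assumed root. The switch names and the topology are hypothetical.

```python
from collections import deque


def spanning_tree_links(links, root):
    """Return the set of links kept active by a breadth-first spanning tree."""
    adjacency = {}
    for a, b in links:
        adjacency.setdefault(a, []).append(b)
        adjacency.setdefault(b, []).append(a)

    visited = {root}
    active = set()
    queue = deque([root])
    while queue:
        node = queue.popleft()
        for neighbor in adjacency[node]:
            if neighbor not in visited:
                visited.add(neighbor)
                # The first link that reaches a switch stays active.
                active.add(frozenset((node, neighbor)))
                queue.append(neighbor)
    return active


# Hypothetical topology: three access switches, each dual-homed to two
# aggregation switches that are also linked to each other.
links = [
    ("agg1", "agg2"),
    ("agg1", "acc1"), ("agg2", "acc1"),
    ("agg1", "acc2"), ("agg2", "acc2"),
    ("agg1", "acc3"), ("agg2", "acc3"),
]

active = spanning_tree_links(links, root="agg1")
blocked = [link for link in links if frozenset(link) not in active]

print(len(active), "active links")    # 4 active links
print(len(blocked), "blocked links")  # 3 blocked links
print(blocked)  # every agg2-to-access link is blocked
```

In this sketch, three of the seven links carry no traffic, and all access traffic converges on one aggregation switch. That is the kind of bottleneck described above.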

Changing traffic patterns in the data center

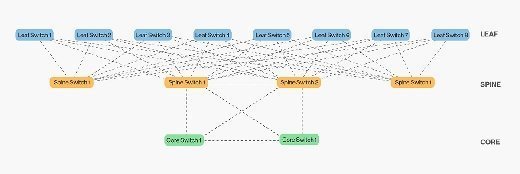

Leaf-spine architecture transforms a traditional data center into one that can handle omnidirectional traffic. It overcomes the limitations of the traditional traffic model by taking a horizontal approach to network design. A typical design uses two tiers of switches, a leaf layer and a spine layer, connected in a mesh so that every leaf switch links to every spine switch.

In leaf-spine architecture, each leaf switch gathers and consolidates traffic from the servers and other devices attached to it and feeds it to the spine switches, which form the backbone of the network. This setup moves traffic between servers more efficiently because it no longer has to funnel through a single congested uplink.

Horizontal, or east-west, hosts are equidistant. Any individual host can reach a host on any other leaf switch in the same number of hops, because the path always runs from the source leaf through one spine to the destination leaf. Traffic therefore moves predictably through the leaf-spine, a key requirement for high-performance computing clusters, multi-tiered web applications, and any other service or application dependent on real-time activity and low latency. Network monitoring tools can help optimize traffic patterns across the leaf-spine and reduce latency even more.
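
The equidistance property can be checked with a small sketch. It builds a hypothetical fabric in which every leaf connects to every spine, then measures the switch-to-switch hop count between every pair of leaves. The switch names and fabric size are illustrative assumptions.

```python
from collections import deque
from itertools import combinations


def build_leaf_spine(num_leaves, num_spines):
    """Return (adjacency, leaves, spines) for a full leaf-to-spine mesh."""
    leaves = [f"leaf{i}" for i in range(1, num_leaves + 1)]
    spines = [f"spine{i}" for i in range(1, num_spines + 1)]
    adjacency = {name: set() for name in leaves + spines}
    for leaf in leaves:
        for spine in spines:
            adjacency[leaf].add(spine)
            adjacency[spine].add(leaf)
    return adjacency, leaves, spines


def hop_count(adjacency, src, dst):
    """Breadth-first search for the fewest links between two switches."""
    distances = {src: 0}
    queue = deque([src])
    while queue:
        node = queue.popleft()
        if node == dst:
            return distances[node]
        for neighbor in adjacency[node]:
            if neighbor not in distances:
                distances[neighbor] = distances[node] + 1
                queue.append(neighbor)
    raise ValueError(f"{dst} is not reachable from {src}")


adjacency, leaves, spines = build_leaf_spine(num_leaves=6, num_spines=3)
leaf_distances = {hop_count(adjacency, a, b) for a, b in combinations(leaves, 2)}
print(leaf_distances)  # {2}: every leaf is two hops from every other leaf
```

Because every pair of leaves is the same distance apart, latency between hosts on different leaves does not depend on where in the fabric they sit.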

Additional technologies, such as Shortest Path Bridging and Transparent Interconnection of Lots of Links, enable all links between leaf and spine to forward traffic instead of leaving redundant links blocked. This means operators can add capacity as traffic grows by adding spine switches and links. Because traffic follows predictable paths, these technologies can also help facility owners gather more precise statistics for cost estimation.
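
The idea of forwarding over all links at once can be sketched with generic hash-based multipath selection. This is not the algorithm used by Shortest Path Bridging or Transparent Interconnection of Lots of Links; it is a minimal illustration, with hypothetical switch names, addresses and flow fields, of how flows can be spread across every equal-cost leaf-to-spine path.

```python
import hashlib
from collections import Counter


def equal_cost_paths(src_leaf, dst_leaf, spines):
    """List every leaf-spine-leaf path; each one has the same length."""
    if src_leaf == dst_leaf:
        return [[src_leaf]]
    return [[src_leaf, spine, dst_leaf] for spine in spines]


def pick_path(flow, paths):
    """Choose a path from a stable hash of the flow's identifying fields.

    Packets of the same flow always hash to the same path, which keeps
    them in order, while different flows spread across the paths.
    """
    key = "|".join(str(field) for field in flow).encode()
    digest = hashlib.sha256(key).digest()
    index = int.from_bytes(digest[:4], "big") % len(paths)
    return paths[index]


spines = ["spine1", "spine2", "spine3", "spine4"]
paths = equal_cost_paths("leaf1", "leaf2", spines)

# Hypothetical flows: (source IP, destination IP, source port, destination port).
flows = [("10.0.1.10", "10.0.2.20", 40000 + i, 443) for i in range(1000)]
usage = Counter(pick_path(flow, paths)[1] for flow in flows)

for spine in spines:
    print(spine, usage[spine])  # flows carried by each spine
```

Adding a spine to the `spines` list adds another usable path without changing the hop count, which is one way to picture capacity growing with the fabric.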

Reducing technology spending

Traditional data center networks use many-to-fewer connection options between switch layers, which can drive up costs. Newer architectures reduce costs by increasing the number of connections each switch can handle. Data centers require less hardware because newer switches can carry and accept traffic from any direction, so organizations don't waste connections and bandwidth.
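
A rough sizing calculation shows how port counts translate into hardware. The sketch below assumes one link from each leaf to each spine and uses hypothetical port counts; actual designs vary with link speeds, redundancy requirements and vendor hardware.

```python
import math


def size_fabric(servers, leaf_ports, spine_ports, num_spines):
    """Estimate the switches and links needed for a two-tier leaf-spine fabric.

    Assumes every leaf uses exactly one port per spine as an uplink and
    every server uses exactly one leaf port.
    """
    server_ports_per_leaf = leaf_ports - num_spines
    if server_ports_per_leaf <= 0:
        raise ValueError("leaf has no ports left for servers")

    leaves = math.ceil(servers / server_ports_per_leaf)
    if leaves > spine_ports:
        raise ValueError("each spine needs one port per leaf; too many leaves")

    return {
        "leaves": leaves,
        "spines": num_spines,
        "fabric_links": leaves * num_spines,
    }


# Hypothetical example: 400 servers, 48-port leaves, 32-port spines, 4 spines.
print(size_fabric(servers=400, leaf_ports=48, spine_ports=32, num_spines=4))
# {'leaves': 10, 'spines': 4, 'fabric_links': 40}
```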

Facility owners can realize further cost savings with the leaf-spine architecture through lower deployment and maintenance costs. The scalability and efficiency of the leaf-spine mean fewer devices to deploy and maintain, so this architecture requires fewer resources.

Reducing carbon footprint and power needs

Modern business workloads keep hardware working harder, longer and hotter than before. Consequently, traditional data centers require more cooling and use more power overall.

Newer architectures run cooler than traditional ones because they move data faster, optimize traffic patterns and use hardware that generates less heat overall. Even virtualized servers, which individually run hotter than nonvirtualized ones, generate less heat in total because fewer of them are needed to run the same workload.

Leaf-spine designs are more environmentally friendly because they reduce the number of hops in a network's design. They require fewer aggregation switches and fewer redundant paths between access and interconnection switches. With leaf-spine, latency and power requirements go down, and the network requires less cooling overall.