
Infrastructure requirements for physical AI systems

Physical AI systems are becoming a priority for companies because of their strategic and operational advantages. But businesses must contend with their infrastructure needs.

Physical AI systems offer an unprecedented level of machine autonomy that can benefit multiple sectors, such as heavy industry, manufacturing, energy, retail, transportation and healthcare. However, adoption headwinds are significant and can become an obstacle to wider implementation.

According to Deloitte's 2026 "State of AI in the Enterprise" report, in a survey of 3,235 business and IT leaders, 58% said they're already using physical AI and 80% expect to be using it within two years. Industrial and retail warehouses are increasingly advanced and intelligent. Unmanned systems and routing engines are meeting exponential increases in supply-chain demands. AI-driven robotic arms and autonomous mobile robots are selecting, assembling and transporting a variety of items while reducing accidents and improving operational efficiency. To realize the benefits of these deployments, businesses must adopt complex IT systems that incorporate digital sensors, machine vision, edge computing, cybersecurity and data analytics.

Discover the infrastructure and costs necessary to deploy physical AI systems within the enterprise. Learn about physical AI infrastructure best practices and strategies for organizational adoption.

What physical AI systems can offer businesses

Using sensor data, physical AI models, sometimes called embodied AI, develop an understanding of their real-world environment. These models then use the data they collect to reason and interact with the environment to autonomously achieve an organization's goals.

Physical AI use cases span a range of economic sectors. In manufacturing, physical AI can detect anomalies early in production, reduce defect rates and identify emerging issues before they escalate. These embodied systems can use image analysis, video streams and sensor inputs to improve overall monitoring and outperform human inspections. In other high-risk industries, physical AI uses real-time visual and situational analysis to evaluate hazardous environments and reduce frontline worker exposure.

While many sectors can benefit from the capabilities of physical AI, not every business is prepared to deploy this technology.

The costs and demands of physical AI systems

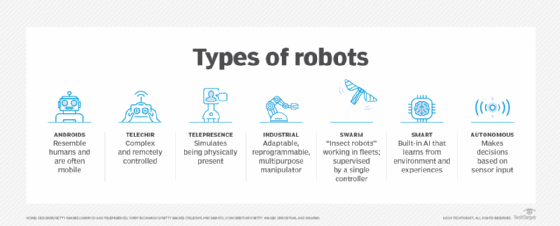

In contrast to cloud-based, virtual AI deployments, like chatbots and generative and agentic AI, physical AI relies on a continuous stream of sensing, understanding, decision-making and event execution within real environments. Physical AI systems include remote devices, such as industrial IoT machines, robots and autonomous vehicles, that can perceive movement, interpret context, assess risk and take specific steps to achieve their goals.

The following are the four primary stages involved in developing physical AI:

- The perception stage. This is the integration of static remote devices, like cameras; light detection and ranging, or lidar; sensors; and computer vision.

- The adaptive reasoning stage. The physical AI model draws conclusions from sensory and data inputs.

- The execution stage. This stage bridges the gap between digital reasoning and edge devices' direct actions.

- The continuous learning stage. Robots and physical devices use neural processing to automatically update and self-adjust actions based on new experiences without wholesale retraining.
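As a rough illustration of how the four stages fit together, the following Python sketch runs one perception, reasoning, execution and learning pass per control tick. Everything in it is an assumption made for illustration: the `ToyController` class, its thresholds, the speeds and the simple running-average update are hypothetical and do not describe any vendor's system.

```python
from dataclasses import dataclass


@dataclass
class ToyController:
    """Hypothetical controller that runs the four stages once per tick."""
    stop_distance_m: float = 1.0      # assumed hard safety limit
    typical_clearance_m: float = 2.0  # learned estimate, updated each tick
    learning_rate: float = 0.2        # assumed smoothing factor

    def perceive(self, raw_readings_m):
        # Perception: fuse raw range readings by keeping the closest obstacle.
        return min(raw_readings_m)

    def reason(self, distance_m):
        # Adaptive reasoning: draw a conclusion from the fused input.
        if distance_m < self.stop_distance_m:
            return "stop"
        if distance_m < self.typical_clearance_m:
            return "slow"
        return "advance"

    def execute(self, decision):
        # Execution: map the decision to a target speed in m/s.
        return {"stop": 0.0, "slow": 0.2, "advance": 0.5}[decision]

    def learn(self, distance_m):
        # Continuous learning: blend the new reading into the running
        # estimate instead of retraining from scratch.
        self.typical_clearance_m += self.learning_rate * (
            distance_m - self.typical_clearance_m
        )

    def tick(self, raw_readings_m):
        distance_m = self.perceive(raw_readings_m)
        speed = self.execute(self.reason(distance_m))
        self.learn(distance_m)
        return speed


robot = ToyController()
speeds = [robot.tick(r) for r in ([3.0, 4.0], [2.1, 5.0], [0.5, 2.0])]
```

With these made-up readings, the controller advances, then slows because the second reading falls below its updated clearance estimate, then stops. A production system would replace each method with far heavier machinery, but the loop structure is the point.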

From perception to continuous learning, physical AI demands several things, the most critical being maintaining accurate data sources. Appropriate security measures are also necessary to safeguard hardware and device integrity at the edge. Human-in-the-loop controls provide the human oversight that reinforces risk management and reliability. Edge technology integrates new levels of computational power and networking. GPUs and neural processing units further enable the parallel processing and real-time training simulations that physical AI models require. These data, security, edge computing and AI hardware costs can be substantial, despite the affordability of cloud services.
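One common way to picture a human-in-the-loop control is as a gate in front of the actuator. The sketch below is a minimal, hypothetical example: the `ProposedAction` fields, the 0.9 confidence cutoff and the `approve` callback (standing in for an operator console) are all assumptions, not part of any specific product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProposedAction:
    name: str
    confidence: float  # model's self-reported confidence, 0.0 to 1.0
    high_risk: bool    # e.g., moves heavy equipment near people


def needs_human_approval(action, min_confidence=0.9):
    # Escalate anything risky or anything the model is unsure about.
    return action.high_risk or action.confidence < min_confidence


def dispatch(action, approve):
    """Run the action unless it needs sign-off and the operator declines."""
    if needs_human_approval(action) and not approve(action):
        return "held"
    return "executed"


routine = ProposedAction("move pallet", confidence=0.97, high_risk=False)
risky = ProposedAction("lift near worker", confidence=0.99, high_risk=True)

routine_result = dispatch(routine, approve=lambda a: False)
risky_result = dispatch(risky, approve=lambda a: False)
```

Here the routine action runs without review, while the high-risk one is held because the stand-in operator declines it. Where to set the threshold is a risk management decision, not a technical one.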

According to the Deloitte report, business leaders cite cost as the key barrier to physical AI deployments. However, research indicates a gradual shift towards affordability. Bank of America Global Research predicted that hardware costs for a humanoid robot will decrease from $35,000 in 2025 to about $17,000 by 2030.

Polaris Market Research predicted that the global edge AI hardware market, valued at $21.86 billion in 2024, will grow at a compound annual growth rate of 17% during its 2024-34 forecast period. This would yield a market size of $107.5 billion by 2034. The demand driving this growth could result in a reduction in overall hardware costs.
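The arithmetic behind these projections is straightforward compound growth. The sketch below assumes annual compounding, treats the 2024 base as $21.86 billion and counts 10 periods from 2024 to 2034; the function names are illustrative.

```python
def project_value(start, annual_rate, years):
    """Compound a starting value forward at a fixed annual rate."""
    return start * (1 + annual_rate) ** years


def implied_annual_rate(start, end, years):
    """Solve end = start * (1 + r) ** years for r."""
    return (end / start) ** (1 / years) - 1


# Edge AI hardware market, in billions of dollars, 2024 to 2034.
market_2034 = project_value(21.86, 0.17, 10)
market_rate = implied_annual_rate(21.86, 107.5, 10)

# Humanoid robot hardware cost, in dollars, 2025 to 2030.
robot_rate = implied_annual_rate(35_000, 17_000, 5)
```

Under these assumptions, a flat 17% rate yields roughly $105 billion, so the $107.5 billion figure implies a rate closer to 17.3%, which suggests the quoted rate is rounded. The robot cost forecast works out to a decline of about 13% per year.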

Power demands have also become a potential obstacle to widespread AI adoption, and the present operational costs of physical AI systems can compound these challenges. In addition to overall electricity consumption, some physical AI deployments require thermal management systems for certain use cases. And in other deployments, edge processors must manage highly variable power demands, switching from low-power idling to maximum compute in short bursts.
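To see why bursty workloads complicate power planning, consider a time-weighted average. The figures below (5 W idle, 60 W peak, 20% of the time at peak, a 480 Wh battery) are hypothetical values chosen only to make the arithmetic easy to follow.

```python
def average_power_w(idle_w, peak_w, duty_cycle):
    """Time-weighted mean power for a processor that bursts to peak."""
    if not 0.0 <= duty_cycle <= 1.0:
        raise ValueError("duty_cycle must be between 0 and 1")
    return idle_w * (1 - duty_cycle) + peak_w * duty_cycle


def runtime_hours(battery_wh, avg_w):
    """Estimated runtime on a battery, ignoring conversion losses."""
    return battery_wh / avg_w


avg_w = average_power_w(idle_w=5.0, peak_w=60.0, duty_cycle=0.2)
hours = runtime_hours(battery_wh=480.0, avg_w=avg_w)
```

The average here is about 16 W, which gives roughly 30 hours on the assumed battery. The power supply and cooling still have to be sized for the 60 W peak, which is why average consumption alone understates the infrastructure requirement.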

Strategies for deploying physical AI systems

The fundamental advantage of physical AI is its fast adaptation and integration with existing IT systems. Data-centric architecture, APIs and edge computing make these deployments possible. Organizations can extend data center capabilities into the operating environment and ensure submillisecond processing for model inferencing and autonomous operations. Localized, static RAM can further reduce data movement. Hybrid cloud edge architectures help process vast amounts of unstructured data. Mesh networking and software-defined WANs connect discrete edge environments, supporting the hybrid architecture.

Wi-Fi 6/7 and Ethernet time-sensitive networking can deliver the ultra-low latencies and reliable wireless and wired communications necessary for autonomous robots in manufacturing and collision avoidance for driverless vehicles. Recent advances in 5G/6G enable the ingestion of massive amounts of real-time data from geographically widespread, dense sensor networks. These telecom radio access networks and 5G/6G base stations enable the kinds of massive interconnectivity that transform isolated physical AI initiatives into interactive, distributed compute platforms.

Shifting compute from centralized data centers to edge environments and the embodied devices themselves can reduce energy consumption and data transmission costs. While it's true that the power intensity of some physical AI devices requires energy-intensive processing at the edge, the sustainability possibilities are also considerable. These include improved energy efficiency through autonomous, managed resources, and the prevalence of battery power and renewable energy to support on-device computing.

With the infrastructure in place, businesses need to implement operational strategies for physical AI. A strategy that emphasizes incremental adoption and structured integration will prevent workflow disruptions and ensure that legacy IT equipment integrates seamlessly with physical AI sensors, machinery or autonomous devices. Early deployments should operate under close administrative supervision. Oversight should include mapping data flows, evaluating decision-making processes and identifying whether specific physical AI systems require additional sensors or connectivity to prevent processing and operational bottlenecks.
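Mapping data flows can start as a simple inventory of which devices send how much data over which links. The following sketch flags links whose combined load exceeds a utilization ceiling. The device names, link names, bandwidth figures and the 80% ceiling are all hypothetical.

```python
from collections import defaultdict

# Each flow: (source device, network link, sustained load in Mbps).
flows = [
    ("camera-1", "cell-a-uplink", 400.0),
    ("camera-2", "cell-a-uplink", 400.0),
    ("lidar-1", "cell-a-uplink", 150.0),
    ("amr-telemetry", "wifi-floor", 20.0),
]

# Usable capacity of each link in Mbps.
link_capacity_mbps = {"cell-a-uplink": 1000.0, "wifi-floor": 600.0}


def find_bottlenecks(flows, capacity_mbps, max_utilization=0.8):
    """Return {link: utilization} for links above the utilization ceiling."""
    load = defaultdict(float)
    for _source, link, mbps in flows:
        load[link] += mbps
    return {
        link: round(total / capacity_mbps[link], 2)
        for link, total in load.items()
        if total / capacity_mbps[link] > max_utilization
    }


bottlenecks = find_bottlenecks(flows, link_capacity_mbps)
```

In this example, only `cell-a-uplink` is flagged, at 95% utilization, which suggests that adding another sensor to that cell would first require more connectivity or on-device preprocessing.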

IT leaders must also focus on organizational readiness and change management, assessing whether teams are prepared to process information from physical AI and to work alongside intelligent systems. Complete executive buy-in and clear communication help demonstrate how physical AI can support a workforce, add value and further enhance safety. Adoptions could benefit from controlled deployment pilots and gradual rollouts to ensure that physical AI performs reliably and meets expectations across a range of conditions.

Kerry Doyle writes about technology for a variety of publications and platforms. His current focus is on issues relevant to IT and enterprise leaders across a range of topics, from nanotech and cloud to distributed services and AI.