The role of NVMe network storage for the future

This chapter excerpt from 'Building a Future-Proof Cloud Infrastructure' examines the role that networking and storage protocols such as NVMe could play in the remote storage market.

The COVID-19 pandemic led to a rapid adoption of work-from-home models and, in turn, presented the storage industry with a challenge: finding the right balance among speed, performance and cost in remote environments.

But performance and efficiency have always come at a premium. Many in the storage industry are turning to faster but more expensive hardware, such as SSDs, while enterprises still widely use HDDs because of their lower cost.

SATA and SAS storage interfaces keep costs low, but NVMe is poised to be "the name of the game" in storage going forward as the need for speed increases, said Silvano Gai, author of Building a Future-Proof Cloud Infrastructure from Pearson, in an interview with TechTarget. Organizations are adopting NVMe rapidly, particularly NVMe over Fabrics (NVMe-oF), according to Enterprise Strategy Group research.

"NVMe was done as a reaction to SCSI, as an alternative to SCSI. That had nothing to do technically; it was more [of] a business and industry decision," Gai said. "The advantage of NVMe compared to SCSI is that, in NVMe, there is huge support for parallel operations and pending queue. And that, of course, dramatically increases the throughput when you go towards SSDs."
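To make Gai's point about parallelism concrete, here is a minimal sketch, assuming a toy in-memory model rather than any real driver interface. The NVMe specification pairs each submission queue with a completion queue and permits up to roughly 64K I/O queues, each up to roughly 64K commands deep, whereas SATA's Native Command Queuing exposes a single queue of 32 commands. The names `QueuePair` and `max_outstanding` are hypothetical and exist only for this illustration.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class QueuePair:
    """Hypothetical toy model of an NVMe submission/completion queue pair.

    Real NVMe queues are ring buffers in host memory signaled through
    doorbell registers; deques are used here only for illustration.
    """
    depth: int
    submission: deque = field(default_factory=deque)
    completion: deque = field(default_factory=deque)

    def submit(self, command_id: int) -> bool:
        # Refuse the command when the submission queue is already full.
        if len(self.submission) >= self.depth:
            return False
        self.submission.append(command_id)
        return True

    def process_all(self) -> None:
        # The "device" drains every pending command and posts a completion,
        # which frees submission slots for new commands.
        while self.submission:
            self.completion.append(self.submission.popleft())


def max_outstanding(num_queues: int, depth: int, commands: int) -> int:
    """Hypothetical helper: spread commands round-robin across queues and
    count how many can be pending at once before any completes."""
    queues = [QueuePair(depth) for _ in range(num_queues)]
    accepted = 0
    for cmd in range(commands):
        if queues[cmd % num_queues].submit(cmd):
            accepted += 1
    return accepted


if __name__ == "__main__":
    print(max_outstanding(1, 32, 10_000))    # prints 32: one shallow queue
    print(max_outstanding(8, 1024, 10_000))  # prints 8192: one deep queue per core
```

The design choice the model highlights is that giving each CPU core its own queue pair lets many commands be in flight without cores contending for a single shared queue, which is what lets an SSD's internal parallelism translate into throughput.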

NVMe's suitability for cloud and remote storage also helps drive the rise of NVMe network storage. Large organizations such as Facebook and Microsoft have begun to adopt NVMe cloud storage because of its higher IOPS and lower latency, and adoption will likely spread across the industry in the coming years.

Storage is "possibly the most natural candidate for disaggregation," Gai wrote in the book, as organizations look to distribute storage resources more efficiently while keeping latency low. Separating compute from storage through disaggregation enables organizations to scale applications without losing performance.

Additionally, as data volumes keep growing, many organizations have turned to NVMe protocol specifications such as NVMe-oF to remove inefficiencies and meet their expanding storage needs without sacrificing performance.

Below is an excerpt from Chapter 6, "Distributed Storage and RDMA Services," from Building a Future-Proof Cloud Infrastructure. In this chapter, Gai discusses remote storage, distributed storage, and the rise of SSDs and NVMe network storage.

6.2.4 Remote Storage Meets Virtualization

Economies of scale motivated large compute cluster operators to look at server disaggregation. Among server components, storage is possibly the most natural candidate for disaggregation, mainly because the average latency of storage media access can tolerate a network traversal. Even though the now-prevalent SSD media drastically reduce these latencies, network performance has improved alongside them. As a result, the trend toward storage disaggregation, which started with the inception of Fibre Channel, accelerated sharply with the popularity of high-volume clouds, which created an ideal opportunity for consolidation and cost reduction.

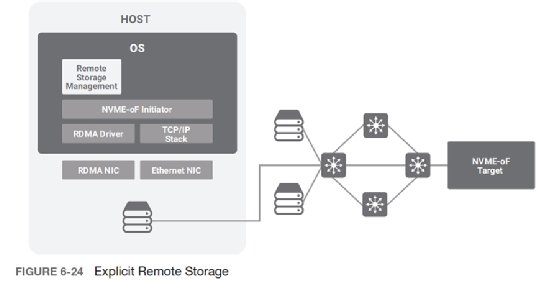

Remote storage is sometimes explicitly presented as such to the client; see Figure 6-24. This mandates the deployment of new storage management practices that affect the way storage services are offered to the host, because host management infrastructure needs to deal with the remote aspects of storage (remote access permissions, mounting of a specific remote volume, and so on).

In some cases, exposing the remote nature of storage to the client can present challenges, notably in virtualized environments where the tenant manages the guest OS, which assumes the presence of local disks. One standard solution to this problem is for the hypervisor to virtualize the remote storage and emulate a local disk toward the virtual machine; see Figure 6-25. This emulation hides the paradigm change from the guest OS.

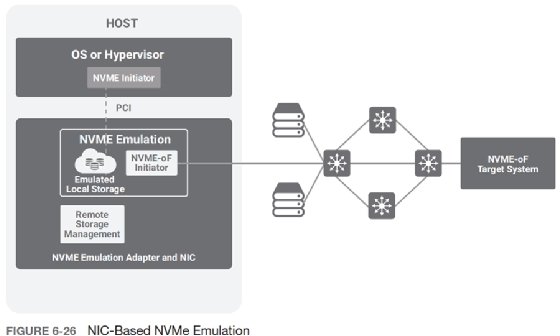

However, hypervisor-based storage virtualization is not a suitable solution in all cases. For example, in bare-metal environments, tenant-controlled OS images run on the actual physical machines with no hypervisor to create the emulation. For such cases, a hardware emulation model is becoming more common; see Figure 6-26. This approach presents to the physical server the illusion of a local hard disk. The emulation system then virtualizes access across the network using standard or proprietary remote storage protocols. One typical approach is to emulate a local NVMe disk and use NVMe-oF to access the remote storage.
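The emulation idea, whether done by a hypervisor or by dedicated hardware, can be sketched as a thin layer that exposes a plain block interface to the host and forwards every access to a remote volume. This is a minimal sketch under stated assumptions: `RemoteTarget` is a hypothetical in-memory stand-in for an NVMe-oF target (a real one receives NVMe commands over a fabric such as RDMA, TCP or Fibre Channel), `EmulatedLocalDisk` is a hypothetical name for the emulation layer, and the 4,096-byte block size is an assumed value.

```python
BLOCK_SIZE = 4096  # assumed logical block size in bytes


class RemoteTarget:
    """Hypothetical in-memory stand-in for a remote NVMe-oF target.

    Blocks are kept per namespace in dictionaries; no network is involved.
    """

    def __init__(self) -> None:
        self._namespaces: dict = {}

    def create_namespace(self, nsid: int) -> None:
        self._namespaces[nsid] = {}

    def read(self, nsid: int, lba: int) -> bytes:
        # Unwritten blocks read back as zeros, like a freshly provisioned volume.
        return self._namespaces[nsid].get(lba, bytes(BLOCK_SIZE))

    def write(self, nsid: int, lba: int, data: bytes) -> None:
        self._namespaces[nsid][lba] = data


class EmulatedLocalDisk:
    """Hypothetical emulation layer: what the host OS sees as a local disk.

    The host addresses blocks by LBA only; the layer silently maps each
    access onto a namespace on the remote target.
    """

    def __init__(self, target: RemoteTarget, nsid: int, num_blocks: int) -> None:
        self._target = target
        self._nsid = nsid
        self.num_blocks = num_blocks

    def _check(self, lba: int) -> None:
        if not 0 <= lba < self.num_blocks:
            raise ValueError(f"LBA {lba} out of range")

    def read_block(self, lba: int) -> bytes:
        self._check(lba)
        return self._target.read(self._nsid, lba)

    def write_block(self, lba: int, data: bytes) -> None:
        self._check(lba)
        if len(data) != BLOCK_SIZE:
            raise ValueError("writes must be exactly one block")
        self._target.write(self._nsid, lba, data)


if __name__ == "__main__":
    target = RemoteTarget()
    target.create_namespace(1)
    disk = EmulatedLocalDisk(target, nsid=1, num_blocks=1024)
    disk.write_block(7, b"\x01" * BLOCK_SIZE)
    print(disk.read_block(7)[:4])  # prints b'\x01\x01\x01\x01'
```

The host code only ever calls `read_block` and `write_block`, so swapping the in-memory target for a real fabric transport would not change what the host sees, which is the property the emulation model relies on.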

One proposed way to implement the data plane for disk emulation is by using so-called "Smart NICs"; see section 8.6. These devices typically include multiple programmable cores combined with a NIC data plane. For example, Mellanox BlueField and Broadcom Stingray products fall into this category.

Enterprise Strategy Group is a division of TechTarget.

TechTarget editor Jen English contributed to this report.