
Google Filestore adds Elastifile tech for high-scale tier

Google's new Elastifile-based Filestore High Scale gives customers a higher-performing file storage option than the cloud provider's original basic tiers.

The first Google Filestore product to integrate technology from the cloud provider's 2019 acquisition of Elastifile emerged this week in a beta release of an SSD-based "High Scale" tier.

Google claims that Filestore High Scale can scale up to 320 TB and deliver a maximum throughput of 16 GB per second and 480,000 IOPS for its Compute Engine and Kubernetes Engine instances that require file storage. The new Elastifile-based managed service aims at high-performance workloads, including data analytics, media rendering, genomics processing, financial modeling and electronic design automation.

Marc Staimer, president of Dragon Slayer Consulting, said Google added Filestore High Scale to compete with the other major cloud providers and improve performance and scalability over the basic cloud file storage that it originally offered.

Staimer said enterprises generally use cloud file storage from the major providers when they also run the applications that use it in the public cloud, because "latency can kill performance." But they pay a substantial premium to do so. Staimer estimated that, over a three-year period, on-premises file storage would cost less than 10% of the 30 cents per GB per month that major cloud providers charge.

But Staimer said some organizations want to get out of the data center business entirely, and others find the elasticity and convenience of public cloud file storage make sense for certain applications -- especially if they need a distributed file system that can provide access to users or clients in different regions through a single global namespace.

Using Google Filestore for COVID-19 research

Christoph Gorgulla, a postdoctoral research fellow at Harvard Medical School, uses the Google Cloud Platform (GCP) for the VirtualFlow application that he developed to virtually screen billions of molecules against a target protein to speed the discovery of drug candidates and potential treatments for diseases. Harvard researchers are now using VirtualFlow to find therapies for COVID-19.

VirtualFlow's storage requires a file system that can handle the heavy load that thousands of GCP virtual machines concurrently generate. Gorgulla said researchers don't have the time to set up, manage and monitor a complex file system cluster, and Google's Elastifile-based cloud file storage removes the burden.

The research team first tried Google's legacy Filestore, but Gorgulla said it wasn't powerful enough. Other alternatives they considered included Lustre, Quobyte, Ceph, BeeGFS, PingFS and OrangeFS. Gorgulla said Harvard selected the Elastifile-based Google Filestore for its "extremely powerful" performance, customization capabilities and simplicity of use, with its helpful graphical user interface.

Gorgulla said Google Filestore High Scale might cost a bit more than some other options. But he said the cloud file system enables the team members to expand storage on the fly if they need more capacity and to deliver the performance VirtualFlow needs, with the GCP-based compute nodes of the SLURM cluster connected to Filestore High Scale.

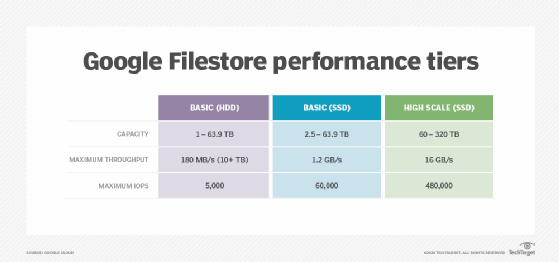

Google Filestore performance tiers

Google Cloud will now have three Filestore performance tiers: Basic with HDDs, Basic with SSDs and the new High Scale with SSDs. All three support NFS and use a unified API, so customers can move between tiers without changes to their applications. But data does need to be copied from one tier to another when customers switch, a Google spokesperson said.

The Basic HDD and SSD options target workloads such as enterprise applications and software development and testing, and offer a maximum capacity of 63.9 TB. The Basic SSD tier can provide up to 1.2 GB per second of throughput and 60,000 IOPS.
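To make the tier limits concrete, here is a minimal Python sketch that picks the smallest SSD tier whose quoted maximums cover a workload. The limits are the figures cited in this article; the `TierLimits` class and the `smallest_fitting_tier` function are hypothetical names for illustration and are not part of any Google API. Basic HDD is left out because the article gives no throughput or IOPS figures for it.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class TierLimits:
    name: str
    max_capacity_tb: float
    max_throughput_gb_per_s: float
    max_iops: int


# Maximums as quoted in this article, ordered from smallest to largest tier.
# Real limits may vary by region and configuration (assumption: they do not here).
TIERS = [
    TierLimits("Basic SSD", 63.9, 1.2, 60_000),
    TierLimits("High Scale SSD", 320.0, 16.0, 480_000),
]


def smallest_fitting_tier(
    capacity_tb: float, throughput_gb_per_s: float, iops: int
) -> Optional[str]:
    """Return the first tier whose quoted maximums cover all three needs."""
    for tier in TIERS:
        if (
            capacity_tb <= tier.max_capacity_tb
            and throughput_gb_per_s <= tier.max_throughput_gb_per_s
            and iops <= tier.max_iops
        ):
            return tier.name
    return None  # The workload exceeds every tier listed above.


if __name__ == "__main__":
    print(smallest_fitting_tier(10, 0.5, 20_000))
    print(smallest_fitting_tier(100, 5.0, 100_000))
```

A workload that needs 100 TB, for example, falls outside the 63.9 TB Basic ceiling and lands on High Scale regardless of its throughput needs.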

Filestore Basic is the same product that Google Cloud offered in the past and does not use the Elastifile technology. Google collaborated with Elastifile on a fully managed file storage service that launched in early 2019 and acquired the startup last summer. Elastifile's scale-out file system technology is now included only in the new High Scale option, but a Google spokesperson said Google will improve all Filestore tiers.

Cloud file storage pricing

Pricing for Google Filestore varies by region. The monthly charge for the new High Scale SSD service, which is currently available only in select regions, is 30 cents per GB in Iowa (us-central1). The fee for the Basic SSD/premium option is the same, and the cost for the Basic HDD/standard service is 20 cents per GB.
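As a rough illustration of what those rates mean for a bill, the sketch below multiplies provisioned capacity by the Iowa (us-central1) prices quoted above. It assumes billing is a flat rate per decimal GB per month with no minimums, discounts or network charges, which the article does not confirm; the tier keys and the `monthly_cost_usd` function are made-up names for this example.

```python
# Iowa (us-central1) list prices quoted in this article, in cents per GB per month.
PRICE_CENTS_PER_GB_MONTH = {
    "high_scale_ssd": 30,
    "basic_ssd": 30,
    "basic_hdd": 20,
}


def monthly_cost_usd(tier: str, capacity_gb: int) -> float:
    """Estimate the monthly charge in US dollars for a provisioned capacity.

    Assumes a flat per-GB rate; integer cents avoid floating-point drift.
    """
    if capacity_gb < 0:
        raise ValueError("capacity_gb must be non-negative")
    cents = PRICE_CENTS_PER_GB_MONTH[tier] * capacity_gb
    return cents / 100


if __name__ == "__main__":
    # 10 TB (10,000 GB) provisioned on each tier.
    for tier in PRICE_CENTS_PER_GB_MONTH:
        print(tier, monthly_cost_usd(tier, 10_000))
```

Under these assumptions, 10 TB on High Scale or Basic SSD comes to $3,000 a month, and the same capacity on Basic HDD comes to $2,000.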

Google's cloud competitors have also been fortifying their file storage. Amazon's NFS-based Elastic File System offers options for standard (30 cents per GB/month) and infrequent access (25 cents per GB/month). Amazon also has FSx for Windows File Server for business applications and FSx for Lustre for high-performance workloads. Vendors such as NetApp and Qumulo also offer file storage options on AWS.

Microsoft's cloud file storage includes the SMB-based Azure Files, at 24 cents per GiB per month (22.4 cents per GB), and Avere vFXT for Azure, a high-performance file system that can cache active data in Azure compute for high-performance workloads.
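The GiB-to-GB conversion in the Azure Files price can be checked with a few lines of Python. A GiB is 2^30 bytes and a GB is 10^9 bytes, so a per-GiB price shrinks by about 7% when restated per GB. The function name `price_per_gb` is only illustrative.

```python
BYTES_PER_GIB = 2**30  # binary gibibyte
BYTES_PER_GB = 10**9   # decimal gigabyte


def price_per_gb(price_per_gib: float) -> float:
    """Restate a per-GiB price as a per-GB price in the same currency unit."""
    return price_per_gib * BYTES_PER_GB / BYTES_PER_GIB


if __name__ == "__main__":
    # Azure Files price quoted in the article: 24 cents per GiB per month.
    print(round(price_per_gb(24.0), 1))  # prints 22.4
```

Normalizing to the same unit puts the Azure figure on the same footing as the per-GB prices quoted for Google and Amazon.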

Juan Orlandini, chief architect in the cloud and data center transformation division at IT consultancy Insight Enterprises, said the consumption of cloud file services has been lower than the use of on-premises file storage largely because the developers who build applications in cloud-native format learned to write to object storage APIs. He said, by contrast, developers who write applications for on-premises systems grew up with NFS and CIFS/SMB file services. As a result, the public cloud initially did not offer file services that were performant or scalable, and object storage consumption has tended to be limited in on-premises environments, he said.

"Over time, that's starting to change," Orlandini said. "More and more traditional workloads are being moved into the public cloud, and they are written and expect file services. So you can see native file services from all of the major cloud providers, and they're also partnering directly with the likes of NetApp and [Dell] EMC. I think what we're going to see over the next few years is a leveling out of which protocol is consumed, as both developers and applications either become more familiar with one of the modes or their workloads start shifting where they live."