
New Intel Ice Lake processors boost performance, security

Intel launches third-generation Xeon Scalable processors that bolster security, accelerate common data center workloads by 46% on average and support up to 40 cores per processor.

Intel at long last launched new performance-boosting third-generation Xeon Scalable server processors -- code-named Ice Lake -- to anchor a data center portfolio that includes its Optane persistent memory and storage and Ethernet adapters.

A 46% average speed increase for common data center workloads isn't the only advantage Intel claims for the server processors, which were originally due for release in late 2019. Ice Lake also integrates Intel's Deep Learning (DL) Boost technology to improve AI application performance and adds features such as Software Guard Extensions (SGX) and Crypto Acceleration to enhance security.

Built with Intel's 10-nanometer process technology, the new third-generation Xeon Scalable processors enable up to 40 cores per processor, compared to 28 at the peak with the prior Cascade Lake processors. The new Intel technology also supports up to 6 TB of system memory and up to eight channels of DDR4-3200 memory per server socket.
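To put the memory figures in perspective, the short Python sketch below estimates the theoretical peak memory bandwidth per socket from the eight channels of DDR4-3200 cited above. It assumes the standard 64-bit (8-byte) data path per DDR4 channel. The function and constant names are illustrative, and the result is a ceiling rather than a measured figure.

```python
# Rough estimate of theoretical peak memory bandwidth per socket.
# Assumption: each DDR4 channel has a 64-bit (8-byte) data path.

TRANSFERS_PER_SECOND = 3200e6  # DDR4-3200: 3,200 megatransfers per second
BYTES_PER_TRANSFER = 8         # assumed 64-bit channel width
CHANNELS_PER_SOCKET = 8        # per the article


def peak_memory_bandwidth_gb_s(channels=CHANNELS_PER_SOCKET,
                               transfers_per_second=TRANSFERS_PER_SECOND,
                               bytes_per_transfer=BYTES_PER_TRANSFER):
    """Return the theoretical peak bandwidth in GB/s (1 GB = 1e9 bytes)."""
    return channels * transfers_per_second * bytes_per_transfer / 1e9


if __name__ == "__main__":
    print(f"Peak per socket: {peak_memory_bandwidth_gb_s():.1f} GB/s")
```

Under those assumptions, the peak works out to about 204.8 GB/s per socket. Sustained bandwidth in real workloads will be lower.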

PCIe 4.0 support added

Intel Xeon Scalable processors also finally add support for performance-doubling PCIe 4.0, enabling 64 lanes of PCIe Gen 4 in a single-socket server and 128 lanes in a dual-socket server. AMD's Epyc processors have supported PCIe Gen 4 since 2019, when enterprise NVMe-based PCIe 4.0 SSDs also started to roll out. Until now, the main option for PCIe 4.0 SSDs has been AMD-based servers from vendors such as Dell, Lenovo and Supermicro.
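The "performance-doubling" claim follows from the PCIe signaling rates: PCIe 3.0 runs at 8 GT/s per lane and PCIe 4.0 at 16 GT/s, both with 128b/130b encoding. The sketch below estimates usable bandwidth per direction for the lane counts mentioned above. The names are illustrative, and the figures ignore protocol overhead beyond line encoding, so treat them as upper bounds.

```python
# Approximate per-direction PCIe bandwidth from signaling rate and encoding.
# Assumption: only 128b/130b line encoding is deducted; packet and protocol
# overhead would reduce real-world throughput further.

ENCODING_EFFICIENCY = 128 / 130  # 128b/130b encoding used by Gen 3 and Gen 4
GIGATRANSFERS_PER_SECOND = {"PCIe 3.0": 8.0, "PCIe 4.0": 16.0}


def lane_bandwidth_gb_s(generation):
    """Usable GB/s per lane, per direction (one transfer carries one bit)."""
    return GIGATRANSFERS_PER_SECOND[generation] * ENCODING_EFFICIENCY / 8


def total_bandwidth_gb_s(generation, lanes):
    """Aggregate GB/s per direction across the given number of lanes."""
    return lane_bandwidth_gb_s(generation) * lanes


if __name__ == "__main__":
    for lanes in (64, 128):  # single-socket and dual-socket lane counts
        gen3 = total_bandwidth_gb_s("PCIe 3.0", lanes)
        gen4 = total_bandwidth_gb_s("PCIe 4.0", lanes)
        print(f"{lanes} lanes: Gen 3 ~{gen3:.0f} GB/s, Gen 4 ~{gen4:.0f} GB/s")
```

By this estimate, 64 lanes of PCIe 4.0 provide roughly 126 GB/s in each direction, twice what the same number of Gen 3 lanes would deliver.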

Kuba Stolarski, a research director in IDC's enterprise infrastructure practice, said PCIe 4.0 support is not the only area where Intel is playing catch-up. Another example is support for chiplets -- what he said Intel calls "die disaggregated" -- that semiconductor makers combine to build powerful processors for workloads such as AI or machine learning. Even Intel's SGX security technology is not new with the Ice Lake release and had run into trouble before, he added.

But the combination of security technologies like the SGX platform, crypto acceleration and memory encryption is a key area where Intel hopes to differentiate with its Ice Lake release, especially for applications that run in or send data to and from multiple and remote locations, Stolarski said.

"Groundbreaking announcements are few and far between in this market," Stolarski said. "Where Intel leads is in their market share, their OEM distribution channels, trust with the customer base -- and they have been doing a lot of work with large customers to help them fine-tune the performance of their Intel-based infrastructure."

More VMs per server

Stolarski noted that Ice Lake's increased performance envelope -- from cores to speeds and memory bandwidth -- would enable enterprise server buyers to consolidate workloads by running more VMs and applications on a smaller footprint. It would also give them the means to expand their applications' resource capacity as their needs grow.

Intel partner Lightbits Labs said its testing showed Ice Lake-equipped servers could meet a customer's requirements for 9 million IOPS and 2 PB of capacity with five storage servers, compared to eight nodes with the prior Cascade Lake processors. Lightbits' LightOS software-defined storage lets customers pool fast NVMe-based PCIe flash drives in a cluster of Ethernet-networked x86 servers.

Intel invested in and collaborated with Lightbits Labs last September to optimize the startup's composable software-defined storage for its Xeon Scalable processors, second-generation Optane dual in-line memory modules (DIMMs), QLC-based 3D NAND SSDs, Ethernet 800 Series network adapters and field-programmable gate arrays.

Kam Eshghi, chief strategy officer at Lightbits Labs, said the new Ice Lake processors enable the LightOS storage to increase per-server performance by 50% to 60% and storage density by six times over Intel's Cascade Lake predecessors. Eshghi said the new memory architecture enables Lightbits to use more Optane DIMMs and expand per-socket memory to store more metadata and facilitate greater data reduction and higher usable capacity.
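The Lightbits figures can be cross-checked with simple arithmetic. The sketch below divides the customer's 9 million IOPS target across five Ice Lake servers and eight Cascade Lake nodes, then compares the per-server results. It assumes IOPS, not the 2 PB capacity requirement, is what determined the node count, which the article does not state. The names are illustrative.

```python
# Back-of-the-envelope check of the Lightbits node-count comparison.
# Assumption: the IOPS target, not capacity, set the number of servers.

TARGET_IOPS = 9_000_000
ICE_LAKE_SERVERS = 5
CASCADE_LAKE_SERVERS = 8


def iops_per_server(target_iops, servers):
    """IOPS each server must sustain to meet the cluster-wide target."""
    return target_iops / servers


def per_server_gain(new_servers, old_servers, target_iops=TARGET_IOPS):
    """Fractional per-server IOPS increase when fewer servers hit the target."""
    new = iops_per_server(target_iops, new_servers)
    old = iops_per_server(target_iops, old_servers)
    return new / old - 1


if __name__ == "__main__":
    gain = per_server_gain(ICE_LAKE_SERVERS, CASCADE_LAKE_SERVERS)
    print(f"Implied per-server gain: {gain:.0%}")
```

Under that assumption, each Ice Lake server handles about 1.8 million IOPS versus roughly 1.1 million per Cascade Lake node, an increase of about 60%. That is consistent with the upper end of the 50% to 60% range Eshghi cited.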

Delays put AMD in position to gain share

Daniel Newman, principal analyst at Futurum Research and CEO of Broadsuite Media Group, said, "Ice Lake marks an important moment for Intel. This generation has been long awaited, and the delays drew media and market ire that momentarily shifted the landscape for Intel and also put AMD in a position to gain market share."

The original late 2019 launch date got pushed to the fourth quarter of 2020, Newman said. Intel launched its Ice Lake processors for mobile and client devices on Aug. 1, 2019, but spent more time on the server processors. A company spokesperson said Intel was sampling Ice Lake to customers throughout 2020 and went into production at the end of last year.

Newman said strong benchmarks and early reports on Ice Lake would put Intel into a solid competitive position against AMD's latest variants, especially given the flexibility of the new platform to address the needs of its largest and most diverse clients.

"Intel's turning a corner -- not just with the release of its newest third-generation Xeon, but with its commitments to continue to innovate to meet the ever-changing demands of the cloud, on-prem and edge," Newman said. "Competition will continue to be tough -- not just from AMD, but from Arm as well. However, reports of Intel's demise have been largely overstated."

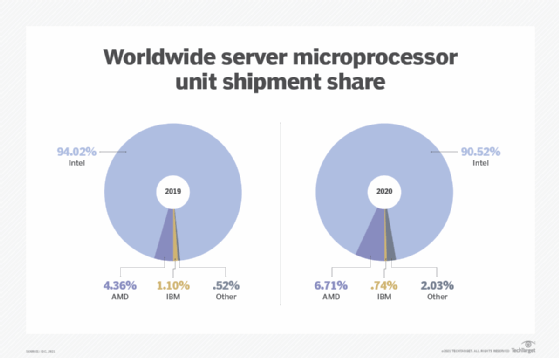

Intel commanded 94% of worldwide server microprocessor unit share in 2019, far ahead of AMD at 4.4% and IBM at 1.1%, according to IDC. AMD gained ground to reach 6.7% in 2020, but Intel still dominated at 90.5%.

IDC research vice president Shane Rau said Intel has been expanding its total addressable market (TAM) since its first generation of Xeon Scalable processors. He said more processor SKUs are adaptable to more system types, including data center servers, edge servers, storage and networking. Mixing and matching the CPUs with other data processing types also expands the TAM of workloads the CPUs can support to more AI, cloud and virtualization use cases, Rau said. He said enterprise users, in turn, would be more likely to find a processor to target the workloads they want to run on their servers.

"There are more versions of processors that might appeal to specific end-user or customer types," Rau said. "There will be more choices of processors with more memory support, larger caches, faster clock speeds and, of course, socket types -- one socket, two sockets, four sockets and above."

Carol Sliwa has been a TechTarget senior writer since 2008. Her coverage area includes enterprise architecture, flash, memory and storage drive technology.