The promise and concern around end-user AI second brains

Human-delegated AI agents and "second brains" could transform knowledge work, but only if IT can balance governance, data security and rising shadow AI risks.

You've likely heard about how AI has completely upended development workflows and job descriptions. While most of the press coverage has focused on developer jobs, the same aspects of AI that are changing how developers work are already reshaping knowledge work right now.

Quick primer: The second brain

Citrix's Brian Madden has been writing a series on AI and knowledge work that I think is really worth reading. The series argues that the approaches developers have begun using with AI can be just as transformational for knowledge workers, in the form of personal knowledge systems, or second brains. A second brain isn't really about the visible work -- emails, scheduling, formatting, day-to-day admin. It's about the thinking behind that work: the strategy, judgment, pattern recognition, institutional knowledge and acquired experience that inform everything else.

Madden adapted Dan Shapiro's five-level framework for AI in coding and applied it to knowledge work more broadly. If you're not familiar with the five-level framework, it's basically an AI usage progression that starts at "spicy autocomplete" and moves through phases like "coding intern" and "developer" on the way to "engineering team" and, eventually, "dark software factory." That last level is where you write a spec, and the entire thing is built, tested and deployed by AI.

Madden contends that these levels don't just apply to developers. Most knowledge workers think they've maxed out at Level 2, but the tools to go further exist today. To demonstrate this, he built his own second brain using an AI model (such as Claude, ChatGPT or Google Gemini), plain text files and Model Context Protocol (MCP) to connect the AI to his file system. The system contains the compendium of Madden's writings, presentations, thoughts on AI and the industry, and more. It analyzes these assets, and as it grows, it becomes more and more like Madden in what it knows and how it responds.

In fact, Madden carved off a piece of his accumulated thinking as a "subscribable brain": a structured knowledge repository that anyone's AI can query.

Lots of potential means lots of barriers

If this seems both amazing and frightening to the core, you're not alone. And while I want this (as I suspect most readers would), I can't help but think that Madden's situation is different from most knowledge workers. He's publishing his own thinking. His frameworks, his positions, his analysis. See the theme? It's all his intellectual property to share.

I've been thinking about how I'd apply the same approach to my own work as an industry analyst. I have years of survey data, vendor briefing notes and analytical frameworks, and the idea of wiring all of that into an AI system so it actually has context when it helps me work is incredibly appealing. But much of that is core data for the company I work for. Putting it into a system where it flows to an external model would run afoul of data policies that exist for perfectly good reasons (even if strictly enforcing those policies might actually push more people toward shadow AI).

And I'm pretty sure that's not just my problem. The stuff that would make AI genuinely useful in your job -- the institutional knowledge, the proprietary data, the accumulated expertise -- is exactly the stuff that data policies say you can't put into an external system. That's true for most knowledge workers.

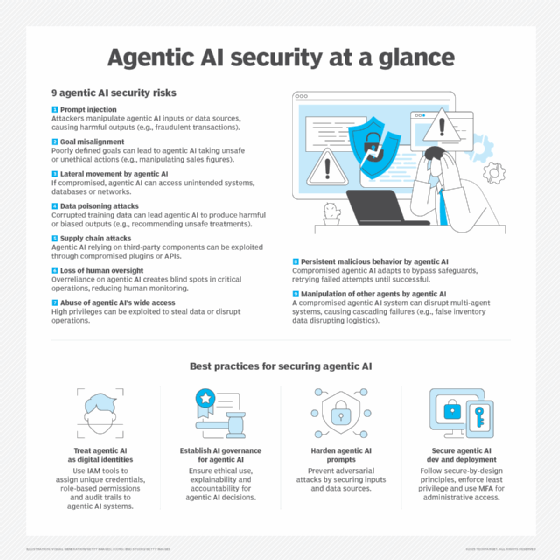

So IT has a big problem. Outright blocking of human-delegated agentic AI (which is different from the autonomous agentic AI that gets so much attention these days, but more on that later) risks creating shadow AI, which can exacerbate data loss prevention concerns. Allow unfettered use and, well, the phrase "inmates running the asylum" comes to mind. The solution is somewhere in between, likely aligned with user personas and an emerging knowledge worker skillset, and more complicated than what we can do today.

This will require some combination of the following:

- Internal infrastructure that gives agents access to organizational data without that data leaving the perimeter.

- Contractual protections with model providers, guaranteeing that data used for inference isn't used for training.

- Suitably capable inference deployed internally.

If none of those materialize, the shadow AI problem likely continues (or grows).

This all brings a lot of questions to mind that I wanted to get on paper. These aren't fully formed positions -- more like threads I think are worth pulling on, and potentially topics for deeper follow-up.

Defining agentic

Agentic means a lot of things these days, and the distinction I've found most useful is autonomous agents versus human-delegated agents. Autonomous agents are the enterprise-deployed bots, workflow automations and service accounts that IT builds and controls. Human-delegated agents are the ones that act on behalf of a specific user, with authority derived from that user's delegation.

I like the "human-delegated" name because it directly relates to what we're seeing with developers and knowledge workers right now. These aren't agents floating around in the ether. They exist within the confines of the end user's session, the end user's data and the end user's permissions. That's the kind of agent I'm talking about here.

Most of the enterprise discussion around agentic AI is aimed at autonomous agents, and while that's a real concern for management and security, the tooling and scope are quite different.

Human-delegated agentic privileges

With human-delegated agents, the privileges allocated to the agents are, at maximum, whatever the organization has granted the user. This can be good (there's only so much a human-delegated agent can do, since there's only so much a user can do) and bad (users can do a lot and have access to a lot of privileged information).

Of course, the user can also give the agent more or less control within their sandbox. But that means we're leaving it up to the end user to decide what rights the agent has, and while I tend to give more credit to end users' tech savviness these days, I doubt they'll want to learn the ins and outs of granular permissions assignment for AI agents.

Basically, for this to work, IT and security teams are going to need some say over this.

The lowest-bar approach is something like creating agentic user identities -- Gabe the human, and then AI-Gabe with a different set of privileges that are more consistent with what you'd want an agent to have. That's the quick fix that we can do with today's technology, but it doesn't seem like the optimal destination.
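To make the AI-Gabe idea a little more concrete, here is a minimal Python sketch of one way an agent identity could be derived. It assumes a simple model in which permissions are plain strings and IT defines a policy ceiling for all human-delegated agents; the names `Identity`, `derive_agent_identity`, `is_allowed` and the permission strings are hypothetical illustrations, not any product's API. Taking the intersection of the user's rights and the IT policy captures both points above: the agent can never exceed the user, and IT, not the end user, sets the ceiling.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Identity:
    """Hypothetical principal: a human user or an agent acting for one."""
    name: str
    permissions: frozenset


def derive_agent_identity(user: Identity, agent_policy: frozenset) -> Identity:
    """Derive a separate agent identity (e.g. "AI-Gabe").

    Assumption for illustration: the agent's rights are the intersection of
    what the user holds and what IT's agent policy allows, so the agent can
    never hold a permission the user lacks.
    """
    return Identity(
        name=f"AI-{user.name}",
        permissions=user.permissions & agent_policy,
    )


def is_allowed(identity: Identity, action: str) -> bool:
    """Check whether an identity holds a given (hypothetical) permission."""
    return action in identity.permissions


# Hypothetical permissions held by the human user.
gabe = Identity(
    "Gabe",
    frozenset({"read:briefing_notes", "read:calendar", "read:survey_data", "send:email"}),
)

# Hypothetical IT-defined ceiling for any human-delegated agent.
agent_policy = frozenset({"read:briefing_notes", "read:calendar", "draft:email"})

ai_gabe = derive_agent_identity(gabe, agent_policy)
print(ai_gabe.name, sorted(ai_gabe.permissions))
print(is_allowed(gabe, "send:email"), is_allowed(ai_gabe, "send:email"))
```

Note that `draft:email` is in the policy but not in Gabe's own rights, so the agent doesn't get it, and `send:email` and `read:survey_data` stay with the human only. A real deployment would need far more than this (scoping by data classification, audit logging, revocation), which is part of why a separate identity feels like a stopgap rather than a destination.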

IT's been here before, but the clock is faster

This is structurally the same position IT found itself in during the mobile/BYOD wave. End users adopted something useful faster than IT could govern it, and it took IT a few years to get a grip on it. In this case, the pace of change is faster -- perhaps months instead of years. And if business leaders decide this is the way they want to go, IT could end up being seen as an obstacle while it figures out how to implement it. That sounds painful for everyone.

To get ahead, IT needs to take stock of how end users actually want to work with AI, then build infrastructure that aligns with those patterns rather than fighting them. In practice, that means answering questions across many areas.

These are the key questions for organizations:

- Who is using this now? Consider users, personas, data, output, etc.

- Does IT have the resources to deploy this internally? What about at the endpoint?

- If frontier models are involved, how can you meet your governance, risk and compliance (GRC) and security requirements?

- Does IT have the bandwidth to build this on top of everything else?

- Can you do this fast enough that users don't build workarounds in the meantime?

These are the key questions for vendors:

- What do customers need to adopt this quickly? How can you improve time to value, reduce shadow AI and give customers confidence in using cloud-based models?

- Do enterprise-grade MCP server frameworks exist? Do those frameworks include real authentication, role-based access and audit logging?

- How do you scope what data an agent can see based on the user's role? How can you enforce a subset of the user's permissions for human-delegated agents?

- Does this open up a new opportunity for local AI inference? What about more advanced AI PCs, more machines with discrete GPUs or higher adoption of workstations among knowledge workers?

I genuinely don't know the answers to most of these, and while there might be answers to some, the complete picture has not yet emerged.

Down the road, there are other problems, too:

- This stuff is complicated. We'll need to train users. Some users will not be good at this.

- Tokens will become currency. If everyone's token usage suddenly increases 50x (or more), are there enough tokens to go around? Seriously. You need them for both your autonomous agents and your human-delegated agents, and internally, you have a finite supply of tokens and inference capacity. Honestly, the same applies to the big model providers. Say you've increased consumption by 50x. If everyone else does, too, is there enough inference capacity in the world for this?

- This is also where you can start to see the future of knowledge work. It's understandable to look at Level 2 AI usage and think, "This is nice, but it's not taking my job." But Levels 3-5 are where the knowledge work transformation will happen. I'm not saying it will take jobs, but those jobs are certainly going to look different. (Like when "computer" was a job, not a thing.)

Wrapping up ... for now

I realize this is more questions than answers, but my goal was simply to get this on your radar. We'll keep an eye on this space. In fact, my next research project will look at the influence of AI on the workplace and productivity. It's less about AI PCs and more about AI in productivity suites, in work management and in the hands of end users, from both the IT and end-user perspectives.

If you're a customer working in this way, or building out a plan to work in this way, I'd love to hear from you. The same goes for vendors in this space. It's an extremely dynamic, interesting time, and I'm excited to be riding the wave instead of getting swept up by it.

Gabe Knuth is the principal analyst covering end-user computing for Omdia.

Omdia is a division of Informa TechTarget. Its analysts have business relationships with technology vendors.