
Get to know edge storage and the technology around it

Edge computing changes how data is managed between the network endpoints and data centers or the cloud. Learn about the various components that make up an edge system.

Edge computing is no longer theoretical. It's making its way into the enterprise, with products and services that move compute and storage closer to where data is generated and used.

IDC recently underscored this trend with a prediction that more than half of new enterprise IT infrastructure will be deployed at the edge within three years, up from 10% today. So, what does this mean for edge storage and storage infrastructure in general?

The edge buildout and the massive amounts of data generated there are creating a new set of challenges for storage admins. They face questions about where to locate storage, what kind of storage to deploy and how to cope with harsh conditions in edge environments. Admins must also contend with space and infrastructure limitations.

If you're among those facing these challenges, then it's time to get up to speed on edge computing and storage. The following terminology guide will help you better understand what's going on with storage at the edge.

Edge computing

Data is rarely static and often moves from where users are collecting and using it to the cloud or to a central data center for analysis, processing and storage. But data centers and clouds are often far from where the data is collected. Transmission takes time and inserts latency and inefficiencies into the processing equation. That's time that many IoT applications can't afford. For instance, an autonomous vehicle can't wait for a distant server to decide whether it should swerve right or left; it needs a real-time response. Edge computing shortens that transmission distance by putting compute and storage closer to where the data is collected. This approach essentially decentralizes the traditional data center.
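To put rough numbers on that delay, here is a back-of-the-envelope sketch. It assumes signals travel through optical fiber at about 200,000 km/s, and it uses hypothetical distances and hypothetical fixed overheads for routing, queuing and processing (the `overhead_ms` values are illustrative guesses, not measurements). It estimates how far a vehicle moving at 100 km/h travels before a response arrives.

```python
# Back-of-the-envelope latency estimate. Every number here is an illustrative
# assumption, not a measurement.

FIBER_KM_PER_S = 200_000  # approx. signal speed in optical fiber (~2/3 of c)


def round_trip_ms(distance_km: float, overhead_ms: float = 0.0) -> float:
    """Round-trip time in ms: fiber propagation both ways plus a fixed,
    hypothetical allowance for routing, queuing and processing."""
    propagation_ms = 2 * distance_km / FIBER_KM_PER_S * 1000
    return propagation_ms + overhead_ms


def meters_traveled(speed_kmh: float, delay_ms: float) -> float:
    """Distance a vehicle covers while it waits delay_ms for an answer."""
    return speed_kmh / 3.6 * delay_ms / 1000


# Hypothetical scenarios: (distance in km, overhead in ms).
scenarios = {"distant cloud": (1500, 50.0), "edge node": (5, 5.0)}
for label, (km, overhead) in scenarios.items():
    rtt = round_trip_ms(km, overhead)
    print(f"{label}: {rtt:.2f} ms round trip, "
          f"{meters_traveled(100, rtt):.2f} m traveled at 100 km/h")
```

Under these assumptions, the vehicle covers about 1.8 m before the distant cloud answers versus about 0.14 m with a nearby edge node. Propagation alone accounts for only 15 ms of the cloud round trip, so the overhead figures dominate; real values depend heavily on the network path.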

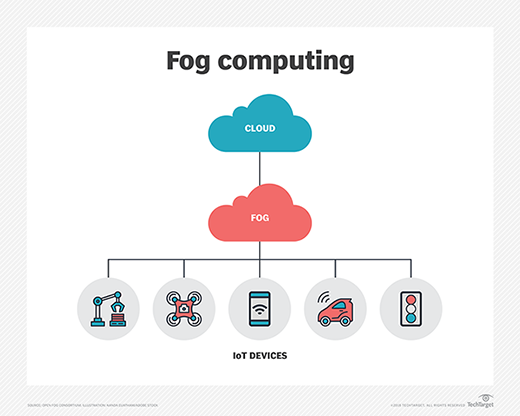

Fog computing

Fog computing refers to a decentralized computing infrastructure in which data, applications, compute and storage sit between where the data originates and the cloud. Fog computing brings the cloud's intelligence, processing, compute and storage capabilities closer to the data for faster analysis and processing. Like edge computing, fog reduces the inefficiencies that come with long-distance data transmission and helps address the privacy and security risks of moving data across networks.

Fog and edge computing differ in where the intelligence and compute power are located. With fog, intelligence resides on the LAN: data moves from endpoints to a fog gateway and is then routed for processing. With edge computing, intelligence and compute sit on an endpoint or a gateway, and edge devices determine whether to store data locally or send it elsewhere for additional analysis. Because a fog layer aggregates data from many endpoints, fog computing is generally more scalable and has a broader, more holistic view of the network.
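In the edge model, the store-or-forward decision happens on the device itself. The following is a minimal sketch of such a decision, assuming a hypothetical policy: readings inside an expected range are kept locally, while out-of-range readings, or any reading that arrives when local storage is full, are forwarded for further analysis. `EXPECTED_RANGE`, `RECORD_SIZE_BYTES` and `decide` are illustrative names, not part of any real product.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    STORE_LOCALLY = "store locally"
    FORWARD = "forward for further analysis"


@dataclass
class Reading:
    sensor_id: str
    value: float


# Hypothetical policy parameters, chosen only for illustration.
EXPECTED_RANGE = (10.0, 80.0)  # values the device can handle on its own
RECORD_SIZE_BYTES = 64         # assumed size of one stored reading


def decide(reading: Reading, free_bytes: int) -> Action:
    """Edge-style decision made on the device itself."""
    low, high = EXPECTED_RANGE
    if not low <= reading.value <= high:
        return Action.FORWARD  # anomaly: send on for broader analysis
    if free_bytes < RECORD_SIZE_BYTES:
        return Action.FORWARD  # local storage is full: offload instead
    return Action.STORE_LOCALLY


print(decide(Reading("temp-01", 42.0), free_bytes=4096).value)  # store locally
print(decide(Reading("temp-01", 95.0), free_bytes=4096).value)  # forward for further analysis
```

In a fog design, the same `decide` logic would run on the fog gateway rather than on each endpoint, with the endpoints simply sending everything to it.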

Network edge

The network edge is where an enterprise-owned network connects to a WAN, the internet, a public cloud or another network owned by a third party. IoT devices, edge gateways and other edge devices reside at the network edge, and it's also where edge computing and storage take place.

Edge device

Edge devices are typically hardware that sits where networks meet. An edge device controls the flow of data into or out of a network and can provide a variety of functions, such as data routing, monitoring, filtering, processing and edge storage. These devices have taken on new roles as IoT technology demands more intelligence, compute power and other services at the network's edge. Devices in this category include firewalls, edge routers and routing switches, as well as IoT sensors and actuators.
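The filtering role can be illustrated with a toy rule that an edge device might apply at the boundary between the enterprise-owned network and everything else. This sketch uses Python's standard `ipaddress` module. The enterprise prefixes and the allowed outbound ports are hypothetical choices; real firewalls and edge routers are configured through their own rule languages, not code like this.

```python
import ipaddress

# Hypothetical enterprise-owned address space; anything else is treated as a
# third-party network on the far side of the network edge.
ENTERPRISE_NETS = [ipaddress.ip_network("10.0.0.0/8"),
                   ipaddress.ip_network("192.168.0.0/16")]

# Hypothetical rule: only these destination ports may leave the network.
ALLOWED_OUTBOUND_PORTS = {443, 8883}  # HTTPS and MQTT over TLS


def is_internal(addr: str) -> bool:
    ip = ipaddress.ip_address(addr)
    return any(ip in net for net in ENTERPRISE_NETS)


def classify(src: str, dst: str) -> str:
    """Label a flow by whether, and in which direction, it crosses the edge."""
    src_in, dst_in = is_internal(src), is_internal(dst)
    if src_in and dst_in:
        return "internal"
    if src_in:
        return "outbound"
    if dst_in:
        return "inbound"
    return "transit"


def allow(src: str, dst: str, dst_port: int) -> bool:
    """Toy filtering decision applied by the edge device."""
    direction = classify(src, dst)
    if direction == "internal":
        return True
    if direction == "outbound":
        return dst_port in ALLOWED_OUTBOUND_PORTS
    return False  # drop unsolicited inbound and transit traffic


print(classify("10.1.2.3", "203.0.113.7"))    # outbound
print(allow("10.1.2.3", "203.0.113.7", 443))  # True
print(allow("203.0.113.7", "10.1.2.3", 22))   # False
```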

Hyper-converged edge system

When dealing with larger edge workloads, enterprises often turn to edge-optimized, hyper-converged systems. These systems provide self-contained compute and storage and reduce data transmission to and from a central data center or the cloud, cutting costs and latency-inducing bottlenecks. Hyper-converged systems provide an easily deployed, high-density compute and storage platform. They support a range of enterprise and service provider software stacks, and they can be managed remotely. To scale a hyper-converged architecture, admins can add compute nodes, memory and edge storage modules. The systems can also be configured as a distributed, centrally managed cluster.
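Scaling out by adding nodes can be sketched as a simple capacity calculation. This example assumes, hypothetically, that the cluster keeps a fixed number of copies of every block on different nodes (`replication_factor`), so usable storage is raw storage divided by that factor. The node specs are made up, and actual hyper-converged products use their own data protection schemes and overheads.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Node:
    cores: int
    memory_gb: int
    raw_storage_tb: float


def cluster_capacity(nodes: list[Node], replication_factor: int = 2) -> dict:
    """Aggregate resources of a hyper-converged cluster.

    Assumes (hypothetically) that each block is stored replication_factor
    times on different nodes, so usable storage = raw storage / factor.
    """
    if replication_factor > len(nodes):
        raise ValueError("need at least as many nodes as data copies")
    raw = sum(n.raw_storage_tb for n in nodes)
    return {
        "cores": sum(n.cores for n in nodes),
        "memory_gb": sum(n.memory_gb for n in nodes),
        "usable_storage_tb": raw / replication_factor,
    }


edge_node = Node(cores=16, memory_gb=128, raw_storage_tb=8.0)  # hypothetical spec
print(cluster_capacity([edge_node] * 3))  # {'cores': 48, 'memory_gb': 384, 'usable_storage_tb': 12.0}
print(cluster_capacity([edge_node] * 4))  # {'cores': 64, 'memory_gb': 512, 'usable_storage_tb': 16.0}
```

Adding a fourth identical node raises compute, memory and usable storage together, which is the scale-out behavior the hyper-converged model is built around.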

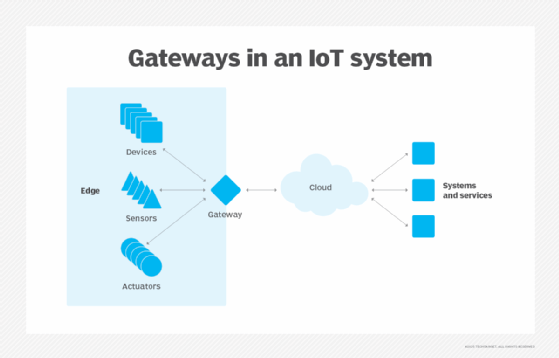

IoT gateways

These small servers sit between an organization's data center or cloud and various endpoint devices. IoT gateways provide the communication, security and admin capabilities needed to keep IoT networks running smoothly. They also run data processing and analytics functions, such as real-time data analysis and location tracking.

IoT gateways work well for applications that can't tolerate latency and need immediate feedback or instructions. They reduce the amount of data sent across the network for further processing or storage, minimizing network traffic, response times and transmission costs. Because these devices may be in moving vehicles or located in hard-to-reach places, they're often ruggedized to handle extremes in temperature, vibration, dust and pressure. IoT gateways can also provide an extra layer of data and network security with tampering detection, encryption and other safety features.
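Two of these gateway roles, reducing the data sent upstream and detecting tampering, can be sketched together. In this minimal example, the gateway collapses a window of raw sensor readings into one summary record and attaches an HMAC-SHA256 tag from Python's standard `hmac` module so the receiver can detect modification in transit. The shared key, the summary fields and the function names are hypothetical; real gateways may instead rely on TLS, hardware-protected keys or vendor-specific mechanisms.

```python
import hashlib
import hmac
import json
import statistics

# Hypothetical shared secret provisioned on the gateway and the receiving service.
SECRET_KEY = b"example-key-do-not-use-in-production"


def summarize(sensor_id: str, readings: list[float]) -> dict:
    """Collapse a window of raw readings into one compact record."""
    return {
        "sensor": sensor_id,
        "count": len(readings),
        "min": min(readings),
        "max": max(readings),
        "mean": round(statistics.fmean(readings), 3),
    }


def sign(record: dict) -> dict:
    """Attach an HMAC-SHA256 tag computed over a canonical JSON encoding."""
    payload = json.dumps(record, sort_keys=True).encode()
    tag = hmac.new(SECRET_KEY, payload, hashlib.sha256).hexdigest()
    return {"payload": record, "hmac": tag}


def verify(message: dict) -> bool:
    """Recompute the tag on the receiving side and compare in constant time."""
    payload = json.dumps(message["payload"], sort_keys=True).encode()
    expected = hmac.new(SECRET_KEY, payload, hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, message["hmac"])


raw = [21.4, 21.5, 21.7, 22.0, 21.9, 21.8]  # e.g., six temperature samples
msg = sign(summarize("temp-07", raw))
print(verify(msg))            # True
msg["payload"]["max"] = 99.0  # simulate tampering in transit
print(verify(msg))            # False
```

Here, six readings travel as a single record. With longer windows or higher sampling rates, the reduction grows, at the cost of losing the individual samples upstream.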

Micro data centers

Micro data centers are small, modular systems that include servers, storage, UPS, cooling and security functionality in one box to support data generation, processing and management at the edge. One example of their use is to link factory floor sensors to AI software that handles just-in-time manufacturing maintenance. Like other edge devices, they process data closer to its origin, cutting the amount of data transmitted to central data centers or the cloud and the costs of that transmission.

MicroSD cards

These flash memory cards, used for storage in smartphones and other mobile devices, serve the same purpose in edge devices. At about a quarter of the size of a regular SD card, microSD cards are among the smallest removable memory devices available. One example of their use is backup storage for video cameras. Micron Technology claims its microSD cards support three years of continuous recording, with a mean time between failures (MTBF) of 2 million hours.
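An MTBF figure is easier to interpret when converted into a failure probability over a period of time. This sketch assumes a constant failure rate (an exponential lifetime model), which is the usual way MTBF figures are read. It uses the 2 million-hour figure quoted above and a hypothetical fleet of 1,000 cameras with one card each. Note that MTBF is not an expected lifetime, and the three-year recording claim is a separate endurance specification.

```python
import math

MTBF_HOURS = 2_000_000  # vendor-claimed figure quoted above
HOURS_PER_YEAR = 8_760


def failure_probability(hours: float, mtbf: float = MTBF_HOURS) -> float:
    """P(a device fails within `hours`), assuming a constant failure rate."""
    return 1 - math.exp(-hours / mtbf)


afr = failure_probability(HOURS_PER_YEAR)             # annualized failure rate
three_year = failure_probability(3 * HOURS_PER_YEAR)
fleet = 1_000  # hypothetical number of deployed cards

print(f"annualized failure rate: {afr:.3%}")
print(f"expected failures among {fleet} cards over 3 years: {fleet * three_year:.1f}")
```

Under this model, the annualized failure rate works out to roughly 0.44%, or about 13 expected failures per 1,000 cards over three years of continuous operation.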

NVMe/TCP for edge storage

The NVMe/TCP specification provides NVMe-oF functionality across a standard Ethernet network without the need to reconfigure the network or add specialized equipment. NVMe-oF is a modern storage protocol that takes advantage of flash technology's capabilities in ways that older protocols can't. It fills the gap between DAS and SAN technology, supporting applications that need high throughput and low latency. With NVMe-oF, storage and compute can be disaggregated and scaled independently with minimal impact on latency. With NVMe/TCP, any IP network can be used to bring storage closer to the edge. The technology makes it possible to cluster several edge locations into a high-availability storage pool, or to run stateless edge instances that use storage at the aggregation layer.

System on a chip

Compact edge devices combine IoT sensors with a system on a chip (SoC). An SoC incorporates all the electronics necessary for a specific compute function on a single integrated chip. It typically integrates a CPU, GPU and RAM, along with other components, and it runs an OS and applications suited to its task. The miniature system is tailored for its specific function. For example, a listening device would include an audio receiver, along with other required parts. SoCs use less power and take up less space than systems with multiple chips. They often perform better than multichip systems and tend to be more reliable.