Amazon SageMaker

What is Amazon SageMaker?

Amazon SageMaker is a managed service in the Amazon Web Services (AWS) public cloud. It provides the tools to build, train and deploy machine learning (ML) models for predictive analytics applications. The platform automates the tedious work of building a production-ready artificial intelligence (AI) pipeline.

Machine learning has a range of uses and benefits. Among them are advanced analytics for customer data and back-end security threat detection.

Deploying ML models is challenging, even for experienced application developers. Amazon SageMaker aims to simplify the process. It uses common algorithms and other tools to accelerate the machine learning process.

Machine learning in AWS SageMaker

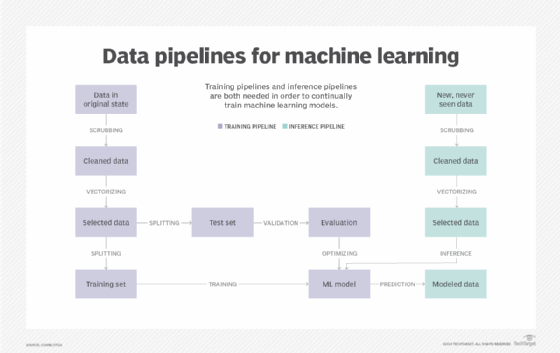

Machine learning is an iterative process. It requires workflow tools and dedicated hardware to process data sets. In a typical scenario, a data science team builds ML models in two steps or pipelines: training and inferencing.

Training teaches a model to behave in a certain way by recognizing recurring patterns in data sets. In inferencing, the trained model applies what it has learned to respond to new data. Once data scientists tune the ML model, software development teams convert the finished model into product or service application programming interfaces (APIs).
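The difference between the two steps can be shown with a toy example. The sketch below is plain Python and does not call SageMaker; the function names `train` and `infer` and the sample data are invented for illustration. Training fits a straight line to known data, and inference applies the fitted line to an input the model has not seen.

```python
# Toy illustration of the two steps: training, then inference.
# Plain Python only; nothing here calls SageMaker.

def train(xs, ys):
    """Fit y = slope * x + intercept by ordinary least squares."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    intercept = mean_y - slope * mean_x
    return slope, intercept


def infer(model, x):
    """Apply the trained model to a new, unseen input."""
    slope, intercept = model
    return slope * x + intercept


# Training: learn the pattern from historical (made-up) data.
model = train([1.0, 2.0, 3.0, 4.0], [3.0, 5.0, 7.0, 9.0])

# Inference: respond to a new data point.
prediction = infer(model, 10.0)
print(model, prediction)
```

In a real project the model would be far more complex, but the split is the same: training is done once on a large data set, while inference runs repeatedly against live requests.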

Many companies don't have the budget to bring in specialists and maintain resources dedicated to AI development. AWS SageMaker uses integrated tools to automate labor-intensive manual processes and reduce human error and hardware costs. ML modeling components are packaged in an AWS SageMaker tool set. Software capabilities are abstracted in intuitive SageMaker templates. They provide a framework to build, host, train and deploy ML models at scale in the Amazon public cloud.

How does Amazon SageMaker work?

AWS SageMaker simplifies ML modeling into three steps: preparation, training and deployment.

Prepare and build AI models

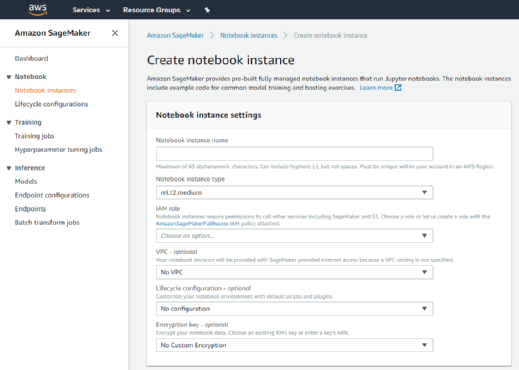

Amazon SageMaker creates a fully managed ML instance in Amazon Elastic Compute Cloud (EC2). The instance runs the open source Jupyter Notebook web application, which lets developers write, run and share live code.

The notebooks include drivers, packages and libraries for common deep learning platforms and frameworks. Developers can launch a prebuilt notebook, which AWS supplies for a variety of applications and use cases. They can then customize it according to the data set and schema on which the model will be trained.

Developers also can use custom-built algorithms written in one of the supported ML frameworks or any code that has been packaged as a Docker container image. SageMaker can pull data from Amazon Simple Storage Service (S3), and there is no practical limit to the size of the data set.

To get started, a developer logs into the SageMaker console and launches a notebook instance. SageMaker provides a variety of built-in training algorithms, such as linear regression and image classification, or the developer can import custom algorithms.

Train and tune

Developers doing model training specify the location of the data in an Amazon S3 bucket and the preferred instance type. They then initiate the training process. SageMaker's automatic model tuning searches for the set of parameters, known as hyperparameters, that best optimizes the algorithm. During this step, data is transformed to enable feature engineering.
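As a rough sketch of what "specifying the data location and instance type" looks like in practice, the snippet below assembles the request body that the AWS SDK for Python accepts in `boto3.client("sagemaker").create_training_job(**request)`. It only builds and prints a dictionary, so it runs without AWS credentials. The helper name `build_training_job_request`, the bucket, account ID, role, image URI and instance type are all placeholder assumptions, not values from this article.

```python
import json


def build_training_job_request(job_name, image_uri, role_arn,
                               train_s3_uri, output_s3_uri,
                               instance_type="ml.m5.xlarge"):
    """Assemble a CreateTrainingJob request body (hypothetical helper)."""
    return {
        "TrainingJobName": job_name,
        "AlgorithmSpecification": {
            "TrainingImage": image_uri,      # Docker image holding the algorithm
            "TrainingInputMode": "File",
        },
        "RoleArn": role_arn,
        "InputDataConfig": [{
            "ChannelName": "train",
            "DataSource": {
                "S3DataSource": {
                    "S3DataType": "S3Prefix",
                    "S3Uri": train_s3_uri,   # where the training data lives
                    "S3DataDistributionType": "FullyReplicated",
                }
            },
        }],
        "OutputDataConfig": {"S3OutputPath": output_s3_uri},
        "ResourceConfig": {
            "InstanceType": instance_type,   # the preferred instance type
            "InstanceCount": 1,
            "VolumeSizeInGB": 30,
        },
        "StoppingCondition": {"MaxRuntimeInSeconds": 3600},
    }


# All values below are placeholders for illustration only.
request = build_training_job_request(
    job_name="example-training-job",
    image_uri="123456789012.dkr.ecr.us-east-1.amazonaws.com/example-algorithm:latest",
    role_arn="arn:aws:iam::123456789012:role/ExampleSageMakerRole",
    train_s3_uri="s3://example-bucket/train/",
    output_s3_uri="s3://example-bucket/output/",
)
print(json.dumps(request, indent=2))
```

With valid credentials and real resource names, passing this dictionary to `create_training_job` would start the job. The instance count, volume size and runtime limit shown here are arbitrary and should be sized to the workload.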

Deploy and analyze

When the model is ready for deployment, the service automatically operates and scales the cloud infrastructure. It uses a set of SageMaker instance types that include several graphics processing unit accelerators optimized for ML workloads.

SageMaker deploys across multiple availability zones, performs health checks, applies security patches, sets up AWS Auto Scaling and establishes secure HTTPS endpoints to connect to an app. A developer can track and trigger alarms for changes in production performance via Amazon CloudWatch metrics.
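To make the CloudWatch step concrete, here is a minimal sketch of the parameters for an alarm on endpoint latency, in the shape accepted by `boto3.client("cloudwatch").put_metric_alarm(**alarm)`. The code only builds a dictionary and needs no AWS access. The helper name `build_latency_alarm`, the endpoint name and the 500 ms threshold are illustrative assumptions. The sketch also assumes the `ModelLatency` metric in the `AWS/SageMaker` namespace is reported in microseconds, which is worth confirming against the current AWS documentation.

```python
def build_latency_alarm(endpoint_name, variant_name="AllTraffic",
                        threshold_ms=500):
    """Assemble PutMetricAlarm parameters for a SageMaker endpoint.

    Hypothetical helper: it returns a dict and does not call AWS.
    """
    return {
        "AlarmName": f"{endpoint_name}-high-model-latency",
        "Namespace": "AWS/SageMaker",
        "MetricName": "ModelLatency",
        "Dimensions": [
            {"Name": "EndpointName", "Value": endpoint_name},
            {"Name": "VariantName", "Value": variant_name},
        ],
        "Statistic": "Average",
        "Period": 60,                 # evaluate one-minute averages
        "EvaluationPeriods": 5,       # five consecutive breaches trigger the alarm
        # Assumption: ModelLatency is in microseconds, so convert from ms.
        "Threshold": threshold_ms * 1000,
        "ComparisonOperator": "GreaterThanThreshold",
    }


alarm = build_latency_alarm("example-endpoint")
print(alarm["AlarmName"], alarm["Threshold"])
```

An alarm like this would fire when average model latency stays above the threshold for five minutes. The right threshold and evaluation window depend on the application's latency budget.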

What features does SageMaker have?

Amazon has rolled out extra features in SageMaker since its 2017 launch. The features are accessible in AWS SageMaker Studio, an integrated development environment (IDE) that consolidates all the capabilities.

Users have two ways to create a Jupyter notebook:

- as an Amazon EC2-powered ML instance directly in Amazon SageMaker; or

- as a web-based IDE instance in SageMaker Studio.

The automation tools in AWS SageMaker Studio help users to automatically debug, manage and track ML models. These SageMaker tools include the following:

- Autopilot enables AI models to be trained for a given data set and ranks each algorithm by accuracy.

- Clarify flags potential bias that could skew ML models.

- Data Wrangler is used to speed up data preparation.

- Debugger monitors the metrics of neural networks to simplify the debugging process.

- Edge Manager extends ML monitoring and management to edge devices.

- Experiments makes it easier to track different ML iterations, including how changes degrade or improve a model's accuracy.

- Ground Truth speeds up data labeling and helps to lower labeling costs when processing large AI training samples.

- JumpStart offers pretrained models and customizable, predesigned solutions that are deployed through AWS CloudFormation templates.

- Model Monitor watches deployed models to spot deviations, such as drift in incoming data, that negatively affect the accuracy of predictions.

- Notebook creates Jupyter notebooks with one click and transfers the content of a notebook for collaborative use.

- Pipelines offers developers a continuous integration and continuous delivery (CI/CD) service for ML workflows.

What are SageMaker use cases?

AWS SageMaker spans diverse industry use cases. Data science teams use SageMaker to do the following:

- access and share modeling code;

- accelerate production-ready AI modules;

- enhance data training and inferences;

- iterate more accurate data models;

- optimize data ingestion and output; and

- process large data sets.

According to Amazon, notable brands are using SageMaker in the following industries:

| Industry | Industry |
| --- | --- |
| Automotive | Hospitality |
| Cloud services | Media and entertainment |
| Data analytics | Pharmaceuticals |
| Earth sciences | Publishing |
| Electronics | Retail |
| Energy | Software and service |
| Finance and insurance | Transportation |
| Healthcare | Video and gaming |

Is SageMaker secure?

Because S3 is integrated in AWS SageMaker, the data used for testing, training and validation can be stored in a collaborative data lake. This enables users to securely interact with data using the AWS Identity and Access Management (IAM) framework.

Optionally, Amazon SageMaker encrypts models both in transit and at rest through the AWS Key Management Service (KMS). API requests to the service are executed over a Secure Sockets Layer (SSL) connection. SageMaker also stores code in volumes that are protected by security groups and offer encryption.

For enhanced data security, customers can launch SageMaker in an Amazon Virtual Private Cloud. That approach provides better control of data flowing to SageMaker Studio notebooks.
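The encryption and VPC options described above map onto optional fields in a SageMaker training job request. The sketch below adds them to an existing request dictionary of the kind passed to `boto3.client("sagemaker").create_training_job`. It is a hedged illustration: the helper name `with_security_settings`, the key ARN, subnet ID and security group ID are placeholders, and the code only manipulates dictionaries, so no AWS call is made.

```python
import copy


def with_security_settings(request, kms_key_id, subnet_ids, security_group_ids):
    """Return a copy of a training job request with encryption and VPC fields.

    Hypothetical helper: the input request is left unmodified.
    """
    secured = copy.deepcopy(request)
    # Encrypt model artifacts written to S3 with a KMS key.
    secured.setdefault("OutputDataConfig", {})["KmsKeyId"] = kms_key_id
    # Encrypt the storage volume attached to the training instances.
    secured.setdefault("ResourceConfig", {})["VolumeKmsKeyId"] = kms_key_id
    # Run the job inside the customer's VPC.
    secured["VpcConfig"] = {
        "Subnets": list(subnet_ids),
        "SecurityGroupIds": list(security_group_ids),
    }
    # Encrypt traffic between containers in distributed training.
    secured["EnableInterContainerTrafficEncryption"] = True
    return secured


# Placeholder values for illustration only.
base_request = {
    "OutputDataConfig": {"S3OutputPath": "s3://example-bucket/output/"},
    "ResourceConfig": {
        "InstanceType": "ml.m5.xlarge",
        "InstanceCount": 1,
        "VolumeSizeInGB": 30,
    },
}
secured_request = with_security_settings(
    base_request,
    kms_key_id="arn:aws:kms:us-east-1:123456789012:key/example-key-id",
    subnet_ids=["subnet-0example"],
    security_group_ids=["sg-0example"],
)
print(sorted(secured_request))
```

Returning a copy rather than editing the request in place keeps the unsecured and secured variants easy to compare during review.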

How does SageMaker's pricing work?

Historically, AWS charged each SageMaker user for the compute, storage and data processing resources used to build, train, perform and log ML models and predictions. Customers also paid for the S3 resources used to store the data sets for training and ongoing predictions.

Today, there are two payment options: on-demand pricing and flexible pricing. Amazon's on-demand pricing is billed by the second and does not require an upfront commitment or a minimum fee.

In April 2021, Amazon announced flexible pricing with the Amazon SageMaker Savings Plan for eligible SageMaker ML instance types. With the savings plan, customers can cut costs by up to 64% compared with buying capacity on demand, Amazon said. To qualify for the discount, customers must commit to a set amount of usage, measured in dollars per hour, for a term of at least one year.
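A quick calculation shows what the quoted discount could mean over a year. This is a back-of-the-envelope sketch: the $1.00 hourly on-demand rate is a made-up figure, not an AWS price, and the 64% figure is treated as the best-case discount Amazon cited rather than a rate every customer receives.

```python
HOURS_PER_YEAR = 24 * 365


def annual_cost(hourly_rate, discount=0.0):
    """Yearly cost of one always-on instance at the given hourly rate."""
    return hourly_rate * (1 - discount) * HOURS_PER_YEAR


on_demand_rate = 1.00      # hypothetical on-demand price in dollars per hour
best_case_discount = 0.64  # the maximum saving quoted by Amazon

on_demand_annual = annual_cost(on_demand_rate)
savings_plan_annual = annual_cost(on_demand_rate, best_case_discount)
annual_saving = on_demand_annual - savings_plan_annual

print(f"On demand:    ${on_demand_annual:,.2f}")
print(f"Savings plan: ${savings_plan_annual:,.2f}")
print(f"Saving:       ${annual_saving:,.2f}")
```

The saving holds only if the committed capacity is actually used. Because the commitment is billed every hour of the term, an instance that sits idle part of the time narrows the gap with on-demand pricing.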

SageMaker is available on the AWS Free Tier with limited usage. SageMaker Studio itself carries no additional charge; customers pay only for the AWS services they use within it.

AWS' main public cloud rivals offer similar services for building ML-enabled infrastructure. Google Vertex AI is part of Google Cloud. Azure Machine Learning is part of Microsoft Azure.
