What is a graphics processing unit (GPU)?

A graphics processing unit (GPU) is a computer chip that renders graphics and images by performing rapid mathematical calculations. GPUs are used for both professional and personal computing. Originally, GPUs were responsible for rendering 2D and 3D images, animations and video, but they now serve a much wider range of uses.

Like a central processing unit (CPU), a GPU is a chip component in computing devices. One important difference is that the GPU is specifically designed to handle and accelerate graphics workloads and to display graphics content on a device such as a PC or smartphone.

An electronic device with an embedded or discrete GPU can smoothly render 3D graphics and video content, making it suitable for gaming and other visual applications. Over time, technological improvements have resulted in more flexible and programmable GPUs that can be used for many applications and workloads beyond gaming. GPUs are now used for creative content production, video editing, high-performance computing (HPC) and artificial intelligence (AI).

What does a GPU do?

In the early days of computing, the CPU performed the calculations required for graphics applications, such as the rendering of 2D and 3D images, animations and video. As more graphics-intensive applications were developed, however, their demands put a strain on the CPU and decreased the computer's overall performance.

Today, a GPU is a specialized computing system that performs these tasks:

- Handles graphics-related tasks in lieu of the CPU.

- Performs graphics calculations very quickly.

- Uses parallel processing to increase processing performance.

- Delivers graphic content to the computer display.

- Frees up the CPU to handle all other processing tasks.

How does a GPU work?

GPUs work by using parallel processing, where multiple processors handle separate parts of a single task. A discrete GPU also has its own dedicated RAM, often called video RAM (VRAM), to store the data it is processing, while an integrated GPU generally shares the system's main memory. Dedicated GPU memory is designed specifically to hold the large amounts of information coming into the GPU for highly intensive graphics use cases.
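To make "separate parts of a single task" concrete, here is a minimal sketch of the data-parallel idea. It runs on the CPU with Python threads, so it only imitates the pattern and does not use a GPU; the function names, the 8-pixel image and the worker count are all illustrative assumptions.

```python
from concurrent.futures import ThreadPoolExecutor


def brighten(pixel: int, amount: int = 40) -> int:
    """Per-pixel operation: the same small calculation for every element."""
    return min(255, pixel + amount)


def brighten_chunk(chunk: list[int]) -> list[int]:
    # Each worker handles its own slice; no pixel depends on any other pixel.
    return [brighten(p) for p in chunk]


def brighten_parallel(pixels: list[int], workers: int = 4) -> list[int]:
    # Split one task (brighten the whole image) into roughly equal parts.
    size = max(1, -(-len(pixels) // workers))  # ceiling division
    chunks = [pixels[i:i + size] for i in range(0, len(pixels), size)]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # map() returns results in input order, so the parts reassemble cleanly.
        results = pool.map(brighten_chunk, chunks)
    return [p for chunk in results for p in chunk]


image = [0, 100, 200, 250, 30, 220, 90, 10]  # hypothetical grayscale pixels
result = brighten_parallel(image)
print(result)  # [40, 140, 240, 255, 70, 255, 130, 50]
```

Because every pixel is computed independently, the work can be divided among as many workers as are available. A GPU applies the same principle with far more hardware execution units than the four threads assumed here.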

For graphics applications, the CPU sends instructions to the GPU for drawing the graphics content on a screen. The GPU executes the instructions in parallel and at high speeds to display the content on the device -- a process known as the graphics or rendering pipeline.
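The stages of a rendering pipeline can be sketched in a few lines. The toy below is a CPU-only illustration, not how any real GPU or graphics API is implemented. It uses the commonly named stages of vertex processing, rasterization and fragment shading; the function names, the 16x8 character "framebuffer" and the checker pattern are assumptions made for the example.

```python
def to_screen(vertex, width, height):
    """Vertex stage: map x, y in [-1, 1] to pixel coordinates."""
    x, y = vertex
    return ((x + 1) / 2 * (width - 1), (1 - y) / 2 * (height - 1))


def edge(a, b, p):
    """Signed-area test: which side of the edge a->b the point p lies on."""
    return (b[0] - a[0]) * (p[1] - a[1]) - (b[1] - a[1]) * (p[0] - a[0])


def rasterize(tri, width, height):
    """Raster stage: yield every pixel that falls inside the triangle."""
    a, b, c = tri
    for py in range(height):
        for px in range(width):
            p = (px, py)
            w0, w1, w2 = edge(a, b, p), edge(b, c, p), edge(c, a, p)
            # Inside when all three edge tests agree in sign (either winding).
            if (w0 >= 0 and w1 >= 0 and w2 >= 0) or (w0 <= 0 and w1 <= 0 and w2 <= 0):
                yield px, py


def shade(px, py):
    """Fragment stage: choose a 'color' per pixel (a checker pattern here)."""
    return "#" if (px + py) % 2 == 0 else "+"


def render(triangle, width=16, height=8):
    framebuffer = [["." for _ in range(width)] for _ in range(height)]
    screen_tri = [to_screen(v, width, height) for v in triangle]
    for px, py in rasterize(screen_tri, width, height):
        framebuffer[py][px] = shade(px, py)
    return ["".join(row) for row in framebuffer]


# The "draw call": the application hands over one triangle to render.
rows = render([(-1.0, -1.0), (1.0, -1.0), (0.0, 1.0)])
print("\n".join(rows))
```

The nested loops in `rasterize` visit pixels one at a time. Each pixel's inside test and shading are independent of every other pixel, which is the property a GPU exploits to process many pixels at once.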

Are GPUs and graphics cards the same?

GPU and graphics card are two terms that are sometimes used interchangeably. However, there are some important distinctions between the two. The main difference is that the GPU is one specific unit within a graphics card, which also contains other components. The GPU performs the image and graphics processing, while the graphics card as a whole presents the resulting images to the display unit.

GPU use cases: What GPUs are used for today

GPUs are widely used for PC gaming, allowing for smooth, high-quality graphics rendering. Modern GPUs are also adapted to a wider variety of tasks than they were originally designed for, partly because they are more programmable than they were in the past. That's why GPUs are now also used to accelerate AI and machine learning (ML) workloads.

Some of the most popular applications of GPUs include:

- Rendering real-time 2D and 3D graphics. GPUs perform complicated mathematical calculations using algorithms that convert scene data, such as geometry and textures, into pixels on displays.

- Video editing and video content creation. When used for video content creation and editing, GPUs deliver high-resolution images and videos, enable AI-enhanced video effects and provide sufficient RAM to handle the computational requirements of video content.

- Video game graphics. GPUs in gaming systems perform the same kinds of complex, parallel calculations as for other graphics tasks. They deliver images and videos by managing tasks such as shading, lighting and texturing, each of which is needed for realistic game visuals.

- Accelerating AI/ML applications. GPUs have the processing power needed to support the massive computations and algorithms used in AI and ML applications, such as image recognition and facial detection and recognition.

- Training deep learning neural networks. GPUs have thousands of smaller cores that work in parallel to provide the matrix processing needed for training deep learning neural networks.

- Cryptomining. GPUs support cryptocurrency mining, especially for coins that employ Proof of Work (PoW) mechanisms for establishing consensus, because parallel processing lets them compute many candidate hashes at the same time.
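The hashing workload in the cryptomining item can be illustrated with a deliberately simplified proof-of-work search. This sketch assumes SHA-256 over a text string and a "leading zero hex digits" difficulty rule. Real cryptocurrencies use their own block formats and target rules, and the names `hash_attempt` and `mine` are hypothetical.

```python
import hashlib


def hash_attempt(block_data: str, nonce: int) -> str:
    """One independent unit of work: hash the data with a candidate nonce."""
    return hashlib.sha256(f"{block_data}{nonce}".encode()).hexdigest()


def mine(block_data: str, difficulty: int, max_nonce: int = 1_000_000):
    """Return the first (nonce, digest) whose digest starts with
    `difficulty` zero hex digits, or None if no nonce qualifies."""
    target = "0" * difficulty
    for nonce in range(max_nonce):
        digest = hash_attempt(block_data, nonce)
        if digest.startswith(target):
            return nonce, digest
    return None


found = mine("example block", difficulty=3)
if found is not None:
    nonce, digest = found
    print(f"nonce={nonce} digest={digest[:16]}...")
```

The loop here tries nonces one after another. Since no attempt depends on the result of another, a GPU can evaluate large batches of nonces concurrently, which is why this kind of search maps well onto parallel hardware.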

Types of GPUs

Generally, there are three types of GPUs:

- Integrated GPU. An integrated GPU is built into the computer's motherboard or integrated with the CPU. Systems with integrated GPUs are generally small and light because less space is required to incorporate the GPU. The use of an integrated GPU also reduces system power consumption. Buyers should take care when choosing a system with an integrated GPU, as it might not be upgradeable. For a gaming computer, the GPU is an essential part of the device and should be carefully researched.

- Discrete GPU. A discrete GPU is a physically separate unit that is mounted apart from the CPU and other components on the motherboard. While such devices typically offer far more processing power than an integrated GPU, they also increase power usage and generate additional heat, which may necessitate additional cooling. Discrete GPUs are used for resource-intensive, high-performance applications such as 3D games.

- Hybrid GPU. A hybrid GPU arrangement can take different forms, such as combining an integrated GPU with a discrete GPU in the same system, with the integrated unit used for normal graphics and the discrete unit for high-performance requirements. Another approach is to combine discrete graphics performance with specialized accelerators, such as those for AI applications.

What is a cloud GPU?

Taking advantage of the convenience, scalability and cost of cloud computing services, users can obtain the GPU functionality they need from a cloud vendor. All three major cloud services, AWS, Microsoft Azure and Google Cloud, offer virtual machines (VMs) with built-in GPU speed and functionality. Cloud GPU services are typically used in compute-intensive situations involving AI, video game development and cryptocurrency mining. As with any activity involving cloud services, cybersecurity must be carefully managed.

GPU vs. CPU

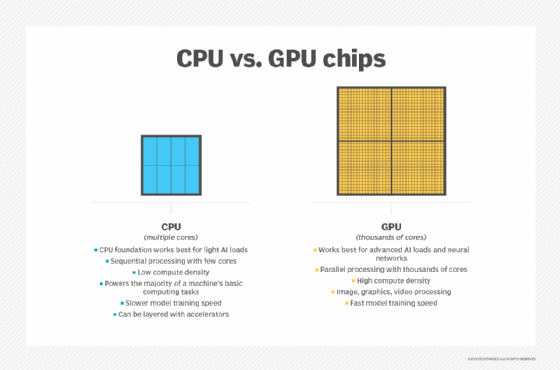

A GPU might be found integrated with a CPU on the same electronic circuit, on a graphics card or in the motherboard of a PC or server. GPUs and CPUs are fairly similar in construction. However, CPUs are used to respond to and process the basic instructions that drive a computer, while GPUs are designed specifically to quickly render high-resolution images and video.

Essentially, CPUs are responsible for interpreting most of a computer's commands, while GPUs perform more complex mathematical and geometric calculations to focus on graphics rendering and other applications that require intensive calculations.

The two processors have different numbers of cores and transistors. The core can be thought of as the processor within the processor. Each core can process its own tasks, or threads. A CPU has fewer cores and is optimized for performing tasks sequentially. A GPU, in contrast, might have hundreds or thousands of cores, which allow for parallel processing and lightning-fast graphics output.

A single-core CPU usually lacks the capability for parallel processing, but multicore processors can perform calculations in parallel by combining more than one CPU core onto the same chip. GPUs can also contain more transistors than a CPU.

In addition, a CPU typically has a higher clock speed, meaning it can perform an individual calculation faster than a GPU, so it is often better equipped to handle basic computing tasks.
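The tradeoff between clock speed and core count can be shown with a rough back-of-the-envelope model. The sketch assumes every core completes one operation per clock cycle and that the work divides evenly across cores, which real processors do not do exactly. The core counts and clock rates are hypothetical round numbers, not figures for any actual product.

```python
import math


def time_for_independent_ops(num_ops: int, cores: int, clock_hz: float) -> float:
    """Seconds to finish num_ops independent one-cycle operations.

    Toy model: the operations run in "waves" of one operation per core,
    and each wave takes one clock cycle.
    """
    waves = math.ceil(num_ops / cores)
    return waves / clock_hz


# Hypothetical figures chosen for illustration only.
CPU = {"cores": 8, "clock_hz": 4.0e9}
GPU = {"cores": 4096, "clock_hz": 1.5e9}

single_cpu = time_for_independent_ops(1, **CPU)
single_gpu = time_for_independent_ops(1, **GPU)
bulk_cpu = time_for_independent_ops(8_000_000, **CPU)
bulk_gpu = time_for_independent_ops(8_000_000, **GPU)

print(f"one operation:   CPU {single_cpu:.2e} s, GPU {single_gpu:.2e} s")
print(f"8 million ops:   CPU {bulk_cpu:.2e} s, GPU {bulk_gpu:.2e} s")
```

Under these assumptions the CPU finishes a single operation sooner because of its faster clock, while the GPU finishes the large batch sooner because far more operations run in each cycle. The model ignores memory access, data transfer and operations that depend on one another, all of which matter in practice.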

Data centers use three processing units: CPU, GPU and DPU. Each has unique functions that can be combined to enhance data center performance.