multicore processor

What is a multicore processor?

A multicore processor is an integrated circuit that has two or more processor cores attached for enhanced performance and reduced power consumption. These processors also enable more efficient simultaneous processing of multiple tasks, such as with parallel processing and multithreading. A dual-core setup is similar to having two separate processors installed in a computer. However, because the two cores sit in the same package and share one socket, the connection between them is faster.

The use of multicore processors or microprocessors is one approach to boosting processor performance without exceeding the practical limitations of semiconductor design and fabrication. Spreading work across multiple cores also helps keep heat generation within safe operating limits.

How do multicore processors work?

The heart of every processor is an execution engine, also known as a core. The core is designed to process instructions and data according to the direction of software programs in the computer's memory. Over the years, designers found that every new processor design had limits. Numerous technologies were developed to accelerate performance, including the following ones:

- Clock speed. One approach was to make the processor's clock faster. The clock is the "drumbeat" used to synchronize the processing of instructions and data through the processing engine. Clock speeds have accelerated from several megahertz to several gigahertz (GHz) today. However, transistors use up power with each clock tick. As a result, clock speeds have nearly reached their limits given current semiconductor fabrication and heat management techniques.

- Hyper-threading. Another approach involved the handling of multiple instruction threads. Intel calls this hyper-threading. With hyper-threading, processor cores are designed to handle two separate instruction threads at the same time. When properly enabled and supported by both the computer's firmware and operating system (OS), hyper-threading techniques enable one physical core to function as two logical cores. Still, the processor possesses only a single physical core, and both logical cores share its execution resources. As a result, the logical abstraction adds only a modest amount of real performance, mainly by streamlining the behavior of multiple simultaneous applications running on the computer.

- More chips. The next step was to add processor chips -- or dies -- to the processor package, which is the physical device that plugs into the motherboard. A dual-core processor includes two separate processor cores. A quad-core processor includes four separate cores. Today's multicore processors can easily include 12, 24 or even more processor cores. The multicore approach is almost identical to the use of multiprocessor motherboards, which have two or four separate processor sockets. The effect is the same. Today's huge processor performance involves the use of processor products that combine fast clock speeds and multiple hyper-threaded cores.
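The distinction between physical and logical cores is visible from software. The following is a minimal sketch, assuming a standard Python 3 interpreter, that asks the OS how many logical CPUs it exposes. The helper names `logical_cpu_count` and `usable_cpu_count` are illustrative and not part of any library. The figure counts logical CPUs, so a hyper-threaded core typically shows up twice; the functions used here do not report the physical core count.

```python
import os


def logical_cpu_count() -> int:
    """Return the number of logical CPUs the OS reports (at least 1)."""
    # os.cpu_count() counts logical CPUs and may return None
    # when the count cannot be determined.
    return os.cpu_count() or 1


def usable_cpu_count() -> int:
    """Return how many logical CPUs this process is allowed to run on."""
    # os.sched_getaffinity is available on Linux but not on every
    # platform, so fall back to the system-wide count when it is missing.
    if hasattr(os, "sched_getaffinity"):
        return len(os.sched_getaffinity(0))
    return logical_cpu_count()


if __name__ == "__main__":
    print(f"Logical CPUs reported by the OS: {logical_cpu_count()}")
    print(f"Logical CPUs usable by this process: {usable_cpu_count()}")
```

On a machine with hyper-threading enabled, the first number is usually twice the physical core count, though the exact relationship depends on the processor and firmware settings.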

However, multicore chips raise several issues to consider. First, the addition of more processor cores doesn't automatically improve computer performance. The OS and applications must be written to recognize and use the multiple cores. An application's work must be split into threads that run in parallel on different cores within the processor package. Some software applications may need to be refactored to support and use multicore processor platforms. Otherwise, the application runs on a single core, by default the first one, and any additional cores are unused or idle.
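As a rough illustration of what "refactoring for multiple cores" can look like, the sketch below splits a CPU-bound job into chunks and hands each chunk to a separate worker process using Python's standard `concurrent.futures` module. The prime-counting workload and the helper names (`split_range`, `count_primes`, `count_primes_parallel`) are assumptions chosen for the example, not something taken from a particular application. Processes are used rather than threads because, in the standard CPython interpreter, threads generally do not run CPU-bound Python code on several cores at once.

```python
from concurrent.futures import ProcessPoolExecutor


def is_prime(n: int) -> bool:
    """Trial-division primality test; deliberately simple and CPU-bound."""
    if n < 2:
        return False
    i = 2
    while i * i <= n:
        if n % i == 0:
            return False
        i += 1
    return True


def count_primes(bounds: tuple[int, int]) -> int:
    """Count primes in the half-open range [start, stop)."""
    start, stop = bounds
    return sum(1 for n in range(start, stop) if is_prime(n))


def split_range(start: int, stop: int, parts: int) -> list[tuple[int, int]]:
    """Split [start, stop) into `parts` contiguous chunks of near-equal size."""
    step, extra = divmod(stop - start, parts)
    chunks = []
    lo = start
    for i in range(parts):
        hi = lo + step + (1 if i < extra else 0)
        chunks.append((lo, hi))
        lo = hi
    return chunks


def count_primes_parallel(start: int, stop: int, workers: int) -> int:
    """Distribute the chunks across `workers` processes and add the results."""
    chunks = split_range(start, stop, workers)
    with ProcessPoolExecutor(max_workers=workers) as pool:
        return sum(pool.map(count_primes, chunks))


if __name__ == "__main__":
    # The OS decides which core runs each worker process.
    print(count_primes_parallel(0, 50_000, workers=4))
```

Calling `count_primes((0, 50_000))` directly gives the same answer using a single core, which is the "unrefactored" behavior the paragraph above describes.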

Second, the performance benefit of additional cores is not a direct multiple. That is, adding a second core does not double the processor's performance, and a quad-core processor does not multiply the processor's performance by a factor of four. This happens because the cores share elements of the processor, such as access to internal memory or caches, external buses and computer system memory.

The benefit of multiple cores can be substantial, but there are practical limits. Still, the acceleration is typically better than that of a traditional multiprocessor system because the coupling between cores in the same package is tighter and there are shorter distances and fewer components between cores.
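One common way to reason about these limits is Amdahl's law, a standard model that this article does not otherwise cover. It assumes that some fraction of a program can run in parallel while the rest stays serial. It does not model contention for shared caches and buses directly, so treat the sketch below as a simplified upper bound rather than a prediction. The function name `amdahl_speedup` and the 90% parallel fraction are illustrative assumptions.

```python
def amdahl_speedup(parallel_fraction: float, cores: int) -> float:
    """Ideal speedup under Amdahl's law: 1 / ((1 - p) + p / n)."""
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if cores < 1:
        raise ValueError("cores must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / cores)


if __name__ == "__main__":
    # Assumed workload: 90% of the run time can be parallelized.
    for cores in (1, 2, 4, 8, 16):
        print(f"{cores:>2} cores -> {amdahl_speedup(0.9, cores):.2f}x")
```

Under that assumption, four cores yield roughly a 3.1x speedup rather than 4x, and each additional core adds less than the one before it.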

Consider the analogy of cars on a road. Each car might be a processor, but each car must share the common roads and traffic limitations. More cars can transport more people and goods in a given time, but more cars also cause congestion and other problems.

What are multicore processors used for?

Multicore processors work on any modern computer hardware platform. Virtually all PCs and laptops today include some multicore processor model. However, the true power and benefit of these processors depend on software applications designed to emphasize parallelism. A parallel approach divides application work into numerous processing threads, and then distributes and manages those threads across two or more processor cores.

There are several major use cases for multicore processors, including the following five:

- Virtualization. A virtualization platform, such as VMware, is designed to abstract the software environment from the underlying hardware. Virtualization is capable of abstracting physical processor cores into virtual processors, or virtual central processing units (vCPUs), which are then assigned to virtual machines (VMs). Each VM becomes a virtual server capable of running its own OS and application. It is possible to assign more than one vCPU to each VM, allowing each VM and its application to run parallel processing software if desired.

- Databases. A database is a complex software platform that frequently needs to run many simultaneous tasks such as queries. As a result, databases are highly dependent on multicore processors to distribute and handle these many task threads. The use of multiple processors in databases is often coupled with extremely high memory capacity that can reach 1 terabyte or more on the physical server.

- Analytics and HPC. Big data analytics, such as machine learning, and high-performance computing (HPC) both require breaking large, complex tasks into smaller and more manageable pieces. Each piece of the problem can then be distributed to a different processor and solved there. This approach enables the processors to work in parallel to solve the overarching problem far faster and more efficiently than a single processor could.

- Cloud. Organizations building a cloud will almost certainly adopt multicore processors to support all the virtualization needed to accommodate the highly scalable and highly transactional demands of cloud software platforms such as OpenStack. A set of servers with multicore processors can allow the cloud to create and scale up more VM instances on demand.

- Visualization. Graphics applications, such as games and data-rendering engines, have the same parallelism requirements as other HPC applications. Visual rendering is math- and task-intensive, and visualization applications can make extensive use of multiple processors to distribute the calculations required. Many graphics applications rely on graphics processing units (GPUs) rather than CPUs. GPUs are tailored to optimize graphics-related tasks. GPU packages often contain multiple GPU cores, similar in principle to multicore processors.

Pros and cons of multicore processors

Multicore processor technology is mature and well-defined. However, the technology has its share of pros and cons, which should be considered when buying and deploying new servers.

Multicore advantages

Better application performance. The principal benefit of multicore processors is more potential processing capability. Each processor core is effectively a separate processor that OSes and applications can use. In a virtualized server, each VM can employ one or more virtualized processor cores, enabling many VMs to coexist and operate simultaneously on a physical server. Similarly, an application designed for high levels of parallelism may use any number of cores to provide high application performance that would be impossible with single-core systems.

Better hardware performance. Placing two or more processor cores on the same device lets the processor use shared components -- such as common internal buses and processor caches -- more efficiently. A multicore processor also delivers superior performance compared with multiprocessor systems that have separate processor packages on the same motherboard.

Multicore disadvantages

Software dependent. The application uses processors -- not the other way around. By default, OSes and applications that aren't written for multiple cores use the first processor core, dubbed core 0. Any additional cores in the processor package remain unused or idle until software applications are enabled to use them. Applications that do use them include database applications and big data processing tools like Hadoop. A business should consider what a server will be used for and the applications it plans to use before making a multicore system investment to ensure that the system delivers its optimum computing potential.

Performance boosts are limited. Multiple processors in a processor package must share common system buses and processor caches. The more processor cores share a package, the more sharing must take place across common processor interfaces and resources. This results in diminishing returns to performance as cores are added. For most situations, the performance benefit of having multiple cores far outweighs the performance lost to such sharing, but it's a factor to consider when testing application performance.

Power, heat and clock restrictions. A computer may not be able to drive a processor with many cores as hard as a processor with fewer cores or a single-core processor. A modern processor core may contain over 500 million transistors. Each transistor generates heat when it switches, and this heat increases as the clock speed increases. All of that heat generation must be safely dissipated from the core through the processor package. When more cores are running, this heat can multiply and quickly exceed the cooling capability of the processor package. Thus, some multicore processors may actually reduce clock speeds -- for instance, from 3.5 GHz to 3.0 GHz -- to help manage heat. This reduces the performance of all processor cores in the package. High-end multicore processors require complex cooling systems and careful deployment and monitoring to ensure long-term system reliability.
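The link between clock speed and heat can be approximated with the widely used relation for dynamic power in CMOS logic, in which power scales with switched capacitance, the square of supply voltage and clock frequency. This relation is not stated in the text above, and it ignores leakage power, so the sketch below is only a first-order estimate. The function name `relative_dynamic_power` and the voltage figures in the second example are illustrative assumptions.

```python
def relative_dynamic_power(
    f_old_ghz: float,
    f_new_ghz: float,
    v_old: float = 1.0,
    v_new: float = 1.0,
) -> float:
    """Return new dynamic power as a fraction of the old, using P ~ C * V^2 * f.

    Capacitance and switching activity are assumed unchanged, so they cancel.
    """
    if min(f_old_ghz, f_new_ghz, v_old, v_new) <= 0:
        raise ValueError("frequencies and voltages must be positive")
    return (f_new_ghz / f_old_ghz) * (v_new / v_old) ** 2


if __name__ == "__main__":
    # The clock reduction used as an example in the text: 3.5 GHz to 3.0 GHz.
    same_voltage = relative_dynamic_power(3.5, 3.0)
    print(f"Same voltage: {same_voltage:.0%} of the original dynamic power")

    # Assumed voltages for illustration: lower clocks often permit lower voltage.
    lower_voltage = relative_dynamic_power(3.5, 3.0, v_old=1.2, v_new=1.1)
    print(f"Lower voltage too: {lower_voltage:.0%} of the original dynamic power")
```

At constant voltage, dropping from 3.5 GHz to 3.0 GHz cuts dynamic power by about 14% in this model. Because voltage enters as a square, reducing it alongside the clock saves considerably more.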

Architecture of multicore processors

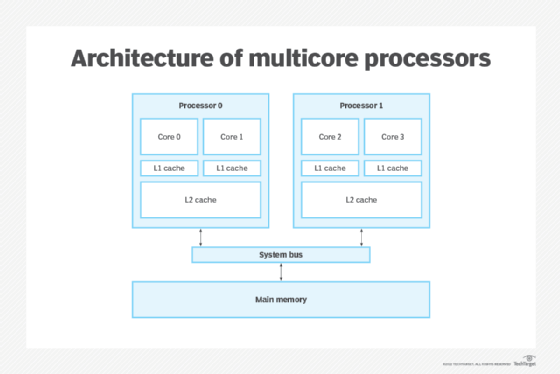

For the purposes of this definition, every multicore processor consists of two or more cores along with a series of caches.

- Cores are the central components of multicore processors. Cores contain all of the registers and circuitry -- sometimes hundreds of millions of individual transistors -- needed to perform the closely synchronized tasks of ingesting data and instructions, processing that content and outputting logical decisions or results.

- Processor support circuitry includes an assortment of input/output control and management circuitry, such as clocks, cache consistency, power and thermal control and external bus access.

- Caches are relatively small areas of very fast memory. A cache retains often-used instructions or data, making that content readily available to the core without the need to access system memory. A processor checks the cache first. If the required content is present, the core takes that content from the cache, which improves performance. If the content is absent, the core accesses system memory for the required content. A Level 1, or L1, cache is the smallest and fastest cache and is unique to every core. A Level 2, or L2, cache is a larger storage space shared among the cores. Some multicore processor architectures may instead dedicate both an L1 and an L2 cache to each core.
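The check-the-cache-first behavior described above can be sketched with a toy model. Real hardware caches operate on fixed-size cache lines, use set associativity and are implemented in circuitry, so the class below is only a conceptual illustration. The class name `TinyCache`, the dictionary standing in for system memory and the least-recently-used eviction policy are all assumptions made for the example.

```python
from collections import OrderedDict


class TinyCache:
    """A toy cache with least-recently-used (LRU) eviction."""

    def __init__(self, capacity: int) -> None:
        if capacity < 1:
            raise ValueError("capacity must be at least 1")
        self.capacity = capacity
        self.lines = OrderedDict()  # address -> cached value, oldest first
        self.hits = 0
        self.misses = 0

    def read(self, address: int, memory: dict[int, int]) -> int:
        """Return the value at `address`, checking the cache before memory."""
        if address in self.lines:
            # Cache hit: serve the value and mark it as recently used.
            self.hits += 1
            self.lines.move_to_end(address)
            return self.lines[address]

        # Cache miss: fetch from (slow) system memory and keep a copy.
        self.misses += 1
        value = memory[address]
        self.lines[address] = value
        if len(self.lines) > self.capacity:
            # Evict the least recently used entry to make room.
            self.lines.popitem(last=False)
        return value


if __name__ == "__main__":
    memory = {address: address * 10 for address in range(8)}
    cache = TinyCache(capacity=2)
    for address in (0, 1, 0, 2, 1):
        cache.read(address, memory)
    print(f"hits={cache.hits} misses={cache.misses}")
```

Repeated reads of the same address are served from the cache, while a working set larger than the cache forces evictions and extra trips to memory.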

Homogeneous vs. heterogeneous multicore processors

The cores within a multicore processor may be homogeneous or heterogeneous. Mainstream Intel and AMD multicore processors for x86 computer architectures have traditionally been homogeneous, providing identical cores. Consequently, most discussion of multicore processors is about homogeneous processors.

However, dedicating a complex core to a simple job is often wasteful when the goal is the greatest efficiency. There is a heterogeneous multicore processor market that uses processors with different cores for different purposes. Heterogeneous cores are generally found in embedded or Arm processors that might mix microprocessor and microcontroller cores in the same package.

There are three general goals for heterogeneous multicore processors:

- Optimized performance. While homogeneous multicore processors are typically intended to provide vanilla or universal processing capabilities, many processors are not intended for such generic system use cases. Instead, they are designed and sold for use in embedded -- dedicated or task-specific -- systems that can benefit from the unique strengths of different processors. For example, a processor intended for a signal processing device might be an Arm design that pairs a general-purpose Cortex-A core with a Cortex-M core for dedicated signal processing tasks.

- Optimized power. Providing simpler processor cores reduces the transistor count and eases power demands. This makes the processor package and the overall system cooler and more power-efficient.

- Optimized security. Jobs or processes can be divided among different types of cores, enabling designers to deliberately build high levels of isolation that tightly control access among the various processor cores. This greater control and isolation offer better stability and security for the overall system, though at the cost of general flexibility.

Examples of multicore processors

Most modern processors designed and sold for general-purpose x86 computing include multiple processor cores. Examples of Intel 12th-generation multicore processors include the following:

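- Intel Core i9 12900 family provides 16 cores (8 performance cores and 8 efficiency cores) and 24 threads.

- Intel Core i7 12700 family provides 12 cores (8 performance cores and 4 efficiency cores) and 20 threads.

- Top Intel Core i5 12600K processors offer 10 cores (6 performance cores and 4 efficiency cores) and 16 threads.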

Examples of AMD Zen multicore processors include the following:

- AMD Zen 3 family provides 4 to 16 cores.

- AMD Zen 2 family provides up to 64 cores.

- AMD Zen+ family provides 4 to 32 cores.