
Set up a data lake on AWS with Lake Formation

Modern businesses must organize an unprecedented amount of information. Find out how AWS Lake Formation can help your business manage and analyze high volumes of data.

Businesses can generate and access large amounts of structured and unstructured data -- and this poses a serious problem, because that volume can easily overwhelm the teams that have to manage it.

Businesses collect data from myriad sources, including log files, sales records, social media and IoT networks. A business needs to know what data is available, how various data sources relate and how to use that diverse array of data to discover new opportunities and make better business decisions.

Businesses can address this issue with a data lake, and on AWS, they can do so with Lake Formation. Data analysts can use this managed service to ingest, catalog and transform vast volumes of data, which can then be used for tasks such as analytics, predictions and machine learning. Let's take a closer look at how organizations can utilize a data lake on AWS with Lake Formation.

Data lake basics

While a data lake can store a large amount of data, AWS Lake Formation provides more than capacity. Capacity alone is easy to obtain: users can provision it in the cloud with Amazon S3 buckets or on premises with a local storage array. The true value of a data lake is the quality of the information it holds.

A typical DIY data lake relies on curation by a suite of tightly integrated services that ensure data quality. These services gather, organize, secure, process and present diverse datasets to users for further analysis and decision-making.

IT teams often struggle to implement and manage the necessary integrated services to support a data lake. These services can cover a broad range of capabilities, including tools that ingest structured and unstructured data from a range of sources; deduplicate and oversee the ingested data for integrity; place the ingested data into prepared partitions within storage; incorporate encryption and key management; invoke and audit authentication and authorization features; identify relationships or similarities between data, such as matching records; and define and schedule data transformation tasks.

AWS Lake Formation and other cloud-based data lake services are particularly helpful in coordinating these efforts because all of those services are already integrated with the data lake. Data analysts and admins can then focus on defining data sources, establishing security policies and creating algorithms to process and catalog the data. Once data is ingested and prepared, it can be used by data analytics and machine learning services such as Amazon Redshift, Amazon Athena and Amazon EMR for Apache Spark.

How AWS Lake Formation works

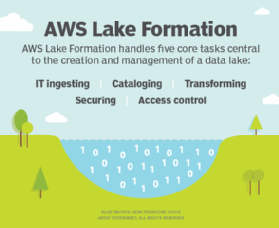

AWS Lake Formation handles five core tasks that are central to the creation and management of a data lake -- ingesting, cataloging, transforming and securing data, and controlling access to it.

With Lake Formation, users define their desired data sources and the service routinely crawls those sources for new or changed content before ingestion. Data is cataloged during ingestion, which efficiently spreads, organizes and correlates data around tags, such as query terms. It is vital to catalog resources within a data lake so the metadata can be used to better understand and locate data.
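To make the crawl-and-catalog step concrete, here is a minimal sketch of how a scheduled crawler over an S3 location might be defined with boto3, the AWS SDK for Python. It assumes the catalog is populated through an AWS Glue crawler; the crawler name, IAM role, database name, bucket path and schedule are all hypothetical placeholders, not values from this article.

```python
def build_crawler_request(name, role_arn, database, s3_path, schedule=None):
    """Build keyword arguments for the AWS Glue CreateCrawler API call."""
    request = {
        "Name": name,
        "Role": role_arn,            # IAM role the crawler assumes
        "DatabaseName": database,    # catalog database that receives the tables
        "Targets": {"S3Targets": [{"Path": s3_path}]},
    }
    if schedule:
        # Glue uses a six-field cron expression wrapped in cron(...)
        request["Schedule"] = schedule
    return request


def create_crawler(request):
    """Send the request to AWS. Requires boto3 and valid AWS credentials."""
    import boto3  # imported here so the builder above works without boto3

    glue = boto3.client("glue")
    return glue.create_crawler(**request)


# Hypothetical example values: replace with your own resources.
crawler_request = build_crawler_request(
    name="sales-logs-crawler",
    role_arn="arn:aws:iam::123456789012:role/ExampleGlueCrawlerRole",
    database="example_lake_db",
    s3_path="s3://example-data-lake/raw/sales/",
    schedule="cron(0 2 * * ? *)",  # every day at 02:00 UTC
)
```

Keeping the request builder separate from the call that reaches AWS makes the definition easy to review or store in version control before anything is created.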

In addition, Lake Formation routinely transforms data in preparation for further processing. Lake Formation can deduplicate redundant data and find matching records, as well as reformat data into analytics-friendly formats such as Apache Parquet and Optimized Row Columnar (ORC). AWS Lake Formation also emphasizes data security and business governance through an array of policy definitions, which are implemented and enforced even as the service accesses data for analysis. Lake Formation has granular control features to ensure data is only accessed by approved users.
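As an illustration of that granular control, the sketch below builds a request for the Lake Formation GrantPermissions API through boto3, limiting a principal to SELECT on specific columns of one table. The role ARN, database, table and column names are hypothetical, and this is one possible way to express such a policy, not a prescribed setup.

```python
def build_select_grant(principal_arn, database, table, columns=None):
    """Build keyword arguments for Lake Formation's GrantPermissions call.

    If columns is given, access is limited to those columns;
    otherwise SELECT is granted on the whole table.
    """
    if columns:
        resource = {
            "TableWithColumns": {
                "DatabaseName": database,
                "Name": table,
                "ColumnNames": list(columns),
            }
        }
    else:
        resource = {"Table": {"DatabaseName": database, "Name": table}}

    return {
        "Principal": {"DataLakePrincipalIdentifier": principal_arn},
        "Resource": resource,
        "Permissions": ["SELECT"],
    }


def apply_grant(grant):
    """Send the grant to AWS. Requires boto3 and valid AWS credentials."""
    import boto3  # imported here so the builder above works without boto3

    return boto3.client("lakeformation").grant_permissions(**grant)


# Hypothetical example: an analyst role may read two non-sensitive columns.
grant = build_select_grant(
    principal_arn="arn:aws:iam::123456789012:role/ExampleAnalystRole",
    database="example_lake_db",
    table="sales",
    columns=["order_id", "order_total"],
)
```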

AWS Lake Formation relies on other related services to form a complete data lake architecture, especially Amazon S3, which serves as the primary repository for the service. S3 can also be a target for the data that AWS Lake Formation ingests, catalogs and transforms. For example, data scientists who conduct analytics and machine learning in AWS routinely store the results of their efforts in S3.

Aside from basic transformations, AWS Lake Formation itself does not perform major analytics. Instead, Lake Formation is coupled with other AWS analytics and machine learning services -- Amazon Redshift, Athena and EMR for Apache Spark. This enables flexibility in analytics, allowing users to deploy preferred services -- or even use third-party analytics tools or platforms such as Tableau.
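The hand-off to an analytics service can be sketched with Amazon Athena, which runs SQL against tables registered in the catalog. The example below prepares a request for Athena's StartQueryExecution API via boto3; the SQL statement, database name and results bucket are hypothetical and assume a `sales` table already exists in the catalog.

```python
def build_athena_query(sql, database, output_location):
    """Build keyword arguments for Athena's StartQueryExecution call."""
    return {
        "QueryString": sql,
        "QueryExecutionContext": {"Database": database},
        # Athena writes query results to this S3 location
        "ResultConfiguration": {"OutputLocation": output_location},
    }


def run_query(request):
    """Start the query on AWS and return its execution ID.

    Requires boto3 and valid AWS credentials. The query runs
    asynchronously; results are fetched in a separate step.
    """
    import boto3  # imported here so the builder above works without boto3

    athena = boto3.client("athena")
    response = athena.start_query_execution(**request)
    return response["QueryExecutionId"]


# Hypothetical example: total sales per region from a cataloged table.
query_request = build_athena_query(
    sql="SELECT region, SUM(order_total) AS revenue FROM sales GROUP BY region",
    database="example_lake_db",
    output_location="s3://example-data-lake/athena-results/",
)
```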

Lake Formation pricing and availability

AWS Lake Formation is currently available in all commercial U.S. regions and nearly every international region. There is no additional charge for using Lake Formation. However, Lake Formation requires interaction with numerous other Amazon services in order to implement a complete data lake. Usage of related services with Lake Formation, such as Amazon S3, AWS Glue, Amazon EMR and AWS CloudTrail, comes with additional charges.

AWS says most common tasks with Lake Formation cost less than $20. But the size of your data lake and the corresponding costs will only rise over time as you store larger data sets in S3, run more AWS Glue jobs and utilize more analytics tools. And this will only escalate when the resources are accessed by multiple businesses or users throughout the organization. To understand the assortment of fees that come with AWS Lake Formation, enterprise users should regularly review the monthly charges of all the services used to support the data lake implementation.
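One way to carry out that monthly review is to pull per-service charges from the AWS Cost Explorer API and total them. The sketch below builds a GetCostAndUsage request grouped by service and sums the amounts in the response; the sample response uses made-up figures purely to show the shape of the data, and pagination of large results is ignored for brevity.

```python
from collections import defaultdict


def build_cost_request(start, end):
    """Build keyword arguments for Cost Explorer's GetCostAndUsage call.

    start and end are dates in YYYY-MM-DD format; end is exclusive.
    """
    return {
        "TimePeriod": {"Start": start, "End": end},
        "Granularity": "MONTHLY",
        "Metrics": ["UnblendedCost"],
        "GroupBy": [{"Type": "DIMENSION", "Key": "SERVICE"}],
    }


def total_by_service(response):
    """Sum the unblended cost per service across all returned periods."""
    totals = defaultdict(float)
    for period in response.get("ResultsByTime", []):
        for group in period.get("Groups", []):
            service = group["Keys"][0]
            amount = group["Metrics"]["UnblendedCost"]["Amount"]
            totals[service] += float(amount)
    return dict(totals)


def fetch_costs(start, end):
    """Query AWS and return totals. Requires boto3 and AWS credentials."""
    import boto3  # imported here so the helpers above work without boto3

    ce = boto3.client("ce")
    return total_by_service(ce.get_cost_and_usage(**build_cost_request(start, end)))


# Made-up figures, only to illustrate the response structure.
sample_response = {
    "ResultsByTime": [
        {"Groups": [
            {"Keys": ["Amazon Simple Storage Service"],
             "Metrics": {"UnblendedCost": {"Amount": "12.5", "Unit": "USD"}}},
            {"Keys": ["AWS Glue"],
             "Metrics": {"UnblendedCost": {"Amount": "3.25", "Unit": "USD"}}},
        ]},
        {"Groups": [
            {"Keys": ["Amazon Simple Storage Service"],
             "Metrics": {"UnblendedCost": {"Amount": "7.5", "Unit": "USD"}}},
        ]},
    ]
}
totals = total_by_service(sample_response)
```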

Lake Formation use cases

Data lakes exist to organize and prepare business data for further processing and decision-making tasks by other applications and services. Let's take a look at some examples in which AWS Lake Formation, paired with analytics and machine learning services, is an asset to organizations across a range of industries.

Research analytics. Scientific research, such as genomics or drug development, yields enormous volumes of test data. But correlating the myriad factors and assessing the effectiveness of one choice over another can be impossible for humans. Lake Formation can ingest scientific data and use analytics tasks to help form hypotheses, adjust or disprove previous assumptions or relationships and determine the actual results of a test suite. This leads to more efficiently designed products.

Customer analytics. Businesses collect a diverse range of customer data, including relationship management platform data, social media content, purchasing history data, help desk ticket transactions, email and messaging history. By ingesting and cataloging all that information into a data lake for analysis, a business can dig deeper on factors such as customer demographics and locations, the underlying causes of user dissatisfaction or the best ways to promote customer loyalty.

Operations analytics. Complex manufacturing and other industrial installations can involve many different processes related by physical factors, such as pressure and temperature conditions. The growth of the internet of things allows devices to collect and provide previously unavailable details about the industrial environment. Data lakes can hold that data, which can then be used to correlate factory conditions to product or industrial outcomes, such as the best conditions for a strong weld or the most efficient way to position a wind turbine.

Financial analytics. Financial institutions use detailed records and activity logs to track countless transactions across the globe. There is a growing need for financial security and fraud detection in these institutions. With a data lake on AWS, organizations can funnel transaction data into Lake Formation, and the analytics team can then search for possible fraudulent activity, such as purchases made too far away from an account holder.
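The distance check mentioned in the fraud example can be sketched in a few lines. This is a simplified illustration, not a production fraud model: it uses the haversine great-circle formula, and the 500 km threshold, record layout and coordinates are assumptions chosen for the example.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean Earth radius


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance in kilometers between two points in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = math.radians(lat2 - lat1)
    dlambda = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlambda / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def flag_distant_purchases(home, purchases, max_km=500.0):
    """Return purchases made farther than max_km from the home location.

    home is a (lat, lon) tuple; each purchase is a dict with
    'lat' and 'lon' keys (a hypothetical record layout).
    """
    return [
        p for p in purchases
        if haversine_km(home[0], home[1], p["lat"], p["lon"]) > max_km
    ]


# Hypothetical account holder in New York with two purchases.
home = (40.7128, -74.0060)
purchases = [
    {"id": "t1", "lat": 40.7357, "lon": -74.1724},  # Newark, nearby
    {"id": "t2", "lat": 51.5074, "lon": -0.1278},   # London, far away
]
flagged = flag_distant_purchases(home, purchases)
```

In practice, a rule like this would be only one signal among many, since legitimate travel and online purchases also produce distant transactions.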

Lake Formation alternatives

AWS Lake Formation is just one option among many. IBM, Cloudera and Cazena offer their own data lake services, as do public cloud providers Microsoft and Google.

Azure Data Lake is designed to ingest and analyze petabyte-size files and trillions of objects using analytics tools such as U-SQL, Apache Hadoop, Azure HDInsight and Apache Spark. Azure uses the Hadoop Distributed File System as the principal data lake storage format, which offers compatibility with other open source analytics tools for structured, semi-structured and unstructured data.

Similarly, Google BigQuery is a highly available, petabyte-scale data warehouse service with an in-memory business intelligence engine and integrated machine learning capabilities. BigQuery works with GCP's Cloud Dataproc and Cloud Dataflow services, which integrate with other big data platforms to handle existing Hadoop, Spark and Beam workloads.