Pros and cons of cloud computing explained

Scalability, flexibility, lower costs and fast connectivity are among the cloud's advantages that must be weighed against vendor lock-in, internet dependence and complex pricing.

The concept of computing as a utility is hardly new; it traces its roots to the time-shared mainframes of the 1960s and 1970s, which users accessed through dial-up telephone networks. But it wasn't until the dawn of the 21st century that the reintroduction of hardware virtualization technology -- along with the ready availability of plentiful and cheap computing, storage and network capacity -- set the stage for a practical, reliable and affordable reimagining of utility computing: cloud computing.

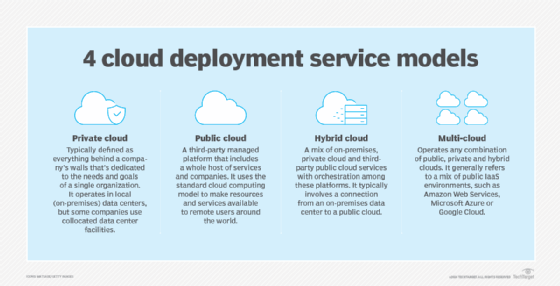

Public cloud computing provides a wealth of hardware resources and well-defined services that users can access through an internet connection, integrate as desired, scale as needed to host enterprise workloads and data, and then pay only for the resources and services consumed. This has enabled businesses of all sizes to migrate IT infrastructures from in-house capital investments to manageable and controllable recurring monthly expenses.

The immense variety of cloud computing services available today would stun even the visionaries at Amazon who reinvented the concept of rentable infrastructure for the internet era. The industry's innovations have driven rapid growth across three main service categories -- IaaS, PaaS and SaaS -- with hundreds of services spread across scores of vendors.

Nonetheless, when most enterprise IT operations teams think of cloud computing, they gravitate toward IaaS and SaaS. This is because many businesses turn to cloud services to improve IT efficiency, flexibility and responsiveness to changing business needs. PaaS, which is targeted at developers, is far less popular than the other two categories.

To familiarize themselves with cloud technology basics, users should understand the pros and cons of cloud computing, popular use cases, major players in the current IT market and how those players influence other vendors.

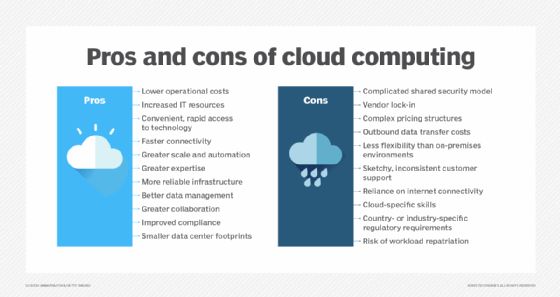

Pros of cloud computing

Early public cloud adopters, particularly those in testing and development, were drawn by the cost and convenience of cloud infrastructure. For them, cloud services eliminated the approvals and budgeting procedures required to buy servers and the time needed to configure a workload deployment environment. As the early cloud evolved, businesses realized that the cloud was capable and reliable enough to host all but the most sophisticated and demanding enterprise workloads, making the public cloud an attractive alternative to complex and costly on-premises IT data centers.

Many businesses still see cost as a significant benefit when they weigh the pros and cons of cloud computing. However, as enterprises gain experience with sizable fleets of cloud resources, IT teams learn that cloud cost calculations are complicated and nuanced. It's often cheaper to run static workloads with large data sets on dedicated on-premises servers. Today, the cloud cost discussion is viewed more from the perspective of value than of bottom-line price. The goal is to use a cloud infrastructure capable of delivering optimum business value in terms of workload performance, security and reliability.
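
To illustrate why a steady workload can favor dedicated hardware, the following minimal Python sketch compares cumulative spending over time. Every figure in it (the monthly cloud fee, the upfront hardware price and the monthly support charge) is a hypothetical placeholder rather than real pricing, and the function names are invented for this example.

```python
from typing import Optional


def cumulative_cloud_cost(monthly_fee: float, months: int) -> float:
    """Total pay-as-you-go spend after the given number of months."""
    return monthly_fee * months


def cumulative_on_prem_cost(upfront: float, monthly_support: float, months: int) -> float:
    """Upfront hardware purchase plus recurring support charges."""
    return upfront + monthly_support * months


def break_even_month(monthly_fee: float, upfront: float,
                     monthly_support: float, horizon: int = 120) -> Optional[int]:
    """Return the first month in which on-premises is strictly cheaper, or None."""
    for month in range(1, horizon + 1):
        on_prem = cumulative_on_prem_cost(upfront, monthly_support, month)
        cloud = cumulative_cloud_cost(monthly_fee, month)
        if on_prem < cloud:
            return month
    return None


# Hypothetical figures for a steady, always-on workload (not real prices).
CLOUD_MONTHLY = 2_000.0
ON_PREM_UPFRONT = 30_000.0
ON_PREM_MONTHLY = 500.0

if __name__ == "__main__":
    print(break_even_month(CLOUD_MONTHLY, ON_PREM_UPFRONT, ON_PREM_MONTHLY))
```

With these made-up inputs, the two options tie at month 20 and on-premises becomes cheaper from month 21. A real comparison would also need to account for staffing, power, hardware refresh cycles and provider discounts, all of which this sketch ignores.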

Although on-premises infrastructure can be more affordable in some cases, seasoned cloud users are still attracted by the financial flexibility and efficiency of the cloud. They prefer to replace large, upfront capital expenses and ongoing hardware and software support charges with monthly or annual operational expenses. The following are some other notable cloud benefits.

- Lower operational costs. The cloud vendor assumes many equipment and software management tasks, from servers and networking gear to cloud storage. That includes applying software updates and security patches.

- Increased IT resources. Enterprises can access more resources for internal service development and digital transformation projects that directly support business units for easier business experimentation and innovation.

- Convenient, rapid access to technology. Enterprises can work with the latest hardware and software -- such as new CPUs, GPUs, Tensor Processing Units, network interfaces, and machine learning and AI applications -- often before they're available or affordable to enterprise buyers.

- Faster connectivity. Cloud providers invest in the latest network interface cards and switches, along with multi-Gbps circuits to internet exchange points. This provides fast access to data and applications, both within the data center and for customers connecting from outside.

- Greater scale and automation. The public cloud is engineered for massive scale. Providers can easily expand resource capacity for individual services to meet customers' workload demands. Many cloud resources and services can be provisioned and scaled with a high degree of automation using cloud providers' tools and monitoring services. This allows more resources to be provisioned as workload demands increase and also reduces resource use and cost during periods of off-peak demand.

- Greater expertise. Few businesses possess the internal expertise in secure infrastructure and security engineering offered by cloud providers. This expertise allows for highly specialized services, such as powerful analytics and AI, which might be impossible to implement with local data center staff.

- More reliable infrastructure. The resilience and redundancy found in cloud providers' physical infrastructure far outstrip what most companies can afford to build or operate. Cloud customers also can access multiple cloud locations, which simplifies redundant deployments. Some cloud services offer built-in multisite redundancy. The cloud offers significant uptime benefits and has emerged as a reliable infrastructure for resilient and disaster-resistant computing.

- Better data management. Cloud computing offers varied types and tiers of data storage. The onus is on businesses to understand what data is migrated to the cloud and how that data is protected, used, retained, shared and ultimately destroyed. The cloud is also a well-proven platform for protection against data loss, as well as for enhancing backup and disaster recovery (DR) initiatives. More recently, cloud data management has emerged as a central resource for machine learning training and validation data sets.

- Greater collaboration. The ease and speed with which anyone can use the public cloud make coordination and collaboration essential. Businesses that seek better communication and collaboration between teams and departments will find ample opportunities arising from cloud computing projects. Without careful collaboration, it's easy to waste money on redundant and unneeded resources that blunt the business benefits of the cloud.

- Improved compliance. Cloud providers are increasingly tailoring resources and services to industry sectors that must adhere to specific compliance requirements, such as HIPAA for healthcare businesses. Businesses without specific industry obligations can also implement cloud use, data protection, security and business continuance policies that help them meet general regulatory requirements.

- Smaller data center footprints. Using a public cloud allows businesses to reduce the number of resources and services required within their data centers. This lets businesses reduce, repurpose or even eliminate on-premises infrastructure entirely.
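
The scale-up and scale-down behavior described under "Greater scale and automation" can be sketched as a simple proportional rule: grow the fleet when observed utilization exceeds a target and shrink it when demand falls. This is a generic illustration under stated assumptions, similar in spirit to the proportional formula documented for the Kubernetes Horizontal Pod Autoscaler, and not any provider's actual algorithm. The function name, parameters and limits are hypothetical.

```python
import math


def desired_instances(current: int, observed_util: float, target_util: float,
                      min_instances: int = 1, max_instances: int = 10) -> int:
    """Proportional scaling rule: ceil(current * observed / target), clamped.

    Utilization values are percentages, for example 90.0 for 90% CPU.
    """
    if current < 1:
        raise ValueError("current must be at least 1")
    if target_util <= 0:
        raise ValueError("target_util must be positive")
    raw = math.ceil(current * observed_util / target_util)
    # Keep the result within the configured fleet limits.
    return max(min_instances, min(max_instances, raw))


if __name__ == "__main__":
    # Peak demand: 4 instances at 90% against a 60% target.
    print(desired_instances(4, 90.0, 60.0))
    # Off-peak demand: 4 instances at 15% against a 60% target.
    print(desired_instances(4, 15.0, 60.0))
```

In this sketch, the peak case grows the fleet from four instances to six, and the off-peak case shrinks it to one, which is how automated scaling reduces cost when demand drops. The `max_instances` cap is a design choice that guards against runaway spending.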

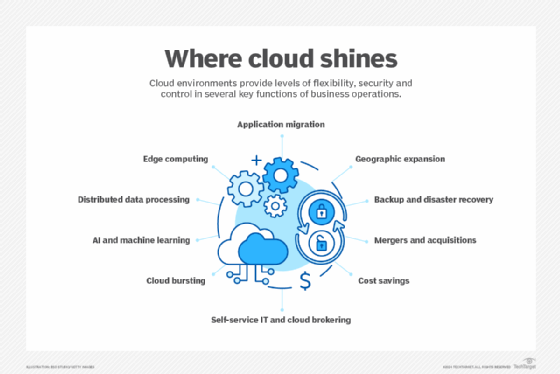

Data backup and DR are often a company's first foray into the cloud, but the richness of services means no application or workload type is off limits -- a primary reason why many businesses design next-generation applications around the cloud. Many also look to migrate legacy systems to the cloud, with the following categories as the most common:

- Databases of various sizes and types, along with complex back-end systems for enterprise applications such as ERP, CRM and financial processing.

- Websites, web-based applications and content distribution such as streaming.

- Data analytics for big data, machine learning and AI projects, including IoT-streamed data consolidation and analysis.

- Miscellaneous legacy software running on internal VM environments.

- Software development, testing and DevOps workflows.

Businesses have also discovered that the cloud is an excellent fit for containerized, microservices-based applications and for AI application development and deployment. Cloud providers have responded with a rich suite of container and orchestration services, built on technologies such as Docker and Kubernetes, to deploy and manage containers with a high degree of automation.

Cons of cloud computing

Although the cloud has been a boon for IT organizations, cloud services aren't a panacea for all IT operational problems. A company must balance the cloud's many benefits against the following downsides:

- A complicated shared security model. Security policies and management are split between the provider and user. Understanding the division in this shared responsibility is crucial, as mistakes or neglect can expose vast amounts of sensitive data.

- Vendor lock-in. Cloud vendors aren't interchangeable. Cloud providers share many common service types, but access techniques such as APIs, as well as service levels and pricing, can vary dramatically. It might not be possible to migrate a workload from one cloud provider to another without some amount of rearchitecting for the new cloud environment.

- Complex pricing structures. Some services, such as compute instances, have multiple subscription tiers and pricing schemes. These variables make understanding the pricing and total cost of ownership tedious, error-prone and time-consuming; doing so typically requires software assistance from built-in or third-party tools and advanced practices such as FinOps. The addition of free service levels and discount availability only adds complexity to pricing considerations.

- Outbound data transfer costs. It's expensive to egress large data sets from a cloud provider to the local data center or another cloud, which also creates a disincentive for a company to move from one cloud provider to another. Data egress costs, combined with limited bandwidth for data movement, can slow vital data recovery processes when restoring data from cloud storage to on-premises storage.

- Less flexibility than on-premises environments. Many configuration choices are made by the provider, so customers have limited control over the resources, services and performance of a cloud provider's infrastructure.

- Sketchy, inconsistent customer support. Cloud service providers can be difficult to reach or slow to respond to technical issues or cost concerns. As a result, many businesses contract with a third-party cloud management and support partner.

- Reliance on internet connectivity. Cloud computing requires either reliable connections to networks and the internet or a direct private link to the provider. This is especially important for remote locations such as edge facilities. Internet connectivity must provide the bandwidth and latency needed to support remote workloads running in the cloud. Any disruptions to internet connectivity can disrupt cloud access.

- Cloud-specific skills. Most internal IT teams don't possess the cloud design and operations expertise found on a cloud provider's payroll. Such cloud-skilled staff can be hard to recruit and retain, as workers with those advanced skills are attractive to other organizations as well as to the cloud providers themselves.

- Country- or industry-specific regulatory requirements. Businesses must plan carefully, especially when data and workloads are hosted outside the business's home country or in a country with strict privacy laws. Note that a cloud provider's presence in a particular location might imply jurisdiction and a need to comply with local regulations. In addition, emerging data sovereignty requirements can complicate data placement and retention demands in different global jurisdictions.

- Risk of workload repatriation. Ideally, a workload, its data and its dependencies can be successfully migrated to the cloud. But there are cases when a workload can't perform in the way the business requires, and it might need to be migrated from the cloud back to on-premises infrastructure. The repatriation process can prove problematic without careful planning and preparation.
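
The combined effect of egress charges and limited bandwidth noted under "Outbound data transfer costs" can be estimated with simple arithmetic. The sketch below is illustrative only: the per-gigabyte rate and link speed are hypothetical placeholders, not any provider's published pricing, and it assumes decimal units and a fully utilized link.

```python
def egress_cost_usd(data_gb: float, price_per_gb: float) -> float:
    """Charge for moving data out of the cloud at a flat per-GB rate."""
    return data_gb * price_per_gb


def transfer_hours(data_gb: float, bandwidth_mbps: float) -> float:
    """Best-case transfer time, assuming the link runs at full speed throughout."""
    if bandwidth_mbps <= 0:
        raise ValueError("bandwidth_mbps must be positive")
    megabits = data_gb * 8_000  # 1 GB = 8,000 megabits in decimal units
    seconds = megabits / bandwidth_mbps
    return seconds / 3_600


if __name__ == "__main__":
    DATA_GB = 50_000.0       # a hypothetical 50 TB restore
    PRICE_PER_GB = 0.09      # hypothetical flat rate in USD
    BANDWIDTH_MBPS = 1_000.0  # hypothetical 1 Gbps link

    print(f"cost: ${egress_cost_usd(DATA_GB, PRICE_PER_GB):,.0f}")
    print(f"time: {transfer_hours(DATA_GB, BANDWIDTH_MBPS):.1f} hours")
```

With these made-up inputs, restoring 50 TB would cost roughly $4,500 and take about 111 hours, or more than four and a half days, even on an uncontended 1 Gbps link. Real pricing is usually tiered, so an actual estimate would need the provider's current rate card.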

Weighing cloud options for your business

Most businesses find cloud services are a superior alternative to traditional data centers for some workloads. The trick for enterprises is to find the balance of cloud and on-premises resources by assessing the best fit for both their legacy and future applications. Weigh and compare all factors -- performance, efficiency, speed, scalability, reliability, security and lifetime cost -- between a cloud service and on-premises systems, or even a private cloud setup. If the scales tip toward the cloud, lock it in with clearly defined service-level agreements and take advantage of long-term usage discounts if possible. Emerging FinOps practices can help improve a company's cost control over cloud utilization.

For some businesses, particularly SMBs and startups, an all-cloud future makes sense, whereas large enterprises generally converge on an optimal mix of cloud and traditional infrastructure.
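
One way to make the weighing of factors described above more concrete is a weighted scoring matrix. The following Python sketch is only an illustration: the weights, the 1-to-5 scores and the three options are hypothetical values that each business would replace with its own assessments, and the function names are invented for this example.

```python
def weighted_score(scores: dict, weights: dict) -> float:
    """Weighted average of factor scores; every weighted factor must be scored."""
    missing = set(weights) - set(scores)
    if missing:
        raise KeyError(f"missing scores for: {sorted(missing)}")
    total_weight = sum(weights.values())
    return sum(scores[name] * weight for name, weight in weights.items()) / total_weight


def rank_options(options: dict, weights: dict) -> list:
    """Option names ordered from highest to lowest weighted score."""
    return sorted(options, key=lambda name: weighted_score(options[name], weights),
                  reverse=True)


# Hypothetical importance of each factor to the business (higher matters more).
WEIGHTS = {"performance": 3, "efficiency": 1, "speed": 1, "scalability": 2,
           "reliability": 3, "security": 3, "lifetime_cost": 2}

# Hypothetical 1-to-5 scores for a single workload under each hosting option.
OPTIONS = {
    "public_cloud": {"performance": 4, "efficiency": 4, "speed": 5, "scalability": 5,
                     "reliability": 4, "security": 3, "lifetime_cost": 3},
    "on_premises": {"performance": 5, "efficiency": 3, "speed": 2, "scalability": 2,
                    "reliability": 3, "security": 4, "lifetime_cost": 4},
    "private_cloud": {"performance": 4, "efficiency": 3, "speed": 3, "scalability": 3,
                      "reliability": 4, "security": 4, "lifetime_cost": 2},
}

if __name__ == "__main__":
    for name in rank_options(OPTIONS, WEIGHTS):
        print(name, round(weighted_score(OPTIONS[name], WEIGHTS), 2))
```

A scoring matrix like this doesn't replace judgment, but it forces the assumptions behind a placement decision into the open and makes it easy to rerun the comparison workload by workload.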

The big 3 dominate the cloud market

Most of the focus of the cloud market falls on the top three providers: AWS, Microsoft and Google. All three now offer hundreds of tools and services, but it wasn't always this way.

In 2006, AWS was relaunched with three services that are still core to its portfolio: Amazon Elastic Compute Cloud (Amazon EC2), Amazon Simple Storage Service (Amazon S3) and Amazon Simple Queue Service (Amazon SQS). Over its first decade, AWS regularly improved the capabilities and purchase flexibility of each service and vastly expanded its range of infrastructure services. These additions include container clusters, serverless functions, block and network file storage, multiple SQL and NoSQL databases, network and content delivery systems, as well as a host of monitoring, management and security features.

Google, which had long used its own internal cloud infrastructure to power its search engine, ad brokerage and consumer applications, made its first foray into the cloud market in 2008 with App Engine, a PaaS offering with limited functionality. Microsoft entered the cloud market in 2010 with Azure and added Azure Stack, its hybrid infrastructure, six years later.

Throughout the last decade, the Big Three grew to dominate the market. A November 2024 report by Synergy Research Group noted that Amazon held 31% of the worldwide cloud market in Q1 2024, Microsoft held 20% and Google ranked third at 13%. Providers such as Oracle, Huawei, Snowflake and Cloudflare account for the remainder of the global cloud market.

The shift from infrastructure to apps

The latter half of the 2010s saw cloud vendors expand into higher-level services, a trend that continues to this day. Many of these products encapsulate the core back-office functions required by enterprise IT. For example, database services have been a central offering for years, and cloud providers quickly began to offer packaged services for security, identity management, and monitoring and management automation, which administrators and developers can use to streamline daily tasks.

In addition to these infrastructure management tools, cloud vendors have added more sophisticated virtual network offerings, DevOps services such as code repositories and CI/CD pipeline automation, and cost and configuration management services. Other capabilities, such as container support, are delivered through managed offerings built on technologies such as Docker and Kubernetes.

More recently, cloud competition has shifted to packaged applications for developers and data analysts, such as AI and machine learning services. As cloud vendors have moved up the software stack, services decouple users from the underlying infrastructure by automatically provisioning the compute and storage capacity required to handle a workload and then decommissioning it when the task is done. These serverless products further reduce management overhead by eliminating the need for users to provision and configure infrastructure services. Instead, IT pros merely need to invoke the proper APIs.
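
To show how little infrastructure code a serverless function involves, here is a minimal Python sketch. It follows the `handler(event, context)` convention used by AWS Lambda's Python runtime, but the event shape, the response format and the greeting logic are assumptions made for illustration, and other platforms use different conventions.

```python
import json


def handler(event, context):
    """Entry point the platform would call for each request.

    There is no server, port or scaling configuration here; in a serverless
    model the provider handles those. The "name" field is a hypothetical input.
    """
    name = event.get("name", "world")
    return {
        "statusCode": 200,
        "body": json.dumps({"message": f"Hello, {name}"}),
    }


if __name__ == "__main__":
    # Local stand-in for a platform invocation; context is unused in this sketch.
    print(handler({"name": "cloud"}, None))
```

The function contains only business logic. Provisioning capacity when a request arrives and releasing it afterward is left to the provider, which is the decoupling from infrastructure described above.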

Editor's note: This article was updated in January 2025 to reflect the latest advantages and disadvantages of cloud computing.

Stephen J. Bigelow, senior technology editor at TechTarget, has more than 30 years of technical writing experience in the PC and technology industry.