Classical vs. quantum computing: What are the differences?

Classical and quantum computers differ in their computing capabilities, how they operate and the resources they need. Know the differences to plan for a quantum future.

Classical computing has been the norm for decades, but in recent years, quantum computing has continued to develop rapidly. While the technology is still in its early stages, it has significant potential for AI, cybersecurity, optimization, modeling and other applications.

It could be years before quantum computing is widely available. However, it's not too early to acquire a strong grasp of the differences between classical and quantum computing to be ready for the time when quantum is a realistic option for business and IT leaders.

Differences between classical and quantum computing

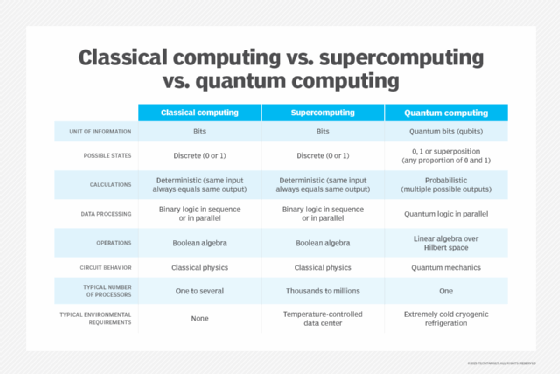

Classical and quantum computers differ in three primary ways.

First, classical computers represent data and logic with bits, the familiar 0s and 1s of binary computing. Quantum computers use qubits, which can be 0, 1 or a superposition of both, meaning a weighted combination of 0 and 1 that resolves to a single value only when it is measured.

Second, quantum computers must typically be operated in more finely controlled physical conditions than classical computers. That's because some properties of quantum mechanics -- the branch of physics dealing with the behavior of subatomic particles -- mean qubits are easily disturbed by heat and vibration.

Third, in theory, quantum computers could deliver more compute power than classical computers on certain classes of problems, because the number of states a group of qubits can represent grows exponentially as qubits are added.

Units of data: Bits vs. qubits

Classical computers use bits as their basic unit of data and binary (two-value) logic for calculations. The 1s and 0s typically indicate the state of on or off, respectively. They can also indicate true or false, or yes or no, for example.

Classical computers also typically use serial processing, meaning one operation must complete before another can start. However, many high-end systems use parallel processing, which means they can perform multiple tasks simultaneously. Classical computation is also deterministic, so the same computation on the same input returns the same result each time it is repeated.

Quantum computing follows a different set of rules. As noted, qubits can exist in multiple states at once because of a unique quantum feature called superposition, where properties are not defined until they are measured. Superposition enables groups of qubits to handle complex, multidimensional computations.
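The idea of a state that is not settled until it is measured can be illustrated with a small classical simulation. The following is a minimal sketch in plain Python, not code for a real quantum computer or SDK. It assumes a single qubit described by two amplitudes whose squared magnitudes give the measurement probabilities; the function names are hypothetical and chosen only for illustration.

```python
import math
import random


def measurement_probabilities(amplitudes):
    """Return [P(0), P(1)] for a single-qubit state given as two amplitudes."""
    norm = sum(abs(a) ** 2 for a in amplitudes)
    return [abs(a) ** 2 / norm for a in amplitudes]


def measure(amplitudes, rng):
    """Simulate one measurement: the superposition resolves to 0 or 1."""
    p0 = measurement_probabilities(amplitudes)[0]
    return 0 if rng.random() < p0 else 1


# Equal superposition: both amplitudes are 1/sqrt(2), so 0 and 1 are equally likely.
equal_superposition = [1 / math.sqrt(2), 1 / math.sqrt(2)]
probabilities = measurement_probabilities(equal_superposition)

# Repeating the same measurement gives varying results, unlike reading a classical bit.
rng = random.Random(0)  # fixed seed so the simulation is repeatable
samples = [measure(equal_superposition, rng) for _ in range(1000)]
counts = {0: samples.count(0), 1: samples.count(1)}

print("Probabilities:", probabilities)
print("Counts over 1,000 simulated measurements:", counts)
```

Each simulated measurement returns a plain 0 or 1. The superposition shows up only in the statistics across many repeated runs, which is why quantum results are usually reported as distributions rather than single values.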

Another important characteristic of quantum mechanics is entanglement, in which quantum particles are linked together and share the same fate, even if they're separated by great distances. When qubits become entangled, their states can no longer be described independently, so measuring one qubit immediately reveals information about the other. Combined with superposition, entanglement enables a form of parallelism that theoretically lets quantum computers scale up compute capacity faster than classical computers. It also makes them better able to account for multiple outcomes that have different probabilities. This provides further advantages for complex computations, especially for optimization, simulation and modeling problems -- another important difference between the two computing paradigms.
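The "shared fate" of entangled qubits can also be sketched classically. The example below is an illustrative simulation only, assuming the simplest entangled two-qubit state (often called a Bell state), in which the outcomes 00 and 11 are each 50% likely and 01 and 10 never occur. The names `BELL_STATE` and `sample_outcome` are hypothetical.

```python
import math
import random

# Amplitudes for the four two-qubit outcomes. Only 00 and 11 have nonzero amplitude.
BELL_STATE = {
    "00": 1 / math.sqrt(2),
    "01": 0.0,
    "10": 0.0,
    "11": 1 / math.sqrt(2),
}


def sample_outcome(state, rng):
    """Simulate measuring both qubits; probability is the squared amplitude."""
    outcomes = list(state)
    weights = [abs(state[outcome]) ** 2 for outcome in outcomes]
    return rng.choices(outcomes, weights=weights, k=1)[0]


rng = random.Random(42)  # fixed seed so the simulation is repeatable
results = [sample_outcome(BELL_STATE, rng) for _ in range(1000)]

# Each qubit on its own looks random, but the pair always agrees.
always_agree = all(result[0] == result[1] for result in results)
print("Outcomes seen:", sorted(set(results)))
print("Both qubits always match:", always_agree)
```

Neither qubit's value is predictable in advance, yet the two values always match. That correlation, rather than any signal passing between the qubits, is what the simulation is meant to show.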

But there's an important caveat: Quantum computers have yet to reach the scale of classical computers, especially in large data centers or supercomputers. Building a quantum computer with sufficient qubits to reach comparable scale remains a major challenge.

Power of classical vs. quantum computers

Most classical computers operate through Boolean logic and algebra, and compute power increases roughly linearly with the number of semiconductor transistors in a system, the switches that represent the 1s and 0s. In other words, compute power increases in a roughly 1-to-1 relationship with the number of transistors.

In comparison, because a quantum computer's qubits can represent a 1 and 0 at the same time, its compute power can increase exponentially in relation to the number of qubits. The number of states a quantum computer can represent at once is 2^N, where N is the number of qubits. For example, 10 qubits span 1,024 states and 20 qubits span 1,048,576.
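To make the 2^N relationship concrete, the following sketch tabulates how quickly the state count grows and how much memory a classical machine would need just to store every amplitude. It assumes 16 bytes per amplitude (a double-precision complex number), which is an illustrative choice rather than a property of any particular simulator; the function names are hypothetical.

```python
def basis_state_count(num_qubits):
    """Number of states N qubits can represent at once: 2 to the power N."""
    return 2 ** num_qubits


def state_vector_bytes(num_qubits, bytes_per_amplitude=16):
    """Memory needed to store one amplitude per state (16 bytes is an assumption)."""
    return basis_state_count(num_qubits) * bytes_per_amplitude


for num_qubits in (1, 2, 10, 20, 30, 50):
    states = basis_state_count(num_qubits)
    gibibytes = state_vector_bytes(num_qubits) / 2 ** 30
    print(f"{num_qubits:>2} qubits: {states:,} states, about {gibibytes:,.6f} GiB")
```

Adding one qubit doubles the state count, whereas adding one classical bit adds only a single bit of storage. Under the 16-byte assumption, 30 qubits already require 16 GiB to describe classically.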

Operating environments

Classical computers are well suited for everyday use in normal environments. Consider something as simple as a standard laptop: Most people can take one out of their briefcase and use it in an air-conditioned café or outside on a sunny summer day. In such environments, the performance of all but the most compute- and disk-intensive applications won't take a hit.

Data centers and parallel-processing supercomputers are more complex and sensitive to temperature but still operate within what most people consider reasonable temperatures, such as room temperature. For example, ASHRAE recommends that data center hardware stay at 18 to 27 degrees Celsius, or 64.4 to 80.6 degrees Fahrenheit.

Some quantum computers, in contrast, must be in stringently controlled environments. Some need to be kept within a fraction of a degree of absolute zero, which is minus 273.15 degrees Celsius or minus 459.67 degrees Fahrenheit, although several companies are working to develop room-temperature quantum computers.

The reason for the extremely cold operating environments is that mechanical and thermal disturbances can cause qubits to lose their quantum coherence -- essentially, the ability of a qubit to represent both a 1 and a 0 -- which can cause errors in computations.
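The temperature figures in this section can be checked with the standard conversion formulas. The short sketch below is only an arithmetic check of the Celsius and Fahrenheit values quoted above; the helper names are hypothetical.

```python
def celsius_to_fahrenheit(celsius):
    """Standard conversion: F = C * 9/5 + 32."""
    return celsius * 9 / 5 + 32


def celsius_to_kelvin(celsius):
    """Kelvin uses the same degree size as Celsius, offset by 273.15."""
    return celsius + 273.15


ABSOLUTE_ZERO_C = -273.15

# Recommended data center range and absolute zero, as quoted in the text.
for label, celsius in (
    ("Data center low", 18),
    ("Data center high", 27),
    ("Absolute zero", ABSOLUTE_ZERO_C),
):
    fahrenheit = celsius_to_fahrenheit(celsius)
    kelvin = celsius_to_kelvin(celsius)
    print(f"{label}: {celsius:.2f} C = {fahrenheit:.2f} F = {kelvin:.2f} K")
```

The gap between the two operating environments is roughly 290 degrees Celsius, which helps explain why cryogenic quantum systems need cooling infrastructure that ordinary data center equipment does not.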

Why data center managers should take note of quantum computing

Like most technologies, quantum computing poses opportunities and risks. While it might be a while before quantum computers really take off, data center managers are starting to have conversations with their leadership and develop plans for quantum computing.

Even organizations that don't plan on implementing quantum computing in their business still need to prepare for the external threats quantum computing could pose. A sufficiently powerful quantum computer could potentially crack security measures that are considered strong today. For example, a motivated hacker could, in theory, use quantum computing to quickly break the cryptographic keys commonly used in public-key encryption.

Organizations considering quantum computers for their data centers or certain applications will have to prepare their facilities. Like any other piece of infrastructure, quantum computers need space, electricity and other resources to operate. It's a good idea to begin examining the available options to accommodate them, including budget, facilities and staffing.

Ryan Arel is a former TechTarget associate site editor.