
AI risk management: A strategic guide for enterprise leaders

Enterprises across business sectors are adopting AI technologies, but are they prepared to deal with the risks? Our AI risk management guide can help.

AI adoption across all business sectors continues to grow steadily. According to a McKinsey & Company survey, "The state of AI: How organizations are rewiring to capture value," 78% of respondents report that their organizations are using AI in at least one business function, up from 55% in 2023. Experts expect these numbers to grow further as more companies rush to adopt AI for different business functions. But are organizations prepared for adoption at this scale?

AI adoption brings numerous benefits to businesses, such as increased productivity, enhanced customer experience, stronger competitive positioning and reduced operational costs; however, failing to adopt AI effectively can limit its advantages and even impede organizational objectives. Leaders must prepare for the risks associated with AI, including security and operational concerns. Having a proactive, comprehensive risk management strategy is critical to ensure success and sustained trust among stakeholders.

Discover the risks associated with AI implementations throughout their lifecycle and the best methods to mitigate and manage them. Then use the checklist below as a template to assess AI risks and their effect on business processes and implement control plans to contain each risk.

Understanding the AI lifecycle and risks

Developing and deploying effective AI systems requires following a predefined lifecycle composed of distinct phases, each with its own risks and challenges. To better prepare for each unique challenge, teams and stakeholders must understand the following phases of the AI lifecycle.

Design and development. In the first phase, teams collect data, train AI models on it and run initial tests. For example, when designing a fraud detection system, the first step is to collect historical transaction data, then select appropriate algorithms (e.g., neural networks) and train the model to identify suspicious patterns.

Deployment. Next, the AI system is integrated with existing infrastructure and becomes accessible to end users. In the fraud detection example, the system would integrate with the bank's transaction software to automatically detect fraudulent transactions.

Maintenance and monitoring. This is a continuous phase as teams must monitor their AI system to ensure it behaves properly. This involves tracking AI performance, updating the training data sets and addressing any emerging issues that require a core system update. In our example, continually updating the fraud detection system ensures it remains effective against evolving fraud tactics.

Understanding the risks associated with each of these phases is critical to a responsible AI risk management strategy.

Categories of AI risks

AI systems are subject to risks that fall into four major categories:

- Technical.

- Operational.

- Ethical.

- Regulatory.

Understanding these risks is critical to securing AI systems and ensuring their reliability, compliance and trustworthiness throughout their lifecycle. Consider the following categories:

Technical risks. Technical risks include both security vulnerabilities inherent to AI systems and the limitations of AI systems themselves. For example, data quality affects how an AI system operates; if the data is incomplete, biased or manipulated (e.g., through data poisoning), the model can produce inaccurate or misleading outputs, because it is limited to its training data. A medical diagnostic system trained on incomplete or unrepresentative data sets could produce unsafe or unreliable recommendations, endangering patients.

AI systems are also vulnerable to adversarial attacks, including adversarial inputs designed to manipulate model outputs, prompt injection attacks in generative AI systems and model extraction attacks that aim to replicate model functionality, thereby stealing intellectual property.

Operational risks. These risks stem from how an organization integrates, manages and maintains an AI system within its existing systems. For example, an AI-powered customer support chatbot might not integrate seamlessly with the organization's CRM. This leads to fragmented customer service, which affects the customer experience.

Another operational risk for an AI system is a lack of explainability. The AI decision-making process must be clear so that users can understand why certain decisions were made. In a bank system where AI approves loans, bankers need to understand why AI approved or rejected a loan.

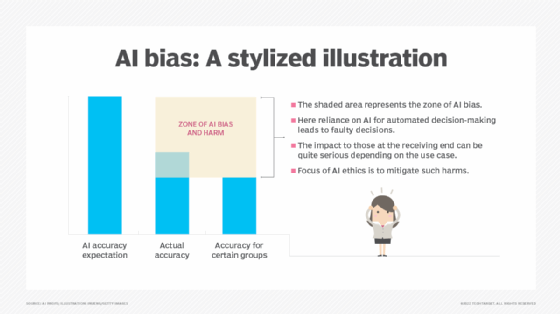

Ethical risks. Using AI systems comes with inherent ethical risks. For example, an AI system used in recruitment could discriminate against specific ethnic groups. Because AI systems can access vast amounts of personal data, such as faces recorded by surveillance cameras or sensitive patient information held in medical systems, privacy also becomes a risk.

A lack of accountability becomes another ethical risk with AI. Who is to blame when the AI system makes mistakes? Think of an autonomous vehicle causing an accident. Who is responsible: the manufacturer, the software developer or the operator?

Regulatory risks. These risks include noncompliance with data protection regulations and laws that govern the use of AI at work. If an organization's AI system processes the personal data of individuals in the EU, it is subject to the GDPR.

In the U.S., the NIST AI Risk Management Framework (AI RMF) provides guidelines for the safe implementation of AI systems. Although the U.S. framework is voluntary, failing to follow its best practices could make businesses subject to legal challenges and reputational damage.

Evaluating and prioritizing AI risks

Effective AI risk management requires organizations to have a framework for evaluating and prioritizing risks. This ensures resources go first to mitigating the most critical threats. Consider the following tips for evaluating and prioritizing risks to AI systems.

Risk identification across the AI lifecycle

Identifying AI risks is a continual process integrated across the entire AI lifecycle and across all risk categories. Consider a diagnostic AI system in healthcare. In the design and development phase, technical risks could arise from insufficient data sets for model training, leading to errors in diagnosing some diseases or patients. Ethical risks could include a lack of transparency into how the system reaches its conclusions, which can severely undermine clinical trust. Operational risks during deployment could include integration issues between the AI system and existing hospital medical systems, leading to fragmented patient diagnoses across systems. Regulatory risks arise if the AI system improperly processes patients' sensitive data, which can violate regulations such as HIPAA.

Assessing business impacts

After identifying risks, assess their potential business impact. If a financial organization's AI-powered credit scoring system suffers from model bias, this technical risk could result in regulatory fines, lost revenue from unfairly rejected applicants and eroded customer trust. It could also affect operations, as staff take on more manual work to correct the AI system's biased decisions. The business could face legal risks as well, such as customers filing discrimination lawsuits, which would cost the organization more time and money to handle.

Likelihood and impact matrices

These matrices can prioritize risks by mapping their probability of occurrence against their severity. For example, we can categorize likelihood in the matrix as follows:

- Rare (unlikely to occur).

- Possible (could occur).

- Likely (expected to occur).

- Almost certain (highly probable).

Then, we can categorize impact as follows:

- Low (minimal disruption).

- Medium (moderate disruption).

- High (significant disruption).

- Critical (catastrophic consequences).

For example, a risk in an AI library with a "critical" impact and an "almost certain" likelihood would be a top priority. In contrast, a risk with a "rare" likelihood and a "low" impact, such as an attack that requires significant resources to execute and causes only minor damage, would be a lower priority.

Risk scoring

Risk scoring assigns a numeric value to each risk. This lets stakeholders compare and prioritize risks by severity. Do this by multiplying the assigned numerical values for likelihood and impact. For instance, if likelihood is scored from "1 - Rare" to "4 - Almost certain" and impact from "1 - Low" to "4 - Critical", a risk with a "4 - Almost certain" likelihood and "4 - Critical" impact would receive a score of 16, which indicates extreme priority.
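The scoring arithmetic above can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed tool: the 1 to 4 scales come from the text, while the `Risk` class, the `prioritize` helper and the sample register entries are hypothetical names and data introduced only for this example.

```python
from dataclasses import dataclass

# Scales taken from the text: 1-4 for both likelihood and impact.
LIKELIHOOD = {"rare": 1, "possible": 2, "likely": 3, "almost certain": 4}
IMPACT = {"low": 1, "medium": 2, "high": 3, "critical": 4}


@dataclass(frozen=True)
class Risk:
    name: str        # hypothetical risk label
    likelihood: str  # one of the LIKELIHOOD keys
    impact: str      # one of the IMPACT keys

    @property
    def score(self) -> int:
        """Likelihood value multiplied by impact value (range 1 to 16)."""
        return LIKELIHOOD[self.likelihood] * IMPACT[self.impact]


def prioritize(risks):
    """Return risks ordered from highest to lowest score."""
    return sorted(risks, key=lambda r: r.score, reverse=True)


# Hypothetical register entries, for illustration only.
register = [
    Risk("CRM integration gaps", "possible", "medium"),
    Risk("Training data poisoning", "almost certain", "critical"),
    Risk("Model extraction", "rare", "low"),
]

for risk in prioritize(register):
    print(f"{risk.score:>2}  {risk.name}")
```

Sorting by score gives stakeholders a single ranked list, though teams might still review ties and low-likelihood, critical-impact risks by hand rather than rely on the number alone.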

AI risk management checklist

Use this free checklist to establish AI risk management best practices within your business.

Mitigating and controlling AI risks

Effective AI governance extends beyond identifying and prioritizing risks. It requires developing and implementing comprehensive mitigation and control strategies. This enables organizations to mitigate risks from adversarial attacks and to ensure responsible, trustworthy AI deployments.

The following controls can help mitigate AI risks:

Technical controls

Technical controls protect against the inherent vulnerabilities of AI systems. Consider the following focus areas to implement technical controls:

- Data quality. Implement data validation to ensure that the data fed into the AI system is consistent and clean.

- Explainability. To address model explainability, implement tools such as local interpretable model-agnostic explanations (LIME) or Shapley additive explanations (SHAP), which help interpret a model's decision-making process.

- Security measures. Implement encryption to protect AI model data at rest and in transit.

- Red teaming. Conduct regular red teaming exercises to ensure in-house teams discover security vulnerabilities before threat actors exploit them.
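As one illustration of the data quality control above, the following Python sketch checks records before they reach a model. It assumes the fraud detection example from earlier in this guide; the field names, the `SCHEMA` rules and both helper functions are hypothetical and would need to match an organization's real data.

```python
import math

# Hypothetical schema for transaction records: field -> (type, validity check).
SCHEMA = {
    "transaction_id": (str, lambda v: len(v) > 0),
    "amount": (float, lambda v: math.isfinite(v) and v >= 0),
    "currency": (str, lambda v: len(v) == 3 and v.isupper()),
}


def validate_record(record):
    """Return a list of problems found in one record (empty list means clean)."""
    problems = []
    for field, (expected_type, is_valid) in SCHEMA.items():
        if field not in record or record[field] is None:
            problems.append(f"{field}: missing")
        elif not isinstance(record[field], expected_type):
            problems.append(f"{field}: expected {expected_type.__name__}")
        elif not is_valid(record[field]):
            problems.append(f"{field}: out of range or malformed")
    return problems


def split_clean(records):
    """Separate records that pass validation from those that need review."""
    clean, rejected = [], []
    for record in records:
        problems = validate_record(record)
        if problems:
            rejected.append((record, problems))
        else:
            clean.append(record)
    return clean, rejected
```

Keeping rejected records together with the reasons they failed, rather than silently dropping them, gives teams a trail to review when they suspect incomplete or manipulated data.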

Operational controls

Operational controls concern the deployment and maintenance of AI systems and their integration with existing infrastructure. Monitor performance continuously so that model drift is caught before it causes inaccurate results. Have an incident response plan ready to handle any AI system failure. Finally, include guidelines on when a human should intervene in the AI's autonomous decision-making process. This helps contain failures and limit damage.
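One way to make drift monitoring concrete is to compare the distribution of recent model scores with a baseline. The Python sketch below uses the population stability index (PSI), a technique chosen here for illustration and not named in this guide. The function names, the assumption that scores fall between 0 and 1, and the 0.2 threshold are all assumptions to tune per system.

```python
import math


def psi(expected, actual, bins=10, eps=1e-6):
    """Population stability index between baseline and recent model scores.

    Assumes both inputs are non-empty lists of scores in [0, 1] and uses
    equal-width bins.
    """
    def proportions(values):
        counts = [0] * bins
        for v in values:
            # Clamp so that v == 1.0 (or a stray out-of-range value) stays in range.
            index = max(0, min(int(v * bins), bins - 1))
            counts[index] += 1
        # eps avoids log(0) and division by zero for empty bins.
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Assumed rule-of-thumb threshold, not a standard mandated by any framework.
DRIFT_THRESHOLD = 0.2


def needs_review(baseline_scores, recent_scores):
    """Flag the model for human review when the score distribution has shifted."""
    return psi(baseline_scores, recent_scores) > DRIFT_THRESHOLD
```

A flag from `needs_review` could feed the incident response plan or trigger human intervention, depending on how critical the system is.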

Ethical controls

Ethical controls ensure fairness, privacy and accountability within the AI system. These controls are critical for reducing harm, ensuring user trust in AI output and aligning AI deployments with organizational and regulatory expectations. Organizations should regularly audit their AI models to detect bias in both training data and model outputs, so they can correct it before it affects decisions. Design AI models using a privacy-by-design approach, which means embedding data protection measures across the entire AI design lifecycle. Establish clear ownership and governance over AI systems. For mission-critical AI systems, have a human in the loop to validate or override automated decisions.
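A bias audit of model outputs can start with something as simple as comparing approval rates across groups. The Python sketch below is one possible check, not a complete fairness assessment: the function names and the input format are hypothetical, and the 0.8 review threshold is an assumption borrowed from the "four-fifths" rule of thumb used in U.S. employment contexts.

```python
from collections import defaultdict


def selection_rates(decisions):
    """decisions: iterable of (group, approved) pairs -> {group: approval rate}."""
    totals, approved = defaultdict(int), defaultdict(int)
    for group, was_approved in decisions:
        totals[group] += 1
        approved[group] += int(was_approved)
    return {g: approved[g] / totals[g] for g in totals}


def disparate_impact_ratio(decisions):
    """Lowest group approval rate divided by the highest (1.0 means parity)."""
    rates = selection_rates(decisions)
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group had any approvals, so rates do not differ
    return min(rates.values()) / highest


# Assumed review threshold; the appropriate value depends on context and law.
REVIEW_BELOW = 0.8
```

For example, if a loan model approved 8 of 10 applicants in one group and 4 of 10 in another, the ratio would be 0.5, which falls below the assumed threshold and would warrant a closer look by the team that owns the system.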

Regulatory compliance

Regulatory compliance ensures that AI systems operate in alignment with enforced government and industry standards. This is especially critical in highly regulated industries where misusing personal data or using automated decision-making processes can lead to legal, financial and reputational consequences. For AI systems that process sensitive user data, maintain compliance with data protection and AI use regulations, such as the GDPR. For jurisdictions with no clear AI-specific legislation, it is beneficial to follow frameworks such as the NIST AI RMF or ISO/IEC 42001, as they provide a structured approach to managing AI risks and demonstrating due diligence.

Nihad A. Hassan is an independent cybersecurity consultant, digital forensics and cyber OSINT expert, online blogger and author with more than 15 years of experience in information security research. He has authored six books and numerous articles on information security. Nihad is highly involved in security training, education and motivation.