When to use canary vs. blue/green vs. rolling deployment

App deployment strategies such as rolling updates and blue/green environments help IT organizations manage change with minimal risk. See which strategy fits your application.

Rolling, blue/green and canary deployments are all popular options for new releases of an application. Each approach fits some use cases better than others.

Because each deployment method has pros and cons, IT organizations should compare them for each type of app they support. For example, the rolling deployment method benefits applications that experience incremental changes on a recurring basis. Blue/green, which requires a large infrastructure budget, best suits applications that receive major updates with each new release. Canary deployment can work well for fast-evolving applications and fits situations where rolling deployment is not an option due to infrastructure limitations.

Have a sense of the application's demands and your organization's budget for IT resources? Evaluate the three deployment choices below.

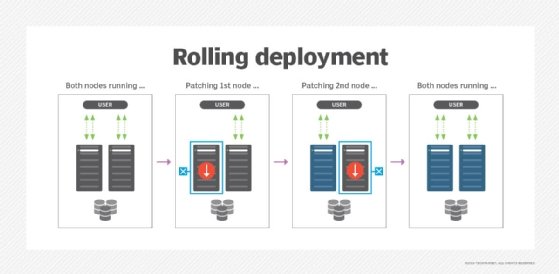

Rolling deployment

In a rolling deployment, IT teams maintain one production environment for a distributed application. It consists of multiple servers or cloud instances, each hosting a copy of the application. There is also usually a load balancer that routes traffic between servers.

To deploy a new version of the application, IT admins stagger the release so that the update activates on some servers or instances before others. During the rollout, some servers run the new version of the application, while others continue to host the older one. As traffic comes in to the application, some users interact with the new code, while others land on the known-good production version.

As long as no issues appear with the new version, deployment continues across the hosting environment until all of the servers run the updated application. If errors occur, however, the operations team can redirect all traffic to the servers that still host the known-good version until the problem with the new release is resolved.
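The staggered rollout described above can be sketched in a few lines of Python. This is a minimal illustration, not a production tool: the `Server` class, the `rolling_deploy` and `known_good` functions, and the `is_healthy` callback are all hypothetical names, and a real rollout would call an orchestrator or load balancer API rather than change a field in memory.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Server:
    """Hypothetical stand-in for one server or cloud instance."""
    name: str
    version: str


def rolling_deploy(
    servers: List[Server],
    new_version: str,
    is_healthy: Callable[[Server], bool],
    batch_size: int = 1,
) -> bool:
    """Update servers batch by batch and halt at the first unhealthy batch.

    Returns True if every server ends up on new_version, False if the
    rollout stopped early. Servers that were not reached keep the old
    version, so traffic can still be sent to them.
    """
    for start in range(0, len(servers), batch_size):
        batch = servers[start:start + batch_size]
        for server in batch:
            server.version = new_version  # assumed: the update is applied here
        if not all(is_healthy(server) for server in batch):
            return False  # stop; the remaining servers stay on the old version
    return True


def known_good(servers: List[Server], new_version: str) -> List[Server]:
    """Servers still on the previous version, i.e. safe traffic targets."""
    return [s for s in servers if s.version != new_version]


if __name__ == "__main__":
    fleet = [Server(f"web-{i}", "1.0") for i in range(4)]
    # Assumed failure for illustration: the update misbehaves on web-1.
    finished = rolling_deploy(fleet, "1.1", lambda s: s.name != "web-1")
    print("rollout finished:", finished)
    print("route traffic to:", [s.name for s in known_good(fleet, "1.1")])
```

A smaller `batch_size` limits how many users see a bad release, at the cost of a slower rollout.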

Rolling deployments work well for IT organizations with a hosting infrastructure large enough for some servers to be taken out of service -- without performance degradation -- in the event that a problem occurs with an application update. They are also a useful technique for deployments that involve small changes.
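One rough way to judge whether an environment is large enough is to compare peak load with the capacity that remains when servers are pulled from rotation. The sketch below assumes identical servers and a single known peak figure; the function name, parameters and the numbers in the usage example are illustrative only.

```python
import math


def max_servers_out(total_servers: int, per_server_capacity: float, peak_load: float) -> int:
    """Return how many servers can be out of service at peak without overload.

    Assumes every server handles the same load (per_server_capacity) and
    that peak_load is expressed in the same unit, e.g. requests per second.
    """
    if per_server_capacity <= 0:
        raise ValueError("per_server_capacity must be positive")
    servers_needed = math.ceil(peak_load / per_server_capacity)
    return max(total_servers - servers_needed, 0)


if __name__ == "__main__":
    # Illustrative numbers: 10 servers, 500 requests/s each, 3,600 requests/s peak.
    print(max_servers_out(10, 500, 3600))
```

If the result is zero, any server taken out of service during a failed update would push the remaining servers past their peak capacity.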

There are some use cases where rolling deployment isn't a great fit. For example, when app developers release major changes, some users -- those whose requests are directed to servers running version A of the application -- experience notably different functionality from those using version B. Tech support must know both versions and be able to identify which one a user is on. Running distinct versions side by side can also disrupt teamwork and contractor relationships when collaborators see different functionality.

A simple web app with a UI that evolves gradually is a good candidate for rolling deployment. Changes to an app like this are incremental enough that they can be pushed out safely through a rolling deployment pattern without major inconsistencies to the user experience.

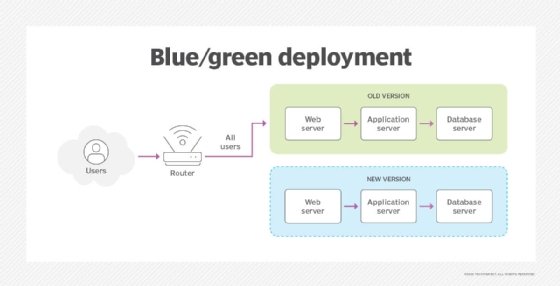

Blue/green deployment

In the blue/green deployment model, teams maintain two distinct application hosting infrastructures -- a blue one and a green one. At any given moment, one of these infrastructure configurations hosts the production version of the application, while the other is held in reserve.

IT admins deploy a new application version to the reserve infrastructure, also referred to as the staging environment. Once they test the deployment sufficiently, the admins can swap traffic from one infrastructure to the other. The former staging environment becomes the production environment, and the former production environment goes offline.
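The swap between the two environments can be modeled as a single pointer that flips once the reserve side passes its tests. The following is a simplified sketch under stated assumptions: `BlueGreenRouter`, its methods and the `passes_tests` callback are hypothetical, and in practice the switch usually happens at a load balancer or DNS layer rather than in application code.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict


@dataclass
class BlueGreenRouter:
    """Hypothetical model of two environments with one live at a time."""
    environments: Dict[str, str] = field(
        default_factory=lambda: {"blue": "1.0", "green": "1.0"}
    )
    live: str = "blue"

    @property
    def reserve(self) -> str:
        """Name of the environment that is not serving production traffic."""
        return "green" if self.live == "blue" else "blue"

    def deploy_to_reserve(self, version: str) -> None:
        """Install a new version on the reserve (staging) environment only."""
        self.environments[self.reserve] = version

    def switch(self, passes_tests: Callable[[str], bool]) -> bool:
        """Send all traffic to the reserve environment if its version tests clean."""
        candidate = self.reserve
        if not passes_tests(self.environments[candidate]):
            return False  # production is untouched
        self.live = candidate
        return True

    def live_version(self) -> str:
        """Version that every user currently sees."""
        return self.environments[self.live]


if __name__ == "__main__":
    router = BlueGreenRouter()
    router.deploy_to_reserve("2.0")
    print("before switch:", router.live, router.live_version())
    router.switch(lambda version: True)  # assumed: tests passed
    print("after switch:", router.live, router.live_version())
```

Because the previous environment still holds the old version after the switch, the same flip can serve as a rollback path for as long as that environment is kept intact.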

Blue/green deployment patterns offer IT operations teams greater opportunity to test a new release in a production-quality environment before they make it public. They also enable teams to switch all users over to a new release at once, unlike the canary and rolling deployment approaches. That makes blue/green a good fit for applications that receive major overhauls in each new release, rather than incremental changes. One example is a rapidly evolving SaaS application.

Because this strategy requires IT organizations to maintain two identical hosting environments, each large enough for production, infrastructure costs double. Either the application's footprint must be small enough, or the team's budget large enough, to accommodate the duplicate infrastructure.

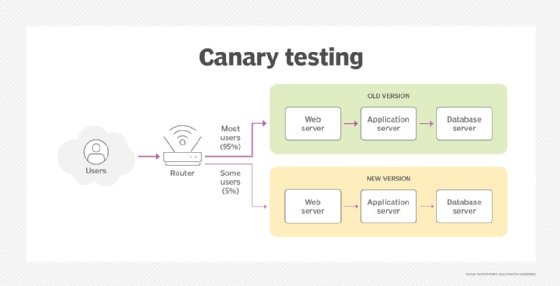

Canary deployment

The canary deployment pattern is similar to a rolling deployment in that the IT team makes the new release available to some users before others. However, the canary technique targets certain users to receive access to the new application version, rather than certain servers.

This app deployment strategy offers a key advantage over blue/green switchovers: access to early feedback and bug identification. The specified subset of users, i.e., the canaries, helps find weaknesses so that the IT team can improve the update before rolling it out to all users.

Canary deployments make sense for applications with an identifiable group of users who are more tolerant of bugs -- or better suited to help identify them -- than the general user base. For example, a common strategy is to deploy the new release internally -- to employees -- for user acceptance testing before it goes public. Along similar lines, IT organizations can create a canary group of opt-in users who want to receive updated versions of the application before others. These power users are willing to accept the risk of bugs to gain faster access to new features.
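Because canary routing keys on the user rather than the server, the core decision reduces to a group membership check. The sketch below assumes two canary groups, employees and opt-in users, as in the examples above; `CanaryPolicy` and its fields are hypothetical names, and a real system would typically read group membership from a feature-flag service or user database.

```python
from dataclasses import dataclass, field
from typing import Set


@dataclass
class CanaryPolicy:
    """Hypothetical policy that picks an app version per user."""
    stable_version: str
    canary_version: str
    employees: Set[str] = field(default_factory=set)
    opted_in: Set[str] = field(default_factory=set)

    def is_canary(self, user_id: str) -> bool:
        """True for internal users and for users who opted in to early access."""
        return user_id in self.employees or user_id in self.opted_in

    def version_for(self, user_id: str) -> str:
        """Canaries get the new release; everyone else stays on stable."""
        return self.canary_version if self.is_canary(user_id) else self.stable_version

    def promote(self) -> None:
        """Roll the canary release out to all users once it has proven itself."""
        self.stable_version = self.canary_version


if __name__ == "__main__":
    policy = CanaryPolicy(
        stable_version="1.0",
        canary_version="1.1",
        employees={"alice"},   # illustrative user IDs
        opted_in={"bob"},
    )
    for user in ("alice", "bob", "carol"):
        print(user, "->", policy.version_for(user))
    policy.promote()
    print("carol after promotion ->", policy.version_for("carol"))
```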

Canary deployments hold an advantage over blue/green techniques, and even rolling deployments, because a canary rollout doesn't require any spare hosting infrastructure. As such, it's a helpful technique for startups or other organizations with tight budgetary constraints.