Stop overpaying for storage: A FinOps guide for CIOs

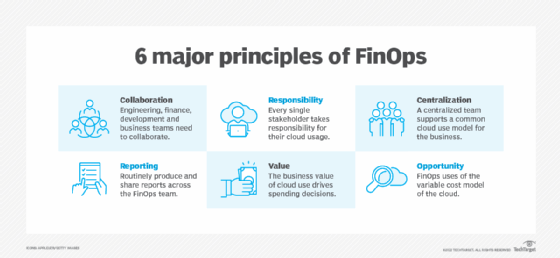

FinOps principles can help optimize storage, reducing costs without performance trade-offs through practical frameworks, lifecycle automation and cross-team accountability.

Data storage remains a persistent blind spot in enterprise economics, affecting cost-optimization and performance efforts. The result is wasted resources and frequent overspending.

Traditional optimization efforts miss storage because they focus on highly visible consumption, such as compute and application tuning. Unmanaged storage consumption tends to grow quietly in the background.

Applying FinOps principles to data storage results in cost reductions without performance trade-offs, improving business agility, freeing capital for other initiatives and maintaining better data governance.

This article demonstrates how to apply FinOps principles to storage optimization, including practical frameworks, a roadmap and strategic impacts. Key outcomes include cost visibility, lifecycle automation and cross-team accountability.

Why storage remains the most overlooked cost center

Storage tends to be a quiet consumer. Capacity grows slowly over time through backups, logs, analytical datasets, snapshots and compliance-based retention policies. These resources are rarely reviewed after configuration. Because the per-GB price appears low, the cumulative impact across environments goes unmanaged and is often underestimated, especially in hybrid on-premises and cloud environments.

Operationally, storage also falls into a governance gap. Compute is actively managed by application teams and optimized through scaling policies, while storage is often provisioned centrally and left static.

Hidden drivers often include:

- Orphaned volumes and snapshots.

- Replication and redundancy defaults.

- Cloud egress fees and data movement costs.

- Data sovereignty compliance settings.

Without lifecycle enforcement, data persists indefinitely, making storage an under-optimized component of enterprise IT spending.
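As an illustration of the first hidden driver, the sketch below flags orphaned volumes and snapshots in a storage inventory. It is a minimal example, not a vendor tool: the `Volume` and `Snapshot` record shapes, the IDs and the per-GB price are all hypothetical, and a real inventory would come from a cloud provider's API or a CMDB export. Flagged items are candidates for review, since some snapshots of deleted volumes are kept deliberately as backups.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Volume:
    volume_id: str
    size_gb: int
    attached_to: Optional[str]  # instance ID, or None if unattached


@dataclass
class Snapshot:
    snapshot_id: str
    size_gb: int
    source_volume_id: str


def find_orphans(
    volumes: List[Volume], snapshots: List[Snapshot]
) -> Tuple[List[Volume], List[Snapshot]]:
    """Return unattached volumes and snapshots whose source volume is gone."""
    known_ids = {v.volume_id for v in volumes}
    orphan_volumes = [v for v in volumes if v.attached_to is None]
    orphan_snapshots = [
        s for s in snapshots if s.source_volume_id not in known_ids
    ]
    return orphan_volumes, orphan_snapshots


def monthly_cost(items, price_per_gb_month: float) -> float:
    """Estimate the monthly cost of a list of volumes or snapshots."""
    return sum(i.size_gb for i in items) * price_per_gb_month


# Hypothetical inventory for illustration only.
volumes = [Volume("vol-a", 500, "i-1"), Volume("vol-b", 200, None)]
snapshots = [Snapshot("snap-1", 100, "vol-a"), Snapshot("snap-2", 300, "vol-gone")]

orphan_volumes, orphan_snapshots = find_orphans(volumes, snapshots)
print([v.volume_id for v in orphan_volumes])
print([s.snapshot_id for s in orphan_snapshots])
```

Running a check like this on a schedule, and reporting the estimated monthly cost of the flagged items, gives teams a concrete number to act on.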

Applying FinOps principles to storage optimization

Optimizing storage in enterprise environments means shifting it from a passive infrastructure resource to an actively governed financial asset that impacts business strategy. Taking a FinOps approach to managing storage accomplishes this transformation.

Applying FinOps to storage relies on three foundational practices:

- Right-sizing storage based on actual (not assumed or predicted) access patterns.

- Data lifecycle management policies, including retention, archiving and deletion.

- Tier-aware budgeting aligned to workload value.

Effective management establishes storage cost visibility as a leadership capability that enables informed decision-making and directly shapes operational practices. Management practices can include:

- Ensuring SLAs with cost discipline.

- Setting performance guardrails.

- Establishing workload classification by latency sensitivity.

These practices balance efficient performance and responsible storage spending.

An effective governance model requires cross-team collaboration, with various teams playing specific roles. Examples include:

- The finance team sets cost guardrails.

- The platform team enforces policies.

- The operations team owns consumption and reporting.

Practical frameworks for sustainable storage cost control

Sustainable storage control requires more than one-off cleanup efforts -- it depends on repeatable frameworks that align technical decisions with financial outcomes. For CIOs and IT leaders, the goal is to replace reactive cost management with structured practices that continuously evaluate data value, placement and movement.

The following frameworks translate FinOps principles into operational guardrails by:

- Identifying low-value data before it accumulates costs.

- Aligning storage tiers with workload requirements.

- Preventing avoidable transfer charges.

- Making spending transparent across teams.

Together, they create a system where storage automatically trends toward the right performance level at the best cost.

Cold data identification framework

Cost control begins with understanding which data no longer requires high-performance storage. Organizations should establish an identification framework for discovering such data using methods such as:

- Access frequency analysis.

- Business value tagging.

- Compliance-driven retention mapping.

- Candidate selection for archival tiers.

A structured approach to identifying infrequently accessed or low-business-value data enables organizations to reduce costs without impacting operational performance.
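The identification methods above can be combined into a single classification rule. The following sketch is one possible way to do so, under stated assumptions: the `DataSet` fields, the `business_value` tag values and the 90-day idle threshold are hypothetical choices for illustration, not an industry standard.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class DataSet:
    name: str
    last_accessed: date
    business_value: str           # hypothetical tag: "high", "medium" or "low"
    retain_until: Optional[date]  # end of compliance retention, or None


def classify(ds: DataSet, today: date, cold_after_days: int = 90) -> str:
    """Return "keep", "archive" or "review_for_deletion" for one dataset."""
    idle_days = (today - ds.last_accessed).days
    # Recently used or high-value data stays where it is.
    if idle_days < cold_after_days or ds.business_value == "high":
        return "keep"
    # Data under a retention obligation may change tiers but is never
    # proposed for deletion.
    if ds.retain_until is not None and ds.retain_until > today:
        return "archive"
    # Cold, low-value data with no retention hold goes to human review.
    if ds.business_value == "low":
        return "review_for_deletion"
    return "archive"


today = date(2025, 1, 1)
old_logs = DataSet("old-logs", date(2024, 1, 1), "low", None)
print(classify(old_logs, today))
```

Note that the rule only proposes deletion for review; it does not delete anything, which keeps compliance and business owners in the loop.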

Storage tiering strategy framework

Once data value and access patterns are understood, organizations can align storage performance levels with actual workload needs through:

- Performance vs. cost matrix.

- Automated storage tiering across environments.

- Policy-driven placement and migration.

- Guardrails for performance-sensitive workloads.

A formal tiering strategy ensures data resides on the most cost-effective medium while maintaining required service levels.
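A performance vs. cost matrix and a placement policy can be expressed in a few lines of code. The sketch below picks the cheapest tier that still meets a workload's latency requirement and pins latency-sensitive workloads to the fastest tier as a guardrail. The tier names, latencies and prices are illustrative assumptions, not real vendor figures.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tier:
    name: str
    typical_latency_ms: float
    price_per_gb_month: float


# Hypothetical performance vs. cost matrix, fastest tier first.
TIERS = [
    Tier("hot", 1, 0.10),
    Tier("warm", 20, 0.03),
    Tier("archive", 3_600_000, 0.004),  # retrieval may take about an hour
]


def place(required_latency_ms: float, latency_sensitive: bool = False) -> Tier:
    """Choose the cheapest tier that satisfies the latency requirement."""
    if latency_sensitive:
        # Guardrail: performance-sensitive workloads never leave the fastest tier.
        return min(TIERS, key=lambda t: t.typical_latency_ms)
    candidates = [t for t in TIERS if t.typical_latency_ms <= required_latency_ms]
    if not candidates:
        raise ValueError("no tier meets the required latency")
    return min(candidates, key=lambda t: t.price_per_gb_month)


print(place(50).name)
print(place(10_000_000, latency_sensitive=True).name)
```

Keeping the matrix as data rather than hard-coded logic means finance and platform teams can adjust prices or add tiers without changing the placement rule.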

Egress risk reduction model

Unplanned data movement is a frequent source of unexpected storage-related costs. Ways to help prevent these costs include:

- Data locality planning.

- Cross-region transfer controls.

- Workload placement optimization.

- Forecasting and budgeting for cloud egress fees.

A proactive model for managing data locality and transfer patterns helps organizations anticipate, control and reduce avoidable egress charges.
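Forecasting egress fees is largely arithmetic: transfer volume per route multiplied by the rate for that route, projected forward with an assumed growth rate. The sketch below shows that calculation. The route names, per-GB rates and 10% monthly growth are hypothetical placeholders; actual rates depend on the provider and contract.

```python
from typing import Dict, List


def monthly_egress_cost(
    transfers_gb: Dict[str, float], rate_per_gb: Dict[str, float]
) -> float:
    """Sum transfer volume times the per-GB rate for each route."""
    return sum(gb * rate_per_gb[route] for route, gb in transfers_gb.items())


def forecast(
    transfers_gb: Dict[str, float],
    rate_per_gb: Dict[str, float],
    monthly_growth: float,
    months: int,
) -> List[float]:
    """Project egress cost, assuming volume compounds at monthly_growth."""
    costs = []
    for m in range(months):
        factor = (1 + monthly_growth) ** m  # month 0 is the current month
        scaled = {route: gb * factor for route, gb in transfers_gb.items()}
        costs.append(monthly_egress_cost(scaled, rate_per_gb))
    return costs


# Hypothetical volumes (GB per month) and rates (USD per GB).
transfers = {"cross_region": 1000, "internet": 500}
rates = {"cross_region": 0.02, "internet": 0.09}

for month, cost in enumerate(forecast(transfers, rates, 0.10, 3)):
    print(month, round(cost, 2))
```

Splitting the calculation by route also shows which transfer path dominates the bill, which is where data locality planning and workload placement have the most effect.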

Financial transparency model

Cost optimization is unsustainable without shared visibility into spending drivers and organizational discipline. Ways to increase shared visibility include:

- Audit the storage footprint across on-premises and cloud environments.

- Implement and share storage cost dashboards.

- Classify data by access frequency and business value.

- Identify top cost drivers and quick wins.

Establishing standardized reporting, accountability and metrics enables finance and operations teams to manage storage as a measurable business resource.
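Identifying top cost drivers can start with a simple aggregation over tagged storage records, which is the kind of calculation a cost dashboard performs behind the scenes. The following sketch assumes a hypothetical record format with `owner`, `size_gb` and `price_per_gb_month` fields; real data would come from billing exports or tagging reports.

```python
from collections import defaultdict
from typing import Dict, List, Tuple


def cost_by_owner(records: List[Dict]) -> List[Tuple[str, float]]:
    """Total monthly storage cost per owning team, highest spender first."""
    totals: Dict[str, float] = defaultdict(float)
    for r in records:
        totals[r["owner"]] += r["size_gb"] * r["price_per_gb_month"]
    return sorted(totals.items(), key=lambda kv: kv[1], reverse=True)


# Hypothetical records for illustration only.
records = [
    {"owner": "analytics", "size_gb": 1000, "price_per_gb_month": 0.10},
    {"owner": "analytics", "size_gb": 5000, "price_per_gb_month": 0.03},
    {"owner": "web", "size_gb": 200, "price_per_gb_month": 0.10},
    {"owner": "backup", "size_gb": 20000, "price_per_gb_month": 0.004},
]

for owner, cost in cost_by_owner(records):
    print(owner, round(cost, 2))
```

Ranking by owner rather than by resource ties each line of spending to a team that can act on it, which supports the cross-team accountability described earlier.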

The 30-60-90-day storage FinOps roadmap

The pace of a FinOps roadmap varies by organization size, cloud complexity and total storage utilization. The roadmap below depicts an update schedule over a three-month period. Longer, more detailed roadmaps are available for more extensive deployments.

Strategic impact for the enterprise

Treating storage optimization as a strategic tool rather than a technical cleanup task enables cost efficiency. Applying FinOps principles to storage delivers predictable cost reductions, stabilizes hybrid cloud spending and improves budget forecasting by identifying hidden growth drivers.

Equally important, it aligns technology investment with business value, ensuring high-performance storage is reserved for revenue-critical workloads. The result is stronger financial discipline without slowing innovation.

CIOs and IT leaders who treat storage as a governed financial asset -- not just infrastructure -- position their organizations to capture immediate savings and long-term efficiency gains. The next step is to operationalize these principles through a structured roadmap that embeds cost awareness into everyday technology decisions.