workload

What is a workload?

In computing, a workload is typically any program or application that runs on a computer. A workload can be a simple alarm clock or contact app running on a smartphone. Or it can be a complex enterprise application hosted on one or more servers, with thousands of clients, or user systems, connected to and interacting with the application servers across a vast network. The terms workload, application, software and program are often used interchangeably.

Workload can also refer to the amount of work -- or load -- that software imposes on the underlying computing resources. Broadly stated, an application's workload is related to the amount of time and computing resources required to perform a specific task or produce an output from inputs provided.

A light workload accomplishes its intended tasks or performance goals using relatively little computing power and limited resources, such as processor clock cycles, storage input/output (I/O) and so on. A heavy workload demands significant amounts of computing resources.

A workload's tasks vary widely depending on the complexity and intended purpose of the application. For example, a web server application might gauge load by the number of webpages the server delivers per second, while other applications might gauge load by the number of transactions accomplished per second with a specific number of concurrent network users. Standardized metrics used to measure and report on an application's performance or load are collectively referred to as benchmarks.
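As a rough illustration of the load metrics described above, the following minimal sketch computes throughput for one measurement window. The `LoadSample` type, its field names and the numbers are assumptions made for this example; they don't come from any particular benchmark suite.

```python
from dataclasses import dataclass


@dataclass
class LoadSample:
    """One measurement window for a hypothetical application."""
    completed_requests: int  # webpages delivered or transactions completed
    window_seconds: float    # length of the measurement window
    concurrent_users: int    # users connected during the window


def throughput_per_second(sample: LoadSample) -> float:
    """Return completed requests per second for one measurement window."""
    if sample.window_seconds <= 0:
        raise ValueError("window_seconds must be positive")
    return sample.completed_requests / sample.window_seconds


# Illustrative numbers only, not real benchmark results.
web = LoadSample(completed_requests=12_000, window_seconds=60.0, concurrent_users=250)
print(f"{throughput_per_second(web):.1f} requests/s "
      f"at {web.concurrent_users} concurrent users")
```

Reporting throughput together with the number of concurrent users matters because the same requests-per-second figure can represent very different loads depending on how many users generate it.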

Types of workloads

Workloads are created to perform many tasks in countless ways, so it's difficult to classify all of them with one set of uniform criteria. The following are some examples of how workloads are classified:

- Static vs. dynamic. Workloads might be classified as static or dynamic. A static workload is always on and running, such as an operating system, email system, enterprise resource planning, customer relationship management and other applications central to a business's operations. A dynamic workload is ephemeral; it loads and runs only when needed. Examples include temporary instances spun up to test software and applications that perform end-of-month billing.

- Transactional vs. batch. Classical mainframe-era workloads were often categorized as transactional or batch workloads. Transactional workloads exchange and process data on an ongoing basis. Examples include order entry systems and banking or accounting systems. They often exemplify static workloads. Batch workloads exchange and process data on demand or as needed, such as monthly billing systems. They typically represent dynamic workloads.

- Real-time software. This is a third traditional workload type. It emphasizes high-throughput and low-latency performance to operate in sensitive, real-world computing environments such as medical, military and industrial systems.

- Analytic. The dramatic diversification of software development has introduced countless other workload classifications. For example, analytical workloads analyze enormous amounts of data, sometimes from varied and disassociated sources, to find trends, make predictions and drive adjustments to business operations and relationships. This is the underlying notion behind big data and machine learning technologies.

- High-performance computing (HPC). These are frequently related to analytical workloads and perform significant computational work. This means HPC workloads typically demand large amounts of processing power and storage resources to accomplish demanding computational tasks within a limited timeframe, even in real time.

- Database. These workloads have evolved as a unique workload type, because almost every enterprise application relies on an underlying database as a dependency or service within the enterprise infrastructure. Database workloads are extensively tuned and optimized to maximize the search performance for other applications that depend on the database. If the database performs poorly, that causes a bottleneck and reduces the performance of applications using the database.

The emergence of cloud computing over the last decade has also driven the development of more workload types including software as a service, microservices-based applications and serverless computing.

Choosing where to run workloads: Cloud vs. on premises

Workload deployment -- determining where and how the workload runs -- is an essential part of workload management. Today, an enterprise can choose to deploy a workload on premises, in a cloud or across both.

Traditionally, workloads are deployed in the enterprise data center, which contains all the server, storage, network, services and other infrastructure required to operate the workload. The business owns the data center facility and computing resources and fully controls the provisioning, optimization and maintenance of those resources. The enterprise establishes policies and practices for the data center and workload deployment to meet business goals and regulatory obligations.

With the rise of the internet, cloud computing has become a viable alternative to on-premises workload deployments. Public cloud computing is essentially computing as an on-demand utility. An organization uses a provider's computing resources and services to deploy workloads to remote data center facilities in locations around the world, yet pays for only those resources and services it actually consumes over a given timeframe -- typically, per month. The cloud provider deploys complex software-defined technologies that let users provision and use its resources and services to architect suitable infrastructures for each workload on the cloud platform.

The challenge for any business is deciding just where to deploy a given workload. Most general-purpose workloads can operate successfully in the public cloud, and applications are increasingly designed and developed to run solely in a public cloud.

However, the most demanding workloads might struggle in the public cloud. Some workloads require high-performance network storage or depend on internet throughput. For example, database clusters that need high throughput and low latency might be unsuited to the cloud, although the cloud provider might offer high-performance database services as an alternative. Applications that rely on low latency or aren't designed for distributed computing infrastructures are usually kept on premises.

Technical issues aside, a business can decide to keep workloads on premises for business continuity or regulatory reasons. Cloud clients have little insight into the underlying hardware and other infrastructure that hosts the workloads and data. That can be a problem for businesses that must meet data security and other regulatory requirements, such as auditing and proof of data residency. By keeping those sensitive workloads in the local data center, a business can control its own infrastructure and implement the necessary auditing and controls.

Cloud providers are also independent businesses that serve their own business interests and might not be able to meet an enterprise's specific uptime and resilience expectations for a workload. Outages happen and can last for hours and even days, adversely affecting a client's business and customer base. Consequently, organizations often opt to keep critical workloads in the local data center where dedicated IT staff can maintain them.

Some organizations implement a hybrid cloud strategy that mixes on-premises, private cloud and public cloud services. This provides flexibility to run workloads and manage data where it makes the most sense, for reasons ranging from costs to security to governance and compliance. This presents tradeoffs: for example, an organization might keep sensitive data and workloads in its own data center to preserve direct control over them, but it also then takes on more security responsibilities for them.
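The placement considerations discussed in this section can be sketched as a simple rule check. This is only an illustration under stated assumptions: the `WorkloadProfile` fields and the `suggest_placement` function are hypothetical names invented for this example, and a real placement decision would weigh cost, risk and many other factors rather than a handful of yes/no flags.

```python
from dataclasses import dataclass


@dataclass
class WorkloadProfile:
    """Hypothetical traits used to reason about where a workload runs."""
    name: str
    latency_sensitive: bool = False           # relies on low latency
    distributed_ready: bool = True            # designed for distributed infrastructure
    needs_infrastructure_audit: bool = False  # must audit hardware or prove data residency
    business_critical: bool = False           # outages would seriously harm the business


def suggest_placement(w: WorkloadProfile) -> tuple[str, list[str]]:
    """Return a suggested placement plus the reasons behind it."""
    reasons = []
    if w.latency_sensitive:
        reasons.append("relies on low latency")
    if not w.distributed_ready:
        reasons.append("not designed for distributed infrastructure")
    if w.needs_infrastructure_audit:
        reasons.append("requires auditing of the underlying infrastructure")
    if w.business_critical:
        reasons.append("critical workload maintained by dedicated IT staff")
    # Any on-premises reason wins; otherwise default to the public cloud.
    placement = "on-premises" if reasons else "public cloud"
    return placement, reasons


for profile in (
    WorkloadProfile("marketing website"),
    WorkloadProfile("trading database", latency_sensitive=True, business_critical=True),
):
    placement, reasons = suggest_placement(profile)
    print(f"{profile.name}: {placement} ({'; '.join(reasons) or 'general purpose'})")
```

In a hybrid cloud strategy, a check like this would be applied per workload, so that general-purpose applications go to the public cloud while sensitive or demanding ones stay in the local data center.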

Benefits and drawbacks of running cloud workloads

Businesses deploy workloads to the public cloud for an array of potential benefits including the following:

- Cost management. Businesses pay for public cloud resources and services on demand or as consumed and are billed on a monthly basis. This cost model allows businesses to shed many capital expenditures involved in building and maintaining a local data center.

- Scalability. Public cloud providers support a massive number of resources. Users can easily scale up and scale down workloads as needed to handle almost any demand.

- Performance. Businesses can deploy workloads to one or more public cloud global regions to optimize workload performance and lower latency for important customer areas that might be too remote for an on-premises workload.

- Data residency. Businesses might be subject to varied data protection and data residency regulations in different countries around the world. Using a public cloud with a global data center footprint lets a business maintain a workload, its data and data processing operations within the geopolitical area subject to the same set of regulations.

There are also serious risks involved with public cloud computing that every cloud user should consider:

- Visibility. Users can see the resources and services that are used, but they generally lack visibility into, much less control of, the cloud provider's underlying multi-tenant infrastructure. This can make it difficult, and sometimes impossible, for a business to validate or audit regulatory demands for workloads in the public cloud.

- Outages. When outages occur in the public cloud, users are completely dependent on the cloud provider to troubleshoot and remediate problems within the provider's service-level agreement (SLA). Prolonged outages adversely impact businesses that use the cloud, as well as business customers that depend on workloads deployed in the cloud.

- Partnerships. Ultimately, a public cloud is a business partner, and partnerships change over time. New services appear, while other services are deprecated. The provider might merge or be acquired, causing disruption to the cloud and support. Cloud users always need a workload failover plan to handle cloud disruptions.

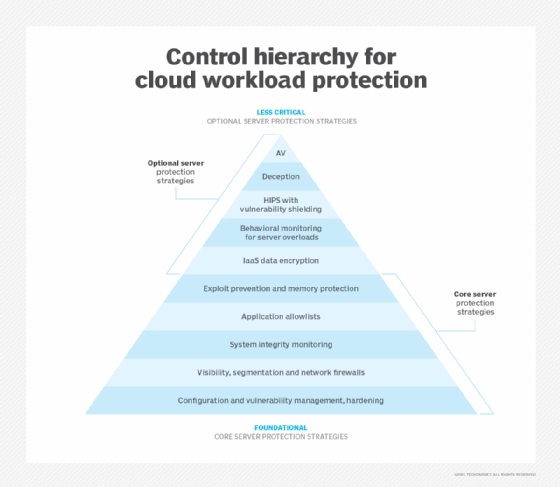

- Security. Security-related risks are heightened with public-facing cloud workloads. These risks include the following:

  - Cyberattacks. Malicious actors can exploit security vulnerabilities, such as a lack of strong passwords or adequate access controls, to steal sensitive cloud data. These actors can be inside or outside the company storing the data.

  - Misconfigurations. Poorly or misconfigured cloud resources can also cause data breaches. Examples include inadequate access controls, mismanaged authentication settings that allow unauthorized access and misconfigured firewall settings.

  - Shared infrastructure. If one organization's cloud data is breached or compromised, other organizations that share the same cloud infrastructure are vulnerable and that infrastructure is no longer safe to use.

Benefits and drawbacks of running on-premises workloads

Many businesses continue to build and maintain more traditional on-premises data centers, which can provide business benefits including the following:

- Visibility and compliance. A business has complete control of and visibility into the data center infrastructure, including all server, storage, network and other hardware, as well as operating systems and other elements of the software stack. On-premises data centers are the preferred deployment target for business-critical and demanding workloads that might be unsuitable for deployment in the public cloud, especially if they involve regulatory compliance or high-security issues.

- Infrastructure control. The business has access to all log files and can troubleshoot, correct and audit all activity within the on-premises data center. Businesses can take proactive steps to protect the on-premises environment, provide adequate staffing to address issues in a timely manner and maintain the established SLAs for the organization's users.

However, on-premises data centers are also subject to important drawbacks that can affect business operations, such as the following:

- Costs. Building a traditional data center can be a significant undertaking, especially to provide high levels of resilience to meet the needs of some critical workloads. There are high capital expenses for construction and outfitting, as well as ongoing operational expenses, such as maintenance and utilities that must continue regardless of workload use. These might not be cost-effective for all organizations.

- Protection. The business is completely responsible for all data and system uptime and protection, including backups, snapshots and the implementation of high-availability infrastructures. Workload protection is vital for business continuity and often tied to regulatory demands. Securing data and keeping critical workloads running in-house requires clear policies, suitable tools and staff expertise.
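The cost tradeoff between pay-as-consumed cloud billing and the fixed expenses of an on-premises data center can be illustrated with simple arithmetic. The sketch below is a deliberate simplification: the function names, the straight-line amortization of capital costs and every figure are assumptions chosen for illustration, not real pricing.

```python
def cloud_monthly_cost(hours_used: float, hourly_rate: float) -> float:
    """Pay-as-consumed: the bill scales with actual usage."""
    return hours_used * hourly_rate


def on_prem_monthly_cost(capital_cost: float, amortization_months: int,
                         monthly_operating_cost: float) -> float:
    """Fixed costs: capital is spread evenly over its lifetime and
    operating expenses continue regardless of workload use."""
    if amortization_months <= 0:
        raise ValueError("amortization_months must be positive")
    return capital_cost / amortization_months + monthly_operating_cost


# Hypothetical figures for a single server-sized workload.
HOURLY_RATE = 0.50
on_prem = on_prem_monthly_cost(capital_cost=9_000, amortization_months=36,
                               monthly_operating_cost=50)

for hours in (200, 730):  # occasional use vs. roughly always on
    cloud = cloud_monthly_cost(hours, HOURLY_RATE)
    cheaper = "cloud" if cloud < on_prem else "on premises"
    print(f"{hours} h/month: cloud={cloud:.2f}, on-prem={on_prem:.2f} -> {cheaper}")

# Usage level at which both options cost the same under these assumptions.
print(f"break-even: {on_prem / HOURLY_RATE:.0f} hours/month")
```

Under these made-up numbers, a workload that runs only occasionally is cheaper in the cloud, while one that runs around the clock crosses the break-even point and costs less on premises. Real comparisons would also account for staffing, facilities, data transfer charges and provider discounts.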

Workload management tools

Software tools are vital elements of workload management. Tools can report on the availability, health and performance of important workloads within local, cloud and multi-cloud environments.

Tools typically track the resources and services available within the infrastructure and report on the behavior of the applications being monitored. Administrators use tools to quickly determine whether an application is online, which resources it consumes and other metrics related to its activity, such as transactions and concurrent users. Some tools can support both on-premises workloads and workloads in major public clouds within a single pane of glass.
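To make the kind of reporting described above more concrete, the following minimal sketch summarizes a handful of monitoring samples into availability and activity figures. It isn't modeled on any specific product: the `HealthSample` type, its fields and the sample values are assumptions for illustration only.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class HealthSample:
    """One hypothetical polling result for a monitored application."""
    online: bool
    cpu_percent: float
    transactions: int
    concurrent_users: int


def summarize(samples: list[HealthSample]) -> dict[str, float]:
    """Aggregate polling samples into a small health report."""
    if not samples:
        raise ValueError("at least one sample is required")
    online = [s for s in samples if s.online]
    return {
        # Share of polls in which the application responded.
        "availability_percent": 100.0 * len(online) / len(samples),
        # Average CPU use, counting only polls where the app was online.
        "avg_cpu_percent": mean(s.cpu_percent for s in online) if online else 0.0,
        "total_transactions": float(sum(s.transactions for s in samples)),
        "peak_concurrent_users": float(max(s.concurrent_users for s in samples)),
    }


# Illustrative samples: the application was unreachable during one poll.
samples = [
    HealthSample(True, 40.0, 120, 30),
    HealthSample(True, 60.0, 180, 45),
    HealthSample(False, 0.0, 0, 0),
    HealthSample(True, 50.0, 150, 38),
]
for metric, value in summarize(samples).items():
    print(f"{metric}: {value:.1f}")
```

Commercial tools collect far more signals than this and do so continuously, but the underlying idea is the same: turn raw samples into a few figures an administrator can read at a glance.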

There are many workload management tools available. Often, these fall under the categories of application performance monitoring or application performance management tools. Some examples include the following:

- AppDynamics.

- AppEnsure.

- Datadog Application Performance Monitoring.

- DX Application Performance Management.

- Dynatrace Application Performance Monitoring.

- ManageEngine Applications Manager.

- New Relic APM 360.

- SolarWinds Server and Application Monitor.

- Splunk IT Service Intelligence.

- WhatsUp Gold Application Performance Monitoring.

Cloud providers generally offer dedicated tools designed to report on the resources and services a business consumes, as well as the health and performance of applications running in the cloud environment. Examples include the AWS Management Console and Microsoft Azure portal.
