
Can you use Kubernetes without Docker?

Although Docker and Kubernetes are often used together, the two serve different roles in IT environments -- and Docker containers aren't the only option for Kubernetes deployments.

The emergence of containers spawned exciting possibilities for software development and workload operations across the modern enterprise. But the dramatic growth in containers' popularity poses problems for container management that engines such as Docker cannot handle.

Platforms such as Kubernetes address these complex container management challenges through automation and orchestration. Kubernetes is a free, effective platform that accommodates numerous container runtimes, including Docker Engine.

Because both Docker and Kubernetes appeared early in the container age, the two have been tightly intertwined for years, to the point where they're sometimes referenced interchangeably. But although Docker and Kubernetes are complementary, they are different types of tools that serve distinct purposes in IT environments.

Understanding containers and container engines

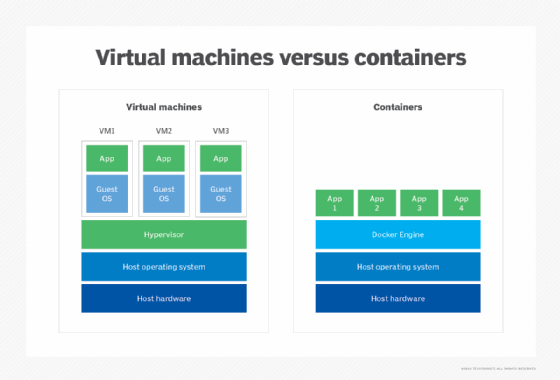

A container is a lightweight form of virtualization that is often compared to a VM. Like a VM, a container packages software and abstracts it from the underlying computing environment of servers, storage and networks. This abstraction makes it easy for containers and VMs to move between computing environments.

Unlike VMs, which include an OS, containers include only the code and dependencies necessary to run the container's workload, such as runtimes, system tools, system libraries and corresponding settings. The result is an agile, resource-efficient package that can run with few -- if any -- requirements, regardless of the computing environment.

The two components not packaged within a container are the OS and container engine. The OS supports the code running within the container, and the container engine handles the mechanics of loading and running the container itself.

A container is created and stored as a container image. Invoking a container loads the image file into the container engine, effectively changing the image into a running container. This packaging and abstraction ensures a container runs the same way on almost any infrastructure.

A container engine is the software layer needed to load, run and manage containers. Container engines are often described as the hypervisor or OS for containers because they occupy roughly the same layer in the stack that a hypervisor occupies for VMs.

Docker's role in the container landscape

Docker is one of several popular container engines. Once Docker or another container engine is available on a computer, the system can load and run containers atop the container engine layer.

Docker offers the following key functionalities:

- Maintaining isolation and abstraction between containers and the underlying hardware.

- Loading and executing containers.

- Handling container security.

- Supporting container management tasks, such as basic orchestration.

The heart of any container engine, including Docker, is the container runtime. A container runtime does the heavy lifting of loading and running containers, including setting up the namespaces and cgroups, the Linux kernel constructs that isolate each container and limit the resources it can use.
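
To make namespaces and cgroups less abstract, the following minimal sketch lists the ones the current process belongs to. It assumes a Linux host that exposes `/proc`; the function names are illustrative and are not part of any container runtime's API. On other systems, both functions simply return empty results.

```python
import os
from pathlib import Path
from typing import Dict, List


def current_namespaces(proc_dir: str = "/proc/self/ns") -> Dict[str, str]:
    """Return {namespace_type: identifier} for the current process.

    On Linux each entry is a symlink whose target looks like 'pid:[4026531836]'.
    Returns an empty dict when the directory is missing or unreadable.
    """
    namespaces: Dict[str, str] = {}
    try:
        entries = sorted(Path(proc_dir).iterdir())
    except OSError:
        return namespaces
    for entry in entries:
        try:
            namespaces[entry.name] = os.readlink(entry)
        except OSError:
            # Some entries may be unreadable without extra privileges.
            continue
    return namespaces


def current_cgroups(cgroup_file: str = "/proc/self/cgroup") -> List[str]:
    """Return the cgroup membership lines for the current process."""
    try:
        return Path(cgroup_file).read_text().splitlines()
    except OSError:
        return []


if __name__ == "__main__":
    for name, ident in current_namespaces().items():
        print(f"{name:12s} {ident}")
    for line in current_cgroups():
        print("cgroup:", line)
```

Run inside a container and again on the host, the namespace identifiers would typically differ, which is the isolation the runtime sets up.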

There are numerous container runtimes available, including containerd, CRI-O, runC and Mirantis Container Runtime. Some runtimes incorporate higher-level features such as container unpacking, management and image sharing. Some also provide an API to let developers create software that interacts with the runtime directly.

Orchestrating and managing containers with Kubernetes

Containers have become enormously popular because of their ease of use and relatively small computing footprint. Enterprise servers can host dozens or even thousands of containers that compose applications and services for the business.

But the high count and short lifecycle of many containers pose a serious challenge for IT administrators who must manually deploy and manage large, dynamic container fleets. Orchestrating container deployment and performing management in real time requires highly automated tools.

This is the role of the Kubernetes platform, sometimes abbreviated as K8s. Originally developed by Google, Kubernetes is an open source automation and orchestration tool that handles deployment, scaling and management of containerized applications. With Kubernetes, IT admins can organize, schedule and automate most tasks needed for container-based architectures.

Kubernetes is certainly not the only container automation and orchestration platform available. For example, Docker has its own tool called Docker Swarm. Cloud providers also offer managed Kubernetes services, such as Azure Kubernetes Service and Amazon Elastic Kubernetes Service. Other third-party alternatives include SUSE Rancher and HashiCorp Nomad.

Kubernetes' extensive features, lack of a price tag, broad container runtime support and extensibility cemented its place as a leading automation and orchestration platform. Major Kubernetes features include the following:

- Container deployment. Standing up and configuring containers.

- Automatic bin packing. Optimizing container placement based on resource requirements.

- Container updates. Replacing an existing container with a newer version or rolling back to a previous one.

- Service discovery. Locating containers in an organization's IT infrastructure.

- Storage provisioning. Connecting containers with storage resources.

- Load balancing. Ensuring network traffic is divided among identical containers.

- Health monitoring. Confirming containers are responding and working properly.
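
As an illustration of the bin packing idea in the list above, here is a small first-fit-decreasing sketch that places containers on nodes according to their CPU and memory requests. It is a teaching example under simplified assumptions, not the algorithm the Kubernetes scheduler actually runs; the `Node` class, the node names and the resource figures are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple


@dataclass
class Node:
    name: str
    cpu_millicores: int   # free CPU, in millicores
    memory_mib: int       # free memory, in MiB
    containers: List[str] = field(default_factory=list)

    def fits(self, cpu: int, memory: int) -> bool:
        return cpu <= self.cpu_millicores and memory <= self.memory_mib

    def place(self, container: str, cpu: int, memory: int) -> None:
        # Reserve the requested resources on this node.
        self.cpu_millicores -= cpu
        self.memory_mib -= memory
        self.containers.append(container)


def first_fit_decreasing(
    requests: Dict[str, Tuple[int, int]], nodes: List[Node]
) -> Dict[str, Optional[str]]:
    """Map each container to the first node with room, largest requests first."""
    placements: Dict[str, Optional[str]] = {}
    ordered = sorted(requests.items(), key=lambda item: item[1], reverse=True)
    for name, (cpu, memory) in ordered:
        placements[name] = None  # stays None if no node has room
        for node in nodes:
            if node.fits(cpu, memory):
                node.place(name, cpu, memory)
                placements[name] = node.name
                break
    return placements


if __name__ == "__main__":
    cluster = [Node("node-a", 1000, 2048), Node("node-b", 2000, 4096)]
    wanted = {
        "web": (500, 512),
        "db": (1500, 3072),
        "cache": (250, 256),
        "batch": (1000, 1024),
    }
    print(first_fit_decreasing(wanted, cluster))
```

In this example the `cache` container ends up unplaced because both nodes run out of CPU, which mirrors how a workload can remain pending until capacity frees up.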

Of all Kubernetes' features, container support and extensibility are the most powerful. These traits let Kubernetes interoperate with a variety of container runtimes, including containerd, CRI-O and any other implementation of the Kubernetes Container Runtime Interface (CRI).

In addition, Kubernetes provides an API that has spawned an entire ecosystem of open source tools for Kubernetes. These include the Istio service mesh and the Knative serverless computing platform.

What's the difference between Docker and Kubernetes?

Although Docker and Kubernetes are related, the two are distinct infrastructure tools that are deployed and managed separately within IT environments. Specifically, Docker is a container engine: the software layer or platform on which virtualized containers load and execute. In contrast, Kubernetes is an automation and orchestration platform: the software tool that organizes and manages the relationships among containers.

Does Kubernetes require Docker?

Early in Kubernetes' development, Docker was by far the dominant container engine, and support for its container runtime was hardcoded into Kubernetes in the form of a Kubernetes component called dockershim. A shim refers to any software modification that intercepts and alters the flow of data to provide additional features or functions.

The dockershim component enabled Kubernetes to interact with Docker as if Docker were using a CRI-compatible runtime. As Kubernetes evolved, it embraced additional container runtimes, and the CRI was invented so that any container runtime could interoperate with Kubernetes in a standardized way. Eventually, the dependencies posed by dockershim became a legacy problem that impeded further Kubernetes development.
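
The shim idea can be sketched as a plain adapter. In the example below, `RuntimeInterface` is a hypothetical, heavily simplified stand-in for the CRI (the real CRI is a gRPC API with many more calls), and `LegacyEngine` is an invented engine with its own incompatible API, not Docker's actual one. The point is only to show how a shim lets orchestrator code written against one interface drive an engine that does not implement it.

```python
from typing import Dict, List, Protocol


class RuntimeInterface(Protocol):
    """Hypothetical, simplified stand-in for a standard runtime interface."""

    def create_container(self, image: str) -> str: ...
    def start_container(self, container_id: str) -> None: ...
    def list_containers(self) -> List[str]: ...


class NativeRuntime:
    """A runtime that implements the common interface directly."""

    def __init__(self) -> None:
        self._state: Dict[str, str] = {}  # container id -> state

    def create_container(self, image: str) -> str:
        container_id = f"native-{len(self._state)}"
        self._state[container_id] = "created"
        return container_id

    def start_container(self, container_id: str) -> None:
        self._state[container_id] = "running"

    def list_containers(self) -> List[str]:
        return [cid for cid, state in self._state.items() if state == "running"]


class LegacyEngine:
    """An invented engine whose API creates and starts in one step."""

    def __init__(self) -> None:
        self.running: Dict[str, str] = {}  # name -> image

    def run(self, image: str, name: str) -> None:
        self.running[name] = image

    def ps(self) -> List[str]:
        return sorted(self.running)


class Shim:
    """Adapter that translates interface calls into LegacyEngine calls."""

    def __init__(self, engine: LegacyEngine) -> None:
        self._engine = engine
        self._pending: Dict[str, str] = {}  # created but not yet started
        self._next_id = 0

    def create_container(self, image: str) -> str:
        container_id = f"shim-{self._next_id}"
        self._next_id += 1
        self._pending[container_id] = image
        return container_id

    def start_container(self, container_id: str) -> None:
        image = self._pending.pop(container_id)
        self._engine.run(image, name=container_id)

    def list_containers(self) -> List[str]:
        return self._engine.ps()


def launch(runtime: RuntimeInterface, image: str) -> str:
    """Orchestrator-side code, written once against the interface."""
    container_id = runtime.create_container(image)
    runtime.start_container(container_id)
    return container_id
```

The cost of this design is also visible here: the shim carries extra state and must be maintained for as long as the engine's API differs from the interface, which is the kind of dependency the paragraph above describes.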

Dockershim was deprecated in Kubernetes 1.20 and then removed entirely with the release of Kubernetes 1.24 in May 2022. By eliminating dockershim, the Kubernetes project, now hosted by the Cloud Native Computing Foundation, intended to streamline and simplify its code by dropping legacy support in favor of a standardized runtime interface.

Will Kubernetes stop supporting Docker?

Although the Kubernetes project has removed dockershim, Docker containers still work with Kubernetes, and images produced with the docker build command still work with all CRI implementations. However, the removal of dockershim raises some potential issues for Docker users.

Docker tools and UIs that depended on dockershim might no longer work. Likewise, containers that Kubernetes schedules through a CRI runtime are no longer visible to Docker, so gathering information with the docker ps or docker inspect commands will not work.

Because containers are no longer listed, admins cannot get logs, stop containers or run something inside a container using docker exec. And although admins can still pull or build images using docker build, those images will not be visible to the container runtime and Kubernetes.
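
One common way to inspect containers on a node without Docker is crictl, the command-line client for CRI runtimes. The article does not prescribe a replacement tool, so treat the following as an assumption-laden sketch: it rewrites a handful of docker commands into crictl commands with the same subcommand name. The helper name `to_crictl` is hypothetical, and flags are passed through unchanged even though they are not guaranteed to mean the same thing in both tools.

```python
import shlex

# Docker subcommands that have a same-named crictl subcommand.
# Deliberately incomplete: "build", for example, has no crictl counterpart.
CRICTL_SUBCOMMANDS = {"ps", "inspect", "logs", "exec", "stop", "images", "pull"}


def to_crictl(docker_command: str) -> str:
    """Rewrite a docker CLI command as its crictl counterpart, if one exists."""
    parts = shlex.split(docker_command)
    if len(parts) < 2 or parts[0] != "docker":
        raise ValueError(f"not a docker command: {docker_command!r}")
    subcommand = parts[1]
    if subcommand not in CRICTL_SUBCOMMANDS:
        raise ValueError(f"no crictl counterpart known for 'docker {subcommand}'")
    # Arguments and flags are copied as-is; verify them against crictl's help.
    return shlex.join(["crictl", subcommand, *parts[2:]])


if __name__ == "__main__":
    for command in ("docker ps", "docker logs abc123", "docker exec -it abc123 sh"):
        print(f"{command:30s} -> {to_crictl(command)}")
```

For day-to-day work, most admins would reach for kubectl logs and kubectl exec first, since those operate at the pod level rather than on a single node's runtime.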

Evaluating whether to use Kubernetes without Docker

Given these problems, Docker users have two main options.

The first is to continue to use Docker as always. Existing containers will work: Mirantis and Docker have both committed to maintaining dockershim outside the Kubernetes project, as an external adapter named cri-dockerd, so users will have access to a suitable dockershim replacement for the foreseeable future.

The second is to migrate to a different container engine that uses a CRI-compliant container runtime, such as containerd or CRI-O. A wide assortment of container projects and tools use both runtimes, and the container engines and Kubernetes versions that cloud providers support are all CRI compliant. A business that runs a container infrastructure and provider-supported Kubernetes version in the cloud should not be directly affected by the dockershim deprecation.
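
Before choosing between these options, it helps to know which runtime each node currently reports. The sketch below groups nodes by runtime using the `containerRuntimeVersion` field that Kubernetes records in each node's status, with values such as `containerd://1.6.8` or `docker://20.10.7`. The function name and the sample node names are hypothetical; the input is assumed to be the JSON printed by `kubectl get nodes -o json`.

```python
import json
from typing import Dict, List


def nodes_by_runtime(node_list_json: str) -> Dict[str, List[str]]:
    """Group node names by the runtime named in containerRuntimeVersion."""
    data = json.loads(node_list_json)
    grouped: Dict[str, List[str]] = {}
    for node in data.get("items", []):
        name = node["metadata"]["name"]
        version = node["status"]["nodeInfo"]["containerRuntimeVersion"]
        runtime = version.split("://", 1)[0]  # "containerd://1.6.8" -> "containerd"
        grouped.setdefault(runtime, []).append(name)
    return grouped


if __name__ == "__main__":
    # Trimmed-down sample of what `kubectl get nodes -o json` returns.
    sample = json.dumps({
        "items": [
            {"metadata": {"name": "worker-1"},
             "status": {"nodeInfo": {"containerRuntimeVersion": "containerd://1.6.8"}}},
            {"metadata": {"name": "worker-2"},
             "status": {"nodeInfo": {"containerRuntimeVersion": "docker://20.10.7"}}},
            {"metadata": {"name": "worker-3"},
             "status": {"nodeInfo": {"containerRuntimeVersion": "containerd://1.6.8"}}},
        ]
    })
    print(nodes_by_runtime(sample))
```

Any node listed under `docker` relies on a dockershim-style adapter and is a candidate for migration or for an externally maintained shim.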

Alternatives to Docker and Kubernetes

Today, other container engines and automation and orchestration tools are gaining share in containerized environments. Offerings range from simple, low-level runtimes to full-featured, cloud-native platforms:

- Apache Mesos.

- Containerd.

- CoreOS rkt.

- HashiCorp Vagrant.

- Hyper-V Containers.

- LXC Linux Containers.

- OpenVZ.

- RunC.

- VirtualBox.

Similarly, IT leaders can select from a growing list of automation and orchestration platforms to organize and manage vast container fleets. Kubernetes alternatives, often based on Kubernetes' open source code, include cloud-based and third-party options:

- Amazon Elastic Kubernetes Service.

- AWS Fargate.

- Azure Container Instances.

- Azure Kubernetes Service.

- Docker Swarm.

- Google Cloud Run.

- Google Kubernetes Engine.

- HashiCorp Nomad.

- Linode Kubernetes Engine.

- Mirantis Kubernetes Engine.

- Rancher.

- Red Hat OpenShift Container Platform.

- Volcano.

Choosing a container engine or automation and orchestration platform depends on factors such as cost, complexity, performance, stability, feature set, security and interoperability. As with any critical infrastructure choice, test and evaluate combinations of tools to ensure they meet technical and business requirements.