
How does Kubernetes use etcd?

Etcd is a lightweight, highly available key-value store accessible to each node in a Kubernetes cluster. Find out how etcd works and learn how to use it inside Kubernetes.

One of Kubernetes' essential components is etcd, a key-value store whose main job is to store cluster data. If you work with Kubernetes, it's important to understand how etcd works and how to use it inside a Kubernetes cluster.

Etcd (pronounced "ett-see-dee") is an open source, distributed key-value store. A key-value store is a type of nonrelational database: rather than using the table-and-row structure of a conventional relational database, it holds a collection of keys, each of which maps to an assigned value.
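To make the key-value model concrete, here is a toy in-memory store in Python. It is a hypothetical sketch, not etcd's client API: keys are plain strings in a flat keyspace, slash-separated names are only a naming convention, and a prefix scan stands in for etcd's range reads. The revision counter loosely mirrors etcd's store-wide revision, which increases with every change.

```python
# A toy, in-memory model of a flat key-value keyspace, loosely inspired by etcd.
# This is NOT the etcd client API; the class and method names are hypothetical.
from typing import Dict, List, Optional, Tuple


class ToyKeyValueStore:
    """Maps string keys to string values, with no tables, rows or schema."""

    def __init__(self) -> None:
        self._data: Dict[str, str] = {}
        # Bumped on every successful write or delete, similar in spirit
        # to etcd's store-wide revision number.
        self.revision = 0

    def put(self, key: str, value: str) -> int:
        self._data[key] = value
        self.revision += 1
        return self.revision

    def get(self, key: str) -> Optional[str]:
        return self._data.get(key)

    def get_prefix(self, prefix: str) -> List[Tuple[str, str]]:
        # "Directories" are just a naming convention, so a prefix scan
        # returns every key that starts with the prefix, sorted by key.
        return sorted((k, v) for k, v in self._data.items() if k.startswith(prefix))

    def delete(self, key: str) -> bool:
        if key in self._data:
            del self._data[key]
            self.revision += 1
            return True
        return False


if __name__ == "__main__":
    store = ToyKeyValueStore()
    store.put("/config/app/log_level", "debug")
    store.put("/config/app/replicas", "3")
    store.put("/config/db/host", "10.0.0.5")
    print(store.get("/config/app/replicas"))  # 3
    print(store.get_prefix("/config/app/"))
```

The keys in the usage example are made up; the point is that related values are grouped only by how their keys are named, not by any table structure.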

Etcd is fast and reliable. Its members replicate data using the Raft consensus algorithm, so they agree on a single, consistent copy of the data. This makes etcd well suited to distributed environments where the key-value store runs on different servers than the applications and services whose configuration data it holds.

Etcd name origins

Etcd is so named because it serves a function similar to the /etc directory on Linux systems. On Linux, most system-wide configuration files reside in /etc and its subdirectories. Similarly, in Kubernetes, cluster configuration data, along with data that tracks cluster state, lives in the etcd key-value store.

Etcd is essentially a distributed version of /etc. Unlike the /etc directory, which stores data for a single server, etcd works in distributed environments -- hence the "d" in etcd.

How does etcd work in Kubernetes?

Because it was not originally designed for Kubernetes, etcd has a variety of use cases beyond those involving Kubernetes specifically. Indeed, etcd's history predates the public emergence of Kubernetes.

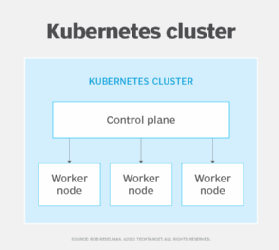

However, from the start, Kubernetes has used etcd to store cluster data. In Kubernetes, etcd provides a highly available key-value store of the information necessary for Kubernetes to manage nodes, pods and services.
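To show what "cluster data" looks like inside etcd: the Kubernetes API server, which is the only Kubernetes component that talks to etcd directly, stores each object under a key prefix that defaults to /registry and can be changed with the kube-apiserver --etcd-prefix flag. A pod named nginx in the default namespace, for example, lives under /registry/pods/default/nginx. The Python sketch below models that naming scheme. The helper functions are hypothetical, and some resource types use extra path segments, so treat it as illustrative rather than a complete map of the keyspace.

```python
# Sketch of how kube-apiserver names objects in etcd, assuming the default
# --etcd-prefix of "/registry". The helper names are hypothetical.
from typing import Optional, Tuple

DEFAULT_PREFIX = "/registry"


def registry_key(resource: str, name: str, namespace: Optional[str] = None,
                 prefix: str = DEFAULT_PREFIX) -> str:
    """Build the etcd key for an object.

    Namespaced objects (such as pods) include a namespace segment;
    cluster-scoped objects (such as namespaces) do not.
    """
    parts = [prefix.rstrip("/"), resource]
    if namespace is not None:
        parts.append(namespace)
    parts.append(name)
    return "/".join(parts)


def parse_registry_key(key: str,
                       prefix: str = DEFAULT_PREFIX) -> Tuple[str, Optional[str], str]:
    """Split a key back into (resource, namespace or None, name).

    Keys with extra path segments, which some resource types use,
    are rejected by this simplified parser.
    """
    base = prefix.rstrip("/") + "/"
    if not key.startswith(base):
        raise ValueError(f"key {key!r} is outside prefix {prefix!r}")
    segments = key[len(base):].split("/")
    if len(segments) == 2:
        return segments[0], None, segments[1]
    if len(segments) == 3:
        return segments[0], segments[1], segments[2]
    raise ValueError(f"unexpected key layout: {key!r}")


if __name__ == "__main__":
    print(registry_key("pods", "nginx", namespace="default"))
    # /registry/pods/default/nginx
    print(registry_key("namespaces", "default"))
    # /registry/namespaces/default
```

Because every object shares the same prefix, a single range read over /registry/pods/ would return every pod in the cluster, which is one reason etcd's prefix-based keyspace fits Kubernetes well.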

There are two ways to deploy etcd for Kubernetes: on the control plane nodes themselves or on a dedicated etcd cluster.

Etcd on control plane nodes

The simplest approach -- and the one that most Kubernetes environments use by default -- is to run an etcd instance on every control plane node inside a cluster.

Although this approach is easy to set up, it couples etcd to the control plane: if a control plane node fails, the etcd member running on it fails too, and in a cluster with only one control plane node, etcd stops working entirely. In the worst-case scenario, file system corruption or hardware failure on the control plane node could destroy etcd data permanently.

Dedicated etcd cluster

The second approach is to run etcd on a dedicated cluster of hosts, separate from the control plane nodes, and configure the Kubernetes API server to store cluster data there.

Launch a single-node etcd cluster using a command such as the following:

etcd --listen-client-urls=http://$PRIVATE_IP:2379 \
  --advertise-client-urls=http://$PRIVATE_IP:2379

Next, start the Kubernetes API server with the following flag and adjust the network port configuration as needed:

--etcd-servers=http://$PRIVATE_IP:2379

This is only useful for testing purposes because using a single-node etcd cluster carries the same reliability risks as running etcd directly on control plane nodes.
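The reliability difference comes down to quorum. Etcd replicates writes with the Raft consensus algorithm, and a write commits only once a majority of members (a quorum) has accepted it. The short Python sketch below computes the quorum size and failure tolerance for a given number of members; it is plain arithmetic, not an etcd API.

```python
# Quorum arithmetic for a majority-based (Raft) cluster such as etcd.


def quorum(members: int) -> int:
    """Smallest majority of members that must agree for a write to commit."""
    if members < 1:
        raise ValueError("a cluster needs at least one member")
    return members // 2 + 1


def failure_tolerance(members: int) -> int:
    """How many members can fail while a majority is still reachable."""
    return members - quorum(members)


if __name__ == "__main__":
    for n in range(1, 8):
        print(f"{n} member(s): quorum={quorum(n)}, "
              f"tolerates {failure_tolerance(n)} failure(s)")
```

A single member tolerates zero failures, three members tolerate one, and five tolerate two. Adding a fourth member to a three-member cluster still tolerates only one failure, which is why odd cluster sizes are the usual choice.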

For higher availability, run a multi-node cluster, typically with an odd number of members such as three or five, using a command such as the following:

etcd --listen-client-urls=http://$IP1:2379,http://$IP2:2379,http://$IP3:2379,http://$IP4:2379,http://$IP5:2379 \
  --advertise-client-urls=http://$IP1:2379,http://$IP2:2379,http://$IP3:2379,http://$IP4:2379,http://$IP5:2379

Then start the Kubernetes API server with a corresponding --etcd-servers flag:

--etcd-servers=http://$IP1:2379,http://$IP2:2379,http://$IP3:2379,http://$IP4:2379,http://$IP5:2379
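The multi-node command above is a simplification. In a typical deployment, each member runs on its own host, listens only on its own IP and finds its peers through the static bootstrap flags described in etcd's clustering guide: --name, --listen-peer-urls, --initial-advertise-peer-urls, --initial-cluster, --initial-cluster-token and --initial-cluster-state. The Python sketch below generates those per-member flags plus the matching --etcd-servers value. The member names, IP addresses and cluster token are hypothetical placeholders, and TLS flags are omitted for brevity; a production cluster should use https URLs with certificates.

```python
# Sketch: generate per-member etcd flags for a static bootstrap, plus the
# matching kube-apiserver --etcd-servers value. Member names, IPs and the
# cluster token are hypothetical; TLS flags are omitted for brevity.
from typing import Dict, List

CLIENT_PORT = 2379  # etcd's default client port
PEER_PORT = 2380    # etcd's default peer port


def initial_cluster(members: Dict[str, str]) -> str:
    """Comma-separated name=peer-URL pairs for every member."""
    return ",".join(f"{name}=http://{ip}:{PEER_PORT}" for name, ip in members.items())


def member_flags(name: str, members: Dict[str, str],
                 token: str = "etcd-cluster-1") -> List[str]:
    """Flags for the member called `name`, which listens only on its own IP."""
    ip = members[name]
    peer_url = f"http://{ip}:{PEER_PORT}"
    client_url = f"http://{ip}:{CLIENT_PORT}"
    return [
        f"--name={name}",
        f"--listen-peer-urls={peer_url}",
        f"--initial-advertise-peer-urls={peer_url}",
        f"--listen-client-urls={client_url}",
        f"--advertise-client-urls={client_url}",
        f"--initial-cluster={initial_cluster(members)}",
        f"--initial-cluster-token={token}",
        "--initial-cluster-state=new",
    ]


def etcd_servers_flag(members: Dict[str, str]) -> str:
    """kube-apiserver flag listing every member's client URL."""
    urls = ",".join(f"http://{ip}:{CLIENT_PORT}" for ip in members.values())
    return f"--etcd-servers={urls}"


if __name__ == "__main__":
    members = {"etcd-1": "10.0.0.1", "etcd-2": "10.0.0.2", "etcd-3": "10.0.0.3"}
    for member in members:
        # Run each printed command on the host that owns that member's IP.
        print("etcd " + " ".join(member_flags(member, members)))
    print(etcd_servers_flag(members))
```

Generating the flags from one member list keeps --initial-cluster identical on every host, which static bootstrapping requires.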

For more complex etcd cluster deployment scenarios, consider using a Kubernetes operator such as Etcd Cluster Operator. Operators simplify the deployment process and enable customized etcd cluster configurations that don't require adjusting command-line arguments.

Backing up etcd

Backing up etcd ensures that the data stored in etcd remains available if a cluster fails. Backups also provide a way to restore a cluster to a stable or secure state in the event of a configuration mistake or security breach.

To back up etcd, first connect to your etcd instance. If you run etcd on your Kubernetes control plane nodes, you can do this from one of them. If you run a dedicated etcd cluster, connect to one of the nodes that host it, or run the etcdctl utility from another machine and point it at the cluster with the --endpoints=http://hostname:port argument.

Once connected, back up etcd with the etcdctl snapshot save command. With etcdctl releases older than 3.4, set the environment variable ETCDCTL_API=3 first.

etcdctl snapshot save snapshot_location.db \
  --cert /etc/kubernetes/pki/etcd/server.crt \
  --cacert /etc/kubernetes/pki/etcd/ca.crt \
  --key /etc/kubernetes/pki/etcd/server.key
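Backups are most useful when they run regularly and don't overwrite one another. The Python sketch below builds the same etcdctl snapshot command with a timestamped file name and can optionally run it. It assumes etcdctl is on the PATH, the kubeadm certificate paths shown above, a local client endpoint of https://127.0.0.1:2379 and a hypothetical /var/backups/etcd directory; scheduling, for example with cron, is left out.

```python
# Sketch: build (and optionally run) a timestamped etcdctl snapshot command.
# The backup directory and endpoint are assumptions; the certificate paths
# are the kubeadm defaults used in the etcdctl command above.
import shlex
import subprocess
from datetime import datetime, timezone
from pathlib import Path
from typing import List, Optional

PKI = Path("/etc/kubernetes/pki/etcd")


def snapshot_command(backup_dir: Path, endpoint: str = "https://127.0.0.1:2379",
                     now: Optional[datetime] = None) -> List[str]:
    """Return the etcdctl argv for a snapshot named after the UTC time."""
    stamp = (now or datetime.now(timezone.utc)).strftime("%Y%m%dT%H%M%SZ")
    target = backup_dir / f"etcd-snapshot-{stamp}.db"
    return [
        "etcdctl",
        f"--endpoints={endpoint}",
        f"--cacert={PKI / 'ca.crt'}",
        f"--cert={PKI / 'server.crt'}",
        f"--key={PKI / 'server.key'}",
        "snapshot", "save", str(target),
    ]


def run_snapshot(backup_dir: Path) -> None:
    """Create the backup directory and run etcdctl; raises if etcdctl fails."""
    backup_dir.mkdir(parents=True, exist_ok=True)
    cmd = snapshot_command(backup_dir)
    print("running:", shlex.join(cmd))
    subprocess.run(cmd, check=True)


if __name__ == "__main__":
    # Print the command only; call run_snapshot() on a host that has etcdctl.
    print(shlex.join(snapshot_command(Path("/var/backups/etcd"))))
```

Passing the command as an argument list rather than a shell string avoids quoting problems, and check=True makes a failed backup raise an error instead of passing silently.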

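To restore etcd from a snapshot, use the etcdctl snapshot restore snapshot.db command, usually with --data-dir pointing to a new, empty directory that the restored etcd member then uses. In etcd 3.5 and later, this subcommand is deprecated in favor of the equivalent etcdutl snapshot restore.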