Improve application rollout planning with advanced options

Enterprise IT teams have several advanced options for deploying application updates. The right one depends on business needs, the available IT infrastructure and the team's confidence in the update.

Application rollout planning requires IT operations teams to strike a precarious balance between development's demands, user expectations and the realities of everyday business. As software releases arrive faster, the headaches get bigger.

Traditionally, the IT operations team deploys an update by taking the application's server or servers offline, rolling out the update, testing to verify that it functions as intended and then returning the infrastructure to production. Continuous software development, as seen in DevOps methodologies, demands tighter operational integration so that deployments are efficient, workloads stay highly available and users see minimal disruption.

There is no one right way to roll out software -- especially across an application server cluster -- but there are some guidelines for picking the best fit. Different strategies suit different workloads and types of updates. Each also has its own implications for the production IT infrastructure, which makes application rollout planning crucial.

Caging the app update

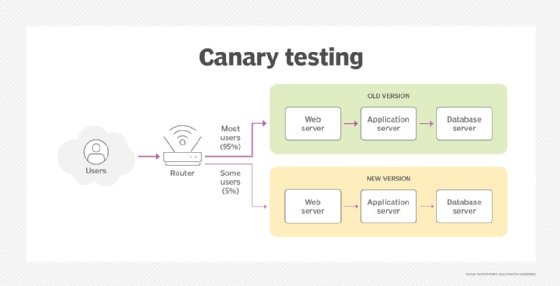

Canary deployment, sometimes called phased or incremental rollout, takes its name from the old mining practice of taking caged canaries into mines as an early warning system for toxic gases that could endanger the miners. Canary deployment rolls out the update to a small portion of the infrastructure first, so a small subset of users acts as the test group that catches errors and issues before they spread to the entire user base.

Canary deployments have minimal infrastructure requirements, which eliminates a great deal of pre-rollout capacity planning. Typically the canary group comprises a single server or cluster, or a limited set of servers. Load balancers are configured to stop sending traffic to those systems while they are updated, while the bulk of the application servers continue to serve users with the known-good software version. During the testing phase, load balancers then route a small portion of overall traffic to the updated servers or clusters. Canary users may be a random sample of users, internal employees only or some other user profile or demographic.
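To illustrate how a load balancer might split traffic, the following is a minimal Python sketch, not any particular product's configuration. It assumes hypothetical pools named `STABLE_POOL` and `CANARY_POOL` and places each user in a bucket by hashing the user ID, so the same user consistently lands on the same version. The optional `canary_users` set stands in for the "internal employees only" style of canary group.

```python
import hashlib

# Hypothetical server pools; the names are illustrative only.
STABLE_POOL = ["app-01", "app-02", "app-03"]  # known-good version
CANARY_POOL = ["app-04"]                      # updated version


def _bucket(user_id: str, salt: bytes) -> int:
    """Map a user ID to a stable integer using SHA-256 (not Python's per-process hash())."""
    digest = hashlib.sha256(salt + user_id.encode("utf-8")).digest()
    return int.from_bytes(digest[:4], "big")


def in_canary(user_id: str, canary_percent: float) -> bool:
    """Deterministically decide whether a user belongs to the canary sample."""
    bucket = _bucket(user_id, b"canary") % 10_000  # 0..9999
    return bucket < canary_percent * 100           # e.g. 5.0% -> buckets 0..499


def route(user_id: str, canary_percent: float = 5.0,
          canary_users: frozenset = frozenset()) -> str:
    """Pick a backend server; explicitly listed users always see the canary."""
    use_canary = user_id in canary_users or in_canary(user_id, canary_percent)
    pool = CANARY_POOL if use_canary else STABLE_POOL
    # Spread users across servers within the chosen pool.
    return pool[_bucket(user_id, b"server") % len(pool)]
```

Hashing rather than choosing randomly per request is a design choice: it keeps a user's experience consistent while the canary is in place, and raising `canary_percent` gradually widens the test group.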

Canary deployments are reasonably resilient. If there are problems with the new release, the deployment team can easily route users back to the servers running the existing software version while it patches and fixes the update as many times as necessary. Once developers and stakeholders are confident the new version works as intended, IT administrators can deploy it to additional servers until all of the application servers run the new version. The strategy requires no additional IT resources, although test systems may be added if desired.
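The expand-or-roll-back decision described above can be expressed as a simple gate. This is a hedged sketch: the stage sizes and the 1% `max_error_rate` threshold are hypothetical values chosen for illustration, and real teams would feed in whatever health signal their monitoring provides.

```python
from typing import Sequence


def next_canary_size(current: int, stages: Sequence[int], error_rate: float,
                     max_error_rate: float = 0.01) -> int:
    """Return how many servers should run the new version next.

    stages:         ascending server counts, e.g. [1, 2, 4, 8] for an
                    eight-server fleet (hypothetical schedule).
    error_rate:     observed error rate on the canary servers.
    max_error_rate: hypothetical health threshold.

    Returns 0 (all traffic back on the known-good version) if the canary
    looks unhealthy; otherwise advances to the next stage.
    """
    if error_rate > max_error_rate:
        return 0  # roll back: route everyone to the existing version
    for size in stages:
        if size > current:
            return size  # expand the canary to the next stage
    return current  # already fully rolled out
```

For example, with stages `[1, 2, 4, 8]`, a healthy canary on two servers advances to four, while an error rate above the threshold at any stage sends all traffic back to the existing version.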

One drawback of canary deployment to consider during application rollout planning is the time it takes to complete an update, because the new version is tested and phased into production gradually. Application owners must therefore manage more than one version simultaneously, which demands careful change and version management from IT operations staff. However, the incremental increase in usage provides ample opportunity to gather load metrics, so production IT capacity planners can see how load demands change with the updated code. And the canary process provides a relatively safe and rapid rollback if unintended consequences occur.

Plan a canary app rollout for relatively small changes where confidence in the release is high, such as bug fixes or regression fixes. For more significant software changes, like major upgrades, alternative implementations or changes to the software's underlying architecture, consider blue/green deployment, described below.

Roll on, application, roll on

Rolling deployment is an alternate form of phased software deployment where the new software version is systematically staggered across one or more existing servers, usually within a server cluster.

For example, consider an application server cluster with four nodes. Each of the four application server nodes receives traffic through a load balancer. A rolling deployment takes one node offline, and the load balancer directs traffic to the remaining servers that still run the current software version. The idle server is updated while the active servers continue to support user traffic. The software is tested and optimized on the idle server, then the process repeats systematically for all application servers within the cluster until they all run the same version.
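The four-node walkthrough above can be sketched as a loop. This is an illustrative model, not a real orchestration API: `versions` stands in for what each node runs, the `in_service` set stands in for the load balancer's active pool, and `health_check` is a hypothetical callback for whatever testing the team performs on the idle node.

```python
from typing import Callable, Dict, List, Set


def rolling_update(nodes: List[str], new_version: str,
                   versions: Dict[str, str], in_service: Set[str],
                   health_check: Callable[[str], bool]) -> bool:
    """Update nodes one at a time; halt at the first node that fails checks.

    versions:     node name -> software version it runs (mutated in place).
    in_service:   nodes the load balancer currently sends traffic to.
    health_check: hypothetical test run against the idle, updated node.
    """
    for node in nodes:
        in_service.discard(node)       # load balancer stops routing to this node
        previous = versions[node]
        versions[node] = new_version   # update the idle node
        if not health_check(node):
            versions[node] = previous  # restore, e.g. from a backup or VM image
            in_service.add(node)
            return False               # stop; remaining nodes keep the old version
        in_service.add(node)           # return the updated node to rotation
    return True
```

Because only one node is out of rotation at a time, three of the four nodes keep serving users throughout. If a check fails, the loop halts with the cluster running mixed versions, which is why the rollback plan discussed next matters.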

Rolling deployments require no additional hardware or infrastructure, and the application remains continuously available because the servers that have not yet been updated carry the user load, although the cluster runs with reduced capacity while each node is offline. If errors or other unforeseen consequences occur in the new software version, the affected server can be restored from backups or protected VM images until a new version is ready. IT teams should have a rollback plan with backup and restoration in place.

Rolling deployments are conceptually almost identical to canary deployments; they are simply used differently. In application rollout planning, a canary deployment usually means an initial test deployment or slow ramp-up, while the systematic process of updating the remaining servers is generally termed a rolling deployment. For example, canary testing might take place on one server over several days and slowly roll out to additional servers over weeks. By comparison, a rolling deployment is more deliberate and typically updates all production systems in a matter of hours or days. There is usually less concern with load testing and gathering performance metrics when creating the application deployment plan.

In addition, traffic is typically not deliberately restricted to select groups or demographics as it is with canary testing. Use rolling deployments when there is more confidence in the software update, and deploy it on a defined schedule.

A colorful approach for mature apps

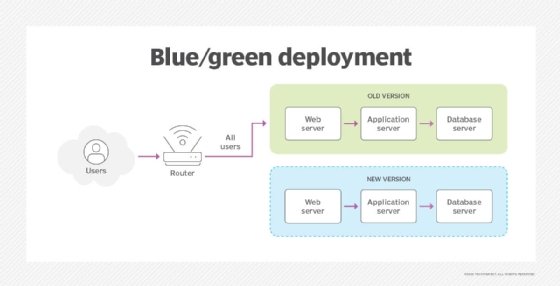

Blue/green deployment relies on a broader cutover approach. The IT team runs duplicate sets of IT infrastructure, whether hardware in the data center or cloud instances, that are as identical as possible. One set runs the current software version and handles the application traffic, while the companion set is idle. The two sets are commonly labeled blue and green, or sometimes A and B.

When a new software release is ready, the team deploys it onto the idle blue hardware set while the active green set runs the entire workload on the known-good software. When testing of the new deployment completes, IT reroutes traffic to the blue set, and the previously active green hardware is left idle. The roles reverse when the next software iteration is ready for release.

Blue/green deployment needs two identical sets of hardware, and that hardware carries added costs and overhead without adding capacity or improving utilization. It's not particularly efficient compared to canary or rolling deployments. The benefit of this additional overhead in a blue/green application deployment plan is immediate rollback capability, because the alternate hardware set is always running the last known-good software version. This is compelling when planning rollouts of mission-critical business workloads. Rollback is as simple as rerouting the application traffic from one hardware set to the other.
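The alternating roles and one-step rollback described above can be modeled in a few lines. This is a minimal sketch with a hypothetical `BlueGreen` class: `live` stands in for the load balancer's target, and `versions` records what each environment runs. Note that cutover and rollback are the same operation, pointing traffic at the other set.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class BlueGreen:
    """Hypothetical model of two identical environments behind one router."""
    versions: Dict[str, str] = field(
        default_factory=lambda: {"blue": "v1", "green": "v1"})
    live: str = "green"  # environment currently receiving all traffic

    @property
    def idle(self) -> str:
        return "blue" if self.live == "green" else "green"

    @property
    def serving_version(self) -> str:
        return self.versions[self.live]

    def deploy(self, version: str) -> None:
        # New releases only ever go to the idle environment.
        self.versions[self.idle] = version

    def cut_over(self) -> None:
        # Reroute all traffic at once; the old live set stays up, now idle.
        self.live = self.idle

    def rollback(self) -> None:
        # Same operation as cutover: send traffic back to the other set.
        self.live = self.idle
```

For example, after `deploy("v2")` and `cut_over()`, users are served `v2` from blue; a single `rollback()` returns them to `v1` on green, which was never touched.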

Because a cutover affects all application users at once, the business must have extremely high confidence in the software's integrity. Blue/green deployments are often reserved for large, mature applications that have already been thoroughly tested. They are sometimes also used to evaluate major software changes, such as significant architectural updates. Consider monitoring metrics or key performance indicators to objectively compare operational differences between the latest and previous versions.
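One way to make that comparison objective is to compute the same KPIs for both versions and apply agreed thresholds. The sketch below assumes latency samples in milliseconds and error rates are already collected; the 10% latency and 0.5-point error-rate limits are hypothetical values, not recommendations.

```python
from statistics import quantiles
from typing import Dict, Sequence


def p95(samples: Sequence[float]) -> float:
    """95th percentile; quantiles(n=20) returns 19 cut points, the last is p95."""
    return quantiles(samples, n=20)[-1]


def compare_kpis(old_ms: Sequence[float], new_ms: Sequence[float],
                 old_err_rate: float, new_err_rate: float,
                 max_latency_regression: float = 0.10,  # hypothetical: +10% p95
                 max_error_increase: float = 0.005      # hypothetical: +0.5 points
                 ) -> Dict[str, object]:
    """Compare p95 latency and error rate between previous and latest versions."""
    old_p95, new_p95 = p95(old_ms), p95(new_ms)
    latency_change = (new_p95 - old_p95) / old_p95  # relative change
    error_change = new_err_rate - old_err_rate      # absolute change
    return {
        "p95_old_ms": old_p95,
        "p95_new_ms": new_p95,
        "latency_change": latency_change,
        "error_rate_change": error_change,
        "acceptable": (latency_change <= max_latency_regression
                       and error_change <= max_error_increase),
    }
```

Using a percentile rather than an average is a deliberate choice here: tail latency tends to expose regressions that a mean can hide.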

Weigh the pros and cons during application rollout planning, and apply each strategy where it yields the best results for the business.