benchmark

What is a benchmark?

A benchmark is a standard or point of reference people can use to measure something else.

Benchmarks in surveying

In surveying, a benchmark -- or bench mark or survey benchmark -- is a post or other permanent mark established at a known elevation that is used as the basis for measuring the elevation of other topographical points.

Benchmarks in tech

In computer and Internet technology, benchmark has a number of meanings. These include the following:

- A set of conditions against which a product or system is measured. For example, tech reviewers can test and compare several new computers or devices using the same set of applications, user interactions and contextual situations. The benchmark is the total context against which testers are measuring and comparing all products.

- A program specially designed to provide measurements for a particular operating system or application.

- A known and familiar product against which users can compare other, newer products.

- A set of performance criteria that a product is expected to meet.
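
To make two of these meanings concrete (a program that provides measurements, and a performance criterion a product is expected to meet), here is a minimal sketch in Python. It relies on the standard library's `timeit` module. The function names `best_time_per_call`, `meets_criterion`, `sum_with_loop` and `sum_with_builtin`, along with the 1-millisecond limit, are illustrative assumptions and do not come from any particular benchmark suite.

```python
import timeit


def best_time_per_call(func, number=1000, repeats=5):
    """Return the fastest observed average time per call, in seconds."""
    # timeit.repeat calls `func` `number` times per trial, for `repeats`
    # trials, and returns the total elapsed seconds of each trial.
    totals = timeit.repeat(func, number=number, repeat=repeats)
    # The fastest trial is the one least disturbed by other activity
    # on the machine, so it is the most repeatable figure to report.
    return min(totals) / number


def meets_criterion(seconds_per_call, limit_seconds):
    """Check a measurement against a performance criterion (a time limit)."""
    return seconds_per_call <= limit_seconds


# Two hypothetical workloads that compute the same result in different ways.
def sum_with_loop():
    total = 0
    for i in range(1000):
        total += i
    return total


def sum_with_builtin():
    return sum(range(1000))


if __name__ == "__main__":
    limit = 0.001  # assumed criterion: at most 1 millisecond per call
    for workload in (sum_with_loop, sum_with_builtin):
        seconds = best_time_per_call(workload)
        verdict = "meets" if meets_criterion(seconds, limit) else "misses"
        print(f"{workload.__name__}: {seconds * 1e6:.2f} microseconds per call, "
              f"{verdict} the limit")
```

Because both workloads run under the same conditions, their timings are directly comparable, which is the point of a benchmark. The absolute numbers will differ from one machine to another.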

Laboratory benchmarks sometimes fail to reflect real-world product use. For this reason, benchmark results are not always an accurate measure of computer performance.

Still, benchmarks can be useful, and some companies offer benchmark programs for download or a benchmark testing service on their own sites.

Benchmarks in business

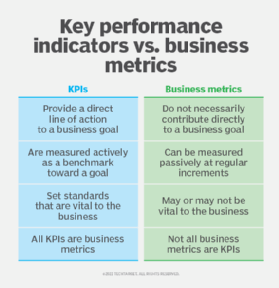

Like key performance indicators (KPIs), benchmarks are useful for measuring performance, but KPIs measure an organization against itself, while benchmarks look outward. Organizations can use a number of benchmark types to measure their own or their employees' performance. In these cases, business leaders can use key business or performance metrics to identify how well they are doing compared with peers, other organizations or competitors.

Climate benchmarks

Benchmarks related to organizational carbon emissions and other sustainability issues are a rapidly developing area.