What are hot and cold aisles in the data center?

Hot and cold aisles in the data center are part of an energy-efficient layout for server racks and other computing equipment. The goal of a hot/cold aisle configuration is to manage airflow in a way that conserves energy and lowers cooling costs.

Understanding hot and cold aisle containment in data centers

A key element in the design of hot and cold aisles is often a containment system that isolates the hot aisles and cold aisles from each other and prevents hot and cold air from mixing. In its simplest form, hot/cold aisle data center design involves lining up server racks in alternating rows, with cold air intakes facing one way and hot air exhausts facing the other.

The rows facing the rack fronts are called cold aisles. Typically, cold aisles face air conditioner output ducts, and cold air circulates through perforated floor tiles placed in a raised double floor between the racks. The rows that the heated exhaust pours into are called hot aisles. Typically, hot aisles face air conditioner return ducts. After the cold air flows through the equipment racks, it becomes hot and exits from the back of the racks. It is this heated exhaust that forms the hot aisles behind the racks.
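The alternating arrangement can be illustrated with a minimal sketch. The function name and the "up"/"down" orientation labels below are hypothetical, chosen only for illustration: each row is described by the direction its fronts face on a floor plan, and the aisle between two rows is labeled cold when both rows draw air from it and hot when both rows exhaust into it.

```python
def classify_aisles(front_directions):
    """Label the aisle between each pair of adjacent rack rows.

    front_directions holds one entry per row, top to bottom on a floor plan:
    "down" if the row's fronts (cold air intakes) face the next row,
    "up" if they face the previous row. These labels are hypothetical.
    """
    aisles = []
    for upper, lower in zip(front_directions, front_directions[1:]):
        upper_front_on_aisle = upper == "down"
        lower_front_on_aisle = lower == "up"
        if upper_front_on_aisle and lower_front_on_aisle:
            aisles.append("cold")   # front-to-front: both rows draw air here
        elif not upper_front_on_aisle and not lower_front_on_aisle:
            aisles.append("hot")    # back-to-back: both rows exhaust here
        else:
            aisles.append("mixed")  # one row exhausts into the other's intake
    return aisles


alternating = ["down", "up", "down", "up"]
same_direction = ["down", "down", "down", "down"]
print(classify_aisles(alternating))
print(classify_aisles(same_direction))
```

When every row faces the same direction, each aisle comes out as "mixed", which is the situation a hot/cold aisle layout is meant to avoid.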

Containment systems originally were physical barriers that separated the hot and cold aisles with vinyl plastic sheeting or plexiglass covers. Today, vendors offer plenum spaces and other commercial options that combine containment with variable fan drives to prevent cold air and hot air from mixing.

The importance of using hot/cold aisles

The principal reason for configuring data centers with hot and cold aisles is to manage airflow so that cooling consumes less energy. Data centers that have not been retrofitted with hot/cold aisles are likely to use more energy for cooling.

Given how energy costs have increased in recent years, data center managers should consider replacing their legacy rack configurations with hot/cold aisle arrangements. Those arrangements help to isolate cold air in one area, which prevents -- or at least minimizes -- the mixing of cold air with warmer air from other areas of the data center. That isolation improves cooling efficiency and reduces bypass airflow and hot spots on equipment. The result is a more energy-efficient data center with higher cooling capacity and controlled cooling costs.

Legacy data centers vs. hot/cold aisles

Equipment racks in data centers are used to secure servers, communications equipment, power supplies and air-handling equipment. Data centers usually have cooling units that should be strategically positioned for optimum airflow.

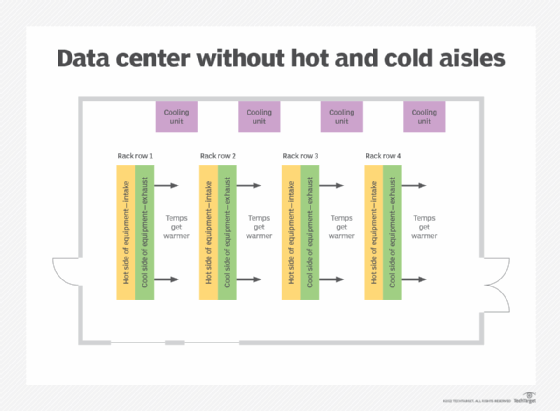

Legacy data center layout

A traditional data center configuration is shown in Figure 1. Cool air enters the fronts of the servers, picks up heat and is pushed out the back by the equipment fans as exhaust air. That exhaust then travels to the next aisle, where it is heated further by passing through the next rack, before it passes into the following aisle and is heated again. In such an arrangement, the ambient temperature rises slightly with each successive aisle, and the cooling system has to work harder to keep the computer room air at the correct temperature.

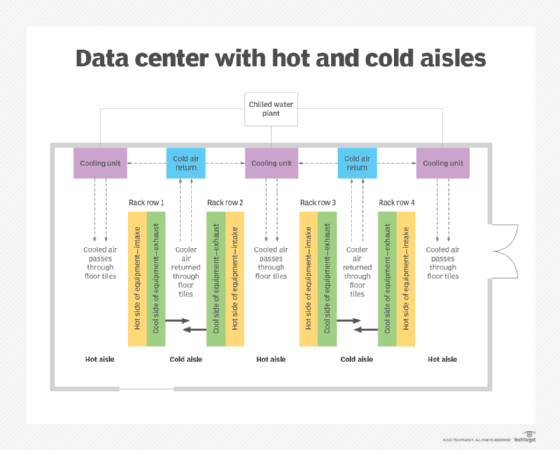

Hot/cold aisle layout

By contrast, when racks are grouped so that intake and exhaust sides alternate, the result is hot and cold aisles, as shown in Figure 2. This arrangement keeps exhaust heat out of the cold aisles, so the computer room air conditioning system works less. In the hot aisles, exhaust air is routed back to the air conditioner returns, so intake temperatures remain low. This arrangement provides more efficient air handling and cooling.
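The difference between the two layouts can be sketched numerically. The example below uses the standard sensible-heat relation for air (BTU/hr = 1.08 x CFM x temperature rise in degrees Fahrenheit, with 3.412 BTU/hr per watt). The rack power, airflow and supply temperature are hypothetical, and the legacy model is deliberately pessimistic: it assumes each row draws in the full exhaust of the previous row with no mixing with room air, so real-world increases would be smaller.

```python
BTU_PER_HOUR_PER_WATT = 3.412   # unit conversion
SENSIBLE_HEAT_FACTOR = 1.08     # BTU/hr per CFM per deg F, standard air


def exhaust_rise_f(rack_watts, airflow_cfm):
    """Temperature rise (deg F) of air passing through one rack."""
    return rack_watts * BTU_PER_HOUR_PER_WATT / (SENSIBLE_HEAT_FACTOR * airflow_cfm)


def legacy_intake_temps(supply_f, rows, rack_watts, airflow_cfm):
    """Worst case: every row inhales the previous row's exhaust."""
    rise = exhaust_rise_f(rack_watts, airflow_cfm)
    return [supply_f + i * rise for i in range(rows)]


def hot_cold_intake_temps(supply_f, rows):
    """Idealized hot/cold aisles: every row inhales supply air only."""
    return [supply_f] * rows


# Hypothetical figures: 5 kW racks, 800 CFM per rack, 65 deg F supply air.
for name, temps in [
    ("legacy", legacy_intake_temps(65.0, 3, 5000, 800)),
    ("hot/cold", hot_cold_intake_temps(65.0, 3)),
]:
    print(name, [round(t, 1) for t in temps])
```

Both functions are idealizations. Their purpose is only to show why intake temperatures climb from row to row in the legacy layout and stay flat when exhaust is kept out of the cold aisles.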

Implementing hot and cold aisle containment

In modern data center design, the hot/cold aisle system is considered the de facto standard. The arrangement usually places server cabinets face-to-face across each cold aisle. Cold air is fed up through perforated tiles in the floor, flows through the racks and exits from the back of those racks.

The easiest way to implement a hot and cold aisle containment system is to refer to the ANSI/TIA-942 standard. This globally accepted infrastructure standard specifies the minimum requirements for data centers, including the requirements for site location, architecture, topologies, design, physical security and cooling systems.

TIA-942 recommends the use of cooling equipment and a raised-floor system to improve airflow and reduce heat buildup in the data center. It also recommends implementing hot and cold aisles with perforated tiles in the raised floor of the cold aisles. The standard recommends a cold aisle width of 1.2 meters, or approximately 4 feet, to optimize cooling efficiency.

Best practices for implementing hot/cold aisle containment

The following are four best practices when implementing a hot and cold aisle layout:

- Raise the floor 1.5 feet so that air conditioning equipment can push air through that space.

- Deploy high-airflow rack grilles that deliver in the range of 600 cubic feet per minute (CFM).

- Place devices with side or top exhausts in their own part of the data center.

- Install automatic doors in the data center.
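To put the 600 CFM figure in context, the following sketch estimates how much heat a given airflow can carry away and how many 600 CFM grilles a rack would need. It relies on the standard sensible-heat relation for air (BTU/hr = 1.08 x CFM x temperature rise in degrees Fahrenheit, with 3.412 BTU/hr per watt). The 20-degree temperature rise and the 10 kW rack load are assumptions made for illustration, not values from any standard.

```python
import math

BTU_PER_HOUR_PER_WATT = 3.412   # unit conversion
SENSIBLE_HEAT_FACTOR = 1.08     # BTU/hr per CFM per deg F, standard air


def supportable_heat_watts(airflow_cfm, delta_t_f):
    """Heat load (W) that the airflow removes at the given temperature rise."""
    return airflow_cfm * SENSIBLE_HEAT_FACTOR * delta_t_f / BTU_PER_HOUR_PER_WATT


def required_cfm(heat_watts, delta_t_f):
    """Airflow (CFM) needed to remove the heat load at the given rise."""
    return heat_watts * BTU_PER_HOUR_PER_WATT / (SENSIBLE_HEAT_FACTOR * delta_t_f)


def grilles_needed(heat_watts, delta_t_f, cfm_per_grille=600):
    """Whole number of grilles needed to supply the required airflow."""
    return math.ceil(required_cfm(heat_watts, delta_t_f) / cfm_per_grille)


# Assumed values: 20 deg F rise across the equipment, 10 kW rack.
print(round(supportable_heat_watts(600, 20)))  # watts one 600 CFM grille supports
print(grilles_needed(10_000, 20))              # grilles for a 10 kW rack
```

The relation assumes air at roughly sea-level density; at high altitude or with very different supply temperatures, the 1.08 factor would need adjusting.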

In addition to those guidelines, capital expenditure must be carefully considered when planning the data center layout, as with any major change to a data center. For a data center with fewer servers, a cold aisle containment system might be a more suitable and cost-effective option. But for a data center with many heat-generating servers, a hot aisle containment system might be better since it provides better cooling efficiency than a cold aisle containment system. That said, hot aisle containment is usually the more costly option.

Also, data center expansions should be designed with hot and cold aisles as part of an overall green data center strategy. A green approach optimizes the data center's energy efficiency and also minimizes its environmental impact. Hot and cold aisles play a vital role in implementing this approach.

Migrating to hot/cold aisles

When building a new data center, hot/cold aisles can be part of the data center design from the start. When upgrading a legacy data center to a modern hot/cold aisle arrangement, the process is more complicated.

Designers should do a cost-benefit analysis to determine if the investment will generate sufficient savings and return on investment. The factors to consider include the following:

- Hiring data center architects and specialized environment and power engineers.

- Costs to move equipment racks and reconfigure aisles.

- Costs to reconfigure routing of electrical cables and power distribution units.

- Heating, ventilation and air conditioning system modifications or replacement.

- System downtime during relocation of equipment and racks.

- Labor costs to perform the work.
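A cost-benefit analysis along these lines can be sketched as a simple payback calculation. Every figure below is a hypothetical placeholder, and the model ignores financing costs, energy price changes and the time value of money, so it is a starting point rather than a full financial analysis.

```python
def simple_payback_years(upfront_costs, annual_savings):
    """Years needed for annual savings to cover the one-time costs."""
    if annual_savings <= 0:
        raise ValueError("annual_savings must be positive")
    return sum(upfront_costs.values()) / annual_savings


def simple_roi(upfront_costs, annual_savings, years):
    """Net gain over the period divided by the one-time costs."""
    total_cost = sum(upfront_costs.values())
    return (annual_savings * years - total_cost) / total_cost


# Hypothetical one-time costs, one entry per factor in the list above.
migration_costs = {
    "architects_and_engineers": 30_000,
    "rack_moves_and_aisle_changes": 40_000,
    "electrical_and_pdu_rerouting": 25_000,
    "hvac_modifications": 60_000,
    "downtime": 20_000,
    "labor": 25_000,
}
annual_cooling_savings = 50_000  # hypothetical

print(simple_payback_years(migration_costs, annual_cooling_savings))
print(simple_roi(migration_costs, annual_cooling_savings, years=10))
```

Keeping each cost factor as a separate entry makes it easy to see which items dominate the total and to rerun the estimate as quotes come in.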

Pros and cons of hot and cold aisle containment

Data centers with a hot/cold aisle system tend to be more energy-efficient than those without it. The system manages airflow and minimizes overheating, helping to lower cooling costs and protect equipment and components from heat-related failures. Costs can be further lowered by adopting a cold aisle containment system.

That said, the hot/cold aisle system is not perfect. Cold air and hot air can mix due to air leaks in the data center. Such leaks are usually due to design errors, such as gaps in the floor. Placing a physical barrier between the hot and cold aisles can minimize leaks, improve airflow and keep cooling costs under control.
