
Neo4j updates graph database to improve performance speed

The vendor's latest update includes new parallel analytical query and transactional query runtime capabilities as well as automated real-time data tracking via change data capture.

Graph database vendor Neo4j on Wednesday unveiled a set of new capabilities designed to help customers query and analyze data more efficiently to fuel real-time decisions.

The update includes faster query performance through the ability to run transactional and analytical processing simultaneously in the same database, as well as change data capture capabilities that automatically track data changes as they occur.

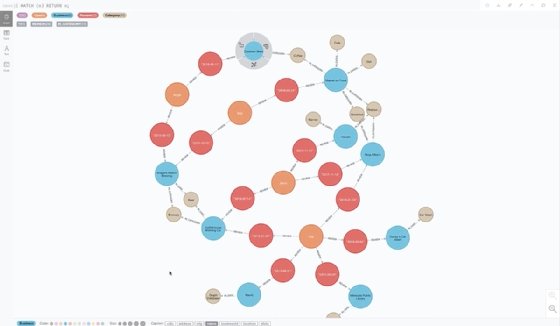

Based in San Mateo, Calif., Neo4j is a graph database vendor whose tools enable users to connect data points and discover relationships between them in ways that differ from traditional relational databases.

Relational databases enable data points to connect with just one other data point at a time while graph databases enable data points to simultaneously connect with multiple data points. As a result, graph databases can speed up the data discovery process, translating to faster decision-making.

Other graph database vendors include TigerGraph; tech giants including AWS and Oracle also offer graph databases.

New capabilities

In late August, Neo4j updated its database platform to include vector search.

As generative AI has gained momentum in the 11 months since OpenAI released ChatGPT, which marked a significant improvement in generative AI and large language model functionality, vector search has become more important.

Generative AI models require huge amounts of data to be accurate, including both structured and unstructured data. However, unstructured data -- such as text, audio files and images -- needs to be given structure before it can be used in conjunction with structured data. That structure comes in the form of a vector, which is a numerical representation of data that previously had no such representation.

Beyond making unstructured data usable by giving it structure, vectors enable users to find commonality within their data so that the right data can be discovered and used to train models.
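As a rough illustration of how vectors let software find commonality, the sketch below ranks a few items by cosine similarity to a query vector. The file names and three-dimensional vectors are hypothetical; real embeddings come from an embedding model and have far more dimensions, and this is not Neo4j's vector search API.

```python
import math


def cosine_similarity(a, b):
    """Return the cosine of the angle between two equal-length, non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hypothetical embeddings, invented for this example only.
EMBEDDINGS = {
    "invoice.pdf": [0.9, 0.1, 0.0],
    "receipt.png": [0.8, 0.2, 0.1],
    "podcast.mp3": [0.0, 0.2, 0.9],
}


def nearest(query, embeddings):
    """Return item names ordered from most to least similar to the query vector."""
    return sorted(
        embeddings,
        key=lambda name: cosine_similarity(query, embeddings[name]),
        reverse=True,
    )


if __name__ == "__main__":
    # Items whose vectors point in a similar direction to the query rank first.
    print(nearest([1.0, 0.0, 0.0], EMBEDDINGS))
```

Production systems typically use an approximate nearest-neighbor index rather than sorting every item, but the underlying comparison is the same idea.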

Two months after adding vector search capabilities, Neo4j's latest update focuses on the performance of the vendor's database.

Performance, meanwhile, is critical for graph database vendors, according to Merv Adrian, principal at IT Market Strategy.

Typically, graph databases are fast for certain specialized queries but don't provide the same query speed for a broad number of applications. Relational databases, however, don't suffer the same drop in performance depending on the use case.

"Graph databases are exceptionally useful -- and fast -- for queries that navigate complex networks of relationships that are clumsy and difficult to model and optimize with typical relational database architectures," Adrian said. "But optimizing for graph analytics is a special case that has come at the expense of doing so for the more typical analyses that dominate many business use cases."

As a result, graph database vendors need to continue to improve query performance to compete with relational databases, he continued.

"For a graph database to compete with relational databases that have matured and innovated to enhance performance -- sometimes for decades -- it must enhance its performance for [broad] use cases," Adrian said. "Otherwise, it will be perceived as a niche offering, not a general-purpose solution."

Specifically, Neo4j's update includes the following:

- Parallel runtimes that spread different processing types across multiple CPU cores and use a technique called morsel-based parallelism to optimize resource utilization, resulting in substantially faster analytical queries.

- New change data capture (CDC) capabilities that automate real-time data tracking and notify users of changes to data stored in their database.

- CDC integration with Neo4j's connectors to streaming data tools Apache Kafka and Confluent to stream changes so they can be viewed in data platforms and applications beyond Neo4j.

- Simplified creation of knowledge graphs using new embedding models that automatically discover relationships and connections between data.

- New pathfinding algorithms that automatically identify sequences and paths between nodes on a graph to make complex workflows more efficient.
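The morsel-based parallelism named in the list above can be pictured with a small sketch. The general idea is that work is cut into small fixed-size chunks, or morsels, and each worker pulls the next morsel from a shared queue as soon as it is free, so no core sits idle waiting on a large, unevenly sized partition. The function name and parameters below are hypothetical, and the code illustrates only the scheduling pattern, not Neo4j's runtime. Python threads also share one interpreter lock, so this version shows the structure rather than a real speedup.

```python
import queue
import threading


def morsel_parallel_sum(values, morsel_size=1000, workers=4):
    """Sum `values` by letting workers pull small chunks (morsels) from a shared queue."""
    morsels = queue.Queue()
    # Cut the input into fixed-size morsels before any worker starts.
    for start in range(0, len(values), morsel_size):
        morsels.put(values[start:start + morsel_size])

    partials = []
    lock = threading.Lock()

    def worker():
        local_total = 0
        while True:
            try:
                # Grab the next available morsel; stop when none remain.
                morsel = morsels.get_nowait()
            except queue.Empty:
                break
            local_total += sum(morsel)
        # Publish this worker's partial result once, at the end.
        with lock:
            partials.append(local_total)

    threads = [threading.Thread(target=worker) for _ in range(workers)]
    for thread in threads:
        thread.start()
    for thread in threads:
        thread.join()
    return sum(partials)


if __name__ == "__main__":
    print(morsel_parallel_sum(list(range(10_000)), morsel_size=256))
```

Because workers take morsels on demand, a worker that lands on cheap morsels simply processes more of them, which is how this style of scheduling keeps cores evenly loaded.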

The addition of parallel runtimes for different processing types is perhaps the most significant new capability, given that it broadens Neo4j's potential applications for graph databases, according to Adrian.

"Neo4j's enhanced parallelism removes a performance blocker for more general analytics and makes it more suitable for more use cases," he said. "It represents a maturation not unlike the wave of columnar processing that redefined analytical efficiency for relational databases a decade ago."

In addition, Adrian noted the importance of new support for Kafka.

"Support for Kafka helps Neo4j align with the dominant event processing technology already in use in enterprises today, letting it tap into existing and new use cases," he said.

Like Adrian, Sudhir Hasbe, Neo4j's chief product officer, called parallel runtimes for different processing types among the most beneficial of the new features for users. In addition, he spotlighted the change data capture capabilities.

Both, however, fit into a larger whole of better enabling developers to build, maintain and integrate applications so that Neo4j can become the primary database for organizations, he continued, noting the importance of connectors to Apache Kafka and Confluent.

"Being able to plug into any enterprise architecture is how I think [of the update] on a broad level," Hasbe said. "Now, you can use Neo4j for different use cases as well as integrate it into the enterprise environment ... to be the system of record."

The impetus for the new features came largely from conversations with customers, he added.

Hasbe noted that complex analytical and transactional queries historically had to be run separately, with the data needed for an analytical query extracted from a graph and loaded into a data warehouse or data lake before it could be queried.

Users, however, wanted to be able to run different query types within Neo4j, and wanted to be able to run them at the same time without losing performance, he said.

Similarly, customers came to Neo4j and said they wanted to be alerted to changes in their data so they could take immediate action, according to Hasbe.
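To make the change data capture idea concrete, here is a minimal in-memory sketch. Every write is appended to an ordered change log and pushed to subscribers, which is the general pattern behind being alerted to changes as they occur. The `TrackedStore` class, its methods and the event fields are all hypothetical and are not Neo4j's CDC interface.

```python
class TrackedStore:
    """Toy key-value store that records every change and notifies subscribers."""

    def __init__(self):
        self._data = {}
        self._log = []          # ordered list of change events
        self._subscribers = []  # callables invoked once per event

    def subscribe(self, callback):
        """Register a callable that receives each change event as a dict."""
        self._subscribers.append(callback)

    def _emit(self, op, key, before, after):
        event = {
            "seq": len(self._log) + 1,  # position in the change log
            "op": op,
            "key": key,
            "before": before,
            "after": after,
        }
        self._log.append(event)
        for callback in self._subscribers:
            callback(event)

    def set(self, key, value):
        op = "update" if key in self._data else "create"
        before = self._data.get(key)
        self._data[key] = value
        self._emit(op, key, before, value)

    def delete(self, key):
        before = self._data.pop(key)  # raises KeyError if the key is absent
        self._emit("delete", key, before, None)

    def changes_since(self, seq):
        """Return events with a sequence number greater than `seq`."""
        return [event for event in self._log if event["seq"] > seq]


if __name__ == "__main__":
    store = TrackedStore()
    store.subscribe(lambda event: print("change:", event))
    store.set("customer:1", {"tier": "basic"})
    store.set("customer:1", {"tier": "gold"})
    store.delete("customer:1")
```

A consumer that goes offline can call `changes_since` with the last sequence number it processed to catch up, which is similar in spirit to how a stream consumer resumes from a stored position.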

Future plans

With parallel workloads, new CDC capabilities and other new database features now available, Neo4j's roadmap will focus on three major areas, according to Hasbe.

One is better native integration with major cloud service providers. A second is improving the scalability of the Neo4j database platform to meet the needs of customers as the volume and complexity of their data continue to increase. The third is to give users more generative AI capabilities such as retrieval-augmented generation.

Improving support for AI, meanwhile, is critical not only for Neo4j but also for all data management vendors, according to Adrian.

Generative AI has been the dominant trend in analytics and data management over the better part of the last year, and many organizations are now eschewing public large language models (LLMs) such as ChatGPT and Google Bard to develop their own LLMs trained using proprietary data.

"Like all data management players, Neo4j must align with emerging expectations for AI support [such as] working with LLMs," Adrian said. "I expect to see many of these happen in 2024."

Eric Avidon is a senior news writer for TechTarget Editorial and a journalist with more than 25 years of experience. He covers analytics and data management.