
Is the AI memory shortage stifling enterprise adoption?

Enterprises bought up computer memory to fuel AI adoption. Now, amid a memory shortage, analysts share insights on what this means for enterprise AI adoption and what's next.

Rapid, enthusiastic enterprise AI adoption has had some unanticipated consequences. Among them is the global RAM shortage that has shaken the tech market.

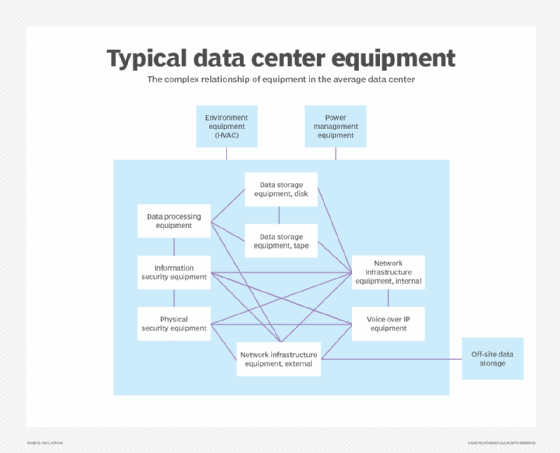

The AI data centers that power the tools we use every day require substantial memory to run their workloads. Hyperscalers and data center developers bought large amounts of memory to provide this capability. However, manufacturing constraints, coupled with competition from the consumer market, have led to a memory shortage that began in 2025.

To counter the shortage, distributors tried limiting consumer purchases of hardware, and some manufacturers pivoted from consumer sales to high-demand enterprise clients. As we enter 2026, the shortage persists. This raises the question: Will the global memory shortage affect enterprise AI adoption and innovation?

Understanding the DRAMa

RAM is short-term computer memory that stores data for devices so it's readily available. It's mainly sold as dynamic RAM, or DRAM, which provides memory for all types of computers, including smartphones, gaming consoles and laptops.

In 2025, AI expansion exploded as hyperscalers raced to build data centers capable of supporting large-scale AI models, like ChatGPT, Claude and Gemini, that enterprises and individuals use. According to McKinsey & Company's 2025 State of AI survey, 88% of respondents said their organizations regularly use AI in at least one business function. AI has become an integral part of some business functions and workloads, and organizations are intent on maintaining or scaling them.

However, AI data centers require more memory bandwidth than conventional DRAM alone can provide. So, manufacturers and vendors shifted production and distribution away from conventional DRAM and toward high-bandwidth memory (HBM). HBM transfers data faster than conventional DRAM while using less power per bit transferred. It stacks multiple DRAM dies on top of one another to achieve that bandwidth. With DRAM being bought up for AI workloads and demand for HBM exceeding production capacity, a memory shortage has ensued.

The memory shortage has affected consumer markets, prompting distributors worldwide to ration hardware, like SSDs that provide virtual RAM, and to raise prices. Enterprises are also facing high hardware and device prices, as well as rising prices from cloud vendors that provide AI tools and infrastructure and have to absorb or pass on the increasing cost of memory.

"Cloud and SaaS providers can absorb some of the supply-side shock, but they don't remove the cost," said Jeff Groom, director of engineering, AI, at Acre Security, which provides cloud and physical security technology. "In practice, those constraints often manifest elsewhere through pricing changes, usage caps, throttling or performance trade-offs. The shortage doesn't disappear; it just becomes less visible inside a service layer."

The scalability, efficiency and bandwidth of HBM keep AI systems running. With HBM devices in short supply, can enterprises continue to adopt and scale AI?

What the memory shortage means for enterprise adoption

With 88% of organizations reporting that they use AI in at least one business function, it's clear they are serious about implementing AI. Faced with the current memory shortage, enterprises still seeking to adopt and scale AI are turning to the cloud, said Alvin Nguyen, a senior analyst at Forrester Research.

"Bosses don't want to have to become subject matter experts if they don't have to. I think cloud kind of helps," he said. "Most companies just need to know they can run the AI models that meet regulations, provide an ROI and beat out the other solutions."

That has always been a major benefit of cloud services: replacing the complexity of purchasing and owning on-premises hardware with pay-as-you-go compute services delivered by experts who can help as needed. But if cloud prices are just going to increase in response to the memory shortage, as Groom predicts, is this an adequate solution to the problem? It could be if the only other option to retain a competitive advantage with AI is to own and run the hardware on-premises.

For enterprises that do depend on owning their own hardware, Nguyen suggested what many are already doing: stockpiling now while possible. However, he also has other tips: Keep what you have longer or start buying used.

"Why buy yesterday's technology for today's prices?" he asked. "If you can go used, go with used IT assets. That may drive new behaviors and cause changes in the secondary market. This doesn't just impact AI; it impacts everything with IT."

Despite the memory shortage, Groom doesn't believe enterprises should focus solely on hardware. Instead, he said, the shortage will test the resiliency of businesses' AI systems.

"When RAM becomes expensive or constrained, weak architecture gets exposed," he said. "Many organizations built AI strategies around large, general-purpose models rather than using smaller models tuned for specific tasks, which would be more efficient and more predictable."

Whether organizations shift to buying used hardware or migrate to cloud services, "a RAM shortage is unlikely to slow enterprise AI adoption on its own," Groom said.

What's next in the memory market?

Experts agree that the memory shortage isn't permanent. However, it could take two years to see relief and a market correction from the effects of the shortage. And a lot can happen in two years.

The memory shortage "will reward organizations with disciplined architecture and strong controls, and expose the ones that scaled faster than their guardrails," Groom said. An extended shortage like the one we're experiencing could open some organizations to AI security and governance concerns, he added.

"When RAM or GPU capacity becomes a bottleneck, teams are more likely to look for workarounds to keep projects moving," Groom said. "That can lead to merged workloads, weaker separation controls or shortcuts in deployment and access management if governance isn't strong."

According to Forrester's Nguyen, this shortage could create an extreme market correction if manufacturing can't keep pace with demand. However, hyperscalers are continuing to buy memory wherever they can, often at inflated prices, to maintain their competitive advantage.

"Having a clear advantage can sway who you go with," he said. "Hyperscalers are basically locked into this until they see it's time to quit, whether one of their competitors or multiple competitors quit funding this AI race or until customers declare a winner by dumping the other [hyperscalers]. It's a race to the finish."

However, instead of crossing a finish line, Nguyen said we could end up popping a bubble, or even bubbles. If AI fails to deliver further gains and innovation, or if other shortages or disruptions to AI development trigger a severe market correction, the proverbial sword of Damocles could fall, and the AI bubble could burst. While that might be painful for global markets, Nguyen said he doesn't think it would be the end.

"Saudi Arabia, U.K., U.S., Japan, China, all of these countries are invested," he said. "I have a feeling it's going to be too big to fail. Not that they don't come out without pain."

Everett Bishop is the assistant site editor for AI & Emerging Tech and the previous assistant site editor for SearchCloudComputing. He graduated from the University of New Haven in 2019.