Insider threat vs. insider risk: What's the difference?

Identifying, managing and mitigating insider threats is far different from protecting against insider risks. Read up on the difference and types of internal risks here.

Insider threat, a long-used term in the infosec industry, is one Joe Payne is ready to retire. Its replacement? Insider risk.

Payne's reasoning is that much "malicious" insider activity -- such as deleting, copying or uploading files to collaboration apps or cloud storage platforms -- is not a threat, per se, but a consequence of the collaboration culture spreading in today's enterprises.

But that doesn't mean risky behavior can be ignored.

"We're careful not to call them threats because they might not actually be threats at all; they might just be an indicator that something needs following up," said Payne, president and CEO of Code42 and co-author of Inside Jobs.

Insider risk, Payne noted, may be even more challenging for enterprises to solve than the traditional insider threat.

In this excerpt from Chapter 3 of Inside Jobs, published by Skyhorse Publishing, Payne and co-authors Jadee Hanson and Mark Wojtasiak explain the various insider risk profiles common in collaboration culture and how even well-meaning employees may present security indicators that warrant further investigation.

Also, check out a Q&A with Payne to learn more about insider risk indicators and when an insider risk becomes an insider threat.

Insider Threat

The very word conjures up images of negativity and malice. Threat tends to center on a specific person or entity and insider threat solutions typically take a user-centric approach. In the security world, threat is often personified by faceless actors in hoodies who seek to deliberately harm an organization. Collectively, there's a reluctance to believe that such sinister characters exist inside our organizations. If they do, they exist at a very minimal level. The reality, however, is that when it comes to securing our collaboration culture, it's not just about malicious users.

Insider Risk

While Insider Risk might sound like a synonym for insider threat, it's not; and there is an important distinction to be made. In fact, we see Insider Risk as a different ball game. When it comes to managing or mitigating Insider Risk, the focus shifts from centering solely on the user to taking a broader, more holistic, and data-centric approach.

Insider threat, as we defined it in Chapter 1, is an event that occurs when an employee, contractor, or freelancer moves data outside your organization's authorized collaboration tools, company network, and/or file-sharing platforms. The focus is on the user -- the individual, the person, the employee.

We define Insider Risk, on the other hand, as data exposure events -- loss, leak, theft, sabotage, espionage -- that jeopardize the well-being of a company and its employees, customers, or partners. Unlike insider threat, which focuses on specific users, Insider Risk, first and foremost, focuses on data.

Insider Risk cannot be viewed in absolute terms. We cannot assume that an employee uploading a file to a personal Google Drive is doing so maliciously -- a risk but not a threat. At the same time, we cannot naively assume we don't have employees who intend to harm the organization by leaking financials through a personal email -- a risk and a threat. The point is that risks can come in shades of gray. In fact, as employees who work for a company, we all personally represent some level of Insider Risk.

Insider Risk is a game of odds, and the stakes are high. As we learned in the previous chapter, the collaboration culture is here to stay. Technology has made it easy for employees to work anytime, from anywhere -- a reality that opens up companies to even more risk.

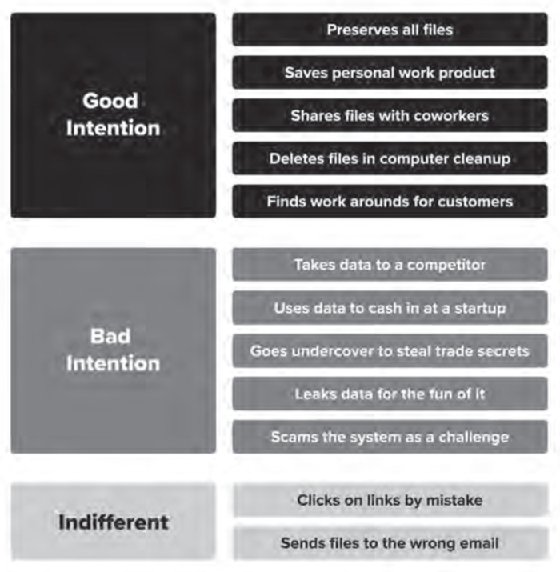

In this chapter, we reveal the many faces of an insider and the risk they pose to the organization. When assessing Insider Risk, intent -- good, bad, or indifferent -- doesn't really factor into the equation.

The Good

As organizations become more rooted in collaboration, Insider Risks are inevitable. Why? Because empowering employees to do their best work means giving them access to the tools and data they need to get their jobs done. As we learned in the previous chapter, most employees are well-intentioned, but that doesn't mean well-intentioned behaviors do not cause risk. Employees with good intentions generally assume one of the following Insider Risk personae.

The Saver

This employee typically thinks like this: "I can't stand the thought of losing files, so I save all my files to my personal cloud account. Sure, we have a company policy against this, but the files are too valuable. I just want to make sure they are safe."

Savers' intentions are good. Since when is protecting work product from loss not positive intent? Savers are the most risk-averse people in the organization. They are extremely organized, with elaborate systems for cataloging the files they've accumulated over time. A telltale sign of a Saver is how much storage they use. The challenge with Savers is they don't delete anything, and they tend to automate file syncs to personal cloud services and external hard drives. Herein lies the Insider Risk.

For example, Tom works in product marketing and never lets a good idea go to waste. Tom uses everything from Microsoft PowerPoint and Evernote to Gmail and Slack to capture, organize, and share his ideas. Tom is also an avid Mac user and has his company-issued MacBook set up to sync all of his files to iCloud. That way, he can pull them down and work on them at home on his iMac or iPad Pro. Now, iCloud is not a corporate-sanctioned cloud service, but Tom doesn't care, because as long as he delivers quality work on time, the company benefits. Little does the organization know that since Tom is in product marketing, he has the supersecret product road map on his laptop, and now it's on the loose at home on his iMac and on his personal iCloud. Imagine if Tom worked in Finance or HR. The Saver would have employee records, customer records, and prerelease earnings reports running wild, and you wouldn't even know it. This is just one example of an insider with good intentions.

The Planner

This employee often rationalizes actions like this: "I was just pulling together a portfolio of my best work because I am proud of it. Plus, you never know when work samples may come in handy landing my next big role." Planners should not be confused with Savers. Savers tend to hold on to everything they accumulate. Planners, on the other hand, tend to focus only on the work they've created themselves. If Tom were a Planner, his storage use would be much lower, but the contents of his iCloud could be even more valuable to the organization. He may not have saved the product road map, but he might have the go-to-market strategy he built for the product, and who's to say that file with the go-to-market strategy doesn't contain sensitive information?

The Sharer

A Sharer tends to think like this: "I wanted to provide a colleague my work in progress to ensure the project keeps moving forward." Sharers are often trying to get more coworkers involved in a project. The risk with Sharers is they may send information to people who should not have access to it. What's more, how they share that information could pose significant Insider Risk. What if their preferred method of sharing is a thumb drive, personal email, or some personal productivity web application like Basecamp or Evernote? We ran into this exact situation with an organization. A group of employees loved using their personal Evernote accounts to collaborate. They shared everything from analyst research reports -- which incidentally were under NDA so sharing them was a breach of contract -- to customer notes, and product strategy and planning docs. All of this living information was being shared outside the organization's sanctioned cloud services and flying under the radar of IT and security.

The Helper

A Helper's actions are influenced by these kinds of thoughts: "I was just deleting files off my laptop to help clean it up for the next user." Helpers are typically preparing to leave the organization. Helpers are sometimes like Marianne -- well-meaning employees, yet unaware of the risk they introduce to the organization. Let's consider a business case. This employee -- let's call her Helen -- is leaving her organization on good terms for a step up in her career at another company. Though we are sad to see Helen go, we are excited for her growth and opportunity. Helen was the person everyone sought out for help. Always positive, always getting it done and doing it right. Helen's replacement was starting the next day. To help make the transition as smooth as possible, Helen synced everything from her hard drive to her corporate Google Drive account. We are talking thousands of files uploaded and guess what -- all of them shared as open file links. Now Helen worked in R&D, and little did the organization realize, Helen had source code on her laptop. What if the source code is some groundbreaking tech? What if it's the difference between time to market and getting beat to market? We don't know, but what we do know is it's now out on Google for the world to find and see.

The Adviser

Finally, there is the Adviser, whose actions are guided by this type of motive: "I thought my client would benefit from knowing how our other customers structure their contracts." We see Advisers a lot, ironically in services or sales roles. Advisers only want the best for their customers. These well-intentioned employees are some of the best performers at the organization, never giving reason for pause or suspicion and always having a customer-first attitude. Being customer first, Advisers want to make things as easy as possible for their customers, and in this case, what may be best for the client is rooted in risk. Consider, for instance, Ben. Ben is in advisory sales for a consulting firm. Ben has dozens of clients in the biotech industry. Some of his clients use Box to collaborate with vendors and partners. Some use Google Drive. Some use Dropbox. Ben's default collaboration tool is the company's sanctioned Microsoft OneDrive, but Ben cannot allow external access to files living on the corporate cloud, so what does Ben do? He uploads files to customers via their collaboration tool of choice. Great for the client, but high risk for Ben's organization. Do the files contain personally identifiable information? Are the files sensitive in nature? Most likely they are, and now they live outside the secure confines of the corporate Microsoft OneDrive account -- a situation that is clearly fraught with risk.

The Bad

The malicious insiders -- the bad -- are the people security teams worry about the most. They come in all shapes and sizes. Their actions create splashy news headlines. Like well-intentioned employees, malicious-minded employees fit into several categories.

The Sellout

This employee just received a very lucrative offer to join a competitor -- and not necessarily for their talent, but for their data access. We typically see Sellouts in sales or product roles where access to intellectual property, like customer lists and source code, makes them ripe for poaching. Remember the SunPower story from Chapter 1? SunPower sued more than twenty employees and competitor SunEdison for trade secret misappropriation, computer fraud, and breach of contract after 14,000 files containing market research, sales road maps, proprietary dealer information, and distribution channel strategies were removed and given to a competitor. SunPower's lawsuit alleged that its employees were "solicited and encouraged" to move to competitor SunEdison.

The Startup

This employee is looking for a payday at a smaller, sleeker startup on the verge of making it big. Startups are always on the lookout for IP they can take elsewhere to cash in for stock options or a lucrative executive position, or both. Remember the Jawbone story? Jawbone employees left for Fitbit and took really important trade secrets with them. Although Jawbone attempted to fight back by filing lawsuits alleging trade secret theft, in the end Fitbit won. Jawbone no longer exists. In both the Jawbone case and the SunPower case, the companies turned to the courts. But relying on the courts is an expensive and slow process. By the time a case gets heard, it's probably too late -- the damage is done.

The Mole

This worker is an insider for hire -- a practice often referred to as corporate espionage. We see Moles most often in technology and telecom, as well as sectors with lucrative research and development programs, like government, higher education, biotech, and big pharma. A recent example of Mole activity is the US government's case against Huawei. According to an article on Quartz, "The U.S. describes alleged efforts by Huawei to steal technical specifications related to Tappy, a robot that Bellevue, Washington-based T-Mobile USA developed for testing phones. The indictment alleges that Huawei's China office directed Huawei engineers working with T-Mobile in the United States to fulfill a contract to supply phones to steal photos, measure the robot, and even steal a part." A US investigation uncovered a bonus program offered to Huawei employees to "steal competitors' secrets." They even had a place where employees could post the information on an internal website. Talk about bold.

Sometimes, malicious insiders come in a combination of forms. The Startup/Sellout/Mole, for example, is a trifecta of insider personae. One of the most notorious examples is the Apple employee who downloaded a treasure trove of Apple autonomous car IP and transferred it to his wife's computer. This all went down in 2018 when he resigned from Apple to move to China to work for XMotors, a Chinese startup that also focuses on autonomous vehicle technology. According to reports, XMotors denied any IP misappropriation and fired the former Apple employee. There are also other types of bad-intentioned insiders who don't make the headlines.

The Prankster

This insider just likes to cause trouble for the fun of it.

The Gamer

This is an employee who is trying to "game the system" to prove that he can.

Perhaps the Prankster and the Gamer do not mean to do harm. They're likely just having some fun at the organization's expense. Regardless, the point is that intent doesn't matter if they are creating Insider Risk.

The Indifferent

Indifferent or careless insiders make up a majority of Insider Risks. Their actions are commonly referred to as human error, yet the consequences -- the stakes -- remain very high.

The Careless Insider

These workers click on links without thinking, accidentally send documents to the wrong email address, or just don't realize their actions can be harmful to the company, employees, customers, or partners. Their actions are driven not by intent, but by ignorance, accident, or error. And no one is immune to making mistakes. According to Code42's 2019 Data Exposure Report, 78 percent of CSOs and 65 percent of CEOs admit to clicking on links they shouldn't have. Whoops!

Odds are every organization has careless insiders, and no matter how much security-awareness training you do, you're playing the odds when it comes to securing your data. The stakes? The brand reputation of your company.

Remember Marianne from Chapter 1? She didn't seem like someone who would put our data at risk. She was in HR, had been with the company for more than ten years, and was generally loved by everyone. Before her last day, she copied a trove of sensitive data from her laptop hard drive to an external hard drive -- everything from payroll data to the Social Security numbers of every employee and board member. If we hadn't detected the incident in time, it would have been a major breach -- an embarrassing moment for a security company. When we confronted Marianne, her response was, "I just was trying to copy my contacts."

Every company has a Marianne. Boeing, for example, had an employee who emailed a company spreadsheet to his spouse, asking for help. Little did he know the spreadsheet contained hidden columns with personal information on Boeing coworkers. According to a Puget Sound Business Journal article, "The electronic spreadsheet contained 36,000 workers' first and last names, place of birth, employee ID data, and accounting department codes in visible columns. Hidden columns in the spreadsheet also included each employee's precise date of birth and Social Security number." Similar to the Marianne story, this could have been a major breach for Boeing, potentially ending in millions of dollars in fines. To make sure the information never went beyond the spouse's personal email, Boeing had to conduct a forensic examination of the employee's computer and the device belonging to his spouse and, as in the Marianne story, confirm all copies of the file were deleted. Fortunately, the breach was detected and contained.

Another careless insider scenario that made news headlines involves a Prince Edward Island dental practice employee who wanted to prove that they were actually at work. No kidding! Now, we don't know the personal reasons underlying the need for such proof, but we do know that the employee in question emailed a family member a file containing personally identifiable information on over one thousand patients. After investigating, Prince Edward Island's Privacy Commissioner found that the disclosures "were not for any illegal or nefarious purposes, e.g., to facilitate the commission of crimes such as theft, fraud, or harm to property, or to embarrass or harass the clients."

Regardless, the employee's actions resulted in costs -- investigative costs, free credit monitoring for patients impacted, public embarrassment, and the potential for future disclosure. Even though written confirmation was provided that "every email and attachment had been 'securely destroyed' and none of the emails had been forwarded to another address," the risk remained. As the privacy commissioner points out, "The fact remains that the recipient viewed personal health information, and that the personal health information cannot be unseen by the recipient." And the careless insider's risk lives on.