computational storage

What is computational storage?

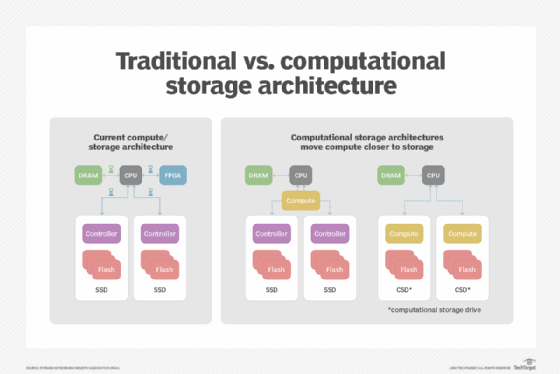

Computational storage is an IT architecture in which data is processed at the storage device level to reduce the amount of data that must move between the storage plane and the compute plane.

Because less data has to move, computational storage facilitates real-time data analysis and improves performance by reducing input/output (I/O) bottlenecks.

How computational storage works

In many respects, a computational storage device can look just like any other solid-state drive (SSD). Some products contain a large number of NAND flash memory devices that store the data, a controller that manages writing data to the flash devices, and RAM that serves as a read/write buffer.

What is unique about computational storage devices is the inclusion of one or more multicore processors. These processors can perform many functions, such as indexing data as it enters the storage device, searching the contents for specific entries, and providing support for sophisticated artificial intelligence programs.
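The following is a minimal, hypothetical Python model of two of the functions named above: indexing data as it is written and searching the contents in place. It is not any vendor's or SNIA's interface. The `ComputationalStorageDrive` class, its `write`, `search`, and `read_all` methods, and the synthetic sensor log are all invented for illustration. The model counts how many bytes would cross from the device to the host when the device searches in place, compared with exporting everything and filtering in host memory.

```python
from dataclasses import dataclass, field


@dataclass
class ComputationalStorageDrive:
    """Hypothetical toy model of a drive with an on-board processor."""
    records: list = field(default_factory=list)
    index: dict = field(default_factory=dict)  # token -> list of record offsets
    bytes_sent_to_host: int = 0

    def write(self, record: bytes) -> None:
        # Index on ingest: note which whitespace-separated tokens each record contains.
        offset = len(self.records)
        self.records.append(record)
        for token in set(record.split()):
            self.index.setdefault(token, []).append(offset)

    def search(self, token: bytes) -> list:
        # Runs "on the device": consult the index and return only matching records.
        matches = [self.records[i] for i in self.index.get(token, [])]
        self.bytes_sent_to_host += sum(len(r) for r in matches)
        return matches

    def read_all(self) -> list:
        # Conventional path: every stored record is copied to host memory.
        self.bytes_sent_to_host += sum(len(r) for r in self.records)
        return list(self.records)


def load_sample(drive: ComputationalStorageDrive, n: int = 1000) -> None:
    # Synthetic (hypothetical) sensor log: one record in every 100 reports an error.
    for i in range(n):
        status = b"ERROR" if i % 100 == 0 else b"OK"
        drive.write(b"sensor=%d status=%s" % (i, status))


# In-storage search: only the matching records leave the device.
csd = ComputationalStorageDrive()
load_sample(csd)
in_storage_hits = csd.search(b"status=ERROR")

# Host-side search: export everything, then filter in host memory.
plain = ComputationalStorageDrive()
load_sample(plain)
host_hits = [r for r in plain.read_all() if b"status=ERROR" in r.split()]

print(len(in_storage_hits), csd.bytes_sent_to_host)
print(len(host_hits), plain.bytes_sent_to_host)
```

Both paths find the same records, but the in-storage search transfers only the matches. A real device would also need a host interface for submitting such work, which this toy model omits.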

With the growing need to store and analyze data in real time, the computational storage market is expected to grow. Organizations can implement computational storage by using device types defined by the Storage Networking Industry Association (SNIA) Computational Storage Technical Work Group, including the following:

- Computational storage drive: A device that provides compute services in the storage system and supports persistent data storage, such as NAND flash or other non-volatile memory.

- Computational storage processor: A device that provides compute services in the storage system but does not itself provide persistent data storage.

Providing compute services at the device level was not truly practical until SSDs were widely adopted. Traditional storage devices, such as hard disk drives (HDDs) and tape drives, cannot process data locally the way computational storage devices can.

Why computational storage is important

There has long been a mismatch between storage capacity and the amount of memory that the central processing unit (CPU) can use to analyze data. Because of this mismatch, stored data must be moved into memory in phases for analysis, which prevents that analysis from happening in real time. With computational storage, the host platform can obtain application performance and results from the storage system without exporting all of the data from the storage devices into memory for analysis.

When compute services are provided at the storage device level, raw data can be analyzed in place, and the data moved to memory shrinks to a manageable, easy-to-process subset.
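To make the arithmetic concrete, here is a back-of-the-envelope sketch under a simplified model: host-side analysis must pass every stored byte through memory in ceil(capacity / memory) phases, while in-storage filtering returns only a fraction of the raw data (`selectivity`). The function name `transfer_profile` and the 500 TB, 2 TB, and 0.1% figures are hypothetical.

```python
import math


def transfer_profile(capacity_tb: float, host_memory_tb: float, selectivity: float):
    """Toy estimate of data movement: host-side vs. in-storage analysis.

    capacity_tb    -- data held on the storage devices (hypothetical figure)
    host_memory_tb -- memory the CPU can devote to the analysis at one time
    selectivity    -- fraction of the raw data the analysis actually needs
    """
    # Host-side: all data crosses to memory, one memory-sized phase at a time.
    host_phases = math.ceil(capacity_tb / host_memory_tb)
    host_moved_tb = capacity_tb
    # In-storage: devices filter in place and send back only the subset.
    subset_tb = capacity_tb * selectivity
    in_storage_phases = math.ceil(subset_tb / host_memory_tb)
    return host_phases, host_moved_tb, in_storage_phases, subset_tb


result = transfer_profile(capacity_tb=500, host_memory_tb=2, selectivity=0.001)
print(result)
```

Under these assumptions, host-side analysis needs 250 phases to move all 500 TB, while the filtered 0.5 TB subset fits in memory in a single phase.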

Computational storage is important in modern architectures due to the continued growth of the raw data being collected from sensors and actuators in the internet of things (IoT).

The ability to scale storage without worrying about latency is an important consideration for hyperscale data centers that manage the data stored by companies using public cloud services. Other uses for computational storage include edge computing and industrial IoT environments, where excessive latency can cause an accident and where conserving space and power is even more important than in the data center.