kirill_makarov - stock.adobe.com

5 deep learning model training tips

Deep learning model training requires not only the right amount of data, but the right type of data. Enterprises must be inventive and careful when training their models.

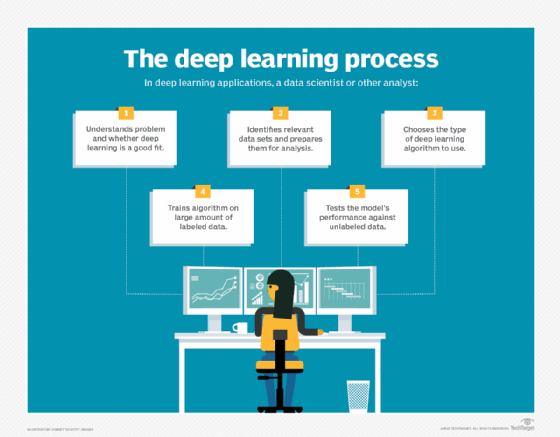

When used well, deep learning technology can boost enterprises looking to collect, analyze and interpret big data. Successful use cases for deep learning vary from natural language processing to medical diagnosis automation, but all require big data analytics.

For a deep learning investment to be deployed effectively, enterprises need to first accurately train the models despite bias and data challenges. Through proper data gathering, new data approaches, reinforcement learning, strong workflows and federated deep learning, companies can properly tackle the challenges of deep learning model training. These five tips can help guide an enterprise into training deep models the right way.

Gathering more data is the smart approach

A deep learning model is only as good as the data it is trained on. When it comes to training, more data is generally better than less. Some deep learning use cases can require millions of records during training in order to be effective. TechTarget editor Ed Burns speaks with Patrick Lucey, now chief scientist at Stats Perform, about how much data is the right amount for deep learning projects.

When using limited data sets, focus on the approach

For companies that struggle to gather large data sets, there are still pathways to deep learning model training success. Companies can take a grow-more approach and use generative adversarial networks to generate more data to train a model on, or take a know-more approach and use transfer learning. George Lawton discusses the advantages of both approaches, as well as the importance of labelled data when it comes to deep learning and machine learning.

Decrease strain through federated deep learning

Training deep learning models requires significant compute resources, which can create a drag on an enterprise's infrastructure. A recent development known as federated deep learning was created to combat this drag. Federated deep learning spreads the compute work across numerous individual devices in order to ease the burden. George Lawton writes on specific use cases for federated deep learning and industries that have adopted the approach.
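To make the idea of spreading training across devices concrete, here is a minimal sketch of one common scheme, federated averaging, written in plain Python. It is an illustration under stated assumptions rather than any vendor's implementation: the one-parameter linear model, the synthetic data and the names `local_update`, `federated_average`, `train_federated` and `make_clients` are all hypothetical.

```python
import random

def local_update(w, data, lr=0.1, epochs=5):
    """Run a few gradient descent steps on one device's private data.

    The model is a single weight w for y = w * x (an assumption made
    to keep the sketch small).
    """
    for _ in range(epochs):
        grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        w -= lr * grad
    return w

def federated_average(client_weights, client_sizes):
    """Combine device weights, weighting each by its number of records."""
    total = sum(client_sizes)
    return sum(w * n for w, n in zip(client_weights, client_sizes)) / total

def train_federated(clients, rounds=50):
    """Each round: devices train locally, then only weights are averaged."""
    w = 0.0
    for _ in range(rounds):
        local = [local_update(w, data) for data in clients]
        w = federated_average(local, [len(data) for data in clients])
    return w

def make_clients(num_clients=4, true_w=3.0, seed=0):
    """Build synthetic per-device data sets (hypothetical stand-ins)."""
    rng = random.Random(seed)
    clients = []
    for _ in range(num_clients):
        n = rng.randint(20, 40)
        data = []
        for _ in range(n):
            x = rng.uniform(-1, 1)
            data.append((x, true_w * x + rng.gauss(0, 0.05)))
        clients.append(data)
    return clients

if __name__ == "__main__":
    print(train_federated(make_clients()))
```

In this sketch the raw records never leave a device. Only the locally trained weight is sent back and averaged, which is what shifts the compute load off the central infrastructure.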

Consider reinforcement learning

Reinforcement learning is a training method based on rewarding desired behaviors and punishing undesired ones. Rather than learning from labeled examples, the model learns by trial and error from the feedback its actions earn, which lets it teach itself even when data is limited. Maria Korolov discusses the role reinforcement learning plays in deep learning model training, as well as practical applications of the technology and other advancements.
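The reward-and-punish loop can be sketched with a toy example. The code below assumes a simple multi-armed bandit with an epsilon-greedy agent, which is one of the most basic reinforcement learning setups and far smaller than a deep model. The function name `train_bandit`, the reward values of +1 and -1 and the success probabilities are hypothetical choices made for illustration.

```python
import random

def train_bandit(success_probs, steps=5000, epsilon=0.1, seed=0):
    """Learn which action pays off using only rewards and penalties.

    success_probs[i] is the (hidden) chance that action i succeeds.
    Returns the agent's learned value estimate for each action.
    """
    rng = random.Random(seed)
    values = [0.0] * len(success_probs)   # estimated value per action
    counts = [0] * len(success_probs)     # times each action was tried
    for _ in range(steps):
        if rng.random() < epsilon:
            # Explore: occasionally try a random action.
            action = rng.randrange(len(success_probs))
        else:
            # Exploit: pick the action with the best estimate so far.
            action = max(range(len(values)), key=values.__getitem__)
        # Reward desired outcomes (+1), punish undesired ones (-1).
        reward = 1.0 if rng.random() < success_probs[action] else -1.0
        counts[action] += 1
        # Incremental average of the rewards seen for this action.
        values[action] += (reward - values[action]) / counts[action]
    return values

if __name__ == "__main__":
    estimates = train_bandit([0.2, 0.5, 0.8])
    best = max(range(len(estimates)), key=estimates.__getitem__)
    print("learned values:", estimates, "preferred action:", best)
```

No labeled training set is supplied here. The agent generates its own experience by acting, and its preferences shift toward whichever action earns the most reward.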

Continue to retrain the model and build the right workflow

To prevent model decay and loss of accuracy, companies must continuously monitor their models and adjust or retrain them as needed. This is true for both machine learning and deep learning models. Jack Vaughan speaks with James Kobielus, a research director, tech consultant and analyst, about the importance of retraining and how creating a DevOps workflow can ease this burden.
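One way such a check could be automated inside a workflow is a rolling accuracy monitor that flags a model for retraining when recent performance falls below its baseline. The sketch below is an assumption about how that might look, not a description of any specific DevOps tool. The class name `AccuracyMonitor`, the window size and the tolerance threshold are hypothetical.

```python
from collections import deque

class AccuracyMonitor:
    """Track recent prediction outcomes and flag accuracy decay."""

    def __init__(self, baseline_accuracy, window=100, tolerance=0.05):
        self.baseline = baseline_accuracy   # accuracy measured at deployment
        self.tolerance = tolerance          # allowed drop before retraining
        self.outcomes = deque(maxlen=window)  # keeps only the latest results

    def record(self, prediction, actual):
        """Store whether one production prediction turned out correct."""
        self.outcomes.append(prediction == actual)

    def rolling_accuracy(self):
        """Accuracy over the current window, or None if nothing recorded."""
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def needs_retraining(self):
        """True once a full window shows accuracy below the allowed floor."""
        if len(self.outcomes) < self.outcomes.maxlen:
            return False  # not enough evidence yet
        return self.rolling_accuracy() < self.baseline - self.tolerance

if __name__ == "__main__":
    monitor = AccuracyMonitor(baseline_accuracy=0.9, window=10)
    for predicted, actual in [(1, 1)] * 7 + [(1, 0)] * 3:
        monitor.record(predicted, actual)
    print(monitor.rolling_accuracy(), monitor.needs_retraining())
```

A scheduled job could call `needs_retraining()` and kick off a retraining pipeline when it returns true, so the check does not depend on someone remembering to run it.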